
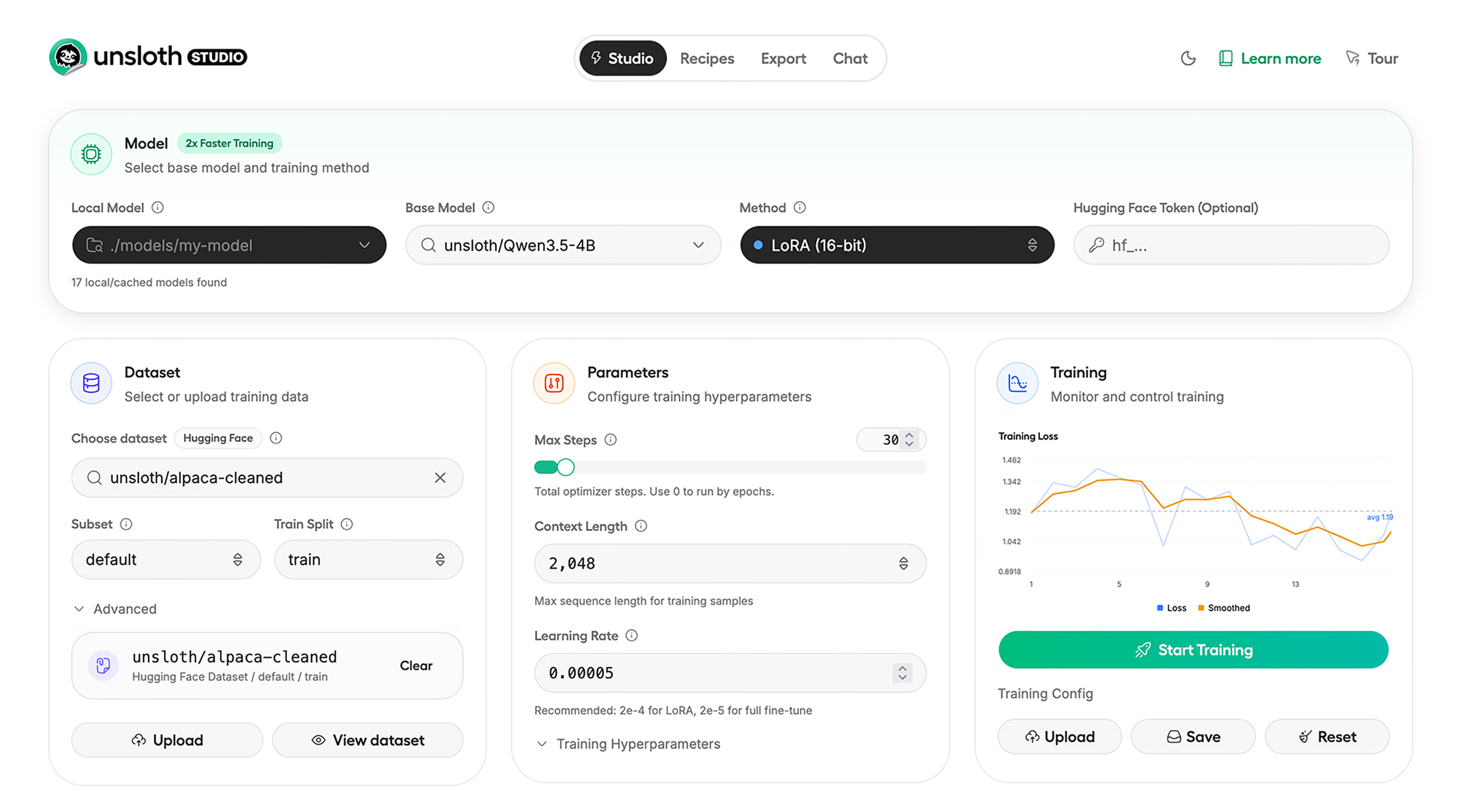

Unsloth Studio (Beta) lets you run and train text, [audio](https://unsloth.ai/docs/basics/text-to-speech-tts-fine-tuning), [embedding](https://unsloth.ai/docs/new/embedding-finetuning) and [vision](https://unsloth.ai/docs/basics/vision-fine-tuning) models on Windows, Linux and macOS.

## ⭐ Features

Unsloth provides several key features for both inference and training:

### Inference

* **Search, download and run models**, including GGUF, LoRA adapters and safetensors.

* **Export models**: [Save or export](https://unsloth.ai/docs/new/studio/export) models to GGUF, 16-bit safetensors and other formats.

* **Tool calling**: Support for [self-healing tool calling](https://unsloth.ai/docs/new/studio/chat#auto-healing-tool-calling) and web search.

* **[Code execution](https://unsloth.ai/docs/new/studio/chat#code-execution)**: Lets LLMs test code in Claude artifacts and sandboxed environments.

* [Auto-tune inference parameters](https://unsloth.ai/docs/new/studio/chat#auto-parameter-tuning) and customize chat templates.

* We work directly with the teams behind [gpt-oss](https://docs.unsloth.ai/new/gpt-oss-how-to-run-and-fine-tune#unsloth-fixes-for-gpt-oss), [Qwen3](https://www.reddit.com/r/LocalLLaMA/comments/1kaodxu/qwen3_unsloth_dynamic_ggufs_128k_context_bug_fixes/), [Llama 4](https://github.com/ggml-org/llama.cpp/pull/12889), [Mistral](models/tutorials/devstral-how-to-run-and-fine-tune.md), [Gemma 1-3](https://news.ycombinator.com/item?id=39671146) and [Phi-4](https://unsloth.ai/blog/phi4), and have fixed bugs in these models that improve their accuracy.

* Chat with uploaded images, audio, PDFs, code, DOCX files and more.

### Training

* Train and run RL on **500+ models** up to **2x faster** with up to **70% less VRAM** and no accuracy loss.

* Custom Triton and mathematical **kernels**. See some of our collaborations with [PyTorch](https://unsloth.ai/docs/get-started/reinforcement-learning-rl-guide/fp8-reinforcement-learning) and [Hugging Face](https://unsloth.ai/docs/new/faster-moe).

* **Data Recipes**: [Auto-create datasets](https://unsloth.ai/docs/new/studio/data-recipe) from **PDF, CSV, DOCX** etc. Edit data in a visual-node workflow.

* **[Reinforcement Learning](https://unsloth.ai/docs/get-started/reinforcement-learning-rl-guide)** (RL): The most efficient RL library, using **80% less VRAM** for GRPO, with support for [FP8](https://unsloth.ai/docs/get-started/reinforcement-learning-rl-guide/fp8-reinforcement-learning) RL and more.

* Supports full fine-tuning, RL, pretraining, and 4-bit, 16-bit and FP8 training.

* **Observability**: Monitor training live, track loss and GPU usage and customize graphs.

* [Multi-GPU](https://unsloth.ai/docs/basics/multi-gpu-training-with-unsloth) training is supported, with major improvements coming soon.

## ⚡ Quickstart

Unsloth can be used in two ways: through **[Unsloth Studio](https://unsloth.ai/docs/new/studio/)**, the web UI, or through **Unsloth Core**, the code-based version. Each has different requirements.

### Unsloth Studio (web UI)

Unsloth Studio (Beta) works on **Windows, Linux, WSL** and **macOS**.

* **CPU:** Currently supported for Chat and Data Recipes.

* **NVIDIA:** Training works on RTX 30/40/50-series, Blackwell, DGX Spark, DGX Station and more.

* **macOS:** Currently supports Chat and Data Recipes. **MLX training** is coming very soon.

* **AMD:** Chat and Data Recipes work. Train with [Unsloth Core](#unsloth-core-code-based) for now; Studio support is coming soon.

* **Coming soon:** Training support for Apple MLX, AMD, and Intel.

* **Multi-GPU:** Available now, with a major upgrade on the way.

#### macOS, Linux, WSL:

```bash
curl -fsSL https://unsloth.ai/install.sh | sh
```

If you don't have `curl`, use `wget -qO- https://unsloth.ai/install.sh | sh` instead. After setup, launch Studio with:

```bash
source unsloth_studio/bin/activate
unsloth studio -H 0.0.0.0 -p 8888
```
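If you would rather not pick between `curl` and `wget` by hand, the minimal sketch below uses whichever one is installed. `fetch_to_stdout` is a hypothetical helper name, not an Unsloth command; the `curl` and `wget` flags are the standard ones, and the installer URL is the one from the one-liner above.

```bash
# Hypothetical helper (not part of Unsloth): write the contents of a URL to
# stdout with curl if it is installed, falling back to wget otherwise.
fetch_to_stdout() {
  if command -v curl >/dev/null 2>&1; then
    # -f: fail on HTTP errors, -s: silent, -S: still show errors, -L: follow redirects
    curl -fsSL "$1"
  elif command -v wget >/dev/null 2>&1; then
    # -q: quiet, -O-: write the download to stdout
    wget -qO- "$1"
  else
    echo "error: neither curl nor wget is installed" >&2
    return 1
  fi
}

# Download the installer so it can be reviewed, then run it:
#   fetch_to_stdout https://unsloth.ai/install.sh > install.sh
#   sh install.sh
# Or pipe it straight to sh, as in the one-liner above:
#   fetch_to_stdout https://unsloth.ai/install.sh | sh
```

Saving the script to a file first is optional; it simply gives you a chance to read what the installer does before running it.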

#### Windows:

```powershell
irm https://unsloth.ai/install.ps1 | iex
```

Launch after setup via:

```powershell
unsloth studio -H 0.0.0.0 -p 8888
```

#### Docker

Use our [`unsloth/unsloth`](https://hub.docker.com/r/unsloth/unsloth) Docker image. Run the following, replacing `mypassword` with a password of your own:

```bash
docker run -d -e JUPYTER_PASSWORD="mypassword" \
  -p 8888:8888 -p 8000:8000 -p 2222:22 \
  -v "$(pwd)/work:/workspace/work" \
  --gpus all \
  unsloth/unsloth
```
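The `docker run` command above hard-codes `mypassword`. A minimal sketch, assuming a POSIX shell with `od` and `/dev/urandom` (available on Linux, macOS and WSL), generates a random password first and wraps the same flags in a hypothetical `start_unsloth_container` function. The image name, ports and mount are copied unchanged from the command above.

```bash
# Generate a random 32-character hex password instead of hard-coding one.
# Reads 16 bytes from /dev/urandom and prints them as hex without spaces.
JUPYTER_PASSWORD="$(od -An -N16 -tx1 /dev/urandom | tr -d ' \n')"
echo "Jupyter password: $JUPYTER_PASSWORD"

# Hypothetical wrapper (not an Unsloth command) around the docker run above.
start_unsloth_container() {
  # Create the host folder first so it is owned by you, not by the Docker daemon.
  mkdir -p "$(pwd)/work"
  docker run -d -e JUPYTER_PASSWORD="$JUPYTER_PASSWORD" \
    -p 8888:8888 -p 8000:8000 -p 2222:22 \
    -v "$(pwd)/work:/workspace/work" \
    --gpus all \
    unsloth/unsloth
}

# Usage:
#   start_unsloth_container
```

Defining the function does not start anything; the container only launches when `start_unsloth_container` is called, so you can note the printed password first.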

#### macOS, Linux, WSL developer installs:

```bash
git clone https://github.com/unslothai/unsloth
cd unsloth
./install.sh --local
unsloth studio -H 0.0.0.0 -p 8888
```

To update:

```bash
unsloth studio update --local
```

#### Windows PowerShell developer installs:

```powershell
git clone https://github.com/unslothai/unsloth.git
cd unsloth
Set-ExecutionPolicy -Scope Process -ExecutionPolicy Bypass
.\install.ps1 --local
unsloth studio -H 0.0.0.0 -p 8888
```

To update:

```powershell
unsloth studio update --local
```

#### Nightly - macOS, Linux, WSL:

```bash
git clone https://github.com/unslothai/unsloth
cd unsloth
git checkout nightly
./install.sh --local
unsloth studio -H 0.0.0.0 -p 8888
```

To launch Studio afterwards:

```bash
unsloth studio -H 0.0.0.0 -p 8888
```
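Assuming `./install.sh --local` installs from whichever branch is currently checked out (as the `git checkout nightly` step implies), it can help to confirm the clone is still on `nightly` before reinstalling. The sketch below uses a hypothetical `check_nightly` helper, not an Unsloth command, and assumes the clone lives in `./unsloth` as in the commands above.

```bash
# Hypothetical helper (not an Unsloth command): report whether a git
# checkout, ./unsloth by default, is on the nightly branch.
check_nightly() {
  repo="${1:-unsloth}"
  # symbolic-ref prints the current branch name; it fails on a detached HEAD
  # or when the directory is not a git repository.
  if ! branch="$(git -C "$repo" symbolic-ref --short HEAD 2>/dev/null)"; then
    echo "error: $repo is not a git checkout on a branch" >&2
    return 1
  fi
  if [ "$branch" = "nightly" ]; then
    echo "on nightly"
  else
    echo "on '$branch', not nightly; run: git -C $repo checkout nightly" >&2
    return 1
  fi
}

# Usage, from the directory that contains the clone:
#   check_nightly unsloth
```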

#### Nightly - Windows:

Run in Windows PowerShell:

```powershell
git clone https://github.com/unslothai/unsloth.git
cd unsloth
git checkout nightly
Set-ExecutionPolicy -Scope Process -ExecutionPolicy Bypass
.\install.ps1 --local
unsloth studio -H 0.0.0.0 -p 8888
```

To launch Studio afterwards:

```powershell
unsloth studio -H 0.0.0.0 -p 8888
```

### Unsloth Core (code-based)

#### Linux, WSL:

```bash
curl -LsSf https://astral.sh/uv/install.sh | sh
uv venv unsloth_env --python 3.13
source unsloth_env/bin/activate
uv pip install unsloth --torch-backend=auto
```
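`uv pip install` targets the active virtual environment, so if the `source unsloth_env/bin/activate` step is skipped the install may fail or land in a different environment. A small guard could look like the sketch below; it assumes the environment name `unsloth_env` from the commands above and relies on `activate` exporting `VIRTUAL_ENV`, which is standard for Python virtual environments. `require_unsloth_env` is a hypothetical name.

```bash
# Hypothetical guard (not an Unsloth command): succeed only when the active
# virtual environment is the unsloth_env created above.
require_unsloth_env() {
  case "${VIRTUAL_ENV:-}" in
    */unsloth_env)
      echo "using $VIRTUAL_ENV" ;;
    "")
      echo "error: no virtual environment active; run: source unsloth_env/bin/activate" >&2
      return 1 ;;
    *)
      echo "error: active environment is $VIRTUAL_ENV, expected unsloth_env" >&2
      return 1 ;;
  esac
}

# Usage:
#   require_unsloth_env && uv pip install unsloth --torch-backend=auto
```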

#### Windows:

```powershell
winget install -e --id Python.Python.3.13
winget install --id=astral-sh.uv -e
uv venv unsloth_env --python 3.13
.\unsloth_env\Scripts\activate
uv pip install unsloth --torch-backend=auto
```

For Windows, `pip install unsloth` works only if you have PyTorch installed. Read our [Windows Guide](https://unsloth.ai/docs/get-started/install/windows-installation).

You can use the same Docker image as Unsloth Studio.

#### NVIDIA Blackwell, DGX Spark:

For RTX 50-series, B200 and 6000 GPUs, use `uv pip install unsloth --torch-backend=auto`. Read our guides for [Blackwell](https://unsloth.ai/docs/blog/fine-tuning-llms-with-blackwell-rtx-50-series-and-unsloth) and [DGX Spark](https://unsloth.ai/docs/blog/fine-tuning-llms-with-nvidia-dgx-spark-and-unsloth).

### License

Unsloth uses a dual-licensing model of Apache 2.0 and AGPL-3.0. The core Unsloth package remains licensed under **[Apache 2.0](https://github.com/unslothai/unsloth?tab=Apache-2.0-1-ov-file)**, while certain optional components, such as the Unsloth Studio UI, are licensed under the open-source **[AGPL-3.0](https://github.com/unslothai/unsloth?tab=AGPL-3.0-2-ov-file)** license.

This structure helps support ongoing Unsloth development while keeping the project open source and enabling the broader ecosystem to continue growing.

### Thank You to

- The [llama.cpp library](https://github.com/ggml-org/llama.cpp) that lets users run and save models with Unsloth

- The Hugging Face team and their libraries: [transformers](https://github.com/huggingface/transformers) and [TRL](https://github.com/huggingface/trl)

- The PyTorch and [TorchAO](https://github.com/unslothai/unsloth/pull/3391) teams for their contributions

- NVIDIA for their [NeMo DataDesigner](https://github.com/NVIDIA-NeMo/DataDesigner) library and their contributions

- And of course, every single person who has contributed to or used Unsloth!
