* feat(cli,core): generate tool-use summaries for compact mode

After each tool batch completes, fire a parallel fast-model call to

generate a short git-commit-subject-style label summarizing what the

batch accomplished (e.g. "Read txt files", "Searched in auth/"). In

compact mode the label replaces the generic "Tool × N" header so N

parallel tool calls collapse to a single semantic row.

The fast-model call (~1s) runs fire-and-forget, overlapped with the

next turn's API stream, so there is no perceived latency. Missing

fast model, aborted turns, and model failures all degrade silently to

the existing rendering.

The summary is also emitted as a `tool_use_summary` history entry

with `precedingToolUseIds`, keeping the shape compatible with SDK

clients that want to render collapsed tool views on their own.

Gated by `experimental.emitToolUseSummaries` (default on). Can be

overridden per-session with `QWEN_CODE_EMIT_TOOL_USE_SUMMARIES=0|1`.
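A minimal sketch of how such a gate could be resolved, assuming the environment variable wins over the setting only when it is exactly `0` or `1`. The helper name `resolveEmitToolUseSummaries` and its parameters are hypothetical and not taken from the codebase:

```typescript
// Hypothetical helper: decides whether tool-use summaries are emitted.
// Assumption: QWEN_CODE_EMIT_TOOL_USE_SUMMARIES=0|1 overrides the setting;
// any other value (or an unset variable) falls back to the setting.
type Env = Record<string, string | undefined>;

function resolveEmitToolUseSummaries(
  settingValue: boolean | undefined,
  env: Env,
): boolean {
  const override = env["QWEN_CODE_EMIT_TOOL_USE_SUMMARIES"];
  if (override === "1") return true;
  if (override === "0") return false;
  // The commit describes the setting as "default on".
  return settingValue ?? true;
}
```

Passing the environment in as a parameter keeps the sketch testable without touching the real process environment.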

The system prompt and truncation rules (300 chars per tool field,

200 chars of trailing assistant text as intent prefix) match the

existing behavior seen in other tools that emit the same message

type, so SDK consumers see a consistent shape across clients.

* fix(core): bound cleanSummary quote-strip regex to avoid ReDoS

CodeQL js/polynomial-redos flagged the /^["'`]+|["'`]+$/g pattern in

cleanSummary because its input comes from an LLM (treated as

uncontrolled). The original regex is anchored and linear in practice,

but tightening the quantifier to {1,10} both satisfies the static

check and caps engine work on pathological model output with a long

run of quotes. Ten opening/closing quotes is well past anything a real

label would produce.
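A sketch of the bounded pattern, for illustration only. `stripQuotes` is a hypothetical stand-in for the quote-stripping step of cleanSummary, and the `trim()` call is an assumption:

```typescript
// Sketch only: strips up to 10 leading and up to 10 trailing quote
// characters. The bounded {1,10} quantifier replaces the unbounded `+`.
const QUOTE_STRIP = /^["'`]{1,10}|["'`]{1,10}$/g;

function stripQuotes(label: string): string {
  return label.trim().replace(QUOTE_STRIP, "");
}
```

With this bound, a pathological run of more than ten quotes is only partially stripped instead of being scanned by an unbounded quantifier.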

* fix(cli): render tool_use_summary inline so full mode also shows the label

The summary was only visible in compact mode because the full-mode

ToolGroupMessage ignored the compactLabel prop. Compact mode got away

with this because mergeCompactToolGroups triggers refreshStatic(),

which re-renders the merged tool_group with its newly-looked-up

label. Full mode has no such refresh path, so when the fast-model

call resolves *after* the tool_group has been committed to the

append-only <Static>, there is no way to retroactively decorate it.

Switch to rendering `tool_use_summary` as its own inline history item

(a single dim `● <label>` line). New items append cleanly to <Static>,

so the summary flows in naturally once the fast-model call resolves.

Compact mode still replaces the merged tool_group header with the

label and hides the standalone summary line via the `compactMode`

guard.

With this, the feature works under the default `ui.compactMode: false`

— not just the opt-in compact view.

* docs: tool-use-summaries feature guide, settings entry, and design doc

Three new docs matching the existing fast-model feature docs layout:

- docs/users/features/tool-use-summaries.md — user-facing guide

covering full + compact rendering, configuration (settings + env),

failure modes, cost, and cross-links to followup-suggestions.

- docs/users/configuration/settings.md — register the new

experimental.emitToolUseSummaries setting next to the other

fast-model-driven UI settings.

- docs/design/tool-use-summary/tool-use-summary-design.md — deep dive

matching the compact-mode-design.md competitive-analysis style.

Documents the Claude Code port (prompt, truncation, timing, gate),

the deviations (settings layer, default on, cleanSummary, dual

render paths), and the Ink <Static> append-only rationale that

drove the inline full-mode render vs header-replacement split.

* docs: add Recommended pairing section to tool-use-summaries

Full-mode rendering of the summary works, but for small same-type

batches (Read × 3 and similar) the label visibly restates what the

tool lines already show. Pairing with ui.compactMode: true folds

the whole batch into a single labeled row, which is the cleanest

transcript shape once the label is available.

Adds a dedicated section showing the paired settings.json snippet

and explicitly calling out when each mode wins (and when to turn

the feature off instead).

* fix: address review feedback on tool-use summary generation

Addresses multiple issues from @chiga0's review:

Blocking — compact-mode label invisible for single-batch turns.

mergeCompactToolGroups's adjacency-only gating left a trailing

tool_use_summary in the merged result whenever there was no second

batch to merge across. That pushed mergedHistory.length lock-step

with history.length and MainContent's refreshStatic heuristic

(currMLen <= prevMLen) never fired, so Ink's append-only <Static>

never repainted the tool_group with its newly-looked-up label.

Drop tool_use_summary items unconditionally now; gemini_thought

still survives to avoid unnecessary repaints. New tests cover

the single-batch case and the summary-before-user-message case.

Blocking — stale summary appears after Ctrl+C on the next turn.

summarySignal captured the CURRENT turn's AbortController, but the

summary resolves during the NEXT turn's streaming window. The next

turn's submitQuery allocates a fresh controller, so the captured

signal was never aborted — Ctrl+C during the new turn used to let

the previous turn's summary land in the transcript seconds later.

Fix: dedicated per-batch AbortController tracked in a ref set,

aborted eagerly from cancelOngoingRequest; resolve-time check reads

the live abort state and turnCancelledRef.

High — summarizer input pollution.

geminiTools contained error/cancelled tools; retry-loop warnings

and "Cancelled by user" strings were feeding the fast model.

cleanSummary can only reject error-shaped output, not prevent the

model from hallucinating a plausible label from bad input (the PR's

own tmux screenshot showed "Read txt files · 5 tools" where 4 of

the 5 were prior-retry failures). Filter to status === 'success'

before building the prompt; skip the call entirely if nothing's

left.
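The filter can be sketched as follows. The `CompletedTool` shape and the `selectSummarizableTools` name are hypothetical; the real tool-call type in the codebase is richer:

```typescript
// Hypothetical shape of a finished tool call.
interface CompletedTool {
  callId: string;
  name: string;
  status: "success" | "error" | "cancelled";
}

// Returns the tools worth summarizing, or null when the fast-model call
// should be skipped because no tool in the batch succeeded.
function selectSummarizableTools(
  tools: CompletedTool[],
): CompletedTool[] | null {
  const succeeded = tools.filter((tool) => tool.status === "success");
  return succeeded.length > 0 ? succeeded : null;
}
```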

High — unstable label on merged groups.

getCompactLabel iterated all callIds and returned the first hit,

so asynchronous resolution order made the header visibly flip

from SB to SA when batch A resolved after batch B. Lock onto

item.tools[0].callId to keep stable "leading batch governs"

semantics.
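A sketch of the "leading batch governs" lookup, assuming labels are kept in a map keyed by callId. The `ToolGroup` shape and the function name are illustrative, not the project's actual types:

```typescript
// Hypothetical shape: a merged tool group with its calls in display order.
interface ToolGroup {
  tools: { callId: string }[];
}

// Looks up the label by the first tool's callId only, so a later batch
// resolving first cannot change which label the header shows.
function getStableCompactLabel(
  group: ToolGroup,
  labels: Map<string, string>,
): string | undefined {
  const leading = group.tools[0];
  return leading ? labels.get(leading.callId) : undefined;
}
```

Until the leading batch resolves, the lookup returns `undefined` and the header keeps its default rendering.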

High — force-expanded groups in compact mode had no label at all.

Compact mode routes non-force-expand groups through

CompactToolGroupDisplay (consumes compactLabel) and force-expand

groups through the full ToolGroupMessage (ignores compactLabel);

the standalone ● line was gated on !compactMode, creating a dead

zone — exactly the diagnostically valuable case. MainContent now

computes absorbedCallIds (which groups actually consume the

header replacement) and passes summaryAbsorbed to

HistoryItemDisplay; force-expand groups in compact mode get the

standalone line as the label's only path to the screen.

Medium — cleanSummary robustness.

Extend quote-strip to Unicode curly + CJK corner brackets; strip

markdown emphasis (**bold**, _italic_); broaden refusal-prefix

rejection to curly-apostrophe "I can't", Chinese "我无法 / 我不能 /

抱歉 / 无法", and "Failed to / Sorry, / Request failed". 7 new

cleanSummary tests cover the added cases.

Low — concurrent-rendering safety.

Move historyRef.current = history from render phase into

useLayoutEffect so bailed renders can't leave a dropped value.

Low — CompactToolGroupDisplay readability.

Extract renderSummaryHeader / renderDefaultHeader helpers and

document the toolCalls.length > 1 count-suffix guard so a future

"fix" to >= 1 doesn't reintroduce "Read config.json · 1 tools".

Docs — add Scope & Lifecycle section to tool-use-summaries.md

covering (1) one generation per batch shared by both modes,

(2) no backfill on toggle / session resume, (3) main-agent batches

only with the Task-tool clarification.

* fix: address second-round review feedback on tool-use summaries

Critical — force-expand groups lost their summary entirely.

Previous round's "drop tool_use_summary unconditionally" merge fix

also stripped summaries for force-expanded groups, defeating the

exact case (errors, confirmations, focused shell) where the

standalone ● label is the label's only path to the screen. The

merge function now takes an absorbedCallIds set: summaries whose

preceding callIds are all absorbed by a compact tool_group header

are dropped (so refreshStatic still fires), but force-expanded

summaries pass through to be rendered standalone by

HistoryItemDisplay. MainContent computes absorbedCallIds from raw

history and passes it in. New tests cover both the absorbed-drop

and the force-expand-preserve cases plus the empty-set default

for callers that don't compute absorption.
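The drop rule alone (not the full merge) can be sketched like this. The `HistoryItem` union and the `dropAbsorbedSummaries` name are simplified assumptions made for illustration:

```typescript
// Simplified, hypothetical history item shapes.
type HistoryItem =
  | { type: "tool_group"; callIds: string[] }
  | { type: "tool_use_summary"; precedingToolUseIds: string[]; summary: string }
  | { type: "other" };

// Drops a summary only when every preceding callId is absorbed by a
// compact header; with the empty-set default, all summaries pass through.
function dropAbsorbedSummaries(
  history: HistoryItem[],
  absorbedCallIds: ReadonlySet<string> = new Set<string>(),
): HistoryItem[] {
  return history.filter((item) => {
    if (item.type !== "tool_use_summary") return true;
    const ids = item.precedingToolUseIds;
    const allAbsorbed =
      ids.length > 0 && ids.every((id) => absorbedCallIds.has(id));
    return !allAbsorbed;
  });
}
```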

Suggestion — late-arriving summaries could land out of order.

A slow fast-model call could resolve after the next turn's

content was committed, planting the ● label between later items

in full mode. The resolve callback now captures the first batch

callId, locates the corresponding tool_group at resolve time,

and drops the summary if a newer tool_group has already appeared

in history. New test exercises this with a manually-resolved

fast-model promise.

Suggestion — truncateJson allocated full JSON for large strings.

A 10MB ReadFile result was being JSON.stringify'd in full only to

be sliced down to 300 chars. Added preTruncate that walks the

value (depth-bounded to 4) and slices string leaves to maxLength

before serialization. Tests verify the input never reaches its

full pre-cap form.
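A sketch of the idea under stated assumptions: this `preTruncate` is an illustrative reimplementation rather than the project's code, and the `"[truncated]"` marker used past the depth bound is an invented placeholder:

```typescript
const MAX_DEPTH = 4;

// Walks the value and slices string leaves to maxLength before any
// serialization happens, so a huge string is never stringified in full.
function preTruncate(value: unknown, maxLength: number, depth = 0): unknown {
  if (typeof value === "string") {
    return value.length > maxLength ? value.slice(0, maxLength) : value;
  }
  if (value === null || typeof value !== "object") return value;
  // Assumption: containers at or past the depth bound become a marker.
  if (depth >= MAX_DEPTH) return "[truncated]";
  if (Array.isArray(value)) {
    return value.map((item) => preTruncate(item, maxLength, depth + 1));
  }
  const out: Record<string, unknown> = {};
  for (const [key, child] of Object.entries(value as Record<string, unknown>)) {
    out[key] = preTruncate(child, maxLength, depth + 1);
  }
  return out;
}

// Serializes the pre-truncated value, then applies the final cap.
function truncateJson(value: unknown, maxLength: number): string {
  const serialized: string | undefined = JSON.stringify(
    preTruncate(value, maxLength),
  );
  if (serialized === undefined) return "";
  return serialized.length > maxLength
    ? serialized.slice(0, maxLength)
    : serialized;
}
```

In this sketch the string fed to `JSON.stringify` is never longer than `maxLength` per leaf, which is the property the commit's tests are described as checking.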

Suggestion — settings description over-claimed SDK emission.

The description said summaries are emitted to SDK clients as a

tool_use_summary message; the SDK plumbing isn't actually wired

in this PR (the factory is exported for follow-up). Updated

settings.json description and regenerated the vscode schema to

state CLI-only scope explicitly.

Suggestion — fastModel data-boundary not documented.

When fastModel uses a different provider than the main session

model, tool inputs/outputs cross a new auth boundary that users

may not expect. Added "Data flow & privacy" section to the user

feature doc spelling out: same-provider fast model = no scope

change; different-provider = strictly larger sharing scope; two

escape hatches (same-provider fast model OR feature off).

Code-level mitigation (metadata-only mode) deferred.

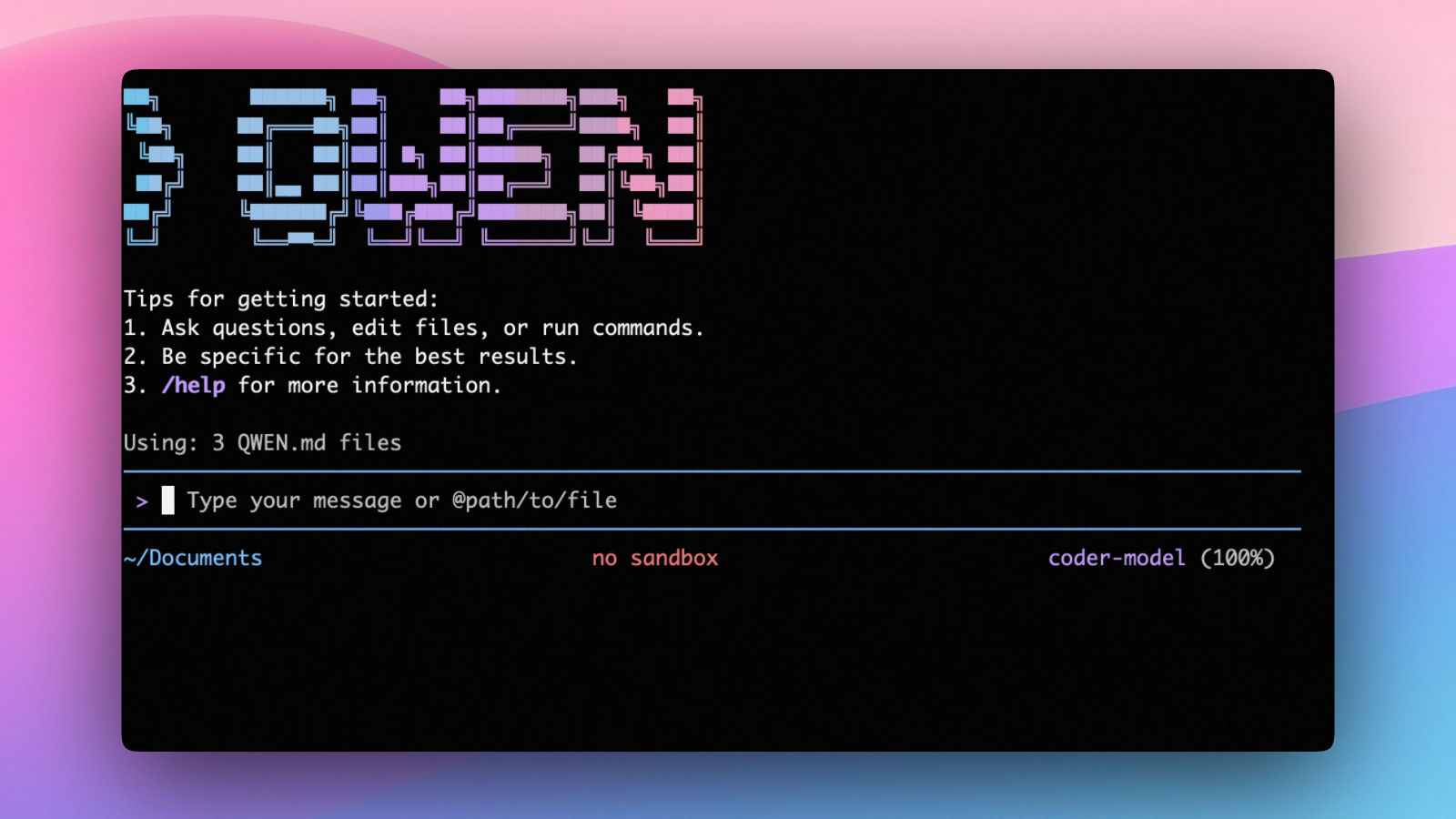

An open-source AI agent that lives in your terminal.

中文 | Deutsch | français | 日本語 | Русский | Português (Brasil)

🎉 News

- 2026-04-15: Qwen OAuth free tier has been discontinued. To continue using Qwen Code, switch to Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key. Run `qwen auth` to configure.

- 2026-04-13: Qwen OAuth free tier policy update: daily quota adjusted to 100 requests/day (from 1,000).

- 2026-04-02: Qwen3.6-Plus is now live! Get an API key from Alibaba Cloud ModelStudio to access it through the OpenAI-compatible API.

- 2026-02-16: Qwen3.5-Plus is now live!

Why Qwen Code?

Qwen Code is an open-source AI agent for the terminal, optimized for Qwen series models. It helps you understand large codebases, automate tedious work, and ship faster.

- Multi-protocol, flexible providers: use OpenAI / Anthropic / Gemini-compatible APIs, Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key.

- Open-source, co-evolving: both the framework and the Qwen3-Coder model are open-source—and they ship and evolve together.

- Agentic workflow, feature-rich: rich built-in tools (Skills, SubAgents) for a full agentic workflow and a Claude Code-like experience.

- Terminal-first, IDE-friendly: built for developers who live in the command line, with optional integration for VS Code, Zed, and JetBrains IDEs.

Installation

Quick Install (Recommended)

Linux / macOS

```bash
bash -c "$(curl -fsSL https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.sh)"
```

Windows (Run as Administrator)

Works in both Command Prompt and PowerShell:

```powershell
powershell -Command "Invoke-WebRequest 'https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.bat' -OutFile (Join-Path $env:TEMP 'install-qwen.bat'); & (Join-Path $env:TEMP 'install-qwen.bat')"
```

Note: It's recommended to restart your terminal after installation to ensure environment variables take effect.

Manual Installation

Prerequisites

Make sure you have Node.js 20 or later installed. Download it from nodejs.org.

NPM

```bash
npm install -g @qwen-code/qwen-code@latest
```

Homebrew (macOS, Linux)

```bash
brew install qwen-code
```

Quick Start

```bash
# Start Qwen Code (interactive)
qwen

# Then, in the session:
/help
/auth
```

On first use, you'll be prompted to sign in. You can run /auth anytime to switch authentication methods.

Example prompts:

What does this project do?

Explain the codebase structure.

Help me refactor this function.

Generate unit tests for this module.


🦞 Use Qwen Code for Coding Tasks in Claw

Copy the prompt below and paste it into your agent:

Use Qwen Code as a coding agent. First, fetch and read this skill reference:

https://raw.githubusercontent.com/QwenLM/qwen-code/refs/heads/main/.qwen/skills/qwen-code-claw/SKILL.md

Then follow the instructions inside to install, authenticate, and use Qwen Code via acpx for all coding tasks.

Authentication

Qwen Code supports the following authentication methods:

- API Key (recommended): use an API key from Alibaba Cloud Model Studio (Beijing / intl) or any supported provider (OpenAI, Anthropic, Google GenAI, and other compatible endpoints).

- Coding Plan: subscribe to the Alibaba Cloud Coding Plan (Beijing / intl) for a fixed monthly fee with higher quotas.

⚠️ Qwen OAuth was discontinued on April 15, 2026. If you were previously using Qwen OAuth, please switch to one of the methods above. Run `qwen` and then `/auth` to reconfigure.

API Key (recommended)

Use an API key to connect to Alibaba Cloud Model Studio or any supported provider. Supports multiple protocols:

- OpenAI-compatible: Alibaba Cloud ModelStudio, ModelScope, OpenAI, OpenRouter, and other OpenAI-compatible providers

- Anthropic: Claude models

- Google GenAI: Gemini models

The recommended way to configure models and providers is by editing ~/.qwen/settings.json (create it if it doesn't exist). This file lets you define all available models, API keys, and default settings in one place.

Quick Setup in 3 Steps

Step 1: Create or edit ~/.qwen/settings.json

Here is a complete example:

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "description": "Qwen3-Coder via Dashscope",
        "envKey": "DASHSCOPE_API_KEY"
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}
```

Step 2: Understand each field

| Field | What it does |
|---|---|
| `modelProviders` | Declares which models are available and how to connect to them. Keys like `openai`, `anthropic`, `gemini` represent the API protocol. |
| `modelProviders[].id` | The model ID sent to the API (e.g. `qwen3.6-plus`, `gpt-4o`). |
| `modelProviders[].envKey` | The name of the environment variable that holds your API key. |
| `modelProviders[].baseUrl` | The API endpoint URL (required for non-default endpoints). |
| `env` | A fallback place to store API keys (lowest priority; prefer `.env` files or `export` for sensitive keys). |
| `security.auth.selectedType` | The protocol to use on startup (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `model.name` | The default model to use when Qwen Code starts. |

Step 3: Start Qwen Code. Your configuration takes effect automatically:

```bash
qwen
```

Use the /model command at any time to switch between all configured models.

More Examples

Coding Plan (Alibaba Cloud ModelStudio) — fixed monthly fee, higher quotas

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.6-plus from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY"
      },
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.5-plus with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "glm-4.7",
        "name": "glm-4.7 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "glm-4.7 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "kimi-k2.5",
        "name": "kimi-k2.5 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "kimi-k2.5 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "BAILIAN_CODING_PLAN_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}
```

Subscribe to the Coding Plan and get your API key at Alibaba Cloud ModelStudio (Beijing) or Alibaba Cloud ModelStudio (intl).

Multiple providers (OpenAI + Anthropic + Gemini)

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "gpt-4o",
        "name": "GPT-4o",
        "envKey": "OPENAI_API_KEY",
        "baseUrl": "https://api.openai.com/v1"
      }
    ],
    "anthropic": [
      {
        "id": "claude-sonnet-4-20250514",
        "name": "Claude Sonnet 4",
        "envKey": "ANTHROPIC_API_KEY"
      }
    ],
    "gemini": [
      {
        "id": "gemini-2.5-pro",
        "name": "Gemini 2.5 Pro",
        "envKey": "GEMINI_API_KEY"
      }
    ]
  },
  "env": {
    "OPENAI_API_KEY": "sk-xxxxxxxxxxxxx",
    "ANTHROPIC_API_KEY": "sk-ant-xxxxxxxxxxxxx",
    "GEMINI_API_KEY": "AIzaxxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "gpt-4o"
  }
}
```

Enable thinking mode (for supported models like qwen3.5-plus)

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (thinking)",
        "envKey": "DASHSCOPE_API_KEY",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.5-plus"
  }
}
```

Tip: You can also set API keys via `export` in your shell or `.env` files, which take higher priority than `settings.json` → `env`. See the authentication guide for full details.

Security note: Never commit API keys to version control. The `~/.qwen/settings.json` file is in your home directory and should stay private.
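The priority described in the tip can be illustrated with a small sketch. `resolveApiKey` is a hypothetical helper, not part of Qwen Code; it treats shell `export` and `.env` values as one source because their relative order is not stated here, and it assumes an unset key is `undefined`:

```typescript
type EnvMap = Record<string, string | undefined>;

// Hypothetical helper illustrating the documented priority only.
// `processEnv` stands for variables from shell `export` or `.env` files;
// `settingsEnv` stands for the `env` block in settings.json (the fallback).
function resolveApiKey(
  envKey: string,
  processEnv: EnvMap,
  settingsEnv: EnvMap,
): string | undefined {
  return processEnv[envKey] ?? settingsEnv[envKey];
}
```

For example, with `DASHSCOPE_API_KEY` set in both places, the shell or `.env` value is the one returned.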

Local Model Setup (Ollama / vLLM)

You can also run models locally — no API key or cloud account needed. This is not an authentication method; instead, configure your local model endpoint in ~/.qwen/settings.json using the modelProviders field.

Ollama setup

- Install Ollama from ollama.com

- Pull a model: `ollama pull qwen3:32b`

- Configure `~/.qwen/settings.json`:

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3:32b",
        "name": "Qwen3 32B (Ollama)",
        "baseUrl": "http://localhost:11434/v1",
        "description": "Qwen3 32B running locally via Ollama"
      }
    ]
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3:32b"
  }
}
```

vLLM setup

- Install vLLM: `pip install vllm`

- Start the server: `vllm serve Qwen/Qwen3-32B`

- Configure `~/.qwen/settings.json`:

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "Qwen/Qwen3-32B",
        "name": "Qwen3 32B (vLLM)",
        "baseUrl": "http://localhost:8000/v1",
        "description": "Qwen3 32B running locally via vLLM"
      }
    ]
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "Qwen/Qwen3-32B"
  }
}
```

Usage

Qwen Code is an open-source terminal agent that you can use in four primary ways:

- Interactive mode (terminal UI)

- Headless mode (scripts, CI)

- IDE integration (VS Code, Zed, JetBrains IDEs)

- SDKs (TypeScript, Python, Java)

Interactive mode

```bash
cd your-project/
qwen
```

Run qwen in your project folder to launch the interactive terminal UI. Use @ to reference local files (for example @src/main.ts).

Headless mode

```bash
cd your-project/
qwen -p "your question"
```

Use -p to run Qwen Code without the interactive UI—ideal for scripts, automation, and CI/CD. Learn more: Headless mode.

IDE integration

Use Qwen Code inside your editor (VS Code, Zed, and JetBrains IDEs).

SDKs

Build on top of Qwen Code with the available SDKs:

- TypeScript: Use the Qwen Code SDK

- Python: Use the Python SDK

- Java: Use the Java SDK

Python SDK example:

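```python
import asyncio

from qwen_code_sdk import is_sdk_result_message, query


async def main() -> None:
    # Start a query against the project in `cwd`, using the `qwen` executable.
    result = query(
        "Summarize the repository layout.",
        {
            "cwd": "/path/to/project",
            "path_to_qwen_executable": "qwen",
        },
    )

    # Messages arrive as an async stream; print only the final result message.
    async for message in result:
        if is_sdk_result_message(message):
            print(message["result"])


asyncio.run(main())
```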

Commands & Shortcuts

Session Commands

- `/help` - Display available commands

- `/clear` - Clear conversation history

- `/compress` - Compress history to save tokens

- `/stats` - Show current session information

- `/bug` - Submit a bug report

- `/exit` or `/quit` - Exit Qwen Code

Keyboard Shortcuts

- `Ctrl+C` - Cancel current operation

- `Ctrl+D` - Exit (on empty line)

- `Up/Down` - Navigate command history

Learn more about Commands

Tip: In YOLO mode (`--yolo`), vision switching happens automatically without prompts when images are detected. Learn more about Approval Mode.

Configuration

Qwen Code can be configured via settings.json, environment variables, and CLI flags.

| File | Scope | Description |
|---|---|---|
| `~/.qwen/settings.json` | User (global) | Applies to all your Qwen Code sessions. Recommended for `modelProviders` and `env`. |
| `.qwen/settings.json` | Project | Applies only when running Qwen Code in this project. Overrides user settings. |

The most commonly used top-level fields in settings.json:

| Field | Description |
|---|---|
| `modelProviders` | Define available models per protocol (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `env` | Fallback environment variables (e.g. API keys). Lower priority than shell `export` and `.env` files. |
| `security.auth.selectedType` | The protocol to use on startup (e.g. `openai`). |
| `model.name` | The default model to use when Qwen Code starts. |

See the Authentication section above for complete `settings.json` examples, and the settings reference for all available options.

Benchmark Results

Terminal-Bench Performance

| Agent | Model | Accuracy |

|---|---|---|

| Qwen Code | Qwen3-Coder-480A35 | 37.5% |

| Qwen Code | Qwen3-Coder-30BA3B | 31.3% |

Ecosystem

Looking for a graphical interface?

- AionUi: A modern GUI for command-line AI tools including Qwen Code

- Gemini CLI Desktop: A cross-platform desktop/web/mobile UI for Qwen Code

Troubleshooting

If you encounter issues, check the troubleshooting guide.

Common issues:

- Qwen OAuth free tier was discontinued on 2026-04-15: Qwen OAuth is no longer available. Run `qwen`, then `/auth`, and switch to API Key or Coding Plan. See the Authentication section above for setup instructions.

To report a bug from within the CLI, run /bug and include a short title and repro steps.

Connect with Us

- Discord: https://discord.gg/RN7tqZCeDK

- Dingtalk: https://qr.dingtalk.com/action/joingroup?code=v1,k1,+FX6Gf/ZDlTahTIRi8AEQhIaBlqykA0j+eBKKdhLeAE=&_dt_no_comment=1&origin=1

Acknowledgments

This project is based on Google Gemini CLI. We acknowledge and appreciate the excellent work of the Gemini CLI team. Our main contribution focuses on parser-level adaptations to better support Qwen-Coder models.