* feat(session): auto-title sessions via fast model, add /rename --auto

The /rename work in #3093 generates kebab-case titles only when the user explicitly runs `/rename` with no args; until they do, the session picker shows the first user prompt (often truncated or misleading). This change adds a sentence-case auto-title that fires once per session after the first assistant turn, using the configured fast model.

New service: `packages/core/src/services/sessionTitle.ts`. `tryGenerateSessionTitle(config, signal)` returns a discriminated outcome (`{ok: true, title, modelUsed}` | `{ok: false, reason}`) so callers can either handle failures generically or map reasons to actionable messages. Prompt shape: 3-7 words, sentence case, good/bad examples including a CJK row, JSON schema enforced via `baseLlmClient.generateJson`. `maxAttempts: 1`, because titles are cosmetic metadata and shouldn't fight rate limits.

Trigger point: `ChatRecordingService.maybeTriggerAutoTitle` runs after `recordAssistantTurn`. Fire-and-forget promise, guarded by:

- `currentCustomTitle`: don't overwrite any existing title.
- `autoTitleController` doubles as in-flight flag; a second turn while the first is still pending is a no-op.
- `autoTitleAttempts` cap of 3: the first assistant turn may be a pure tool-call with no user-visible text; retry for a handful of turns until a title lands. Cap bounds total waste.
- `!config.isInteractive()`: headless CLI (`qwen -p`, CI) never auto-titles; spending fast-model tokens on a one-shot session is waste.
- `autoTitleDisabledByEnv()`: `QWEN_DISABLE_AUTO_TITLE=1` opt-out.
- `config.getFastModel()` falsy: skip entirely rather than falling back to the main model; auto-titling on main-model tokens is too expensive to be silent.

Persistence: `CustomTitleRecordPayload` grows a `titleSource: 'auto' | 'manual'` field. Absent on pre-change records (treated as `undefined` → manual, safe default so a user's pre-upgrade `/rename` is never silently reclassified). `SessionPicker` renders `titleSource === 'auto'` titles in dim (secondary) color; manual stays full contrast. On resume, the persisted source is rehydrated into `currentTitleSource`; without this, finalize's re-append would rewrite an auto title as manual on every resume cycle.

Cross-process manual-rename guard: when two CLI tabs target the same JSONL, in-memory state can diverge. Before writing an auto record, the IIFE re-reads the file via `sessionService.getSessionTitleInfo`. If a `/rename` from another process landed as manual, bail and sync local state; never clobber a deliberately-chosen manual title with a model guess. Cost is one 64KB tail read per successful generation.

`finalize()` aborts the in-flight controller before re-appending the title record. Session switch / shutdown doesn't have to wait on a slow fast-model call.

New user-facing command: `/rename --auto` regenerates via the same generator. It is an explicit user trigger and overwrites whatever's there (manual or auto) because the user asked. Errors route through `autoFailureMessage(reason)` so `empty_history`, `model_error`, `aborted`, etc. each get actionable guidance rather than a generic "could not generate". `/rename -- --literal-name` is the sentinel for titles that start with `--`; unknown `--flag` tokens error with a hint pointing at the sentinel. Existing `/rename <name>` and bare `/rename` (kebab-case via existing path) are unchanged, except the kebab path now prefers fast model when available and runs its output through `stripTerminalControlSequences` (same ANSI/OSC-8 hardening as the sentence-case path).

New shared util: `packages/core/src/utils/terminalSafe.ts`. `stripTerminalControlSequences(s)` strips OSC (\x1b]...\x07|\x1b\\), CSI (\x1b[...[a-zA-Z]), SS2/SS3 leaders, and C0/C1/DEL as a backstop. A model-returned `\x1b[2J` or OSC-8 hyperlink escape would otherwise execute on every SessionPicker render; both sentence-case and kebab paths now route titles through the helper before they reach the JSONL or the UI.

Tail-read extractor: `extractLastJsonStringFields(text, primaryKey, otherKeys, lineContains)` reads multiple fields from the same matching line in a single pass. Two separate tail scans could return a mismatched pair (primary from a newer record, secondary from an older one with only the primary set); the new helper guarantees the pair is atomic. Validates a proper closing quote on the primary value so a crash-truncated trailing record can't win the latest-match race. `readLastJsonStringFieldsSync` is its file-reading wrapper: same tail-window fast path and full-file fallback as the single-field version, plus a `MAX_FULL_SCAN_BYTES = 64MB` cap so a corrupt multi-GB session file can't freeze the picker.

Session reads now open with `O_NOFOLLOW` (falls back to plain RDONLY on Windows where the constant isn't exposed), as defense in depth against a symlink planted in `~/.qwen/projects/<proj>/chats/`.

Character handling: `flattenToTail` on the LLM prompt drops a dangling low surrogate after `slice(-1000)`; otherwise a CJK supplementary char or emoji cut mid-pair produces invalid UTF-16 that some providers 400. `sanitizeTitle` applies the same surrogate scrub after max-length trim, and strips paired CJK brackets (`「」 『』 【】 〈〉 《》`) as whole units so a `【Draft】 Fix login` doesn't leave a dangling `】` after leading-char strip.

`lineContains` in the title reader is tightened from the loose substring `'custom_title'` to `'"subtype":"custom_title"'` so user text containing the literal `custom_title` can't shadow a real record.

Tests: 46 new unit tests across

- `sessionTitle.test.ts` (22): success/all-failure-reasons, tool-call filter, tail-slice, surrogate scrub, ANSI/OSC-8 strip, CJK brackets.
- `chatRecordingService.autoTitle.test.ts` (15): trigger/skip matrix, in-flight guard, abort propagation on finalize, manual/auto/legacy resume symmetry, cross-process race, env opt-out, retry-after-transient.
- `sessionStorageUtils.test.ts` (13): single-pass extractor, straddle boundary, truncated trailing record, lineContains, multi-field atom.
- `renameCommand.test.ts` (8): `--auto` success, all reasons, sentinel, unknown-flag hint, positional rejection, manual/SessionService fallbacks.

* docs(session): design doc for auto session titles

Matches the session-recap design doc shape (Overview / Triggers / Architecture / Prompt Design / History Filtering / Persistence / Concurrency / Configuration / Observability / Out of Scope) and adds a Security Hardening section unique to the title path: titles render directly in the picker and persist in user-readable JSONL, so LLM-returned control sequences are an attack surface the recap path doesn't have.

Captures decisions a code-only reader has to reverse-engineer:

- Why `maxAttempts: 1` (best-effort cosmetic metadata; no retry loop).
- Why `autoTitleAttempts` cap is 3 (first turn can be pure tool-call).
- Why the auto trigger does NOT fall back to the main model but session-recap does (auto-title fires on every turn; silently charging main-model tokens is a bill surprise).
- Why `titleSource: undefined` stays unwritten on legacy records (no rewrite risks silently reclassifying user intent).
- Why the cross-process re-read sits between the LLM await and the append (manual wins at both in-process and on-disk layers).
- Why `finalize()`'s abort tolerates a controller swap (in-flight identity check).
- Why JSON-schema function calling instead of tag extraction (avoid reasoning preamble bleed; cross-provider reliability).

Placed at docs/design/session-title/ alongside session-recap, compact-mode, fork-subagent, and other per-feature design docs. No sidebar index update required, since the design folder is unindexed.

* test(rename): pin model choice in bare /rename kebab path

Addresses reviewer feedback: the bare `/rename` model selection (`config.getFastModel() ?? config.getModel()`) had no test pinning it either way. Previous tests mocked `getHistory: []`, which exits the function before the model is ever chosen, so a silent regression to either direction (always-main or always-fast) would pass CI.

Two explicit cases now:

- fastModel set → `generateContent` called with `model: 'qwen-turbo'`.
- fastModel unset → `generateContent` called with `model: 'main-model'`.

The tests intentionally mock a non-empty history so the kebab path reaches the generateContent call site instead of bailing on empty input.
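The control-sequence stripping described in the feat(session) commit above can be illustrated with a rough sketch. This is not the code in `terminalSafe.ts`; the function name `stripControlSequencesSketch` and the exact regular expressions are assumptions made for illustration, following only the categories the commit message lists (OSC, CSI, SS2/SS3, then C0/C1/DEL as a backstop).

```typescript
// Hypothetical re-implementation for illustration only.
const OSC = /\x1b\][\s\S]*?(?:\x07|\x1b\\)/g; // OSC body, terminated by BEL or ST (ESC \)
const CSI = /\x1b\[[0-?]*[ -\/]*[@-~]/g;      // CSI: parameter bytes, intermediates, final byte
const SS2_SS3 = /\x1b[NO]/g;                  // single-shift leaders
const CONTROL = /[\x00-\x1f\x7f-\x9f]/g;      // C0, DEL, and C1 as a backstop

function stripControlSequencesSketch(s: string): string {
  // Order matters: whole sequences go first, so the backstop only
  // sees leftover control bytes (for example an unterminated ESC).
  return s
    .replace(OSC, "")
    .replace(CSI, "")
    .replace(SS2_SS3, "")
    .replace(CONTROL, "");
}

const cleaned = stripControlSequencesSketch("\x1b[2JFix login");
console.log(JSON.stringify(cleaned));
```

Note that the backstop in this sketch also removes tabs and newlines, which seems acceptable for a single-line title but would be too aggressive for multi-line text.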


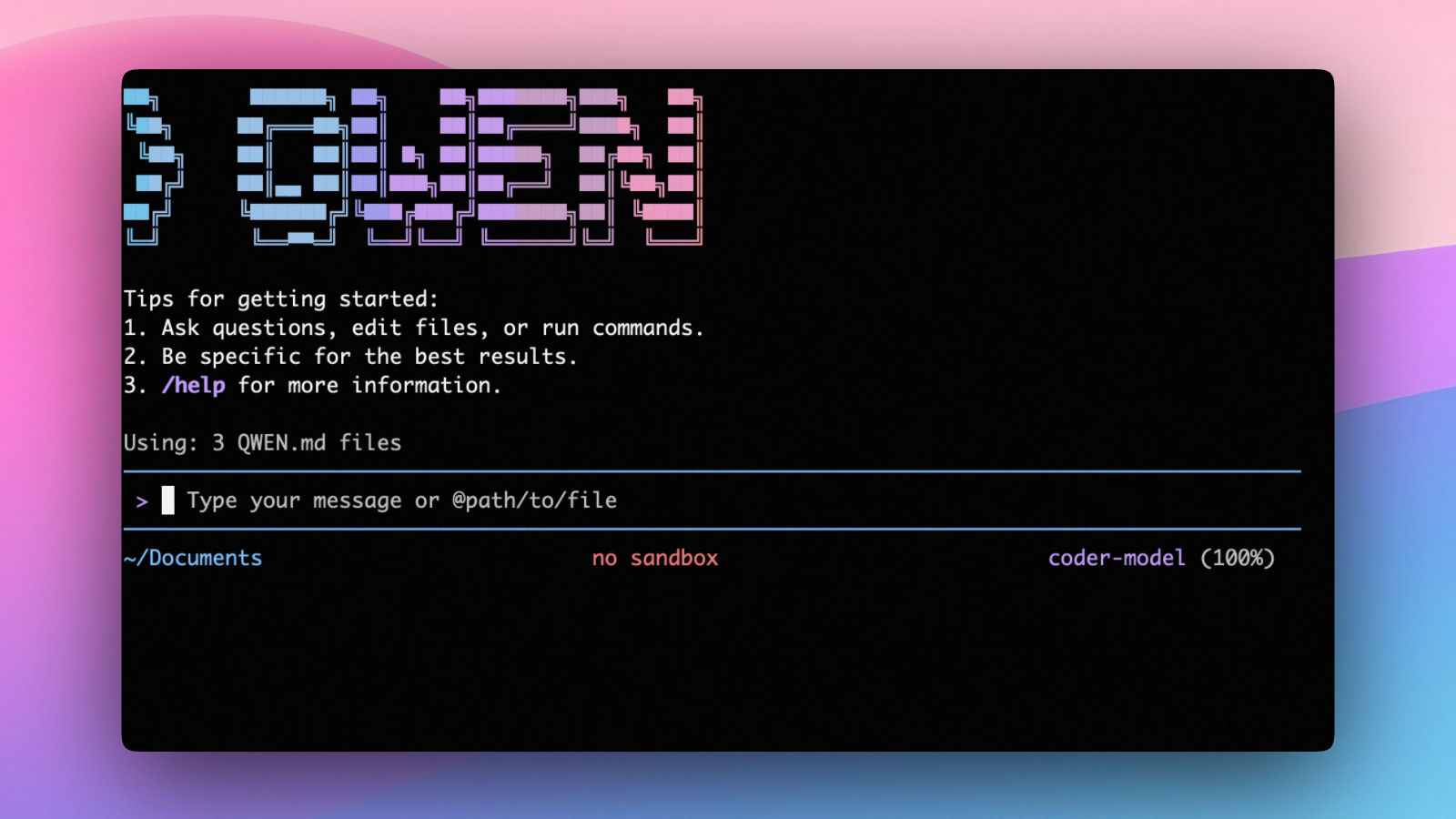

An open-source AI agent that lives in your terminal.

中文 | Deutsch | français | 日本語 | Русский | Português (Brasil)

🎉 News

- 2026-04-15: Qwen OAuth free tier has been discontinued. To continue using Qwen Code, switch to Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key. Run `qwen auth` to configure.

- 2026-04-13: Qwen OAuth free tier policy update: daily quota adjusted to 100 requests/day (from 1,000).

- 2026-04-02: Qwen3.6-Plus is now live! Get an API key from Alibaba Cloud ModelStudio to access it through the OpenAI-compatible API.

- 2026-02-16: Qwen3.5-Plus is now live!

Why Qwen Code?

Qwen Code is an open-source AI agent for the terminal, optimized for Qwen series models. It helps you understand large codebases, automate tedious work, and ship faster.

- Multi-protocol, flexible providers: use OpenAI / Anthropic / Gemini-compatible APIs, Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key.

- Open-source, co-evolving: both the framework and the Qwen3-Coder model are open-source—and they ship and evolve together.

- Agentic workflow, feature-rich: rich built-in tools (Skills, SubAgents) for a full agentic workflow and a Claude Code-like experience.

- Terminal-first, IDE-friendly: built for developers who live in the command line, with optional integration for VS Code, Zed, and JetBrains IDEs.

Installation

Quick Install (Recommended)

Linux / macOS

bash -c "$(curl -fsSL https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.sh)"

Windows (Run as Administrator)

Works in both Command Prompt and PowerShell:

powershell -Command "Invoke-WebRequest 'https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.bat' -OutFile (Join-Path $env:TEMP 'install-qwen.bat'); & (Join-Path $env:TEMP 'install-qwen.bat')"

Note: It's recommended to restart your terminal after installation to ensure environment variables take effect.

Manual Installation

Prerequisites

Make sure you have Node.js 20 or later installed. Download it from nodejs.org.

NPM

npm install -g @qwen-code/qwen-code@latest

Homebrew (macOS, Linux)

brew install qwen-code

Quick Start

# Start Qwen Code (interactive)

qwen

# Then, in the session:

/help

/auth

On first use, you'll be prompted to sign in. You can run /auth anytime to switch authentication methods.

Example prompts:

What does this project do?

Explain the codebase structure.

Help me refactor this function.

Generate unit tests for this module.


🦞 Use Qwen Code for Coding Tasks in Claw

Copy the prompt below and paste it into your agent:

Use Qwen Code as a coding agent. First, fetch and read this skill reference:

https://raw.githubusercontent.com/QwenLM/qwen-code/refs/heads/main/.qwen/skills/qwen-code-claw/SKILL.md

Then follow the instructions inside to install, authenticate, and use Qwen Code via acpx for all coding tasks.

Authentication

Qwen Code supports the following authentication methods:

- API Key (recommended): use an API key from Alibaba Cloud Model Studio (Beijing / intl) or any supported provider (OpenAI, Anthropic, Google GenAI, and other compatible endpoints).

- Coding Plan: subscribe to the Alibaba Cloud Coding Plan (Beijing / intl) for a fixed monthly fee with higher quotas.

⚠️ Qwen OAuth was discontinued on April 15, 2026. If you were previously using Qwen OAuth, please switch to one of the methods above. Run `qwen` and then `/auth` to reconfigure.

API Key (recommended)

Use an API key to connect to Alibaba Cloud Model Studio or any supported provider. Supports multiple protocols:

- OpenAI-compatible: Alibaba Cloud ModelStudio, ModelScope, OpenAI, OpenRouter, and other OpenAI-compatible providers

- Anthropic: Claude models

- Google GenAI: Gemini models

The recommended way to configure models and providers is by editing ~/.qwen/settings.json (create it if it doesn't exist). This file lets you define all available models, API keys, and default settings in one place.

Quick Setup in 3 Steps

Step 1: Create or edit ~/.qwen/settings.json

Here is a complete example:

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "description": "Qwen3-Coder via Dashscope",
        "envKey": "DASHSCOPE_API_KEY"
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}
```

Step 2: Understand each field

| Field | What it does |
|---|---|
| `modelProviders` | Declares which models are available and how to connect to them. Keys like `openai`, `anthropic`, `gemini` represent the API protocol. |
| `modelProviders[].id` | The model ID sent to the API (e.g. `qwen3.6-plus`, `gpt-4o`). |
| `modelProviders[].envKey` | The name of the environment variable that holds your API key. |
| `modelProviders[].baseUrl` | The API endpoint URL (required for non-default endpoints). |
| `env` | A fallback place to store API keys (lowest priority; prefer `.env` files or `export` for sensitive keys). |
| `security.auth.selectedType` | The protocol to use on startup (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `model.name` | The default model to use when Qwen Code starts. |
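To make the relationships between these fields concrete, here is a minimal TypeScript sketch of the settings shape with a small consistency check. The interfaces are inferred from the examples in this README and may omit fields from the real schema. `checkSettings` is a hypothetical helper, and the rule that `model.name` matches a provider entry's `id` is an assumption drawn from the examples, not a documented guarantee.

```typescript
// Shape inferred from the README examples; the real schema may have more fields.
interface ModelEntry {
  id: string;
  name?: string;
  baseUrl?: string;
  description?: string;
  envKey?: string;
}

interface Settings {
  modelProviders: Record<string, ModelEntry[]>;
  env?: Record<string, string>;
  security?: { auth?: { selectedType?: string } };
  model?: { name?: string };
}

// Hypothetical helper: report mismatches between the selected protocol,
// the declared models, and the default model.
function checkSettings(s: Settings): string[] {
  const problems: string[] = [];
  const protocol = s.security?.auth?.selectedType;
  if (!protocol) return problems; // nothing to cross-check

  const entries = s.modelProviders[protocol] ?? [];
  if (entries.length === 0) {
    problems.push(`no models declared for protocol "${protocol}"`);
  }

  const wanted = s.model?.name;
  // Assumption: model.name refers to an entry's "id", as in the examples.
  if (wanted && !entries.some((e) => e.id === wanted)) {
    problems.push(`model.name "${wanted}" not found under "${protocol}"`);
  }
  return problems;
}

const example: Settings = {
  modelProviders: {
    openai: [{ id: "qwen3.6-plus", name: "qwen3.6-plus", envKey: "DASHSCOPE_API_KEY" }],
  },
  security: { auth: { selectedType: "openai" } },
  model: { name: "qwen3.6-plus" },
};
console.log(checkSettings(example));
```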

Step 3: Start Qwen Code — your configuration takes effect automatically:

qwen

Use the /model command at any time to switch between all configured models.

More Examples

Coding Plan (Alibaba Cloud ModelStudio) — fixed monthly fee, higher quotas

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.6-plus from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY"
      },
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.5-plus with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "glm-4.7",
        "name": "glm-4.7 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "glm-4.7 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "kimi-k2.5",
        "name": "kimi-k2.5 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "kimi-k2.5 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "BAILIAN_CODING_PLAN_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}
```

Subscribe to the Coding Plan and get your API key at Alibaba Cloud ModelStudio (Beijing) or Alibaba Cloud ModelStudio (intl).

Multiple providers (OpenAI + Anthropic + Gemini)

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "gpt-4o",
        "name": "GPT-4o",
        "envKey": "OPENAI_API_KEY",
        "baseUrl": "https://api.openai.com/v1"
      }
    ],
    "anthropic": [
      {
        "id": "claude-sonnet-4-20250514",
        "name": "Claude Sonnet 4",
        "envKey": "ANTHROPIC_API_KEY"
      }
    ],
    "gemini": [
      {
        "id": "gemini-2.5-pro",
        "name": "Gemini 2.5 Pro",
        "envKey": "GEMINI_API_KEY"
      }
    ]
  },
  "env": {
    "OPENAI_API_KEY": "sk-xxxxxxxxxxxxx",
    "ANTHROPIC_API_KEY": "sk-ant-xxxxxxxxxxxxx",
    "GEMINI_API_KEY": "AIzaxxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "gpt-4o"
  }
}
```

Enable thinking mode (for supported models like qwen3.5-plus)

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (thinking)",
        "envKey": "DASHSCOPE_API_KEY",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.5-plus"
  }
}
```

Tip: You can also set API keys via `export` in your shell or `.env` files, which take higher priority than `settings.json` → `env`. See the authentication guide for full details.

Security note: Never commit API keys to version control. The `~/.qwen/settings.json` file is in your home directory and should stay private.
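The key precedence mentioned in the tip above can be sketched as a layered lookup. This is an illustrative model, not Qwen Code's actual resolver: `resolveApiKey` and its parameters are hypothetical, and while the README states that shell exports and `.env` files outrank the `env` block in `settings.json`, the relative order of shell versus `.env` used here is an assumption.

```typescript
type EnvMap = Record<string, string | undefined>;

// Hypothetical resolver: return the first non-empty value, highest priority first.
// Assumed order: shell export, then .env file, then settings.json "env".
function resolveApiKey(
  envKey: string,
  shellEnv: EnvMap,
  dotEnv: EnvMap,
  settingsEnv: EnvMap,
): { value: string; source: string } | undefined {
  const layers: Array<[string, EnvMap]> = [
    ["shell", shellEnv],
    [".env", dotEnv],
    ["settings.json", settingsEnv],
  ];
  for (const [source, env] of layers) {
    const value = env[envKey];
    if (value) return { value, source };
  }
  return undefined; // key not configured anywhere
}

const resolved = resolveApiKey(
  "DASHSCOPE_API_KEY",
  {},                                       // nothing exported in the shell
  { DASHSCOPE_API_KEY: "sk-from-dotenv" },  // placeholder value
  { DASHSCOPE_API_KEY: "sk-from-settings" } // placeholder value
);
console.log(resolved?.source);
```

Reporting the source alongside the value makes it easier to see why a stale key in `settings.json` is being ignored, or unexpectedly used.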

Local Model Setup (Ollama / vLLM)

You can also run models locally — no API key or cloud account needed. This is not an authentication method; instead, configure your local model endpoint in ~/.qwen/settings.json using the modelProviders field.

Ollama setup

- Install Ollama from ollama.com

- Pull a model: `ollama pull qwen3:32b`

- Configure `~/.qwen/settings.json`:

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3:32b",
        "name": "Qwen3 32B (Ollama)",
        "baseUrl": "http://localhost:11434/v1",
        "description": "Qwen3 32B running locally via Ollama"
      }
    ]
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3:32b"
  }
}
```

vLLM setup

- Install vLLM: `pip install vllm`

- Start the server: `vllm serve Qwen/Qwen3-32B`

- Configure `~/.qwen/settings.json`:

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "Qwen/Qwen3-32B",
        "name": "Qwen3 32B (vLLM)",
        "baseUrl": "http://localhost:8000/v1",
        "description": "Qwen3 32B running locally via vLLM"
      }
    ]
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "Qwen/Qwen3-32B"
  }
}
```
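Before pointing Qwen Code at a local endpoint, it can help to confirm that the server is up and that the model ID in `settings.json` matches what the server reports. The sketch below assumes the local server exposes the OpenAI-compatible `GET /models` route under the configured `baseUrl`. `modelsUrl` and `listLocalModels` are hypothetical helper names, and the global `fetch` assumes a recent Node.js (the README requires Node.js 20 or later).

```typescript
// Build the model-listing URL from a configured baseUrl, tolerating trailing slashes.
function modelsUrl(baseUrl: string): string {
  return baseUrl.replace(/\/+$/, "") + "/models";
}

// Hypothetical helper: ask the local server which model IDs it serves.
// Assumes an OpenAI-style response of the form { data: [{ id: "..." }, ...] }.
async function listLocalModels(baseUrl: string): Promise<string[]> {
  const res = await fetch(modelsUrl(baseUrl));
  if (!res.ok) {
    throw new Error(`local endpoint answered HTTP ${res.status}`);
  }
  const body = (await res.json()) as { data?: Array<{ id: string }> };
  return (body.data ?? []).map((m) => m.id);
}

// Usage (requires a running server, so it is left commented out):
// listLocalModels("http://localhost:11434/v1").then((ids) => console.log(ids));
console.log(modelsUrl("http://localhost:8000/v1/"));
```

If the ID in `model.name` is missing from the returned list, the mismatch is worth fixing before launching `qwen`.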

Usage

Qwen Code is an open-source terminal agent that you can use in four primary ways:

- Interactive mode (terminal UI)

- Headless mode (scripts, CI)

- IDE integration (VS Code, Zed, JetBrains IDEs)

- TypeScript SDK

Interactive mode

cd your-project/

qwen

Run qwen in your project folder to launch the interactive terminal UI. Use @ to reference local files (for example @src/main.ts).

Headless mode

cd your-project/

qwen -p "your question"

Use -p to run Qwen Code without the interactive UI—ideal for scripts, automation, and CI/CD. Learn more: Headless mode.
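For automation, the headless invocation above can be wrapped in a small Node.js script. This is a sketch under stated assumptions: `qwen` is on `PATH`, the answer arrives on stdout as plain text, and `headlessArgs` and `askQwen` are hypothetical helper names. Only the `-p` flag comes from this README.

```typescript
import { spawnSync } from "node:child_process";

// Build the argument list for a headless run.
// Passing the prompt as its own array element avoids shell-quoting problems.
function headlessArgs(prompt: string): string[] {
  return ["-p", prompt];
}

// Hypothetical wrapper: run `qwen -p <prompt>` in a project directory
// and return whatever the process wrote to stdout.
function askQwen(prompt: string, cwd: string): string {
  const result = spawnSync("qwen", headlessArgs(prompt), { cwd, encoding: "utf8" });
  if (result.error) {
    throw result.error; // for example, qwen is not installed or not on PATH
  }
  if (result.status !== 0) {
    throw new Error(`qwen exited with status ${result.status}: ${result.stderr}`);
  }
  return result.stdout;
}

// Usage (needs an installed and authenticated qwen, so it is left commented out):
// console.log(askQwen("What does this project do?", process.cwd()));
console.log(headlessArgs("What does this project do?"));
```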

IDE integration

Use Qwen Code inside your editor (VS Code, Zed, and JetBrains IDEs).

TypeScript SDK

Build on top of Qwen Code with the TypeScript SDK.

Commands & Shortcuts

Session Commands

- `/help` - Display available commands

- `/clear` - Clear conversation history

- `/compress` - Compress history to save tokens

- `/stats` - Show current session information

- `/bug` - Submit a bug report

- `/exit` or `/quit` - Exit Qwen Code

Keyboard Shortcuts

- `Ctrl+C` - Cancel current operation

- `Ctrl+D` - Exit (on empty line)

- `Up/Down` - Navigate command history

Learn more about Commands

Tip: In YOLO mode (`--yolo`), vision switching happens automatically without prompts when images are detected. Learn more about Approval Mode.

Configuration

Qwen Code can be configured via settings.json, environment variables, and CLI flags.

| File | Scope | Description |
|---|---|---|
| `~/.qwen/settings.json` | User (global) | Applies to all your Qwen Code sessions. Recommended for `modelProviders` and `env`. |
| `.qwen/settings.json` | Project | Applies only when running Qwen Code in this project. Overrides user settings. |
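The override relationship in the table above can be pictured as a merge in which project values win. The sketch below is one plausible model, not the actual implementation. `mergeSettings` is a hypothetical name, and the choice to merge nested objects key by key while replacing arrays and scalars wholesale is an assumption, since this README does not spell out the merge rules.

```typescript
type Json = string | number | boolean | null | Json[] | { [key: string]: Json };
type JsonObject = { [key: string]: Json };

function isObject(v: Json | undefined): v is JsonObject {
  return typeof v === "object" && v !== null && !Array.isArray(v);
}

// Hypothetical merge: project values take precedence over user values.
// Nested objects merge recursively; arrays and scalars are replaced outright.
function mergeSettings(user: JsonObject, project: JsonObject): JsonObject {
  const out: JsonObject = { ...user };
  for (const [key, value] of Object.entries(project)) {
    const base = out[key];
    out[key] = isObject(base) && isObject(value) ? mergeSettings(base, value) : value;
  }
  return out;
}

const merged = mergeSettings(
  { model: { name: "qwen3.6-plus" }, env: { DASHSCOPE_API_KEY: "sk-xxxxxxxxxxxxx" } },
  { model: { name: "qwen3.5-plus" } }, // project-level override
);
console.log(JSON.stringify(merged));
```

Under these assumptions the project file only needs to list the keys it changes, and everything else falls through from the user file.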

The most commonly used top-level fields in settings.json:

| Field | Description |
|---|---|
| `modelProviders` | Define available models per protocol (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `env` | Fallback environment variables (e.g. API keys). Lower priority than shell `export` and `.env` files. |
| `security.auth.selectedType` | The protocol to use on startup (e.g. `openai`). |
| `model.name` | The default model to use when Qwen Code starts. |

See the Authentication section above for complete `settings.json` examples, and the settings reference for all available options.

Benchmark Results

Terminal-Bench Performance

| Agent | Model | Accuracy |
|---|---|---|
| Qwen Code | Qwen3-Coder-480B-A35B | 37.5% |
| Qwen Code | Qwen3-Coder-30B-A3B | 31.3% |

Ecosystem

Looking for a graphical interface?

- AionUi: a modern GUI for command-line AI tools, including Qwen Code

- Gemini CLI Desktop: a cross-platform desktop/web/mobile UI for Qwen Code

Troubleshooting

If you encounter issues, check the troubleshooting guide.

Common issues:

- Qwen OAuth free tier was discontinued on 2026-04-15: Qwen OAuth is no longer available. Run `qwen` → `/auth` and switch to API Key or Coding Plan. See the Authentication section above for setup instructions.

To report a bug from within the CLI, run /bug and include a short title and repro steps.

Connect with Us

- Discord: https://discord.gg/RN7tqZCeDK

- Dingtalk: https://qr.dingtalk.com/action/joingroup?code=v1,k1,+FX6Gf/ZDlTahTIRi8AEQhIaBlqykA0j+eBKKdhLeAE=&_dt_no_comment=1&origin=1

Acknowledgments

This project is based on Google Gemini CLI. We acknowledge and appreciate the excellent work of the Gemini CLI team. Our main contribution focuses on parser-level adaptations to better support Qwen-Coder models.