* feat(core): improve Anthropic proxy compatibility and enable global prompt cache scope

- Use authToken instead of apiKey to send an Authorization: Bearer header, avoiding dual-header conflicts with IdeaLab-style proxies

- Set User-Agent to claude-cli format and add x-app header for proxy team-rule compatibility

- Add adaptive thinking support for Claude 4.6+ models

- Enable prompt-caching-scope-2026-01-05 beta header and scope: global on system prompt cache_control to improve cross-session cache hit rates

* test: update tests for User-Agent, beta header, and cache_control changes

* fix(core): scope anthropic proxy workarounds to non-native baseURLs only

Address review feedback on PR #4020 by narrowing each workaround to where it actually applies, instead of shipping it globally.

- Gate `Authorization: Bearer` (`authToken`), `claude-cli` User-Agent, and `x-app: cli` to non-Anthropic-native baseURLs. Direct `api.anthropic.com` users keep the SDK-default `x-api-key` (`apiKey`) auth and a truthful `QwenCode` User-Agent, so usage isn't misattributed in Anthropic's logs/quotas and a stricter Anthropic backend doesn't 401 on a `Bearer`-shaped header.

- Gate the `prompt-caching-scope-2026-01-05` beta on `enableCacheControl`. When the converter isn't attaching `cache_control` to the body, the beta is dead weight and risks 4xx responses from anthropic-compatible backends that don't recognize it. Restores the `betas.length === 0` early return for the all-disabled case.

- Detect adaptive-thinking models with a numeric major/minor comparison instead of `[6-9]`. The character class missed `claude-haiku-4-6` entirely and would silently fall through to `budget_tokens` on `claude-opus-4-10` / `claude-opus-5-1` once those ship, tripping HTTP 400 with a shape the server no longer accepts.

- Honor explicit `reasoning.budget_tokens` before the adaptive branch. Adaptive omits `budget_tokens` from the wire shape, so checking it second silently dropped a user-supplied escape-hatch budget on Claude 4.6+ models.

- Add `scope: 'global'` on the tool `cache_control` entry so the largest, slowest-changing prefix actually participates in cross-session caching under the new beta; the system-only attachment was capturing maybe half the available hit-rate improvement.

- Replace the misleading `as { type: 'ephemeral' }` cast on the system block (which erased `scope` from the type while leaving it on the wire) with an `AnthropicTextBlockParam` type that mirrors the existing `AnthropicToolParam` widening, so types match the runtime shape.
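The numeric version check and the budget-first ordering described in the bullets above can be sketched as follows. This is an illustrative TypeScript sketch, not the PR's code: the regex, the `ReasoningOptions` shape, and `DEFAULT_BUDGET_TOKENS` are assumptions; only the function names and the two wire shapes come from this message.

```typescript
// The two wire shapes from the commit message; the later refactor names this
// union `AnthropicThinkingParam`.
type AnthropicThinkingParam =
  | { type: "enabled"; budget_tokens: number }
  | { type: "adaptive" };

// Hypothetical input shape for this sketch only.
interface ReasoningOptions {
  budget_tokens?: number;
}

// Assumed naming convention: claude-<family>-<major>-<minor>[-date].
// Unanchored so reseller prefixes ("bedrock/", "idealab:") still match.
// The minor part is limited to 1-2 digits so a date suffix such as
// "claude-sonnet-4-20250514" is not read as minor version 20250514.
const CLAUDE_VERSION = /claude-[a-z]+-(\d+)-(\d{1,2})(?!\d)/;

function modelSupportsAdaptiveThinking(model: string): boolean {
  const match = CLAUDE_VERSION.exec(model.toLowerCase());
  if (match === null) return false;
  const major = Number(match[1]);
  const minor = Number(match[2]);
  // Numeric comparison: accepts 4.6 through 4.10 and any 5.x, which a
  // [6-9] character class on the minor digit cannot express.
  return major > 4 || (major === 4 && minor >= 6);
}

// Hypothetical fallback budget for pre-4.6 models in this sketch.
const DEFAULT_BUDGET_TOKENS = 16_000;

function buildThinkingConfig(
  model: string,
  reasoning: ReasoningOptions | undefined,
): AnthropicThinkingParam {
  // 1. An explicit budget is the user's escape hatch, so it is checked first;
  //    the adaptive shape has no budget_tokens field and would drop it.
  if (reasoning?.budget_tokens !== undefined) {
    return { type: "enabled", budget_tokens: reasoning.budget_tokens };
  }
  // 2. 4.6+ models get the adaptive shape.
  if (modelSupportsAdaptiveThinking(model)) {
    return { type: "adaptive" };
  }
  // 3. Older models fall back to a fixed budget.
  return { type: "enabled", budget_tokens: DEFAULT_BUDGET_TOKENS };
}
```

Checking `budget_tokens` before the adaptive branch is what keeps an explicit user budget from being silently dropped on 4.6+ models.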

* fix(core): keep enableCacheControl live in the converter

Follow-up on PR #4020 review: `Config.setModel()` mutates `enableCacheControl` in place (it's in `MODEL_GENERATION_CONFIG_FIELDS`), but the converter captured it once at construction. On a hot flip, the generator's per-request `prompt-caching-scope-2026-01-05` beta gate would sample the new value while the converter still emitted the old body-side `cache_control`, so the beta header and body could disagree.
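A minimal sketch of the per-call override pattern this follow-up adopts, assuming a simplified converter that only handles a system prompt. `Converter`, `convertSystem`, `ConvertOptions`, and `liveConfig` are hypothetical stand-ins for `convertGeminiRequestToAnthropic` / `convertGeminiToolsToAnthropic` and the mutable config object.

```typescript
// Hypothetical shapes for this sketch; only the override-with-fallback
// behaviour comes from the commit message.
interface ConvertOptions {
  enableCacheControl?: boolean;
}

interface SystemBlock {
  type: "text";
  text: string;
  cache_control?: { type: "ephemeral" };
}

class Converter {
  private readonly defaultEnableCacheControl: boolean;

  constructor(enableCacheControl: boolean) {
    // Captured once; with the override in place it is only a fallback.
    this.defaultEnableCacheControl = enableCacheControl;
  }

  convertSystem(text: string, options: ConvertOptions = {}): SystemBlock[] {
    // `??` keeps an explicit `false` override; only `undefined` falls back.
    const enable = options.enableCacheControl ?? this.defaultEnableCacheControl;
    const block: SystemBlock = { type: "text", text };
    if (enable) block.cache_control = { type: "ephemeral" };
    return [block];
  }
}

// Stand-in for the config object that setModel() mutates in place.
const liveConfig = { enableCacheControl: true };
const converter = new Converter(liveConfig.enableCacheControl);

function buildRequest(systemText: string) {
  // Sample the live value once per request and use it for both the body
  // and the beta header, so the two cannot disagree.
  const enableCacheControl = liveConfig.enableCacheControl;
  const system = converter.convertSystem(systemText, { enableCacheControl });
  const betas = enableCacheControl ? ["prompt-caching-scope-2026-01-05"] : [];
  return { system, betas };
}
```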

- Thread the live `contentGeneratorConfig.enableCacheControl` into the converter via a per-call options override on both `convertGeminiRequestToAnthropic` and `convertGeminiToolsToAnthropic`, falling back to the constructor-time default when the caller doesn't pass one. The generator samples the value once per `buildRequest` and forwards it to both convert calls, so the body and beta header always agree within a request, even across `setModel` flips.

- Regression test: hot-flip `enableCacheControl` from `true` to `false` on a live generator and verify the 2nd request drops both the beta header AND the body-side `cache_control` in lockstep.

- Tighten two existing beta-header tests that used `toContain` only on `interleaved-thinking` / `effort`. They now also assert `prompt-caching-scope-2026-01-05` is present (per-request keep-default and streaming paths), so accidental removal trips the test.

- Add coverage for the two previously uncovered branches of `isAnthropicNativeBaseUrl`: `*.anthropic.com` subdomains (Anthropic-native) and a malformed baseURL (URL parse failure → proxy fallthrough). Also add an `anthropic.com.evil.com` hostname-spoof case mirroring the existing DeepSeek spoof test.
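The branches covered above (native subdomain, malformed URL, hostname spoof) suggest a check along these lines. This is a sketch under the assumption that any hostname ending in `.anthropic.com` counts as native; the real predicate's host list may differ.

```typescript
// Sketch: classify a baseURL as Anthropic-native by its parsed hostname.
// Assumption: any host under anthropic.com counts as native.
function isAnthropicNativeBaseUrl(baseUrl: string): boolean {
  let hostname: string;
  try {
    hostname = new URL(baseUrl).hostname.toLowerCase();
  } catch {
    // Unparseable URL: fall through to the proxy path.
    return false;
  }
  // Suffix match on the parsed hostname, not a substring match on the raw
  // string, so "anthropic.com.evil.com" is rejected.
  return hostname.endsWith(".anthropic.com");
}
```

Parsing first and comparing the hostname is what defeats the spoof: `anthropic.com.evil.com` contains the native domain as a substring but does not end with it.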

* refactor(core): consolidate anthropic generator shapes & document edge cases

Follow-up on PR #4020 review:

- Extract `AnthropicThinkingParam` type alias. The thinking union `{ type: 'enabled'; budget_tokens } | { type: 'adaptive' }` was repeated verbatim in three places: the `MessageCreateParamsWithThinking` field, the streaming-request intersection, and `buildThinkingConfig`'s return type. Once a third shape ships, forgetting one site would silently narrow a runtime value; a single alias keeps them locked.

- Compute `useProxyIdentity` once in the constructor and pass it into `buildHeaders`. Previously `useBearerAuth` and `useProxyIdentity` named the same predicate at two call sites; collapsing them clarifies that Bearer auth + `claude-cli` UA + `x-app: cli` are one bundle that should never be split.

- Document that `modelSupportsAdaptiveThinking`'s regex is intentionally unanchored so reseller-prefixed names (`bedrock/claude-opus-4-7`, `vertex_ai/claude-sonnet-4-6@…`, `idealab:claude-opus-4-6`, …) keep matching. Tightening to `^claude-` would silently regress those.

- Soften the `prompt-caching-scope` beta comment so it describes what the code enforces (gate on the `enableCacheControl` flag) rather than promising a stronger "only ship when cache_control is on the body" invariant; the converter still skips `cache_control` on niche shapes (e.g. no system text, no tools, last user block isn't text). The looser gate is intentional; Anthropic-native ignores unused betas.

- Pin the wire shape for the `reasoning: undefined` + 4.6+ model corner case. `resolveEffectiveEffort` returns undefined on `reasoning === undefined`, so `buildThinkingConfig` ships `{ type: 'adaptive' }` with no `output_config` and no `effort-2025-11-24` beta. If Anthropic ever starts requiring `output_config.effort` alongside adaptive, this test will fail at CI rather than at runtime as a server 400.
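The "one bundle" rule can be made structural by returning every identity-related field from a single branch. A hypothetical sketch: `buildIdentity` and its input shape are invented for illustration, the exact User-Agent strings are passed in rather than guessed, and the explicit `null` for the unused credential reflects the env back-fill fix that lands later in this same PR.

```typescript
// Hypothetical shapes: the commit names the bundle members but not the exact
// User-Agent strings, so those are passed in rather than invented.
interface IdentityInputs {
  key: string;
  useProxyIdentity: boolean; // computed once in the constructor
  proxyUserAgent: string; // claude-cli-style UA (exact format not shown here)
  nativeUserAgent: string; // truthful QwenCode UA
}

interface ClientIdentity {
  apiKey: string | null;
  authToken: string | null;
  headers: Record<string, string>;
}

function buildIdentity(input: IdentityInputs): ClientIdentity {
  if (input.useProxyIdentity) {
    // One branch returns the whole proxy bundle, so Bearer auth, the
    // claude-cli UA, and x-app: cli cannot drift apart across call sites.
    return {
      apiKey: null,
      authToken: input.key,
      headers: { "User-Agent": input.proxyUserAgent, "x-app": "cli" },
    };
  }
  return {
    apiKey: input.key,
    authToken: null,
    headers: { "User-Agent": input.nativeUserAgent },
  };
}
```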

* fix(core): gate cache-scope on Anthropic-native baseURL, mirror auth gate

Follow-up on PR #4020 review: the `prompt-caching-scope-2026-01-05` beta header and the body-side `scope: 'global'` field together make up an Anthropic-only wire-shape extension. Shipping them to non-Anthropic backends (DeepSeek, IdeaLab) leaned on "unknown betas are ignored", which is true on Anthropic-native but unverified for proxies, and was silently inconsistent with the auth/identity gate, which already uses `isAnthropicNativeBaseUrl` to bind Bearer / claude-cli / x-app to the proxy path only.

- Add a `useGlobalCacheScope` predicate on the generator: true iff `enableCacheControl !== false` AND the resolved baseURL is Anthropic-native. It is plumbed per-request into both `convertGeminiRequestToAnthropic` and `convertGeminiToolsToAnthropic`; the same predicate also gates the `prompt-caching-scope-2026-01-05` beta in `buildPerRequestHeaders`, so beta + scope field always travel together.

- The converter emits `cache_control: { type: 'ephemeral' }` (per-session) when scope is off and `{ type: 'ephemeral', scope: 'global' }` when on. Non-Anthropic baseURLs go back to their pre-PR per-session caching shape; existing prompt caching keeps working with no new beta.

- Document the intentional `scope: 'global'` omission on `addCacheControlToMessages`. The last user message changes every turn (live prompt + immediate tool_results), so cross-session reuse has effectively zero hit rate; cross-session caching is concentrated on system + tool prefixes only.
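A hypothetical sketch of the predicate and the resulting `cache_control` shapes, with the generator state flattened into function arguments. Function names other than `useGlobalCacheScope` are invented for illustration, and the Anthropic-native check is assumed to be computed elsewhere.

```typescript
// The two cache_control variants named in the commit message.
type CacheControl =
  | { type: "ephemeral" }
  | { type: "ephemeral"; scope: "global" };

// Free-function version of the generator predicate for this sketch.
function useGlobalCacheScope(
  enableCacheControl: boolean | undefined,
  baseUrlIsAnthropicNative: boolean,
): boolean {
  // `!== false` treats an unset flag as enabled.
  return enableCacheControl !== false && baseUrlIsAnthropicNative;
}

// System and tool prefixes: no marker when caching is off, per-session when
// scope is off, cross-session when scope is on.
function prefixCacheControl(
  enableCacheControl: boolean,
  globalScope: boolean,
): CacheControl | undefined {
  if (!enableCacheControl) return undefined;
  return globalScope ? { type: "ephemeral", scope: "global" } : { type: "ephemeral" };
}

// The last user message changes every turn, so it always stays per-session.
function lastUserMessageCacheControl(enableCacheControl: boolean): CacheControl | undefined {
  return enableCacheControl ? { type: "ephemeral" } : undefined;
}
```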

Tests:

- DeepSeek baseURL pins the proxy auth/identity path (`authToken` / claude-cli UA / `x-app: cli`). Documents the contract assumption that DeepSeek's anthropic-compatible endpoint accepts `Authorization: Bearer`; any future deviation surfaces here rather than at runtime for users.

- Non-Anthropic baseURL strips the cache-scope beta AND `scope: 'global'` from the wire shape, while keeping per-session `cache_control: { type: 'ephemeral' }` on system / tools.

- Hot-flip test extended to assert tool `cache_control` flips alongside system / user / beta header.

- Converter-level tests for per-call `enableCacheControl` and `useGlobalCacheScope` overrides: both directions of the constructor default (true→false, false→true) and the scope-independent-of-source case (cache on, scope off → per-session shape).

- baseConfig in the per-request anthropic-beta block now targets `api.anthropic.com` so cache-scope assertions remain meaningful; the proxy-baseURL behavior is covered separately.

* docs(core): tighten useGlobalCacheScope JSDoc — baseUrl is NOT hot-mutated

#4020 review: the JSDoc claimed `Config.setModel()` mutates both `enableCacheControl` AND `baseUrl` in place. Per the current Config implementation, only the qwen-oauth hot-update path mutates `enableCacheControl` in place; non-qwen-oauth providers go through the refresh path, which recreates the ContentGenerator (so `baseUrl` is captured fresh at construct time, not mutated).

Tightened the wording to reflect actual behavior and kept the read-both-each-request defense (cheap, and avoids stale-cache surprises if the hot-update list ever expands).

* fix(core)!: suppress env back-fill so proxy auth doesn't leak real Anthropic key

#4020 review (tanzhenxin, severity high): the IdeaLab-proxy branch spread `{ authToken: <key> }` and omitted `apiKey` entirely. The Anthropic SDK constructor destructures with defaults (`apiKey = readEnv('ANTHROPIC_API_KEY') ?? null`), and destructuring defaults only fire for `undefined`, so an omitted `apiKey` lets `ANTHROPIC_API_KEY` back-fill it. The SDK's auth resolver then prefers `apiKey` over `authToken`, shipping `X-Api-Key` (not `Authorization: Bearer`) on the wire. Concrete impact: a user with `ANTHROPIC_API_KEY=sk-ant-…` exported (normal for anyone also running Claude Code in the same shell) who configured qwen-code with an IdeaLab proxy plus an IdeaLab token would leak their real Anthropic key as `X-Api-Key` to the third-party proxy endpoint.
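The root cause is plain JavaScript semantics: a destructuring default replaces `undefined` but not `null`. The sketch below mimics the constructor pattern quoted above with a hypothetical `resolveAuthHeader` function and a fake environment object; it is not the SDK's implementation.

```typescript
// Mimics the constructor pattern quoted above; this is not the SDK's code.
interface ClientOptions {
  apiKey?: string | null;
  authToken?: string | null;
}

type Env = Record<string, string | undefined>;

function resolveAuthHeader(
  env: Env,
  {
    // Destructuring defaults fire only when the property is `undefined`.
    apiKey = env.ANTHROPIC_API_KEY ?? null,
    authToken = env.ANTHROPIC_AUTH_TOKEN ?? null,
  }: ClientOptions = {},
): string | null {
  // apiKey is preferred over authToken, as described above.
  if (apiKey) return `X-Api-Key: ${apiKey}`;
  if (authToken) return `Authorization: Bearer ${authToken}`;
  return null;
}

const shellEnv: Env = { ANTHROPIC_API_KEY: "sk-ant-real" };

// Before the fix: apiKey omitted, so the env value back-fills it.
const before = resolveAuthHeader(shellEnv, { authToken: "idealab-token" });
// before === "X-Api-Key: sk-ant-real"  (the real key goes to the proxy)

// After the fix: an explicit null suppresses the default.
const after = resolveAuthHeader(shellEnv, { apiKey: null, authToken: "idealab-token" });
// after === "Authorization: Bearer idealab-token"
```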

- Pass `apiKey: null` explicitly on the proxy branch and `authToken: null` on the Anthropic-native branch. An explicit `null` suppresses the destructuring default, so the env back-fill no longer fires.

- New helper `resolveEffectiveBaseUrl` mirrors the SDK's own destructuring order (config → `ANTHROPIC_BASE_URL` env → SDK default). `isAnthropicNativeBaseUrl` now consults the env too, so a user configuring the proxy purely through `ANTHROPIC_BASE_URL` (qwen-code `baseUrl` unset) gets the proxy identity bundle instead of silently shipping native auth + UA + cache-scope beta to the proxy.
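A minimal sketch of the resolution order and how the native check consumes it. The two-argument `isAnthropicNativeBaseUrl` signature is hypothetical, and the hostname rule (any host under `anthropic.com`) is an assumption.

```typescript
type Env = Record<string, string | undefined>;

// SDK default named in the tests below.
const SDK_DEFAULT_BASE_URL = "https://api.anthropic.com";

// config -> ANTHROPIC_BASE_URL env -> SDK default.
function resolveEffectiveBaseUrl(configBaseUrl: string | undefined, env: Env): string {
  return configBaseUrl ?? env.ANTHROPIC_BASE_URL ?? SDK_DEFAULT_BASE_URL;
}

// Hypothetical signature: classify the effective URL, not just the config
// value, so a proxy configured only through the env gets the proxy identity.
function isAnthropicNativeBaseUrl(configBaseUrl: string | undefined, env: Env): boolean {
  const effective = resolveEffectiveBaseUrl(configBaseUrl, env);
  try {
    return new URL(effective).hostname.endsWith(".anthropic.com");
  } catch {
    // Unparseable URL: treat as a proxy.
    return false;
  }
}
```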

Tests:

- ANTHROPIC_API_KEY env + proxy baseURL → ctor receives `apiKey: null` and `authToken: our-key`. Locks in the credential-leak fix.

- ANTHROPIC_AUTH_TOKEN env + Anthropic-native baseURL → ctor receives `authToken: null` and `apiKey: our-key`. Symmetric guard for the inverse direction.

- ANTHROPIC_BASE_URL env points to proxy, config.baseUrl unset → proxy identity bundle (claude-cli UA, x-app, Bearer auth) applies.

- ANTHROPIC_BASE_URL unset → SDK default api.anthropic.com path keeps native identity (predicate doesn't misclassify the SDK default as a proxy).

- config.baseUrl wins over ANTHROPIC_BASE_URL, mirroring the SDK's own resolution order.

- Existing 7 identity tests updated from `toBeUndefined()` to `toBeNull()` to match the new explicit-suppression contract.

* refactor(core): gate cache-scope beta on body presence, not just predicate

#4020 review (Copilot): the comment promised "beta and body-side scope: 'global' field always ship together", but the gate was just `useGlobalCacheScope()`. In the degenerate case where the predicate is true but the request body has no system text AND no tools, the beta would still ship without any matching `cache_control.scope: 'global'` on the wire, overstating the contract and shipping dead weight.

- New `hasGlobalCacheScopeOnWire(req)` scans the assembled request body (system block when shaped as `TextBlockParam[]`, tools array) for any `cache_control: { …, scope: 'global' }` entry. `buildPerRequestHeaders` gates the `prompt-caching-scope-2026-01-05` beta on this scan, so the beta and the body field share a single source of truth, with no window between sampling the predicate and emitting the body where the two could diverge.

- `useGlobalCacheScope()` is still sampled once per `buildRequest` and threaded into the converter to decide whether to ATTACH `scope: 'global'` to the body. The body scan downstream then derives the beta from what actually landed.
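A sketch of the body scan, assuming simplified request types (the real SDK types such as `TextBlockParam` carry more fields). `betasFor` and `CACHE_SCOPE_BETA` are hypothetical names; the point is that the beta list is derived from the assembled body rather than from the predicate.

```typescript
// Minimal request-body shapes for this sketch (the real SDK types are richer).
interface CacheControl {
  type: "ephemeral";
  scope?: "global";
}
interface TextBlock {
  type: "text";
  text: string;
  cache_control?: CacheControl;
}
interface Tool {
  name: string;
  cache_control?: CacheControl;
}
interface RequestBody {
  system?: string | TextBlock[];
  tools?: Tool[];
}

const CACHE_SCOPE_BETA = "prompt-caching-scope-2026-01-05";

function hasGlobalCacheScopeOnWire(req: RequestBody): boolean {
  const isGlobal = (cc: CacheControl | undefined) => cc?.scope === "global";
  // A plain-string system prompt carries no cache_control, so only the
  // block-array form is scanned.
  const inSystem =
    Array.isArray(req.system) && req.system.some((b) => isGlobal(b.cache_control));
  const inTools = (req.tools ?? []).some((t) => isGlobal(t.cache_control));
  return inSystem || inTools;
}

// Derive the beta from what actually landed in the body.
function betasFor(req: RequestBody, otherBetas: string[]): string[] {
  return hasGlobalCacheScopeOnWire(req) ? [...otherBetas, CACHE_SCOPE_BETA] : otherBetas;
}
```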

Tests:

- New: empty systemInstruction + no tools + Anthropic-native + cache on → beta NOT shipped (degenerate body-scan case).

- New: empty systemInstruction + non-empty tools → beta shipped (tool scope:'global' triggers the scan).

- Existing per-request beta tests now include a `systemInstruction` so the body has the scope field; the degenerate case is covered by the new dedicated test.

Also tightened two stale comments (#3217834451, #3217834505) that claimed `Config.setModel()` mutates both `enableCacheControl` and `baseUrl` in place. Only `enableCacheControl` is hot-mutated (qwen-oauth path); non-qwen-oauth providers recreate the generator on refresh, so `baseUrl` is captured fresh at construct time. The comments now describe the real in-place mutation and note the qwen-oauth boundary.

* fix(core): trim config.baseUrl and document x-app in buildHeaders

#4020 review (Copilot): two low-stakes follow-ups on


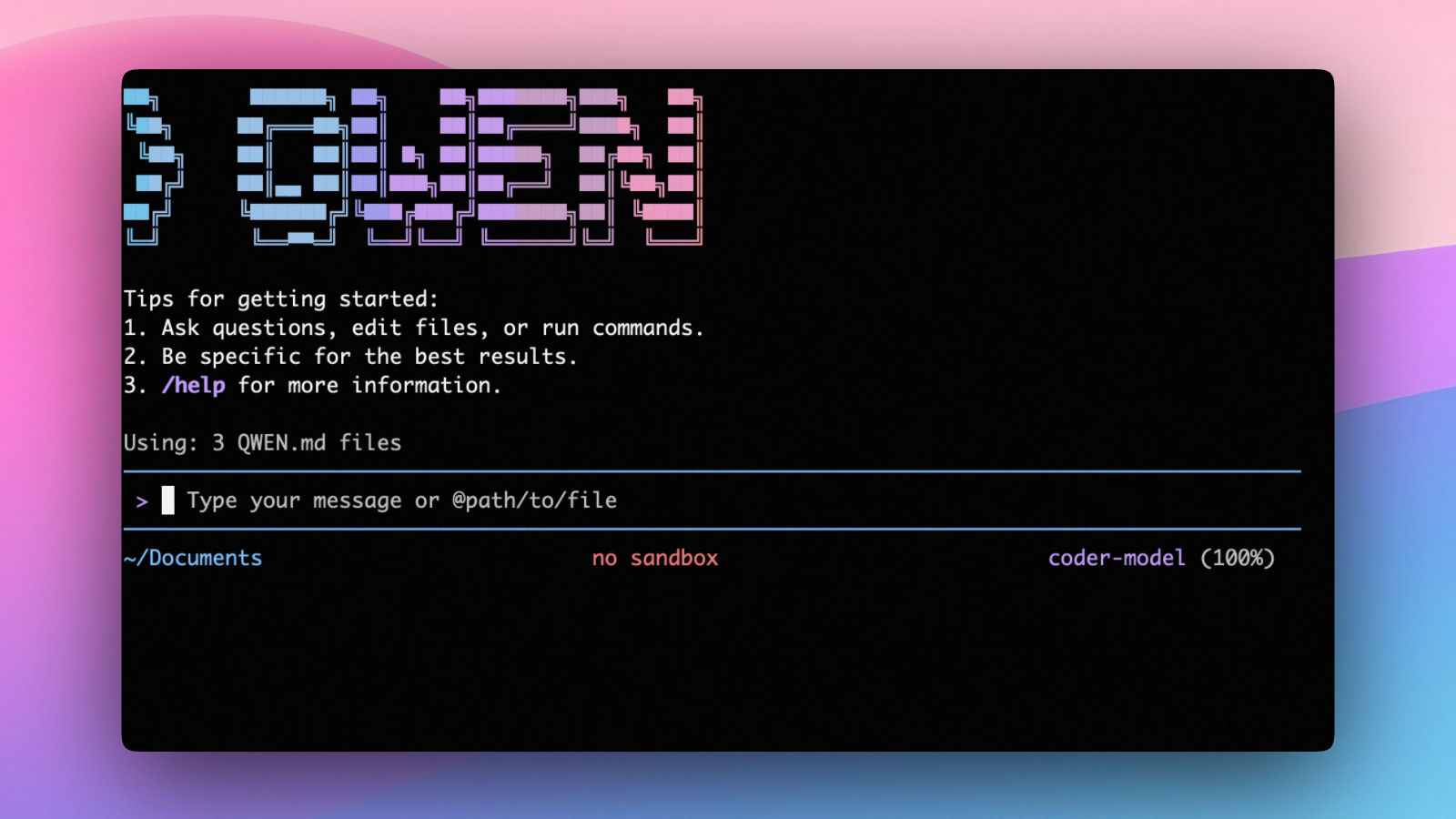

An open-source AI agent that lives in your terminal.

中文 | Deutsch | français | 日本語 | Русский | Português (Brasil)

🎉 News

- 2026-04-15: Qwen OAuth free tier has been discontinued. To continue using Qwen Code, switch to Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key. Run `qwen auth` to configure.

- 2026-04-13: Qwen OAuth free tier policy update: daily quota adjusted to 100 requests/day (from 1,000).

- 2026-04-02: Qwen3.6-Plus is now live! Get an API key from Alibaba Cloud ModelStudio to access it through the OpenAI-compatible API.

- 2026-02-16: Qwen3.5-Plus is now live!

Why Qwen Code?

Qwen Code is an open-source AI agent for the terminal, optimized for Qwen series models. It helps you understand large codebases, automate tedious work, and ship faster.

- Multi-protocol, flexible providers: use OpenAI / Anthropic / Gemini-compatible APIs, Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key.

- Open-source, co-evolving: both the framework and the Qwen3-Coder model are open-source—and they ship and evolve together.

- Agentic workflow, feature-rich: rich built-in tools (Skills, SubAgents) for a full agentic workflow and a Claude Code-like experience.

- Terminal-first, IDE-friendly: built for developers who live in the command line, with optional integration for VS Code, Zed, and JetBrains IDEs.

Installation

Quick Install (Recommended)

Linux / macOS

bash -c "$(curl -fsSL https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.sh)"

Windows (Run as Administrator)

Works in both Command Prompt and PowerShell:

powershell -Command "Invoke-WebRequest 'https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.bat' -OutFile (Join-Path $env:TEMP 'install-qwen.bat'); & (Join-Path $env:TEMP 'install-qwen.bat')"

Note: It's recommended to restart your terminal after installation to ensure environment variables take effect.

Manual Installation

Prerequisites

Make sure you have Node.js 22 or later installed. Download it from nodejs.org.

NPM

npm install -g @qwen-code/qwen-code@latest

Homebrew (macOS, Linux)

brew install qwen-code

Quick Start

# Start Qwen Code (interactive)

qwen

# Then, in the session:

/help

/auth

On first use, you'll be prompted to sign in. You can run /auth anytime to switch authentication methods.

Example prompts:

What does this project do?

Explain the codebase structure.

Help me refactor this function.

Generate unit tests for this module.

Click to watch a demo video

🦞 Use Qwen Code for Coding Tasks in Claw

Copy the prompt below and paste it into your agent:

Use Qwen Code as a coding agent. First, fetch and read this skill reference:

https://raw.githubusercontent.com/QwenLM/qwen-code/refs/heads/main/.qwen/skills/qwen-code-claw/SKILL.md

Then follow the instructions inside to install, authenticate, and use Qwen Code via acpx for all coding tasks.

Authentication

Qwen Code supports the following authentication methods:

- API Key (recommended): use an API key from Alibaba Cloud Model Studio (Beijing / intl) or any supported provider (OpenAI, Anthropic, Google GenAI, and other compatible endpoints).

- Coding Plan: subscribe to the Alibaba Cloud Coding Plan (Beijing / intl) for a fixed monthly fee with higher quotas.

⚠️ Qwen OAuth was discontinued on April 15, 2026. If you were previously using Qwen OAuth, please switch to one of the methods above. Run `qwen` and then `/auth` to reconfigure.

API Key (recommended)

Use an API key to connect to Alibaba Cloud Model Studio or any supported provider. Supports multiple protocols:

- OpenAI-compatible: Alibaba Cloud ModelStudio, ModelScope, OpenAI, OpenRouter, and other OpenAI-compatible providers

- Anthropic: Claude models

- Google GenAI: Gemini models

The recommended way to configure models and providers is by editing ~/.qwen/settings.json (create it if it doesn't exist). This file lets you define all available models, API keys, and default settings in one place.

Quick Setup in 3 Steps

Step 1: Create or edit ~/.qwen/settings.json

Here is a complete example:

{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "description": "Qwen3-Coder via Dashscope",
        "envKey": "DASHSCOPE_API_KEY"
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}

Step 2: Understand each field

| Field | What it does |
|---|---|
| `modelProviders` | Declares which models are available and how to connect to them. Keys like `openai`, `anthropic`, `gemini` represent the API protocol. |
| `modelProviders[].id` | The model ID sent to the API (e.g. `qwen3.6-plus`, `gpt-4o`). |
| `modelProviders[].envKey` | The name of the environment variable that holds your API key. |
| `modelProviders[].baseUrl` | The API endpoint URL (required for non-default endpoints). |
| `env` | A fallback place to store API keys (lowest priority; prefer `.env` files or `export` for sensitive keys). |
| `security.auth.selectedType` | The protocol to use on startup (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `model.name` | The default model to use when Qwen Code starts. |

Step 3: Start Qwen Code — your configuration takes effect automatically:

qwen

Use the /model command at any time to switch between all configured models.

More Examples

Coding Plan (Alibaba Cloud ModelStudio) — fixed monthly fee, higher quotas

{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.6-plus from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY"
      },
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.5-plus with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "glm-4.7",
        "name": "glm-4.7 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "glm-4.7 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "kimi-k2.5",
        "name": "kimi-k2.5 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "kimi-k2.5 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "BAILIAN_CODING_PLAN_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}

Subscribe to the Coding Plan and get your API key at Alibaba Cloud ModelStudio(Beijing) or Alibaba Cloud ModelStudio(intl).

Multiple providers (OpenAI + Anthropic + Gemini)

{
  "modelProviders": {
    "openai": [
      {
        "id": "gpt-4o",
        "name": "GPT-4o",
        "envKey": "OPENAI_API_KEY",
        "baseUrl": "https://api.openai.com/v1"
      }
    ],
    "anthropic": [
      {
        "id": "claude-sonnet-4-20250514",
        "name": "Claude Sonnet 4",
        "envKey": "ANTHROPIC_API_KEY"
      }
    ],
    "gemini": [
      {
        "id": "gemini-2.5-pro",
        "name": "Gemini 2.5 Pro",
        "envKey": "GEMINI_API_KEY"
      }
    ]
  },
  "env": {
    "OPENAI_API_KEY": "sk-xxxxxxxxxxxxx",
    "ANTHROPIC_API_KEY": "sk-ant-xxxxxxxxxxxxx",
    "GEMINI_API_KEY": "AIzaxxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "gpt-4o"
  }
}

Enable thinking mode (for supported models like qwen3.5-plus)

{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (thinking)",
        "envKey": "DASHSCOPE_API_KEY",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.5-plus"
  }
}

Tip: You can also set API keys via `export` in your shell or `.env` files, which take higher priority than `settings.json` → `env`. See the authentication guide for full details.
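The precedence in this tip can be pictured as a simple lookup chain. This is a hypothetical TypeScript sketch, not Qwen Code's actual loader: the function name and parameters are invented, and the relative order of shell exports versus `.env` files is an assumption (shell first), since the text only says both outrank `settings.json` → `env`.

```typescript
type EnvSource = Record<string, string | undefined>;

// Hypothetical resolver: the first defined value wins.
// Assumption: shell exports are consulted before .env files.
function resolveEnvValue(
  name: string,
  shellEnv: EnvSource,
  dotEnv: EnvSource,
  settingsEnv: EnvSource,
): string | undefined {
  return shellEnv[name] ?? dotEnv[name] ?? settingsEnv[name];
}
```

In this model, the `env` block in `settings.json` only supplies a key when neither the shell nor a `.env` file defines it.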

Security note: Never commit API keys to version control. The `~/.qwen/settings.json` file is in your home directory and should stay private.

Local Model Setup (Ollama / vLLM)

You can also run models locally — no API key or cloud account needed. This is not an authentication method; instead, configure your local model endpoint in ~/.qwen/settings.json using the modelProviders field.

Set `generationConfig.contextWindowSize` inside the matching provider entry and adjust it to the context length configured on your local server.

Ollama setup

- Install Ollama from ollama.com

- Pull a model: `ollama pull qwen3:32b`

- Configure `~/.qwen/settings.json`:

{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3:32b",
        "name": "Qwen3 32B (Ollama)",
        "baseUrl": "http://localhost:11434/v1",
        "description": "Qwen3 32B running locally via Ollama",
        "generationConfig": {
          "contextWindowSize": 131072
        }
      }
    ]
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3:32b"
  }
}

vLLM setup

- Install vLLM: `pip install vllm`

- Start the server: `vllm serve Qwen/Qwen3-32B`

- Configure `~/.qwen/settings.json`:

{
  "modelProviders": {
    "openai": [
      {
        "id": "Qwen/Qwen3-32B",
        "name": "Qwen3 32B (vLLM)",
        "baseUrl": "http://localhost:8000/v1",
        "description": "Qwen3 32B running locally via vLLM",
        "generationConfig": {
          "contextWindowSize": 131072
        }
      }
    ]
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "Qwen/Qwen3-32B"
  }
}

Usage

As an open-source terminal agent, you can use Qwen Code in four primary ways:

- Interactive mode (terminal UI)

- Headless mode (scripts, CI)

- IDE integration (VS Code, Zed)

- SDKs (TypeScript, Python, Java)

Interactive mode

cd your-project/

qwen

Run qwen in your project folder to launch the interactive terminal UI. Use @ to reference local files (for example @src/main.ts).

Headless mode

cd your-project/

qwen -p "your question"

Use -p to run Qwen Code without the interactive UI—ideal for scripts, automation, and CI/CD. Learn more: Headless mode.

IDE integration

Use Qwen Code inside your editor (VS Code, Zed, and JetBrains IDEs):

SDKs

Build on top of Qwen Code with the available SDKs:

- TypeScript: Use the Qwen Code SDK

- Python: Use the Python SDK

- Java: Use the Java SDK

Python SDK example:

import asyncio

from qwen_code_sdk import is_sdk_result_message, query


async def main() -> None:
    result = query(
        "Summarize the repository layout.",
        {
            "cwd": "/path/to/project",
            "path_to_qwen_executable": "qwen",
        },
    )

    # Stream messages and print only the final result payload.
    async for message in result:
        if is_sdk_result_message(message):
            print(message["result"])


asyncio.run(main())

Commands & Shortcuts

Session Commands

- `/help` - Display available commands
- `/clear` - Clear conversation history
- `/compress` - Compress history to save tokens
- `/stats` - Show current session information
- `/bug` - Submit a bug report
- `/exit` or `/quit` - Exit Qwen Code

Keyboard Shortcuts

- `Ctrl+C` - Cancel current operation
- `Ctrl+D` - Exit (on empty line)
- `Up`/`Down` - Navigate command history

Learn more about Commands

Tip: In YOLO mode (`--yolo`), vision switching happens automatically without prompts when images are detected. Learn more about Approval Mode

Configuration

Qwen Code can be configured via settings.json, environment variables, and CLI flags.

| File | Scope | Description |
|---|---|---|
| `~/.qwen/settings.json` | User (global) | Applies to all your Qwen Code sessions. Recommended for `modelProviders` and `env`. |
| `.qwen/settings.json` | Project | Applies only when running Qwen Code in this project. Overrides user settings. |

The most commonly used top-level fields in settings.json:

| Field | Description |
|---|---|
| `modelProviders` | Define available models per protocol (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `env` | Fallback environment variables (e.g. API keys). Lower priority than shell `export` and `.env` files. |
| `security.auth.selectedType` | The protocol to use on startup (e.g. `openai`). |
| `model.name` | The default model to use when Qwen Code starts. |

See the Authentication section above for complete `settings.json` examples, and the settings reference for all available options.

Benchmark Results

Terminal-Bench Performance

| Agent | Model | Accuracy |

|---|---|---|

| Qwen Code | Qwen3-Coder-480A35 | 37.5% |

| Qwen Code | Qwen3-Coder-30BA3B | 31.3% |

Ecosystem

Looking for a graphical interface?

- AionUi A modern GUI for command-line AI tools including Qwen Code

- Gemini CLI Desktop A cross-platform desktop/web/mobile UI for Qwen Code

Troubleshooting

If you encounter issues, check the troubleshooting guide.

Common issues:

Qwen OAuth free tier was discontinued on 2026-04-15: Qwen OAuth is no longer available. Run `qwen` → `/auth` and switch to API Key or Coding Plan. See the Authentication section above for setup instructions.

To report a bug from within the CLI, run /bug and include a short title and repro steps.

Connect with Us

- Discord: https://discord.gg/RN7tqZCeDK

- Dingtalk: https://qr.dingtalk.com/action/joingroup?code=v1,k1,+FX6Gf/ZDlTahTIRi8AEQhIaBlqykA0j+eBKKdhLeAE=&_dt_no_comment=1&origin=1

Acknowledgments

This project is based on Google Gemini CLI. We acknowledge and appreciate the excellent work of the Gemini CLI team. Our main contribution focuses on parser-level adaptations to better support Qwen-Coder models.