* docs: add auto-memory implementation log

* feat(core): add managed auto-memory storage scaffold

* feat(core): load managed auto-memory index

* feat(core): add managed auto-memory recall

* feat(core): add managed auto-memory extraction

* feat(cli): add managed auto-memory dream commands

* feat(core): add auxiliary side-query foundation

* feat(memory): add model-driven recall selection

* feat(memory): add model-driven extraction planner

* feat(core): add background task runtime foundation

* feat(memory): schedule auto dream in background

* feat(core): add background agent runner foundation

* feat(memory): add extraction agent planner

* feat(core): add dream agent planner

* feat(core): rebuild managed memory index

* feat(memory): add governance status commands

* feat(memory): add managed forget flow

* feat(core): harden background agent planning

* feat(memory): complete managed parity closure

* test(memory): add managed lifecycle integration coverage

* feat: same to cc

* feat(memory-ui): add memory saved notification and memory count badge

Feature 3 - Memory Saved Notification:

- Add HistoryItemMemorySaved type to types.ts

- Create MemorySavedMessage component for rendering '● Saved/Updated N memories'

- In useGeminiStream: detect in-turn memory writes via mapToDisplay's

memoryWriteCount field and emit 'memory_saved' history item after turn

- In client.ts: capture background dream/extract promises and expose

via consumePendingMemoryTaskPromises(); useGeminiStream listens

post-turn and emits 'Updated N memories' notification for background tasks

Feature 4 - Memory Count Badge:

- Add isMemoryOp field to IndividualToolCallDisplay

- Add memoryWriteCount/memoryReadCount to HistoryItemToolGroup

- Add detectMemoryOp() in useReactToolScheduler using isAutoMemPath

- ToolGroupMessage renders '● Recalled N memories, Wrote N memories' badge

at the top of tool groups that touch memory files

Fix: process.env bracket-access in paths.ts (noPropertyAccessFromIndexSignature)

Fix: MemoryDialog.test.tsx mock useSettings to satisfy SettingsProvider requirement

* fix(memory-ui): auto-approve memory writes, collapse memory tool groups, fix MEMORY.md path

Problem 1 - Auto-approve memory file operations:

- write-file.ts: getDefaultPermission() checks isAutoMemPath; returns 'allow'

for managed auto-memory files, 'ask' for all other files

- edit.ts: same pattern

Problem 2 - Feature 4 UX: collapse memory-only tool groups:

- ToolGroupMessage: detect when all tool calls have isMemoryOp set (pure memory

group) and all are complete; render compact '● Recalled/Wrote N memories

(ctrl+o to expand)' instead of individual tool call rows

- ctrl+o toggles expand/collapse when isFocused and group is memory-only

- Mixed groups (memory + other tools) keep badge-at-top behaviour

- Expanded state shows individual tool calls with '● Memory operations

(ctrl+o to collapse)' header

Problem 3 - MEMORY.md path mismatch:

- prompt.ts: Step 2 now references full absolute path ${memoryDir}/MEMORY.md

so the model writes to the correct location inside the memory directory,

not to the parent project directory

Fix tests:

- write-file.test.ts: add getProjectRoot to mockConfigInternal

- prompt.test.ts: update assertion to match full-path section header

* fix(memory-ui): fix duplicate notification, broken ctrl+o, and Edit tool detection

- Remove duplicate 'Saved N memories' notification: the tool group badge already

shows 'Wrote N memories'; the separate HistoryItemMemorySaved addItem after

onComplete was double-counting. Keep only the background-task path

(consumePendingMemoryTaskPromises).

- Remove ctrl+o expand: Ink's Static area freezes items on first render and

cannot respond to user input. useInput/useState(isExpanded) in a Static item

is a no-op. Removed the dead code; memory-only groups now always render as

the compact summary (no fake interactive hint).

- Fix Edit tool detection: detectMemoryOp was checking for 'edit_file' but the

real tool name constant is 'edit'. Also removed non-existent 'create_file'

(write_file covers all writes). Now editing MEMORY.md is correctly identified

as a memory write op, collapses to 'Wrote N memories', and is auto-approved.

* fix(dream): run /dream as a visible submit_prompt turn, not a silent background agent

The previous implementation ran an AgentHeadless background agent that could

take 5+ minutes with zero UI feedback — user saw a blank screen for the entire

duration and then at most one line of text.

Fix: /dream now returns submit_prompt with the consolidation task prompt so it

runs as a regular AI conversation turn. Tool calls (read_file, write_file, edit,

grep_search, list_directory, glob) are immediately visible as collapsed tool

groups as the model works through the memory files — identical UX to Claude Code.

Also export buildConsolidationTaskPrompt from dreamAgentPlanner so dreamCommand

can reuse the same detailed consolidation prompt that was already written.

* fix(memory): auto-allow ls/glob/grep on memory base directory

Add getMemoryBaseDir() to getDefaultPermission() allow list in ls.ts,

glob.ts, and grep.ts — mirrors the existing pattern in read-file.ts.

Without this, ListFiles/Glob/Grep on ~/.qwen/* would trigger an

approval dialog, blocking /dream at its very first step.

* fix(background): prevent permission prompt hangs in background agents

Match Claude Code's headless-agent intent: background memory agents must never

block on interactive permission prompts.

Wrap background runtime config so getApprovalMode() returns YOLO, ensuring any

ask decision is auto-approved instead of hanging forever. Add regression test

covering the wrapped approval mode.
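A minimal sketch of the wrapping idea described above. The `ConfigLike` interface, the `ApprovalMode` string union, and `wrapForBackground` are hypothetical stand-ins, since this log does not show the real Config type; only `getApprovalMode()` and the YOLO mode are named in the commit.

```typescript
// Hypothetical stand-ins for the real Config API, which this log does not show.
type ApprovalMode = "default" | "yolo";

interface ConfigLike {
  getApprovalMode(): ApprovalMode;
  getProjectRoot(): string;
}

// Returns a view of `base` whose getApprovalMode() always reports "yolo".
// Every other property is forwarded to the original object unchanged.
function wrapForBackground<T extends ConfigLike>(base: T): T {
  return new Proxy(base, {
    get(target, prop, receiver) {
      if (prop === "getApprovalMode") {
        return (): ApprovalMode => "yolo";
      }
      const value = Reflect.get(target, prop, receiver);
      // Bind methods so `this` still refers to the original config.
      return typeof value === "function" ? value.bind(target) : value;
    },
  });
}

const interactiveConfig: ConfigLike = {
  getApprovalMode: () => "default",
  getProjectRoot: () => "/work/project",
};
const backgroundConfig = wrapForBackground(interactiveConfig);
```

The original config is left untouched, so the interactive session keeps its own approval mode while the background agent sees the wrapped one.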

* fix(memory): run auto extract through forked agent

Make managed auto-memory extraction follow the Claude Code architecture:

background extraction now uses a forked agent to read/write memory files

directly, instead of planning patches and applying them with a separate

filesystem pipeline.

Keep the old patch/model path only as fallback if the forked agent fails.

Add regression tests covering the new execution path and tool whitelist.

* refactor(memory): remove legacy extract fallback pipeline

Delete the old patch/model/heuristic extraction path entirely.

Managed auto-memory extract now runs only through the forked-agent

execution flow, with no planner/apply fallback stages remaining.

Also remove obsolete exports/tests and update scheduler/integration

coverage to use the forked-agent-only architecture.

* refactor(memory): move auxiliary files out of memory/ directory

meta.json, extract-cursor.json, and consolidation.lock are internal

bookkeeping files, not user-visible memories. Move them one level up

to the project state dir (parent of memory/) so that the memory/

directory contains only MEMORY.md and topic files, matching the

clean layout of the upstream reference implementation.

Add getAutoMemoryProjectStateDir() helper in paths.ts and update the

three path accessors + store.test.ts path assertions accordingly.

* fix(memory): record lastDreamAt after manual /dream run

The /dream command submits a prompt to the main agent (submit_prompt),

which writes memory files directly. Because it bypasses dreamScheduler,

meta.json was never updated and /memory always showed 'never'.

Fix by:

- Exporting writeDreamManualRunToMetadata() from dream.ts

- Adding optional onComplete callback to SubmitPromptActionReturn and

SubmitPromptResult (types.ts / commands/types.ts)

- Propagating onComplete through slashCommandProcessor.ts

- Firing onComplete after turn completion in useGeminiStream.ts

- Providing the callback in dreamCommand.ts to write lastDreamAt
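The callback plumbing listed above can be sketched roughly as follows. The `SubmitPromptResult` shape here is reduced to the fields the commit mentions, and `dreamAction` and `runTurn` are hypothetical stand-ins for dreamCommand.ts and the stream hook, not the actual code.

```typescript
// Reduced, assumed shape: only `onComplete` is confirmed by the commit text.
interface SubmitPromptResult {
  type: "submit_prompt";
  content: string;
  onComplete?: () => void | Promise<void>;
}

const events: string[] = [];

// Hypothetical stand-in for the /dream command action.
function dreamAction(): SubmitPromptResult {
  return {
    type: "submit_prompt",
    content: "consolidate memory files",
    onComplete: () => {
      // The real callback would write lastDreamAt to meta.json.
      events.push("lastDreamAt written");
    },
  };
}

// Hypothetical stand-in for the stream hook: run the turn, then fire the callback.
async function runTurn(result: SubmitPromptResult): Promise<void> {
  events.push("turn started");
  await Promise.resolve(); // placeholder for the model turn
  events.push("turn finished");
  await result.onComplete?.();
}
```

Because the callback is optional, commands that return submit_prompt without it behave as before.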

* fix(memory): remove scope params from /remember in managed auto-memory mode

--global/--project are legacy save_memory tool concepts. In managed

auto-memory mode the forked agent decides the appropriate type

(user/feedback/project/reference) based on the content of the fact.

Also improve the prompt wording to explicitly ask the agent to choose

the correct type, reducing the tendency to default to 'project'.

* feat(ui): show '✦ dreaming' indicator in footer during background dream

Subscribe to getManagedAutoMemoryDreamTaskRegistry() in Footer via a

useDreamRunning() hook. While any dream task for the current project is

pending or running, display '✦ dreaming' in the right section of the

footer bar, between Debug Mode and context usage.

* refactor(memory): align dream/extract infrastructure with Claude Code patterns

Five improvements based on Claude Code parity audit:

1. Memoize getAutoMemoryRoot (paths.ts)

- Add _autoMemoryRootCache Map, keyed by projectRoot

- findCanonicalGitRoot() walks the filesystem per call; memoize avoids

repeated git-tree traversal on hot-path schedulers/scanners

- Expose clearAutoMemoryRootCache() for test teardown

2. Lock file stores PID + isProcessRunning reclaim (dreamScheduler.ts)

- acquireDreamLock() writes process.pid to the lock file body

- lockExists() reads PID and calls process.kill(pid, 0); dead/missing

PID reclaims the lock immediately instead of waiting 2h

- Stale threshold reduced to 1h (PID-reuse guard, same as CC)

3. Session scan throttle (dreamScheduler.ts)

- Add SESSION_SCAN_INTERVAL_MS = 10min (same as CC)

- Add lastSessionScanAt Map<projectRoot, number> to ManagedAutoMemoryDreamRuntime

- When time-gate passes but session-gate doesn't, throttle prevents

re-scanning the filesystem on every user turn

4. mtime-based session counting (dreamScheduler.ts)

- Replace fragile recentSessionIdsSinceDream Set in meta.json with

filesystem mtime scan (listSessionsTouchedSince)

- Mirrors Claude Code's listSessionsTouchedSince: reads session JSONL

files from Storage.getProjectDir()/chats/, filters by mtime > lastDreamAt

- Immune to meta.json corruption/loss; no per-turn metadata write

- ManagedAutoMemoryDreamRuntime accepts injectable SessionScannerFn

for clean unit testing without real session files

5. Extraction mutual exclusion extended to write_file/edit (extractScheduler.ts)

- historySliceUsesMemoryTool() now checks write_file/edit/replace/create_file

tool calls whose file_path is within isAutoMemPath()

- Previously only detected save_memory; missed direct file writes by

the main agent, causing redundant background extraction
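Item 2 above (PID stored in the lock file, reclaimed when the process is gone) can be illustrated with a small sketch. `isProcessRunning` and `lockIsHeld` are written here for illustration and are assumptions about shape, not the code in dreamScheduler.ts; the only detail taken from the commit is the `process.kill(pid, 0)` probe.

```typescript
import * as fs from "node:fs";
import * as os from "node:os";
import * as path from "node:path";

// Signal 0 runs the existence/permission check without delivering a signal.
function isProcessRunning(pid: number): boolean {
  if (!Number.isInteger(pid) || pid <= 0) return false;
  try {
    process.kill(pid, 0);
    return true;
  } catch (err) {
    // EPERM means the process exists but belongs to another user.
    return (err as NodeJS.ErrnoException).code === "EPERM";
  }
}

// Hypothetical helper: true only when the lock file names a live process.
function lockIsHeld(lockPath: string): boolean {
  let body: string;
  try {
    body = fs.readFileSync(lockPath, "utf8");
  } catch {
    return false; // no lock file at all
  }
  return isProcessRunning(Number.parseInt(body.trim(), 10));
}

const tmpDir = fs.mkdtempSync(path.join(os.tmpdir(), "dream-lock-"));
const lockPath = path.join(tmpDir, "consolidation.lock");

fs.writeFileSync(lockPath, String(process.pid));
const heldByLiveProcess = lockIsHeld(lockPath);

fs.writeFileSync(lockPath, "not-a-pid");
const heldByGarbage = lockIsHeld(lockPath);
```

This sketch leaves out the 1h stale threshold, which the commit describes as a guard against PID reuse; a fuller version would also compare the lock file's mtime.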

* docs(memory): add user-facing memory docs, i18n for all locales, simplify /forget

- Add docs/users/features/memory.md: comprehensive user-facing guide covering

QWEN.md instructions, auto-memory behaviour, all memory commands, and

troubleshooting; replaces the placeholder auto-memory.md

- Update docs/users/features/_meta.ts: rename entry auto-memory → memory

- Update docs/users/features/commands.md: add /init, /remember, /forget,

/dream rows; fix /memory description; remove /init duplicate

- Update docs/users/configuration/settings.md: add memory.* settings section

(enableManagedAutoMemory, enableManagedAutoDream) between tools and permissions

- Remove /forget --apply flag: preview-then-apply flow replaced with direct

deletion; update forgetCommand.ts, en.js, zh.js accordingly

- Add all auto-memory i18n keys to de, ja, pt, ru locales (18 keys each):

Open auto-memory folder, Auto-memory/Auto-dream status lines, never/on/off,

✦ dreaming, /forget and /remember usage strings, all managed-memory messages

- Remove dead save_memory branch from extractScheduler.partWritesToMemory()

- Add ✦ dreaming indicator to Footer.tsx with i18n; fix Footer.test.tsx mocks

- Refactor MemoryDialog.tsx auto-dream status line to use i18n

- Remove save_memory tool (memoryTool.ts/test); clean up webui references

- Add extractionPlanner.ts, const.ts and associated tests

- Delete stale docs/users/configuration/memory.md and

docs/developers/tools/memory.md (content superseded)

* refactor(memory): remove all Claude Code references from comments and test names

* test(memory): remove empty placeholder test files that cause vitest to fail

* fix eslint

* fix test in windows

* fix test

* fix(memory): address critical review findings from PR #3087

- fix(read-file): narrow auto-allow from getMemoryBaseDir() (~/.qwen) to

isAutoMemPath(projectRoot) to prevent exposing settings.json / OAuth

credentials without user approval (wenshao review)

- fix(forget): per-entry deletion instead of whole-file unlink

- assign stable per-entry IDs (relativePath:index for multi-entry files)

so the model can target individual entries without removing siblings

- rewrite file keeping unmatched entries; only unlink when file becomes

empty (wenshao review)

- fix(entries): round-trip correctness for multi-entry new-format bodies

- parseAutoMemoryEntries: plain-text line closes current entry and opens

a new one (was silently ignored when current was already set)

- renderAutoMemoryBody: emit blank line between adjacent entries so the

parser can detect entry boundaries on re-read (wenshao review)

- fix(entries): resolve two CodeQL polynomial-regex alerts

- indentedMatch: \s{2,}(?:[-*]\s+)? → [\t ]{2,}(?:[-*][\t ]+)?

- topLevelMatch: :\s*(.+)$ → :[ \t]*(\S.*)$

(github-advanced-security review)

- fix(scan.test): use forward-slash literal for relativePath expectation

since listMarkdownFiles() normalises all separators to '/' on all

platforms including Windows
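To make the regex change above concrete, here is a small comparison of the old and new value fragments. The `^([A-Za-z]+)` key prefix and the `parseTopLevel` helper are assumptions added so the patterns can run; only the fragments after the colon come from the commit. As far as I understand the alert, `\s*` followed by `(.+)` is ambiguous because both can consume the same spaces, while `[ \t]*(\S.*)` forces the capture to start at the first non-blank character.

```typescript
// Hypothetical full patterns; only the part after the key comes from the log.
const topLevelBefore = /^([A-Za-z]+):\s*(.+)$/;
const topLevelAfter = /^([A-Za-z]+):[ \t]*(\S.*)$/;

interface KeyValue {
  key: string;
  value: string;
}

function parseTopLevel(line: string, pattern: RegExp): KeyValue | null {
  const m = pattern.exec(line);
  return m ? { key: m[1] ?? "", value: m[2] ?? "" } : null;
}

const sample = "why:   keep tests fast";
const parsedBefore = parseTopLevel(sample, topLevelBefore);
const parsedAfter = parseTopLevel(sample, topLevelAfter);

// A whitespace-only value: the old pattern captures a single space,
// the new pattern rejects the line.
const blankBefore = parseTopLevel("why:    ", topLevelBefore);
const blankAfter = parseTopLevel("why:    ", topLevelAfter);
```

For ordinary lines both patterns capture the same value; they differ only on whitespace-only values, which the stricter pattern no longer matches.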

* fix(memory): replace isAutoMemPath startsWith with path.relative()

Using path.relative() instead of string startsWith() is more robust

across platforms — it correctly handles Windows path-separator

differences and avoids potential edge cases where a path prefix match

could succeed on non-separator boundaries.

Addresses github-actions review item 3 (PR #3087).
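The difference between the two checks can be shown with a short sketch. `isWithinDir` and the example paths are hypothetical and are not the actual isAutoMemPath implementation.

```typescript
import * as path from "node:path";

// True when `candidate` is `dir` itself or lies somewhere inside it.
function isWithinDir(dir: string, candidate: string): boolean {
  const rel = path.relative(path.resolve(dir), path.resolve(candidate));
  if (rel === "") return true; // same directory
  // Outside if the relative path climbs out, or (on Windows) sits on another drive.
  return rel !== ".." && !rel.startsWith(".." + path.sep) && !path.isAbsolute(rel);
}

const memoryDir = path.resolve("/tmp/demo/memory");
const inside = path.join(memoryDir, "topics", "style.md");
// Shares the string prefix "/tmp/demo/memory" but is a sibling directory.
const sibling = path.resolve("/tmp/demo/memory-backup/notes.md");

const naiveSaysInside = sibling.startsWith(memoryDir);
const relativeSaysInside = isWithinDir(memoryDir, sibling);
```

The sibling path passes the prefix test because the match ends in the middle of a directory name, which is the non-separator boundary case the commit describes.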

* feat(telemetry): add auto-memory telemetry instrumentation

Add OpenTelemetry logs + metrics for the five auto-memory lifecycle

events: extract, dream, recall, forget, and remember.

Telemetry layer (packages/core/src/telemetry/):

- constants.ts: 5 new event-name constants

(qwen-code.memory.{extract,dream,recall,forget,remember})

- types.ts: 5 new event classes with typed constructor params

(MemoryExtractEvent, MemoryDreamEvent, MemoryRecallEvent,

MemoryForgetEvent, MemoryRememberEvent)

- metrics.ts: 8 new OTel instruments (5 Counters + 3 Histograms)

with recordMemoryXxx() helpers; registered inside initializeMetrics()

- loggers.ts: logMemoryExtract/Dream/Recall/Forget/Remember() — each

emits a structured log record and calls its recordXxx() counterpart

- index.ts: re-exports all new symbols

Instrumentation call-sites:

- extractScheduler.ts ManagedAutoMemoryExtractRuntime.runTask():

emits extract event with trigger=auto, completed/failed status,

patches_count, touched_topics, and wall-clock duration

- dream.ts runManagedAutoMemoryDream():

emits dream event with trigger=auto, updated/noop status,

deduped_entries, touched_topics, and duration; covers both

agent-planner and mechanical fallback paths

- recall.ts resolveRelevantAutoMemoryPromptForQuery():

emits recall event with strategy, docs_scanned/selected, and

duration; covers model, heuristic, and none paths

- forget.ts forgetManagedAutoMemoryEntries():

emits forget event with removed_entries_count, touched_topics,

and selection_strategy (model/heuristic/none)

- rememberCommand.ts action():

emits remember event with topic=managed|legacy at command

invocation time (before agent decides the actual memory type)

* refactor(telemetry): remove memory forget/remember telemetry events

Remove EVENT_MEMORY_FORGET and EVENT_MEMORY_REMEMBER along with all

associated infrastructure that is no longer needed:

- constants.ts: remove EVENT_MEMORY_FORGET, EVENT_MEMORY_REMEMBER

- types.ts: remove MemoryForgetEvent, MemoryRememberEvent classes

- metrics.ts: remove MEMORY_FORGET_COUNT, MEMORY_REMEMBER_COUNT constants,

memoryForgetCounter, memoryRememberCounter module vars,

their initialization in initializeMetrics(), and

recordMemoryForgetMetrics(), recordMemoryRememberMetrics() functions

- loggers.ts: remove logMemoryForget(), logMemoryRemember() functions

and their imports

- index.ts: remove all re-exports for the above symbols

- memory/forget.ts: remove logMemoryForget call-site and import

- cli/rememberCommand.ts: remove logMemoryRemember call-sites and import

* change default value

* fix forked agent

* refactor(background): unify fork primitives into runForkedAgent + cleanup

- Merge runForkedQuery into runForkedAgent via TypeScript overloads:

with cacheSafeParams → GeminiChat single-turn path (ForkedQueryResult)

without cacheSafeParams → AgentHeadless multi-turn path (ForkedAgentResult)

- Delete forkedQuery.ts; move its test to background/forkedAgent.cache.test.ts

- Remove forkedQuery export from followup/index.ts

- Migrate all callers (suggestionGenerator, speculation, btwCommand, client)

to import from background/forkedAgent

- Add getFastModel() / setFastModel() to Config; expose in CLI config init

and ModelDialog / modelCommand

- Remove resolveFastModel() from AppContainer — now delegated to config.getFastModel()

- Strip Claude Code references from code comments
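The overload split in the first bullet can be sketched as below. All type shapes here (`CacheSafeParams`, `BaseOptions`, and the two result interfaces) are invented placeholders, and the function body is a stub; only the names runForkedAgent, cacheSafeParams, ForkedQueryResult, and ForkedAgentResult appear in the commit.

```typescript
// Placeholder shapes; the real fields are not shown in this log.
interface CacheSafeParams {
  systemPrompt: string;
}
interface BaseOptions {
  prompt: string;
}
interface ForkedQueryResult {
  kind: "query";
  text: string;
}
interface ForkedAgentResult {
  kind: "agent";
  turns: number;
}

// With cacheSafeParams: single-turn path.
function runForkedAgent(
  opts: BaseOptions & { cacheSafeParams: CacheSafeParams },
): Promise<ForkedQueryResult>;
// Without cacheSafeParams: multi-turn path.
function runForkedAgent(
  opts: BaseOptions & { cacheSafeParams?: undefined },
): Promise<ForkedAgentResult>;
async function runForkedAgent(
  opts: BaseOptions & { cacheSafeParams?: CacheSafeParams },
): Promise<ForkedQueryResult | ForkedAgentResult> {
  if (opts.cacheSafeParams) {
    return { kind: "query", text: `single turn: ${opts.prompt}` };
  }
  return { kind: "agent", turns: 1 };
}
```

Callers get the narrower result type from the arguments they pass, so no cast is needed at the call site.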

* fix(memory): address wenshao's critical review findings

- dream.ts: writeDreamManualRunToMetadata now persists lastDreamSessionId

and resets recentSessionIdsSinceDream, preventing auto-dream from firing

again in the same session after a manual /dream

- config.ts: gate managed auto-memory injection on getManagedAutoMemoryEnabled();

when disabled, previously saved memories are no longer injected into new sessions

- rememberCommand.ts: remove legacy save_memory branch (tool was removed);

fall back to submit_prompt directing agent to write to QWEN.md instead

- BuiltinCommandLoader.ts: only register /dream and /forget when managed

auto-memory is enabled, matching the feature's runtime availability

- forget.ts: return early in forgetManagedAutoMemoryMatches when matches is

empty, avoiding unnecessary directory scaffolding as a side effect

* fix test

* fix ci test

* feat(memory): align extract/dream agents to Claude Code patterns

- fix(client): move saveCacheSafeParams before early-return paths so

extract agents always have cache params available (fixes extract never

triggering in skipNextSpeakerCheck mode)

- feat(extract): add read-only shell tool + memory-scoped write

permissions; create inline createMemoryScopedAgentConfig() with

PermissionManager wrapper (isToolEnabled + evaluate) that allows only

read-only shell commands and write/edit within the auto-memory dir

- feat(extract): align prompt to Claude Code patterns — manifest block

listing existing files, parallel read-then-write strategy, two-step

save (memory file then index)

- feat(dream): remove mechanical fallback; runManagedAutoMemoryDream is

now agent-only and throws without config

- feat(dream): align prompt to Claude Code 4-phase structure

(Orient/Gather/Consolidate/Prune+Index); add narrow transcript grep,

relative→absolute date conversion, stale index pruning, index size cap

- fix(permissions): add isToolEnabled() to MemoryScopedPermissionManager

to prevent TypeError crash in CoreToolScheduler._schedule

- test: update dreamScheduler tests to mock dream.js; replace removed

mechanical-dedup test with scheduler infrastructure verification

* move doc to design

* refactor(memory): unify extract+dream background task management into MemoryBackgroundTaskHub

- Add memoryTaskHub.ts: single BackgroundTaskRegistry + BackgroundTaskDrainer shared

by all memory background tasks; exposes listExtractTasks() / listDreamTasks()

typed query helpers and a unified drain() method

- extractScheduler: ManagedAutoMemoryExtractRuntime accepts hub via constructor

(defaults to defaultMemoryTaskHub); test factory gets isolated fresh hub

- dreamScheduler: same pattern — sessionScanner + hub injection; BackgroundTask-

Scheduler initialized from injected hub; test factory gets isolated hub

- status.ts: replace two separate getRegistry() calls with defaultMemoryTaskHub

typed query methods

- Footer.tsx (useDreamRunning): subscribe to shared registry, filter by

DREAM_TASK_TYPE so extract tasks do not trigger the dream spinner

- index.ts: re-export memoryTaskHub.ts so defaultMemoryTaskHub/DREAM_TASK_TYPE/

EXTRACT_TASK_TYPE are available as top-level package exports

* refactor(background): introduce general-purpose BackgroundTaskHub

Replace memory-specific MemoryBackgroundTaskHub with a domain-agnostic

BackgroundTaskHub in the background/ layer. Any future background task

runtime (3rd, 4th, …) plugs in by accepting a hub via constructor

injection — no new infrastructure required.

Changes:

- Add background/taskHub.ts: BackgroundTaskHub (registry + drainer +

createScheduler() + listByType(taskType, projectRoot?)) and the

globalBackgroundTaskHub singleton. Zero knowledge of any task type.

- Delete memory/memoryTaskHub.ts: its narrow listExtractTasks /

listDreamTasks helpers are replaced by the generic listByType() call.

- Move EXTRACT_TASK_TYPE to extractScheduler.ts (owned by the runtime

that defines it); replace 3 hardcoded string literals with the const.

- Move DREAM_TASK_TYPE to dreamScheduler.ts; use hub.createScheduler()

instead of manually wiring new BackgroundTaskScheduler(reg, drain).

- status.ts: globalBackgroundTaskHub.listByType(EXTRACT_TASK_TYPE, ...)

- Footer.tsx: globalBackgroundTaskHub.registry (shared, filtered by type)

- index.ts: export background/taskHub.js; drop memory/memoryTaskHub.js

* test(background): add BackgroundTaskHub unit tests and hub isolation checks

- background/taskHub.test.ts (11 tests):

- createScheduler(): tasks registered via scheduler appear in hub registry;

multiple calls return distinct scheduler instances

- listByType(): filters by taskType, filters by projectRoot, returns []

for unknown types, two types co-exist in registry but stay separated

- drain(): resolves false on timeout, resolves true when tasks complete,

resolves true immediately when no tasks in flight

- isolation: tasks in hubA do not appear in hubB

- globalBackgroundTaskHub: is a BackgroundTaskHub instance with registry/drainer

- extractScheduler.test.ts (+1 test):

- factory-created runtimes have isolated registries; tasks in runtimeA

are invisible to runtimeB; all tasks carry EXTRACT_TASK_TYPE

- dreamScheduler.test.ts (+1 test):

- factory-created runtimes have isolated registries; tasks in runtimeA

are invisible to runtimeB; all tasks carry DREAM_TASK_TYPE

* refactor(memory): consolidate all memory state into MemoryManager

Replace BackgroundTaskRegistry/Drainer/Scheduler/Hub helper classes and

module-level globals with a single MemoryManager class owned by Config.

## Changes

### New

- packages/core/src/memory/manager.ts — MemoryManager with:

- scheduleExtract / scheduleDream (inline queuing + deduplication logic)

- recall / forget / selectForgetCandidates / forgetMatches

- getStatus / drain / appendToUserMemory

- subscribe(listener) compatible with useSyncExternalStore

- storeWith() atomic record registration (no double-notify)

- Distinct skippedReason 'scan_throttled' vs 'min_sessions' for dream

- packages/core/src/utils/forkedAgent.ts — pure cache util (moved from background/)

- packages/core/src/utils/sideQuery.ts — pure util (moved from auxiliary/)

### Deleted

- background/taskRegistry, taskDrainer, taskScheduler, taskHub and all tests

- background/forkedAgent (moved to utils/)

- auxiliary/sideQuery (moved to utils/)

- memory/extractScheduler, dreamScheduler, state and all tests

### Modified

- config/config.ts — Config owns MemoryManager instance; getMemoryManager()

- core/client.ts — all memory ops via config.getMemoryManager()

- core/client.test.ts — mock MemoryManager instead of individual modules

- memory/status.ts — accepts MemoryManager param, drops globalBackgroundTaskHub

- index.ts — memory exports reduced from 14 modules to 5 (manager/types/paths/store/const)

- cli/commands/dreamCommand.ts — via config.getMemoryManager()

- cli/commands/forgetCommand.ts — via config.getMemoryManager()

- cli/components/Footer.tsx — useSyncExternalStore replacing setInterval polling

- cli/components/Footer.test.tsx — add getMemoryManager mock
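The subscribe(listener) contract mentioned above can be illustrated with a tiny store. `TinyMemoryStore` and `MemoryStatus` are hypothetical and much smaller than the MemoryManager described in this commit; the sketch only shows the subscribe/getSnapshot pair that React's useSyncExternalStore expects.

```typescript
type Listener = () => void;

interface MemoryStatus {
  dreamRunning: boolean;
}

// Hypothetical miniature store, not the real MemoryManager.
class TinyMemoryStore {
  private listeners = new Set<Listener>();
  private status: MemoryStatus = { dreamRunning: false };

  // Returns an unsubscribe function, as useSyncExternalStore requires.
  subscribe = (listener: Listener): (() => void) => {
    this.listeners.add(listener);
    return () => {
      this.listeners.delete(listener);
    };
  };

  // Returns the same reference until the state actually changes.
  getSnapshot = (): MemoryStatus => this.status;

  setDreamRunning(running: boolean): void {
    if (this.status.dreamRunning === running) return; // no change, no notify
    this.status = { dreamRunning: running };
    this.listeners.forEach((listener) => listener());
  }
}

const store = new TinyMemoryStore();
let notifications = 0;
const unsubscribe = store.subscribe(() => {
  notifications += 1;
});

store.setDreamRunning(true);
store.setDreamRunning(true); // duplicate, ignored
unsubscribe();
store.setDreamRunning(false); // no listener left to notify
```

In a component, such a store would be read with `useSyncExternalStore(store.subscribe, store.getSnapshot)`, which re-renders only when a listener fires and avoids interval polling.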


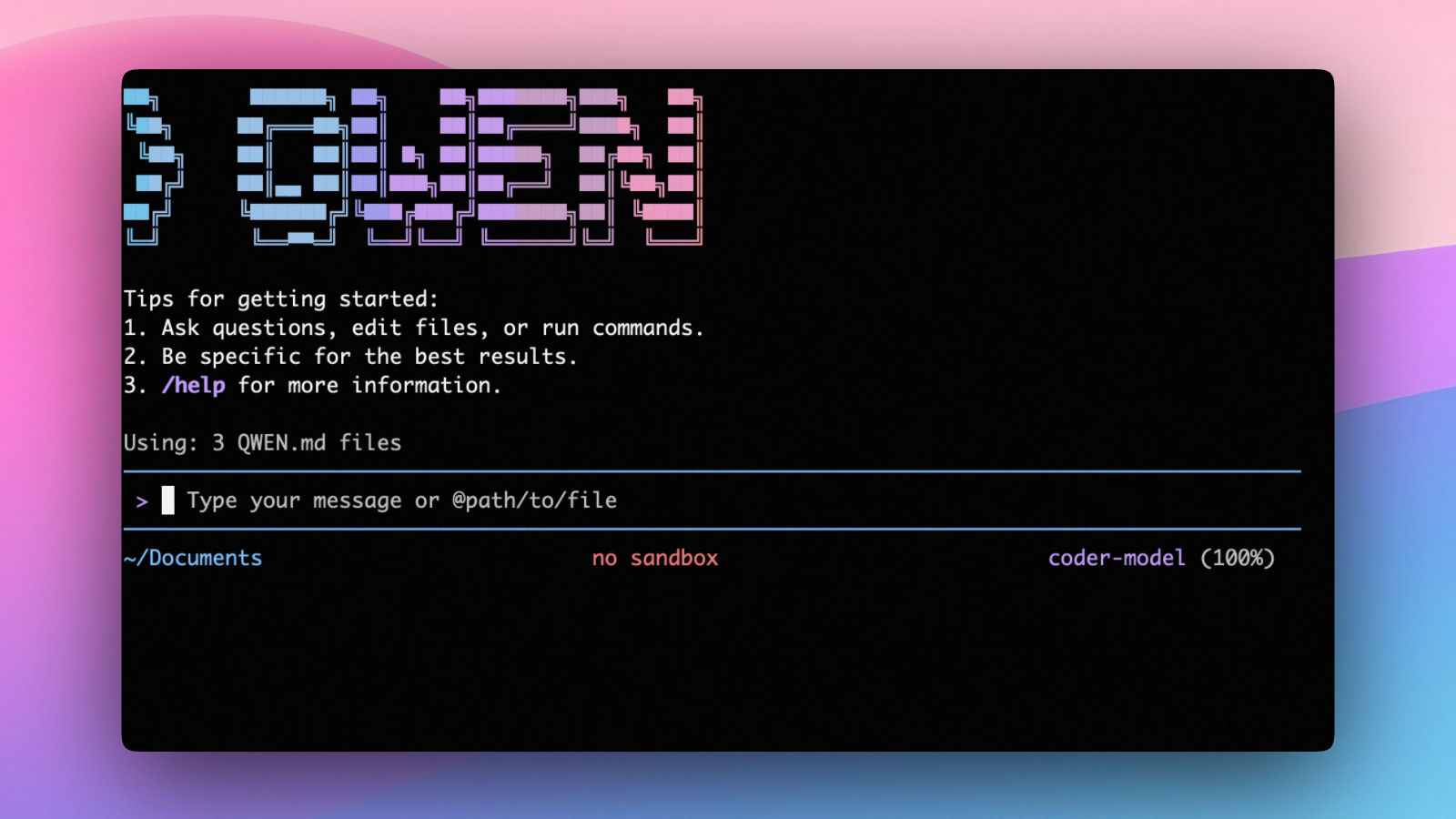

An open-source AI agent that lives in your terminal.

中文 | Deutsch | français | 日本語 | Русский | Português (Brasil)

🎉 News

- 2026-04-15: Qwen OAuth free tier has been discontinued. To continue using Qwen Code, switch to Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key. Run qwen auth to configure.

- 2026-04-13: Qwen OAuth free tier policy update: daily quota adjusted to 100 requests/day (from 1,000).

- 2026-04-02: Qwen3.6-Plus is now live! Sign in via Qwen OAuth to use it directly, or get an API key from Alibaba Cloud ModelStudio to access it through the OpenAI-compatible API.

- 2026-02-16: Qwen3.5-Plus is now live!

Why Qwen Code?

Qwen Code is an open-source AI agent for the terminal, optimized for Qwen series models. It helps you understand large codebases, automate tedious work, and ship faster.

- Multi-protocol, flexible providers: use OpenAI / Anthropic / Gemini-compatible APIs, Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key.

- Open-source, co-evolving: both the framework and the Qwen3-Coder model are open-source—and they ship and evolve together.

- Agentic workflow, feature-rich: rich built-in tools (Skills, SubAgents) for a full agentic workflow and a Claude Code-like experience.

- Terminal-first, IDE-friendly: built for developers who live in the command line, with optional integration for VS Code, Zed, and JetBrains IDEs.

Installation

Quick Install (Recommended)

Linux / macOS

```bash
bash -c "$(curl -fsSL https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.sh)"
```

Windows (Run as Administrator CMD)

```cmd
curl -fsSL -o %TEMP%\install-qwen.bat https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.bat && %TEMP%\install-qwen.bat
```

Note: It's recommended to restart your terminal after installation to ensure environment variables take effect.

Manual Installation

Prerequisites

Make sure you have Node.js 20 or later installed. Download it from nodejs.org.

NPM

```bash
npm install -g @qwen-code/qwen-code@latest
```

Homebrew (macOS, Linux)

```bash
brew install qwen-code
```

Quick Start

```bash
# Start Qwen Code (interactive)
qwen

# Then, in the session:
/help
/auth
```

On first use, you'll be prompted to sign in. You can run /auth anytime to switch authentication methods.

Example prompts:

What does this project do?

Explain the codebase structure.

Help me refactor this function.

Generate unit tests for this module.

Click to watch a demo video

🦞 Use Qwen Code for Coding Tasks in Claw

Copy the prompt below and paste it into your agent:

Use Qwen Code as a coding agent. First, fetch and read this skill reference:

https://raw.githubusercontent.com/QwenLM/qwen-code/refs/heads/main/.qwen/skills/qwen-code-claw/SKILL.md

Then follow the instructions inside to install, authenticate, and use Qwen Code via acpx for all coding tasks.

Authentication

Qwen Code supports two authentication methods:

- Qwen OAuth (recommended & free): sign in with your qwen.ai account in a browser.

- API-KEY: use an API key to connect to any supported provider (OpenAI, Anthropic, Google GenAI, Alibaba Cloud ModelStudio, and other compatible endpoints).

Qwen OAuth (recommended)

Start qwen, then run:

/auth

Choose Qwen OAuth and complete the browser flow. Your credentials are cached locally so you usually won't need to log in again.

Note: In non-interactive or headless environments (e.g., CI, SSH, containers), you typically cannot complete the OAuth browser login flow. In these cases, please use the API-KEY authentication method.

API-KEY (flexible)

Use this if you want more flexibility over which provider and model to use. Supports multiple protocols:

- OpenAI-compatible: Alibaba Cloud ModelStudio, ModelScope, OpenAI, OpenRouter, and other OpenAI-compatible providers

- Anthropic: Claude models

- Google GenAI: Gemini models

The recommended way to configure models and providers is by editing ~/.qwen/settings.json (create it if it doesn't exist). This file lets you define all available models, API keys, and default settings in one place.

Quick Setup in 3 Steps

Step 1: Create or edit ~/.qwen/settings.json

Here is a complete example:

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "description": "Qwen3-Coder via Dashscope",
        "envKey": "DASHSCOPE_API_KEY"
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}
```

Step 2: Understand each field

| Field | What it does |
|---|---|
| modelProviders | Declares which models are available and how to connect to them. Keys like openai, anthropic, gemini represent the API protocol. |
| modelProviders[].id | The model ID sent to the API (e.g. qwen3.6-plus, gpt-4o). |
| modelProviders[].envKey | The name of the environment variable that holds your API key. |
| modelProviders[].baseUrl | The API endpoint URL (required for non-default endpoints). |
| env | A fallback place to store API keys (lowest priority; prefer .env files or export for sensitive keys). |
| security.auth.selectedType | The protocol to use on startup (openai, anthropic, gemini, vertex-ai). |
| model.name | The default model to use when Qwen Code starts. |

Step 3: Start Qwen Code — your configuration takes effect automatically:

```bash
qwen
```

Use the /model command at any time to switch between all configured models.

More Examples

Coding Plan (Alibaba Cloud ModelStudio) — fixed monthly fee, higher quotas

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.6-plus from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY"
      },
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.5-plus with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "glm-4.7",
        "name": "glm-4.7 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "glm-4.7 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "kimi-k2.5",
        "name": "kimi-k2.5 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "kimi-k2.5 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "BAILIAN_CODING_PLAN_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}
```

Subscribe to the Coding Plan and get your API key at Alibaba Cloud ModelStudio (Beijing) or Alibaba Cloud ModelStudio (intl).

Multiple providers (OpenAI + Anthropic + Gemini)

{
  "modelProviders": {
    "openai": [
      {
        "id": "gpt-4o",
        "name": "GPT-4o",
        "envKey": "OPENAI_API_KEY",
        "baseUrl": "https://api.openai.com/v1"
      }
    ],
    "anthropic": [
      {
        "id": "claude-sonnet-4-20250514",
        "name": "Claude Sonnet 4",
        "envKey": "ANTHROPIC_API_KEY"
      }
    ],
    "gemini": [
      {
        "id": "gemini-2.5-pro",
        "name": "Gemini 2.5 Pro",
        "envKey": "GEMINI_API_KEY"
      }
    ]
  },
  "env": {
    "OPENAI_API_KEY": "sk-xxxxxxxxxxxxx",
    "ANTHROPIC_API_KEY": "sk-ant-xxxxxxxxxxxxx",
    "GEMINI_API_KEY": "AIzaxxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "gpt-4o"
  }
}
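The fields in these examples depend on each other: security.auth.selectedType names a key of modelProviders, model.name matches the id of an entry under that key, and each envKey needs a value from somewhere. The following is a minimal sketch of a consistency check over those relationships. The `Settings` and `ModelEntry` types and the `checkSettings` function are hypothetical names invented for this illustration, and the rule that model.name refers to an entry's id is inferred from the examples above, not taken from the Qwen Code source.

```typescript
// Hypothetical types mirroring only the settings.json fields shown above.
interface ModelEntry {
  id: string;
  name?: string;
  envKey?: string;
  baseUrl?: string;
}

interface Settings {
  modelProviders?: Record<string, ModelEntry[]>;
  env?: Record<string, string>;
  security?: { auth?: { selectedType?: string } };
  model?: { name?: string };
}

// Returns a list of human-readable problems. An empty list means the startup
// protocol, the default model, and the key names are mutually consistent.
// `shellEnv` stands in for variables exported in the shell or a .env file.
function checkSettings(
  settings: Settings,
  shellEnv: Record<string, string | undefined> = {},
): string[] {
  const problems: string[] = [];
  const providers = settings.modelProviders ?? {};
  const protocol = settings.security?.auth?.selectedType;
  const defaultModel = settings.model?.name;

  // The startup protocol must be a key of modelProviders.
  const startupModels = protocol ? providers[protocol] : undefined;
  if (!startupModels) {
    problems.push(`selectedType "${protocol}" has no entry in modelProviders`);
  } else if (defaultModel && !startupModels.some((m) => m.id === defaultModel)) {
    // Assumption: model.name refers to the "id" of an entry, as in the examples.
    problems.push(`model.name "${defaultModel}" is not listed under "${protocol}"`);
  }

  // Every envKey should resolve to a value in at least one place.
  for (const [name, models] of Object.entries(providers)) {
    for (const m of models) {
      const key = m.envKey;
      if (key && !settings.env?.[key] && !shellEnv[key]) {
        problems.push(`${name}/${m.id}: no value found for ${key}`);
      }
    }
  }
  return problems;
}
```

Running a check like this on a parsed settings.json before starting a session can catch a mistyped model id or a missing key early.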

Enable thinking mode (for supported models like qwen3.5-plus)

{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (thinking)",
        "envKey": "DASHSCOPE_API_KEY",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.5-plus"
  }
}
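To make the role of extra_body more concrete, here is a rough sketch of how such a block could end up in an OpenAI-compatible chat request. It assumes that fields under extra_body are copied to the top level of the JSON payload, which is how the `extra_body` option of the OpenAI Python client behaves; whether Qwen Code does exactly this is an assumption. `buildRequestBody`, `ChatMessage`, and `GenerationConfig` are hypothetical names used only for this illustration.

```typescript
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

// Hypothetical shape of the generationConfig block from the example above.
interface GenerationConfig {
  extra_body?: Record<string, unknown>;
}

// Builds the JSON body for an OpenAI-compatible chat completion request.
// Assumption: extra_body fields are merged into the top level of the payload.
// They are spread first, so they cannot overwrite `model` or `messages`.
function buildRequestBody(
  modelId: string,
  messages: ChatMessage[],
  config: GenerationConfig = {},
): Record<string, unknown> {
  return {
    ...(config.extra_body ?? {}),
    model: modelId,
    messages,
  };
}

const body = buildRequestBody(
  "qwen3.5-plus",
  [{ role: "user", content: "Explain this stack trace." }],
  { extra_body: { enable_thinking: true } },
);
console.log(JSON.stringify(body, null, 2));
```

Under this reading, enable_thinking travels alongside model and messages as an ordinary request field, so a model entry without generationConfig simply produces a request without it.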

Tip: You can also set API keys via export in your shell or .env files, which take higher priority than settings.json → env. See the authentication guide for full details.
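The priority rule in this tip can be sketched as a simple ordered lookup. The README only states that shell exports and .env files both outrank the env block of settings.json; the relative order of shell exports and .env files used below is an assumption, and `resolveApiKey` is a hypothetical helper, not a function from the Qwen Code codebase.

```typescript
type EnvMap = Record<string, string | undefined>;

interface ResolvedKey {
  value: string;
  source: string;
}

// Looks up `envKey` in each source, highest priority first, and reports
// which source supplied the value.
// Assumption: shell exports are checked before .env values.
function resolveApiKey(
  envKey: string,
  shellEnv: EnvMap,
  dotEnv: EnvMap,
  settingsEnv: EnvMap,
): ResolvedKey | undefined {
  const sources: Array<[string, EnvMap]> = [
    ["shell export", shellEnv],
    [".env file", dotEnv],
    ["settings.json env", settingsEnv], // fallback, lowest priority
  ];
  for (const [source, map] of sources) {
    const value = map[envKey];
    if (value) return { value, source };
  }
  return undefined;
}

// The settings.json value is used only when nothing else defines the key.
const resolved = resolveApiKey(
  "DASHSCOPE_API_KEY",
  {},
  { DASHSCOPE_API_KEY: "sk-from-dotenv" },
  { DASHSCOPE_API_KEY: "sk-from-settings" },
);
console.log(resolved?.source);
```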

Security note: Never commit API keys to version control. The ~/.qwen/settings.json file is in your home directory and should stay private.

Usage

Qwen Code is an open-source terminal agent that you can use in four primary ways:

- Interactive mode (terminal UI)

- Headless mode (scripts, CI)

- IDE integration (VS Code, Zed, JetBrains IDEs)

- TypeScript SDK

Interactive mode

cd your-project/

qwen

Run qwen in your project folder to launch the interactive terminal UI. Use @ to reference local files (for example @src/main.ts).

Headless mode

cd your-project/

qwen -p "your question"

Use -p to run Qwen Code without the interactive UI—ideal for scripts, automation, and CI/CD. Learn more: Headless mode.
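For scripted use, headless mode can be wrapped in a small helper. The sketch below uses Node's built-in `spawnSync` to call `qwen -p`; it assumes that `qwen` is on the PATH and that the answer is written to stdout. `headlessArgs` and `askQwen` are hypothetical helper names, not part of Qwen Code or its SDK.

```typescript
import { spawnSync } from "node:child_process";

// Builds the argument list for a headless run; -p is the flag shown above.
function headlessArgs(prompt: string): string[] {
  return ["-p", prompt];
}

// Runs `qwen -p <prompt>` inside `cwd` and returns whatever it wrote to stdout.
// Assumptions: `qwen` is on PATH and a non-zero exit status signals failure.
function askQwen(prompt: string, cwd: string): string {
  const result = spawnSync("qwen", headlessArgs(prompt), {
    cwd,
    encoding: "utf8",
  });
  if (result.error) throw result.error; // e.g. the binary was not found
  if (result.status !== 0) {
    throw new Error(`qwen exited with status ${result.status}: ${result.stderr}`);
  }
  return result.stdout;
}
```

A CI step could then call `askQwen("Summarize the changes in this branch", process.cwd())`. Passing the prompt as an array element rather than building a shell string avoids quoting problems when the prompt contains spaces or quotes.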

IDE integration

Use Qwen Code inside your editor (VS Code, Zed, and JetBrains IDEs).

TypeScript SDK

Build on top of Qwen Code with the TypeScript SDK.

Commands & Shortcuts

Session Commands

- /help - Display available commands

- /clear - Clear conversation history

- /compress - Compress history to save tokens

- /stats - Show current session information

- /bug - Submit a bug report

- /exit or /quit - Exit Qwen Code

Keyboard Shortcuts

- Ctrl+C - Cancel current operation

- Ctrl+D - Exit (on empty line)

- Up/Down - Navigate command history

Learn more about Commands

Tip: In YOLO mode (--yolo), vision switching happens automatically without prompts when images are detected. Learn more about Approval Mode.

Configuration

Qwen Code can be configured via settings.json, environment variables, and CLI flags.

| File | Scope | Description |
|---|---|---|
| ~/.qwen/settings.json | User (global) | Applies to all your Qwen Code sessions. Recommended for modelProviders and env. |
| .qwen/settings.json | Project | Applies only when running Qwen Code in this project. Overrides user settings. |
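The override relationship between the two files can be pictured as an overlay of the project file on top of the user file. The sketch below uses a recursive merge in which nested objects are combined and everything else is replaced by the project value. This is only one plausible reading of "overrides user settings": the actual merge rules, especially for arrays such as the model lists, are an assumption here, and `mergeSettings` is a hypothetical function.

```typescript
type Json = string | number | boolean | null | Json[] | { [key: string]: Json };
type JsonObject = { [key: string]: Json };

function isObject(value: Json | undefined): value is JsonObject {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// Recursively overlays `project` on `user`: nested objects are merged, while
// scalars and arrays from the project file replace the user value outright.
// Assumption: the real merge behaviour may differ, particularly for arrays.
function mergeSettings(user: JsonObject, project: JsonObject): JsonObject {
  const merged: JsonObject = { ...user };
  for (const [key, value] of Object.entries(project)) {
    const base = merged[key];
    merged[key] =
      isObject(base) && isObject(value) ? mergeSettings(base, value) : value;
  }
  return merged;
}

// ~/.qwen/settings.json supplies keys and a default model;
// .qwen/settings.json in the project changes only the default model.
const userSettings: JsonObject = {
  env: { OPENAI_API_KEY: "sk-xxxxxxxxxxxxx" },
  model: { name: "gpt-4o" },
};
const projectSettings: JsonObject = { model: { name: "qwen3.5-plus" } };
console.log(JSON.stringify(mergeSettings(userSettings, projectSettings)));
```

With this approach a project file only needs to list the fields it wants to change, and keys defined globally stay available.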

The most commonly used top-level fields in settings.json:

| Field | Description |
|---|---|
| modelProviders | Define available models per protocol (openai, anthropic, gemini, vertex-ai). |
| env | Fallback environment variables (e.g. API keys). Lower priority than shell export and .env files. |
| security.auth.selectedType | The protocol to use on startup (e.g. openai). |
| model.name | The default model to use when Qwen Code starts. |

See the Authentication section above for complete settings.json examples, and the settings reference for all available options.

Benchmark Results

Terminal-Bench Performance

| Agent | Model | Accuracy |
|---|---|---|
| Qwen Code | Qwen3-Coder-480B-A35B | 37.5% |
| Qwen Code | Qwen3-Coder-30B-A3B | 31.3% |

Ecosystem

Looking for a graphical interface?

- AionUi: A modern GUI for command-line AI tools, including Qwen Code

- Gemini CLI Desktop: A cross-platform desktop/web/mobile UI for Qwen Code

Troubleshooting

If you encounter issues, check the troubleshooting guide.

To report a bug from within the CLI, run /bug and include a short title and repro steps.

Connect with Us

- Discord: https://discord.gg/RN7tqZCeDK

- DingTalk: https://qr.dingtalk.com/action/joingroup?code=v1,k1,+FX6Gf/ZDlTahTIRi8AEQhIaBlqykA0j+eBKKdhLeAE=&_dt_no_comment=1&origin=1

Acknowledgments

This project is based on Google Gemini CLI. We acknowledge and appreciate the excellent work of the Gemini CLI team. Our main contribution focuses on parser-level adaptations to better support Qwen-Coder models.