|


* feat(cli): add dual-output sidecar mode for TUI

Adds an optional **dual-output** mode for the interactive TUI: while Qwen

Code keeps rendering normally on stdout, it concurrently emits a structured

JSON event stream on a second channel (--json-fd / --json-file) and

optionally watches a JSONL command file (--input-file) for prompts and

tool-permission responses written by an external program.

This unlocks programmatic embedding of the TUI from IDE extensions, web

frontends, CI agents, or automation scripts without forcing them to give

up the rich interactive UI in favor of --output-format=stream-json.

## Design

The TUI already has a battle-tested JSON event emitter

(`StreamJsonOutputAdapter`). This change makes that adapter pluggable on

its output stream and wires a small `DualOutputBridge` that forwards TUI

events to a second instance of the adapter writing to fd / file.

For tool approvals, when a tool enters awaiting_approval the bridge emits

`control_request` (subtype `can_use_tool`); whichever side resolves first

(TUI's native UI or `confirmation_response` via --input-file) wins, and a

`control_response` is mirrored back so all observers stay in sync.

`session_start` is announced once when the bridge is constructed so

consumers can correlate the channel with a session before any other event

arrives.

## CLI surface

- `--json-fd <n>` — write JSON events to fd n (n >= 3; provided via spawn

stdio).

- `--json-file <path>` — write JSON events to a file / FIFO / /dev/fd/N.

- `--input-file <path>` — watch this file for JSONL commands.

`--json-fd` and `--json-file` are mutually exclusive. fds 0/1/2 are

rejected to prevent corrupting the TUI.
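The flag rules above can be sketched as a small validator. This is an illustration only, not the code in `packages/cli/src/config/config.ts`; the `DualOutputFlags` type and the `validateDualOutputFlags` function are hypothetical names, and the real option parsing may differ.

```typescript
// Hypothetical shape of the parsed flags; the real yargs option names may differ.
interface DualOutputFlags {
  jsonFd?: number;
  jsonFile?: string;
  inputFile?: string;
}

// Returns an error message when the combination is invalid, or null when it is fine.
function validateDualOutputFlags(flags: DualOutputFlags): string | null {
  if (flags.jsonFd !== undefined && flags.jsonFile !== undefined) {
    return "--json-fd and --json-file are mutually exclusive";
  }
  if (flags.jsonFd !== undefined) {
    // fds 0, 1 and 2 are stdin, stdout and stderr, which the TUI itself uses.
    if (!Number.isInteger(flags.jsonFd) || flags.jsonFd < 3) {
      return "--json-fd must be an integer >= 3";
    }
  }
  return null;
}
```

Returning a message instead of throwing keeps the sketch in line with the failure handling described later, where a bad flag produces a warning and the TUI still launches.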

## Wire protocol

Output: existing stream-json schema with `includePartialMessages` always

enabled, plus:

- `system` / `subtype: session_start` — emitted once on bridge

construction.

- `control_request` / `subtype: can_use_tool` — pending tool approval.

- `control_response` — final approval outcome (mirrors TUI-native or

external resolution).

Input (--input-file):

{"type":"submit","text":"What does this function do?"}

{"type":"confirmation_response","request_id":"...","allowed":true}

`submit` is queued and retried when the TUI returns to idle.

`confirmation_response` is dispatched immediately — a pending tool call

is blocking and the response cannot wait behind earlier submits.
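A minimal sketch of how a reader might parse these JSONL commands and apply the ordering rule above. The `RemoteCommand` type, `parseCommand`, and `routeCommands` are hypothetical names for illustration; the actual `RemoteInputWatcher` may be structured differently.

```typescript
// The two command shapes shown in the input examples above.
type RemoteCommand =
  | { type: "submit"; text: string }
  | { type: "confirmation_response"; request_id: string; allowed: boolean };

// Parses one JSONL line. Blank or malformed lines yield null so that a bad
// line does not stop the reader.
function parseCommand(line: string): RemoteCommand | null {
  const trimmed = line.trim();
  if (trimmed === "") return null;
  let value: unknown;
  try {
    value = JSON.parse(trimmed);
  } catch {
    return null;
  }
  if (typeof value !== "object" || value === null) return null;
  const obj = value as Record<string, unknown>;
  if (obj.type === "submit" && typeof obj.text === "string") {
    return { type: "submit", text: obj.text };
  }
  if (
    obj.type === "confirmation_response" &&
    typeof obj.request_id === "string" &&
    typeof obj.allowed === "boolean"
  ) {
    return { type: "confirmation_response", request_id: obj.request_id, allowed: obj.allowed };
  }
  return null;
}

// Confirmations are handled right away; submits wait until the TUI is idle.
function routeCommands(lines: string[]): { immediate: RemoteCommand[]; queued: RemoteCommand[] } {
  const immediate: RemoteCommand[] = [];
  const queued: RemoteCommand[] = [];
  for (const line of lines) {
    const cmd = parseCommand(line);
    if (cmd === null) continue;
    if (cmd.type === "confirmation_response") immediate.push(cmd);
    else queued.push(cmd);
  }
  return { immediate, queued };
}
```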

See `docs/users/features/dual-output.md` for the full schema, latency

notes, failure modes, and a spawn example.

## What changes when the flags are absent

Nothing. The bridge and watcher are constructed only when the relevant

flags are set; otherwise the React Context providers carry `null` and

every callsite short-circuits. No overhead, no behavioral change for

existing users.

## Failure handling

- Bad fd / unopenable path → warning on stderr, dual output stays

disabled, TUI launches normally.

- Consumer disconnect (EPIPE) → bridge silently disables itself, TUI

keeps running.

- Any exception inside the adapter → caught, logged, bridge disabled.

The TUI is never crashed by a dual-output failure.
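The "disable instead of crash" behavior can be sketched as a wrapper that turns itself off after the first failed write. `SafeSink` is a hypothetical name and not the `DualOutputBridge` implementation.

```typescript
// Wraps a write function. After the first failure the sink disables itself,
// so the host application keeps running.
class SafeSink {
  private disabled = false;
  private readonly write: (line: string) => void;
  private readonly log: (message: string) => void;

  constructor(write: (line: string) => void, log: (message: string) => void = () => {}) {
    this.write = write;
    this.log = log;
  }

  // Returns true when the event was written, false when it was dropped.
  emit(event: unknown): boolean {
    if (this.disabled) return false;
    try {
      this.write(JSON.stringify(event) + "\n");
      return true;
    } catch (err) {
      this.disabled = true;
      this.log(`dual output disabled: ${String(err)}`);
      return false;
    }
  }

  get isDisabled(): boolean {
    return this.disabled;
  }
}
```

Node.js streams usually report EPIPE through an `error` event rather than a thrown exception, so a real sink would also need an error listener that sets the same flag.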

## Files

New:

- packages/cli/src/dualOutput/{DualOutputBridge,DualOutputContext,index}.{ts,tsx}

- packages/cli/src/remoteInput/{RemoteInputWatcher,RemoteInputContext,index}.{ts,tsx}

- packages/cli/src/nonInteractive/io/index.ts

- docs/users/features/dual-output.md

Modified:

- packages/core/src/config/config.ts — 3 new ConfigParameters fields + getters

- packages/cli/src/config/config.ts — yargs options + mutex validation

- packages/cli/src/gemini.tsx — instantiate bridge / watcher in

startInteractiveUI, wrap with Context Providers, register cleanup

- packages/cli/src/ui/AppContainer.tsx — connect RemoteInput to

submitQuery, bridge tool confirmations

- packages/cli/src/ui/hooks/useGeminiStream.ts — call

dualOutput?.processEvent(...) at five existing event points

- packages/cli/src/nonInteractive/io/{Base,Stream}JsonOutputAdapter.ts —

StreamJsonOutputAdapter accepts an injected output stream; base adapter

exposes emitPermissionRequest / emitControlResponse through a new

emitControlMessageImpl hook (default no-op in batch mode).

## Tests

- packages/cli/src/dualOutput/DualOutputBridge.test.ts — fd validation,

auto session_start, control-event routing, post-shutdown safety.

- packages/cli/src/remoteInput/RemoteInputWatcher.test.ts — submit

forwarding, immediate confirmation dispatch, busy/idle retry,

malformed-line tolerance, shutdown.

- packages/cli/src/nonInteractive/io/StreamJsonOutputAdapter.dualOutput.test.ts —

custom outputStream injection and new emitPermissionRequest /

emitControlResponse paths.

tsc --noEmit -p packages/cli/tsconfig.json is clean.

vitest run src/nonInteractive src/dualOutput src/remoteInput → 297 passed,

1 skipped, 11 files.

* feat(cli): dual-output capability handshake, session_end, control_error, settings.json

Incremental improvements on top of the initial dual-output PR based on

reviewer feedback. All extensions are additive; older consumers that

ignore unknown fields keep working.

## Capability handshake in session_start

`session_start.data` now carries three new fields so consumers can

feature-detect without sniffing the stream:

- `protocol_version` (integer, currently 1) — bumped on any protocol

change consumers might care about.

- `version` (string) — the Qwen Code CLI version, threaded in from

`gemini.tsx`.

- `supported_events` (string[]) — the event kinds this bridge version

is known to emit, exported as `SUPPORTED_EVENTS` from the module.
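On the consumer side, feature detection against these fields might look like the sketch below. The `SessionStartData` interface only mirrors the three fields listed above; the helper names and the sample event kind passed in are assumptions, not part of the documented schema.

```typescript
// Assumed shape of session_start.data, based on the field list above.
interface SessionStartData {
  protocol_version?: number;
  version?: string;
  supported_events?: string[];
}

// True when the producer advertises the given event kind. Producers that send
// no handshake fields are treated as not supporting it.
function supportsEvent(data: SessionStartData, kind: string): boolean {
  return Array.isArray(data.supported_events) && data.supported_events.indexOf(kind) !== -1;
}

// True when the producer's protocol version is one this consumer understands.
function isCompatible(data: SessionStartData, maxKnownVersion: number): boolean {
  return typeof data.protocol_version === "number" && data.protocol_version <= maxKnownVersion;
}
```

A consumer could, for example, fall back to EPIPE detection when `supportsEvent(data, "session_end")` is false, assuming that string is how the event kind is spelled in `supported_events`.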

## session_end on bridge shutdown

DualOutputBridge.shutdown() now emits a final

`system` / `session_end` event carrying `session_id` before closing the

stream. Gives consumers a definitive termination signal rather than

requiring them to infer it from EPIPE. Idempotent — calling shutdown

twice emits exactly one session_end.
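The idempotency guarantee can be sketched with a single flag. `ShutdownOnce` is a hypothetical name, and the exact JSON layout of the `session_end` event shown here is an assumption.

```typescript
// Assumed layout of the final event; only the field names come from the text above.
interface SessionEndEvent {
  type: "system";
  subtype: "session_end";
  session_id: string;
}

class ShutdownOnce {
  private closed = false;
  private readonly sessionId: string;
  private readonly write: (event: SessionEndEvent) => void;

  constructor(sessionId: string, write: (event: SessionEndEvent) => void) {
    this.sessionId = sessionId;
    this.write = write;
  }

  // Emits session_end on the first call and does nothing on later calls.
  shutdown(): void {
    if (this.closed) return;
    this.closed = true;
    this.write({ type: "system", subtype: "session_end", session_id: this.sessionId });
  }
}
```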

## control_error emission path

`ControlErrorResponse` (already defined in types.ts) now has a first-

class emission path: `BaseJsonOutputAdapter.emitControlError(requestId,

message)` → `control_response` with `subtype: 'error'`. Wired into

AppContainer's remote-input confirmation handler so that a

`confirmation_response` referencing an unknown / already-resolved

request_id produces a structured error reply instead of silently

dropping, letting consumers retry or surface the error.

## settings.json support

New `dualOutput` top-level settings block with `jsonFile` and

`inputFile` properties. `--json-fd` has no settings equivalent (fd

passing is a spawn-time concern). CLI flag wins over settings when

both are present, so scripted one-off runs still work unchanged.

`requiresRestart: true` since the bridge is constructed once at

startup.
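The precedence rule can be sketched as follows. The interface and function names are hypothetical, and how `--json-fd` interacts with a `jsonFile` value from settings is not covered here because the text above does not specify it.

```typescript
// Values from the hypothetical `dualOutput` settings block.
interface DualOutputSettings {
  jsonFile?: string;
  inputFile?: string;
}

// Values parsed from the command line.
interface DualOutputCliFlags {
  jsonFile?: string;
  inputFile?: string;
}

// A CLI flag wins whenever it is present; settings fill in the rest.
function resolveDualOutput(flags: DualOutputCliFlags, settings: DualOutputSettings): DualOutputSettings {
  return {
    jsonFile: flags.jsonFile ?? settings.jsonFile,
    inputFile: flags.inputFile ?? settings.inputFile,
  };
}
```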

## Documentation

`docs/users/features/dual-output.md` gains five major sections:

- **Use cases** — concrete integration scenarios (terminal+chat dual

sync, IDE extensions, web frontends, CI observers, multi-agent

orchestration, session replay, observability, QA).

- **Why two output flags?** — detailed rationale for coexisting

`--json-fd` and `--json-file`, including the PTY constraint

(`node-pty` / `bun-pty` expose no stdio array, and `forkpty(3)` /

`login_tty` actively close fds >= 3 before exec).

- **Comparison with Claude Code's stream-json** — schema-parity

matrix, transport-topology differences, permission-control-plane

behavioral notes, and a "room to improve" section as a design

horizon.

- **Runnable demos** — seven copy-paste POCs: event observer, remote

submit, permission bridge, Node embedder with capability

feature-detection, session_end handling, failure drills.

- **Settings-based configuration** — example settings.json snippet and

precedence rules.

## Tests

- DualOutputBridge.test.ts: new cases for capability handshake shape,

session_end on shutdown, shutdown idempotency, and emitControlError.

- StreamJsonOutputAdapter.dualOutput.test.ts: new case for

emitControlError at the adapter level.

302 passed, 1 skipped, 11 files. tsc --noEmit -p packages/cli is clean.

* docs(dual-output): shrink Claude Code comparison to one honest sentence

After actually reading the Claude Code source (src/cli/structuredIO.ts,

src/bridge/*, src/utils/messages/systemInit.ts), the previous

"Comparison with Claude Code's stream-json" section was overstated:

- Claude Code has no equivalent of TUI + sidecar running simultaneously.

Its stream-json only works with --print (non-interactive); the bridge

in src/bridge/* is Anthropic's own remote worker protocol, not a

local embedding surface.

- CC uses `system/init` (not `session_start`) and has no session_end in

the wire protocol, so the schema-parity table contained false ticks.

- Framing this PR as "parity with Claude Code" is therefore inaccurate;

it's filling a gap Claude Code does not address.

Replace the whole multi-section comparison (schema matrix, transport

table, permission notes, borrow list, roadmap) with a single sentence

stating the accurate relation: same event format in spirit, different

topology — CC's is non-interactive only.

* fix(cli): address review feedback on dual-output sidecar mode

- Fix control_response mirror: external-initiated confirmations now

emit control_response via the same mirror useEffect as TUI-native

resolutions, making the emission path symmetric for all observers.

- Fix ENOENT: --json-file with a non-existent path now falls back to

createWriteStream (auto-creates the file) instead of throwing.

- Fix race: add reading guard to RemoteInputWatcher.readNewLines()

preventing duplicate command processing on rapid appends.

- Refactor confirmationHandler to use refs (pendingToolCallsRef,

dualOutputRef) and register once (deps: [remoteInput]) to eliminate

teardown/re-registration churn.

- Add debug logging to shutdown bare catch for ops correlation.

- Add ENOENT fallback test case for DualOutputBridge.

- Regenerate settings.schema.json for dualOutput section.
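As an illustration of the reading guard mentioned in the list above, here is one common way to keep overlapping reads from processing the same lines twice. `GuardedReader` is a hypothetical name; the re-run flag, which picks up appends that arrive during a read, is an assumption and may not match `RemoteInputWatcher.readNewLines()`.

```typescript
class GuardedReader {
  private reading = false;
  private rerun = false;
  private readonly readOnce: () => Promise<void>;
  runs = 0; // number of times readOnce has been started

  constructor(readOnce: () => Promise<void>) {
    this.readOnce = readOnce;
  }

  // Safe to call on every file-change notification, however rapid.
  async trigger(): Promise<void> {
    if (this.reading) {
      // A read is already in flight; remember to read again afterwards.
      this.rerun = true;
      return;
    }
    this.reading = true;
    try {
      do {
        this.rerun = false;
        this.runs++;
        await this.readOnce();
      } while (this.rerun);
    } finally {
      this.reading = false;
    }
  }
}
```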

Generated with AI

Co-authored-by: Qwen-Coder <qwen-coder@alibabacloud.com>

* fix(cli): make RemoteInputWatcher poll interval configurable for CI reliability

RemoteInputWatcher.test.ts was timing out in CI (5s default) because

fs.watchFile's 500ms poll interval is unreliable under load. Fix:

- Accept optional `pollIntervalMs` in constructor (default 500ms).

- Tests use 100ms poll interval for faster feedback.

- Increase per-test timeout to 15s and waitFor timeout to 10s.

- Increase "TUI busy" wait from 800ms to 1500ms for CI headroom.

Generated with AI

Co-authored-by: Qwen-Coder <qwen-coder@alibabacloud.com>

* fix(cli): eliminate fs.watchFile timing dependency in RemoteInputWatcher tests

Tests were flaky across all CI platforms (macOS/ubuntu/windows) because

fs.watchFile polling (even at 100ms) is unreliable under CI load.

Fix: expose checkForNewInput() as a public method that directly triggers

file reading and returns a Promise. Tests now await it immediately after

writing to the input file: no polling, no timeouts, deterministic.

Also fixes:

- Windows ENOTEMPTY: add delay in afterEach before rmSync

- Add active check in readNewLines to respect shutdown state

- readNewLines now returns Promise<void> for awaitable reads

Generated with AI

Co-authored-by: Qwen-Coder <qwen-coder@alibabacloud.com>

---------

Co-authored-by: 秦奇 <gary.gq@alibaba-inc.com>

Co-authored-by: Qwen-Coder <qwen-coder@alibabacloud.com>

|

||

|---|---|---|

| .github | ||

| .husky | ||

| .qwen | ||

| .vscode | ||

| docs | ||

| docs-site | ||

| eslint-rules | ||

| integration-tests | ||

| packages | ||

| scripts | ||

| .dockerignore | ||

| .editorconfig | ||

| .gitattributes | ||

| .gitignore | ||

| .npmrc | ||

| .nvmrc | ||

| .prettierignore | ||

| .prettierrc.json | ||

| .yamllint.yml | ||

| AGENTS.md | ||

| CONTRIBUTING.md | ||

| Dockerfile | ||

| esbuild.config.js | ||

| eslint.config.js | ||

| LICENSE | ||

| Makefile | ||

| package-lock.json | ||

| package.json | ||

| README.md | ||

| SECURITY.md | ||

| tsconfig.json | ||

| vitest.config.ts | ||

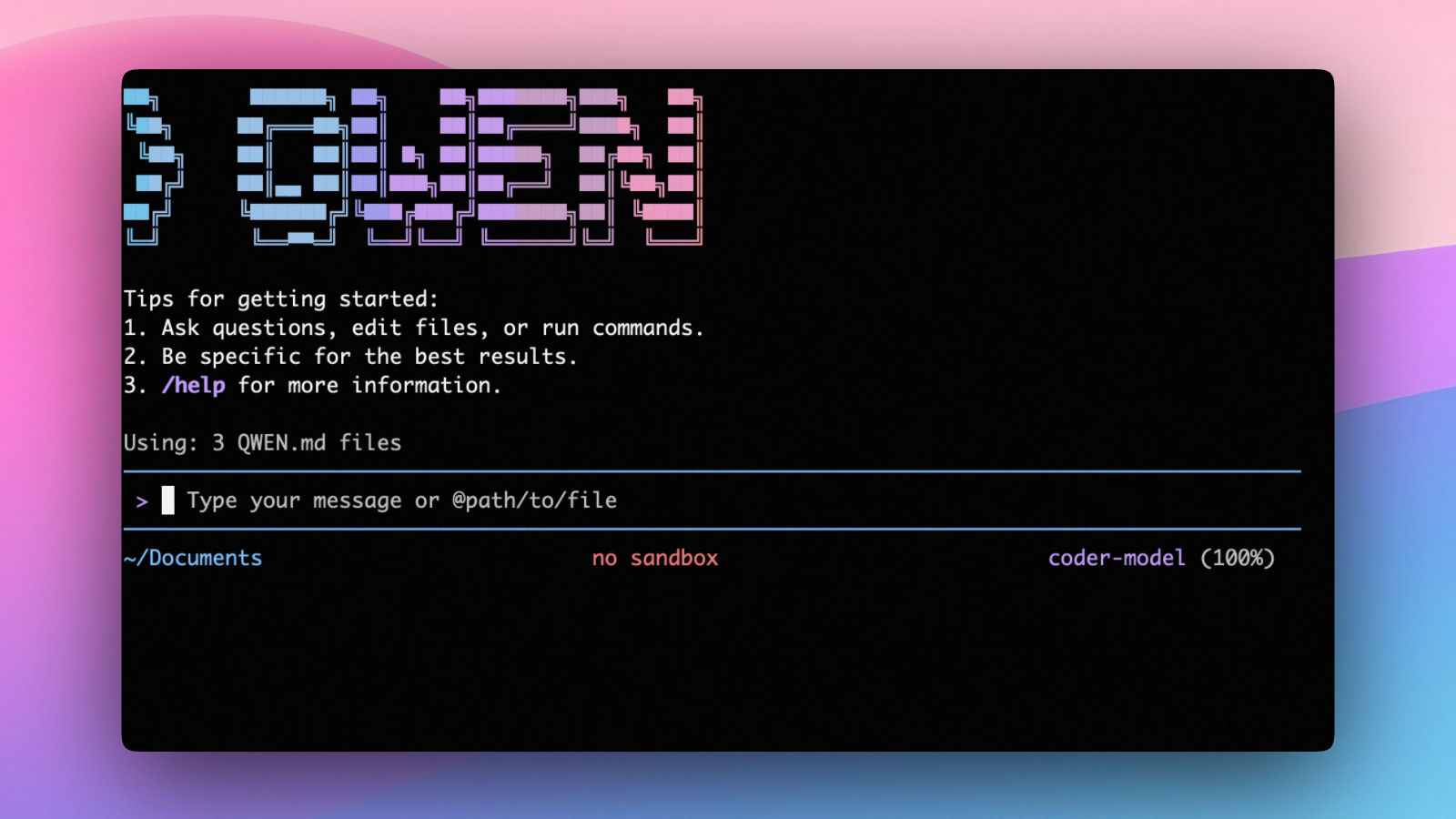

An open-source AI agent that lives in your terminal.

中文 | Deutsch | français | 日本語 | Русский | Português (Brasil)

🎉 News

- 2026-04-15: Qwen OAuth free tier has been discontinued. To continue using Qwen Code, switch to Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key. Run `qwen auth` to configure.

- 2026-04-13: Qwen OAuth free tier policy update: daily quota adjusted to 100 requests/day (from 1,000).

- 2026-04-02: Qwen3.6-Plus is now live! Get an API key from Alibaba Cloud ModelStudio to access it through the OpenAI-compatible API.

- 2026-02-16: Qwen3.5-Plus is now live!

Why Qwen Code?

Qwen Code is an open-source AI agent for the terminal, optimized for Qwen series models. It helps you understand large codebases, automate tedious work, and ship faster.

- Multi-protocol, flexible providers: use OpenAI / Anthropic / Gemini-compatible APIs, Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key.

- Open-source, co-evolving: both the framework and the Qwen3-Coder model are open-source—and they ship and evolve together.

- Agentic workflow, feature-rich: rich built-in tools (Skills, SubAgents) for a full agentic workflow and a Claude Code-like experience.

- Terminal-first, IDE-friendly: built for developers who live in the command line, with optional integration for VS Code, Zed, and JetBrains IDEs.

Installation

Quick Install (Recommended)

Linux / macOS

bash -c "$(curl -fsSL https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.sh)"

Windows (Run as Administrator)

Works in both Command Prompt and PowerShell:

powershell -Command "Invoke-WebRequest 'https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.bat' -OutFile (Join-Path $env:TEMP 'install-qwen.bat'); & (Join-Path $env:TEMP 'install-qwen.bat')"

Note: It's recommended to restart your terminal after installation to ensure environment variables take effect.

Manual Installation

Prerequisites

Make sure you have Node.js 20 or later installed. Download it from nodejs.org.

NPM

npm install -g @qwen-code/qwen-code@latest

Homebrew (macOS, Linux)

brew install qwen-code

Quick Start

# Start Qwen Code (interactive)

qwen

# Then, in the session:

/help

/auth

On first use, you'll be prompted to sign in. You can run /auth anytime to switch authentication methods.

Example prompts:

What does this project do?

Explain the codebase structure.

Help me refactor this function.

Generate unit tests for this module.


🦞 Use Qwen Code for Coding Tasks in Claw

Copy the prompt below and paste it into your agent:

Use Qwen Code as a coding agent. First, fetch and read this skill reference:

https://raw.githubusercontent.com/QwenLM/qwen-code/refs/heads/main/.qwen/skills/qwen-code-claw/SKILL.md

Then follow the instructions inside to install, authenticate, and use Qwen Code via acpx for all coding tasks.

Authentication

Qwen Code supports the following authentication methods:

- API Key (recommended): use an API key from Alibaba Cloud Model Studio (Beijing / intl) or any supported provider (OpenAI, Anthropic, Google GenAI, and other compatible endpoints).

- Coding Plan: subscribe to the Alibaba Cloud Coding Plan (Beijing / intl) for a fixed monthly fee with higher quotas.

⚠️ Qwen OAuth was discontinued on April 15, 2026. If you were previously using Qwen OAuth, please switch to one of the methods above. Run `qwen` and then `/auth` to reconfigure.

API Key (recommended)

Use an API key to connect to Alibaba Cloud Model Studio or any supported provider. Supports multiple protocols:

- OpenAI-compatible: Alibaba Cloud ModelStudio, ModelScope, OpenAI, OpenRouter, and other OpenAI-compatible providers

- Anthropic: Claude models

- Google GenAI: Gemini models

The recommended way to configure models and providers is by editing ~/.qwen/settings.json (create it if it doesn't exist). This file lets you define all available models, API keys, and default settings in one place.

Quick Setup in 3 Steps

Step 1: Create or edit ~/.qwen/settings.json

Here is a complete example:

{

"modelProviders": {

"openai": [

{

"id": "qwen3.6-plus",

"name": "qwen3.6-plus",

"baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",

"description": "Qwen3-Coder via Dashscope",

"envKey": "DASHSCOPE_API_KEY"

}

]

},

"env": {

"DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "qwen3.6-plus"

}

}

Step 2: Understand each field

| Field | What it does |
|---|---|
| `modelProviders` | Declares which models are available and how to connect to them. Keys like `openai`, `anthropic`, `gemini` represent the API protocol. |
| `modelProviders[].id` | The model ID sent to the API (e.g. `qwen3.6-plus`, `gpt-4o`). |
| `modelProviders[].envKey` | The name of the environment variable that holds your API key. |
| `modelProviders[].baseUrl` | The API endpoint URL (required for non-default endpoints). |
| `env` | A fallback place to store API keys (lowest priority; prefer `.env` files or `export` for sensitive keys). |
| `security.auth.selectedType` | The protocol to use on startup (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `model.name` | The default model to use when Qwen Code starts. |

Step 3: Start Qwen Code — your configuration takes effect automatically:

qwen

Use the /model command at any time to switch between all configured models.

More Examples

Coding Plan (Alibaba Cloud ModelStudio) — fixed monthly fee, higher quotas

{

"modelProviders": {

"openai": [

{

"id": "qwen3.6-plus",

"name": "qwen3.6-plus (Coding Plan)",

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

"description": "qwen3.6-plus from ModelStudio Coding Plan",

"envKey": "BAILIAN_CODING_PLAN_API_KEY"

},

{

"id": "qwen3.5-plus",

"name": "qwen3.5-plus (Coding Plan)",

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

"description": "qwen3.5-plus with thinking enabled from ModelStudio Coding Plan",

"envKey": "BAILIAN_CODING_PLAN_API_KEY",

"generationConfig": {

"extra_body": {

"enable_thinking": true

}

}

},

{

"id": "glm-4.7",

"name": "glm-4.7 (Coding Plan)",

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

"description": "glm-4.7 with thinking enabled from ModelStudio Coding Plan",

"envKey": "BAILIAN_CODING_PLAN_API_KEY",

"generationConfig": {

"extra_body": {

"enable_thinking": true

}

}

},

{

"id": "kimi-k2.5",

"name": "kimi-k2.5 (Coding Plan)",

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

"description": "kimi-k2.5 with thinking enabled from ModelStudio Coding Plan",

"envKey": "BAILIAN_CODING_PLAN_API_KEY",

"generationConfig": {

"extra_body": {

"enable_thinking": true

}

}

}

]

},

"env": {

"BAILIAN_CODING_PLAN_API_KEY": "sk-xxxxxxxxxxxxx"

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "qwen3.6-plus"

}

}

Subscribe to the Coding Plan and get your API key at Alibaba Cloud ModelStudio(Beijing) or Alibaba Cloud ModelStudio(intl).

Multiple providers (OpenAI + Anthropic + Gemini)

{

"modelProviders": {

"openai": [

{

"id": "gpt-4o",

"name": "GPT-4o",

"envKey": "OPENAI_API_KEY",

"baseUrl": "https://api.openai.com/v1"

}

],

"anthropic": [

{

"id": "claude-sonnet-4-20250514",

"name": "Claude Sonnet 4",

"envKey": "ANTHROPIC_API_KEY"

}

],

"gemini": [

{

"id": "gemini-2.5-pro",

"name": "Gemini 2.5 Pro",

"envKey": "GEMINI_API_KEY"

}

]

},

"env": {

"OPENAI_API_KEY": "sk-xxxxxxxxxxxxx",

"ANTHROPIC_API_KEY": "sk-ant-xxxxxxxxxxxxx",

"GEMINI_API_KEY": "AIzaxxxxxxxxxxxxx"

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "gpt-4o"

}

}

Enable thinking mode (for supported models like qwen3.5-plus)

{

"modelProviders": {

"openai": [

{

"id": "qwen3.5-plus",

"name": "qwen3.5-plus (thinking)",

"envKey": "DASHSCOPE_API_KEY",

"baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",

"generationConfig": {

"extra_body": {

"enable_thinking": true

}

}

}

]

},

"env": {

"DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "qwen3.5-plus"

}

}

Tip: You can also set API keys via `export` in your shell or `.env` files, which take higher priority than `settings.json` → `env`. See the authentication guide for full details.

Security note: Never commit API keys to version control. The `~/.qwen/settings.json` file is in your home directory and should stay private.
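The priority order described in the tip can be sketched like this. `resolveApiKey` is a hypothetical helper, not a Qwen Code API, and it assumes that any `.env` file has already been loaded into the process environment before the lookup.

```typescript
// Looks up the key named by `envKey`. Values from the process environment
// (shell `export`, or a `.env` file loaded earlier) win over the `env` block
// in settings.json, which acts as a fallback.
function resolveApiKey(
  envKey: string,
  processEnv: Record<string, string | undefined>,
  settingsEnv: Record<string, string | undefined>,
): string | undefined {
  const fromEnvironment = processEnv[envKey];
  if (fromEnvironment !== undefined && fromEnvironment !== "") {
    return fromEnvironment;
  }
  return settingsEnv[envKey];
}
```

For example, `resolveApiKey("DASHSCOPE_API_KEY", process.env, settings.env ?? {})` would return the exported value when one exists, where `settings` stands for the parsed contents of `~/.qwen/settings.json`.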

Local Model Setup (Ollama / vLLM)

You can also run models locally — no API key or cloud account needed. This is not an authentication method; instead, configure your local model endpoint in ~/.qwen/settings.json using the modelProviders field.

Ollama setup

- Install Ollama from ollama.com

- Pull a model: `ollama pull qwen3:32b`

- Configure `~/.qwen/settings.json`:

{

"modelProviders": {

"openai": [

{

"id": "qwen3:32b",

"name": "Qwen3 32B (Ollama)",

"baseUrl": "http://localhost:11434/v1",

"description": "Qwen3 32B running locally via Ollama"

}

]

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "qwen3:32b"

}

}

vLLM setup

- Install vLLM: `pip install vllm`

- Start the server: `vllm serve Qwen/Qwen3-32B`

- Configure `~/.qwen/settings.json`:

{

"modelProviders": {

"openai": [

{

"id": "Qwen/Qwen3-32B",

"name": "Qwen3 32B (vLLM)",

"baseUrl": "http://localhost:8000/v1",

"description": "Qwen3 32B running locally via vLLM"

}

]

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "Qwen/Qwen3-32B"

}

}

Usage

Qwen Code is an open-source terminal agent that you can use in four primary ways:

- Interactive mode (terminal UI)

- Headless mode (scripts, CI)

- IDE integration (VS Code, Zed, JetBrains IDEs)

- TypeScript SDK

Interactive mode

cd your-project/

qwen

Run qwen in your project folder to launch the interactive terminal UI. Use @ to reference local files (for example @src/main.ts).

Headless mode

cd your-project/

qwen -p "your question"

Use -p to run Qwen Code without the interactive UI—ideal for scripts, automation, and CI/CD. Learn more: Headless mode.
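For scripted use, headless mode can be driven from Node.js. The sketch below assumes `qwen` is on `PATH` and uses only the `-p` flag shown above; `headlessArgs` and `runHeadless` are hypothetical helper names.

```typescript
import { execFile } from "node:child_process";

// Builds the argument list for a single headless prompt.
function headlessArgs(prompt: string): string[] {
  return ["-p", prompt];
}

// Runs `qwen -p <prompt>` in the given project directory and resolves with stdout.
function runHeadless(prompt: string, cwd: string): Promise<string> {
  return new Promise((resolve, reject) => {
    execFile("qwen", headlessArgs(prompt), { cwd }, (error, stdout) => {
      if (error) reject(error);
      else resolve(stdout);
    });
  });
}
```

Passing the prompt as a separate argument avoids shell quoting problems. On Windows, npm-installed commands are typically `.cmd` shims, so this call may need adjusting there.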

IDE integration

Use Qwen Code inside your editor (VS Code, Zed, and JetBrains IDEs).

TypeScript SDK

Build on top of Qwen Code with the TypeScript SDK.

Commands & Shortcuts

Session Commands

- `/help` - Display available commands

- `/clear` - Clear conversation history

- `/compress` - Compress history to save tokens

- `/stats` - Show current session information

- `/bug` - Submit a bug report

- `/exit` or `/quit` - Exit Qwen Code

Keyboard Shortcuts

- `Ctrl+C` - Cancel current operation

- `Ctrl+D` - Exit (on empty line)

- `Up/Down` - Navigate command history

Learn more about Commands

Tip: In YOLO mode (`--yolo`), vision switching happens automatically without prompts when images are detected. Learn more about Approval Mode

Configuration

Qwen Code can be configured via settings.json, environment variables, and CLI flags.

| File | Scope | Description |
|---|---|---|
| `~/.qwen/settings.json` | User (global) | Applies to all your Qwen Code sessions. Recommended for `modelProviders` and `env`. |
| `.qwen/settings.json` | Project | Applies only when running Qwen Code in this project. Overrides user settings. |

The most commonly used top-level fields in settings.json:

| Field | Description |
|---|---|
| `modelProviders` | Define available models per protocol (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `env` | Fallback environment variables (e.g. API keys). Lower priority than shell `export` and `.env` files. |
| `security.auth.selectedType` | The protocol to use on startup (e.g. `openai`). |
| `model.name` | The default model to use when Qwen Code starts. |

See the Authentication section above for complete `settings.json` examples, and the settings reference for all available options.

Benchmark Results

Terminal-Bench Performance

| Agent | Model | Accuracy |

|---|---|---|

| Qwen Code | Qwen3-Coder-480A35 | 37.5% |

| Qwen Code | Qwen3-Coder-30BA3B | 31.3% |

Ecosystem

Looking for a graphical interface?

- AionUi A modern GUI for command-line AI tools including Qwen Code

- Gemini CLI Desktop A cross-platform desktop/web/mobile UI for Qwen Code

Troubleshooting

If you encounter issues, check the troubleshooting guide.

Common issues:

- Qwen OAuth free tier was discontinued on 2026-04-15: Qwen OAuth is no longer available. Run `qwen` → `/auth` and switch to API Key or Coding Plan. See the Authentication section above for setup instructions.

To report a bug from within the CLI, run /bug and include a short title and repro steps.

Connect with Us

- Discord: https://discord.gg/RN7tqZCeDK

- Dingtalk: https://qr.dingtalk.com/action/joingroup?code=v1,k1,+FX6Gf/ZDlTahTIRi8AEQhIaBlqykA0j+eBKKdhLeAE=&_dt_no_comment=1&origin=1

Acknowledgments

This project is based on Google Gemini CLI. We acknowledge and appreciate the excellent work of the Gemini CLI team. Our main contribution focuses on parser-level adaptations to better support Qwen-Coder models.