* feat(core): add PDF text extraction fallback and Jupyter notebook parsing

For text-only models (qwen3-coder, deepseek) that lack PDF modality support,

read_file now falls back to pdftotext (poppler-utils) for text extraction

instead of returning an unsupported error. A new `pages` parameter enables

paginated PDF reading (e.g. "1-5", "3-").

Also adds structured .ipynb parsing — notebooks are displayed as labeled

cells with code blocks and execution outputs rather than raw JSON.

Key changes:

- New utils/pdf.ts: pdftotext integration with availability caching,

page range parsing, 5MB maxBuffer, and 100K char output truncation

- New utils/notebook.ts: .ipynb JSON parser with per-cell output

truncation (10K chars) and overall notebook truncation (100K chars)

- Modified fileUtils.ts: new 'notebook' FileType, PDF fallback logic,

pages parameter threading

- Modified read-file.ts: pages parameter in schema/validation/execution

* fix(core): avoid circular dependency via shell-utils in pdf.ts

pdf.ts was importing execCommand from shell-utils.ts, which transitively

pulled in tool-utils.ts → ../index.js (barrel), creating a circular

dependency that caused AuthType to be undefined during vitest module

initialization in 46 test files.

Replace with a local execFile wrapper that has no transitive dependencies

beyond node:child_process.

* fix(core): use optional call on getContentGeneratorConfig

Moving the modalities computation outside the if-block caused

readManyFiles.test.ts to fail because its mock config doesn't implement

getContentGeneratorConfig — previously the method was only called for

media files (image/pdf/audio/video), never for text files.

Use ?.() to gracefully fall back to an empty modalities object when the

method is not defined.
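
A minimal sketch of the optional-call fallback described above. The `ConfigLike` and `Modalities` types and the `resolveModalities` helper are hypothetical stand-ins for illustration; only `getContentGeneratorConfig` is named in the commit.

```typescript
// Hypothetical, simplified shapes; the real config interface is larger.
type Modalities = Partial<Record<'image' | 'pdf' | 'audio' | 'video', boolean>>;

interface ConfigLike {
  // Optional on purpose: some test mocks do not implement this method.
  getContentGeneratorConfig?: () => { modalities?: Modalities } | undefined;
}

function resolveModalities(config: ConfigLike): Modalities {
  // `?.()` skips the call when the method is missing; `??` supplies the
  // empty-object fallback when anything along the chain is undefined.
  return config.getContentGeneratorConfig?.()?.modalities ?? {};
}
```

With this shape, a mock that omits the method yields `{}` instead of throwing a `TypeError`.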

* fix(core): reject open-ended PDF page ranges to enforce 20-page limit

Previously, parsePDFPageRange returned lastPage: Infinity for open-ended

ranges like "3-", which bypassed the 20-page validation check and caused

pdftotext to extract from the start page to EOF. This violated the

documented "Max 20 pages per request" contract.

Now validation explicitly rejects open-ended ranges with a helpful

message telling users to specify an explicit end page within the limit.

The pages parameter schema description and interface comment are also

updated to reflect this constraint.

* fix(core): tighten parsePDFPageRange to reject malformed tokens

parseInt() silently truncates invalid input, so values like "1-2-3",

"5abc", "1-2x", "1x-2", and "1.5" were accepted and then interpreted

as the wrong range (e.g. "1-2-3" parsed as 1-2). Switch to regex-based

whole-string validation so any non-matching input returns null at

ReadFileTool.build() time instead of reaching pdftotext.

* fix(core): surface processSingleFileContent errors in readManyFiles

readManyFiles previously dropped any file whose processSingleFileContent

result carried an error, so users only saw "No files matching the

criteria were found or all were skipped." This hid actionable guidance

such as the pdftotext-not-installed install hint, password-protected

PDF notices, and the >10MB size-limit message.

Now the per-file error message (already a human-readable string in

llmContent) is included as a content part, so batch reads surface the

same guidance as single-file reads.

* fix(core): tolerate whitespace around hyphen in parsePDFPageRange

The strict regex introduced in the previous commit stopped accepting

inputs like "1 - 5" or "3 -", which the old parseInt-based parser

handled (parseInt skips leading whitespace). Allow optional \s* on each

side of the hyphen while still rejecting malformed trailing tokens such

as "5abc" and "1-2-3".
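
The parsing rules from the last few commits can be sketched as one whole-string regex plus a separate validation step. This is an illustration under assumptions, not the project's `parsePDFPageRange`: the names `parsePageRange`, `validatePageRange`, and `PageRange`, the use of `null` for an open-ended range, and the error wording are all hypothetical. The 1,000,000 upper bound mirrors the cap mentioned in a later hardening commit.

```typescript
// Hypothetical result shape; `lastPage: null` marks an open-ended range such as "3-".
interface PageRange {
  firstPage: number;
  lastPage: number | null;
}

const MAX_PAGES_PER_REQUEST = 20; // limit named in the commit messages
const MAX_PAGE_NUMBER = 1_000_000; // keeps Number() well inside exact-integer territory

// Whole-string match: digits, then optionally a hyphen (spaces allowed
// around it) followed by optional digits. Anything else fails to match.
const PAGE_RANGE_RE = /^(\d+)(?:\s*(-)\s*(\d+)?)?$/;

function parsePageRange(input: string): PageRange | null {
  const match = PAGE_RANGE_RE.exec(input.trim());
  if (!match) return null;

  const firstPage = Number(match[1]);
  const hasHyphen = match[2] !== undefined;
  // "7" means the single page 7; "3-" is open-ended; "1-5" is closed.
  const lastPage =
    match[3] !== undefined ? Number(match[3]) : hasHyphen ? null : firstPage;

  if (firstPage < 1 || firstPage > MAX_PAGE_NUMBER) return null;
  if (lastPage !== null && (lastPage < firstPage || lastPage > MAX_PAGE_NUMBER)) {
    return null;
  }
  return { firstPage, lastPage };
}

// Returns an error message, or null when the range is acceptable.
function validatePageRange(range: PageRange): string | null {
  if (range.lastPage === null) {
    return `Open-ended ranges are not supported; specify an end page within ${MAX_PAGES_PER_REQUEST} pages.`;
  }
  if (range.lastPage - range.firstPage + 1 > MAX_PAGES_PER_REQUEST) {
    return `At most ${MAX_PAGES_PER_REQUEST} pages can be read per request.`;
  }
  return null;
}
```

Keeping parsing and validation separate lets the parser stay purely syntactic, while the 20-page policy lives in one place.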

* fix(cli,core): render failed @file reads as Error in atCommandProcessor

The previous commit surfaced per-file errors through readManyFiles, but

FileReadInfo still lacked a status field and atCommandProcessor

hardcoded ToolCallStatus.Success for every entry in result.files. So a

failed read (missing pdftotext, password-protected PDF, >10MB file)

rendered in the UI as if it had succeeded, just with the error text

embedded in the LLM content.

Add an optional `error` field on FileReadInfo, populate it in

readFileContent, and use it in atCommandProcessor to pick

ToolCallStatus.Error plus a resultDisplay string the user can see.
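
A rough sketch of how an optional `error` field can drive the displayed status. The types below are simplified, hypothetical stand-ins (the real `FileReadInfo` and `ToolCallStatus` carry more detail), and `toToolCallDisplay` is an invented helper name.

```typescript
// Simplified stand-ins for illustration only.
type ToolCallStatus = 'Success' | 'Error';

interface FileReadInfo {
  filePath: string;
  error?: string; // set only when the read failed
}

interface ToolCallDisplay {
  name: string;
  status: ToolCallStatus;
  resultDisplay: string;
}

function toToolCallDisplay(file: FileReadInfo): ToolCallDisplay {
  if (file.error !== undefined) {
    // Failed reads show the human-readable error instead of a success marker.
    return { name: file.filePath, status: 'Error', resultDisplay: file.error };
  }
  return {
    name: file.filePath,
    status: 'Success',
    resultDisplay: `Read ${file.filePath}`,
  };
}
```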

* fix(core): treat pdftotext maxBuffer overrun as truncation

When a text-dense PDF produced more than 5MB of stdout, Node killed the

child and `execFile` delivered the error as `ERR_CHILD_PROCESS_STDIO_MAXBUFFER`,

which fell into the generic `pdftotext failed:` branch — so a perfectly

valid PDF failed instead of returning the usual truncated output.

Detect the maxBuffer error code in the execFile wrapper, and in

extractPDFText use the partial stdout with the existing truncation note.

Also lower the maxBuffer to 2×MAX_PDF_TEXT_OUTPUT_CHARS (from 5MB) since

anything past that is discarded anyway — this also caps RSS for

pathological inputs.
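
The maxBuffer handling in this commit can be sketched as a pure function over the error and the partial stdout. `ERR_CHILD_PROCESS_STDIO_MAXBUFFER` is the error code Node reports for this case; the `ExecFailure` and `ExtractionResult` shapes and the `resultFromFailure` name are assumptions made for the example.

```typescript
const MAX_PDF_TEXT_OUTPUT_CHARS = 100_000;

// Hypothetical shapes for illustration.
interface ExecFailure {
  code?: string | number | null;
  message: string;
}

type ExtractionResult =
  | { ok: true; text: string; truncated: boolean }
  | { ok: false; error: string };

function resultFromFailure(
  err: ExecFailure,
  partialStdout: string,
): ExtractionResult {
  if (err.code === 'ERR_CHILD_PROCESS_STDIO_MAXBUFFER') {
    // The PDF was valid; it just produced more text than the buffer holds.
    // Keep what was captured and flag it as truncated.
    return {
      ok: true,
      text: partialStdout.slice(0, MAX_PDF_TEXT_OUTPUT_CHARS),
      truncated: true,
    };
  }
  return { ok: false, error: `pdftotext failed: ${err.message}` };
}
```

A caller would pass `2 * MAX_PDF_TEXT_OUTPUT_CHARS` as the `maxBuffer` option of `execFile`, matching the lowered limit described above.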

* fix(core): skip 10MB size gate for PDF text-extraction path

The generic 9.9MB file-size check ran before the pdf branch knew whether

we were taking the base64 inline path or the pdftotext text-extraction

path. That meant `read_file("huge.pdf", pages="1-5")` was rejected up

front even though pdftotext streams through the file and only emits a

capped (100K char) text slice — never loading 15MB into Node memory.

Move the size gate past the fileType/modalities decision point and skip

it when the PDF will go through text extraction (pages parameter set,

or model lacks pdf modality). The base64 inline path still carries its

own encoded-size cap, so oversized PDFs continue to be rejected there.

* fix(core): harden pdftotext wrapper against six audit findings

An adversarial pass over the PDF utilities turned up several issues

that warrant hardening before the PR lands:

- Argument injection (C1): filenames starting with `-` (e.g.

`-opw=foo.pdf`) are parsed as options by poppler's argv parser when

passed positionally. Insert `--` before `filePath` in both

`extractPDFText` and `getPDFPageCount` so pdftotext's option parser

stops processing flags. Reproduced locally: `pdftotext -h -` prints

help while `pdftotext -- -h -` treats `-h` as the input file.

- Brittle availability signal (H1): `isPdftotextAvailable` used

`stderr.length > 0` as the positive signal, so a sandbox that

suppresses stderr would cache `false` for the whole process. Switch

to the exit code.

- Concurrent availability probes (H2): N parallel callers (e.g. an

`@`-glob of PDFs) each spawned their own `pdftotext -v` before the

first probe resolved. Cache the in-flight promise.

- Precision-loss bypass of the 20-page cap (H3): past 2^53, `Number()`

rounds neighbouring integers onto the same double, so the string

`"999999999999999998-999999999999999999"` parsed as a 1-page range

and slid past the validator. Cap accepted page numbers at 1,000,000.

- Timeout error clarity (M2): 30s timeouts surfaced as the generic

`pdftotext failed:` branch with empty stderr. Detect SIGTERM/killed

and emit a dedicated "timed out after 30s" message.

- Over-eager maxBuffer success (M1): the previous commit treated any

maxBuffer overrun with non-empty stdout as a truncated success. If

the overrun was driven by stderr spam (password warnings, corrupt-

PDF diagnostics), that delivered garbage as success. Require at

least MAX_PDF_TEXT_OUTPUT_CHARS of stdout before treating as

truncated; otherwise re-run the password/corrupt detectors on the

captured stderr.

Added regression tests for each.
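
As an illustration of the argument-injection fix (C1), here is a small sketch of building the pdftotext argument vector. `buildPdftotextArgs` and `ClosedPageRange` are hypothetical names; `-f` and `-l` are pdftotext's first-page and last-page options, and the trailing `-` requests output on stdout.

```typescript
// Hypothetical shape: both ends are required once validation has passed.
interface ClosedPageRange {
  firstPage: number;
  lastPage: number;
}

function buildPdftotextArgs(
  filePath: string,
  range?: ClosedPageRange,
): string[] {
  const args: string[] = [];
  if (range) {
    args.push('-f', String(range.firstPage), '-l', String(range.lastPage));
  }
  // `--` ends option parsing, so a path such as "-opw=foo.pdf" is read as a
  // file name rather than an option. The final "-" sends text to stdout.
  args.push('--', filePath, '-');
  return args;
}
```

Because the array is handed to `execFile` without a shell, the only parser that could misread a leading `-` is pdftotext's own, which is what the `--` guards against.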

* fix(core): gate non-regular files and oversized PDFs before extraction

Two defense-in-depth guards suggested by the adversarial audit:

- Non-regular files (FIFOs, sockets, /dev/zero, character devices)

have meaningless `stats.size` (typically 0), so the 10MB size gate

would happily wave them through. Handing `/dev/zero` to pdftotext

then produced a 30s-timeout failure after the wrapper streamed

megabytes into Node. Require `stats.isFile()` before routing into

any extraction path.

- The previous commit skipped the 10MB gate for the PDF text-

extraction path so `read_file("huge.pdf", pages="1-5")` could

work. Unbounded, though, a multi-GB PDF would make pdftotext run

until the 30s timeout fires. Add a separate 100MB ceiling for the

extraction path with a guidance error pointing the user at `pages`

or document splitting. The base64 inline path keeps its own encoded-

size cap.

Added regression tests for both.
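
The gating order described in these two commits can be sketched as a single decision function. All names here are hypothetical, and the 10MB inline limit stands in for the base64 path's own encoded-size cap, whose exact value is not stated above.

```typescript
const MB = 1024 * 1024;
const INLINE_MAX_BYTES = 10 * MB; // assumed stand-in for the inline cap
const PDF_EXTRACTION_MAX_BYTES = 100 * MB; // ceiling for the pdftotext path

// Minimal subset of fs.Stats that the gate needs.
interface StatsLike {
  size: number;
  isFile(): boolean;
}

interface PdfReadOptions {
  pages?: string;
  modelSupportsPdf: boolean;
}

type GateResult =
  | { allowed: true; path: 'inline' | 'extract' }
  | { allowed: false; reason: string };

function gatePdfRead(stats: StatsLike, opts: PdfReadOptions): GateResult {
  // FIFOs, sockets, and devices report a meaningless size, so reject first.
  if (!stats.isFile()) {
    return { allowed: false, reason: 'Not a regular file.' };
  }
  // Text extraction is used when pages are requested or the model lacks PDF input.
  const useExtraction = opts.pages !== undefined || !opts.modelSupportsPdf;
  if (useExtraction) {
    if (stats.size > PDF_EXTRACTION_MAX_BYTES) {
      return {
        allowed: false,
        reason: 'PDF exceeds 100MB; use `pages` or split the document.',
      };
    }
    return { allowed: true, path: 'extract' };
  }
  if (stats.size > INLINE_MAX_BYTES) {
    return { allowed: false, reason: 'PDF is too large to send inline.' };
  }
  return { allowed: true, path: 'inline' };
}
```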

* fix(core): strip ANSI escapes and surface non-text outputs in notebooks

Two notebook-rendering issues surfaced by the audit:

- ipykernel emits ANSI CSI/SGR escape sequences (`\x1B[0;31m...`) in

error tracebacks by default. Those codes add noise and burn tokens

without conveying anything useful once we're rendering to plain

text. Strip them from stream, execute_result, display_data, and

error outputs.

- Cells whose only output was a non-text MIME type (image/png,

text/html, application/vnd.jupyter.widget-view+json, ...) were

silently dropped — the model saw the source code with no indication

that a plot or HTML block existed. Emit a `[non-text output:

<mime-types>]` placeholder so the model knows something was there

without us inlining the payload.
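
A minimal sketch of both notebook fixes, assuming a simplified subset of the nbformat v4 output schema. The function names and the regexes are illustrative rather than the project's own. The ANSI pattern here covers CSI sequences only, and the sketch additionally keeps only keys that look like `type/subtype`, an assumption that prevents untrusted key text from reaching the placeholder.

```typescript
// CSI sequences: ESC [ parameter bytes, intermediate bytes, final byte.
const ANSI_CSI_RE = /\x1B\[[0-?]*[ -/]*[@-~]/g;
// Restricted-name style check for "type/subtype" keys.
const MIME_RE = /^[a-z0-9][a-z0-9!#$&^_.+-]*\/[a-z0-9][a-z0-9!#$&^_.+-]*$/i;

function stripAnsi(text: string): string {
  return text.replace(ANSI_CSI_RE, '');
}

// Simplified subset of a notebook cell output.
interface NotebookOutput {
  output_type: 'stream' | 'execute_result' | 'display_data' | 'error';
  text?: string | string[];
  data?: Record<string, unknown>;
  traceback?: string[];
}

// Notebook text fields may be a string or an array of line fragments.
function joinText(value: unknown): string {
  if (Array.isArray(value)) return value.join('');
  return typeof value === 'string' ? value : '';
}

function renderOutput(output: NotebookOutput): string {
  if (output.output_type === 'stream') {
    return stripAnsi(joinText(output.text));
  }
  if (output.output_type === 'error') {
    return stripAnsi((output.traceback ?? []).join('\n'));
  }
  const data = output.data ?? {};
  if ('text/plain' in data) {
    return stripAnsi(joinText(data['text/plain']));
  }
  // No text representation: name the MIME types instead of inlining payloads.
  const mimeTypes = Object.keys(data).filter((key) => MIME_RE.test(key));
  return mimeTypes.length > 0
    ? `[non-text output: ${mimeTypes.join(', ')}]`
    : '';
}
```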

* fix(core): round-2 audit fixes (in-flight cleanup, Windows timeout, ANSI/MIME)

Reverse audit on the previous three commits surfaced four medium-

severity issues plus a polish item:

- isPdftotextAvailable in-flight promise leak: the `.then(...)` cleared

the cached promise on success but a synchronous throw inside the

IIFE would have left a rejected promise stuck in the slot forever.

Switch to `.finally` so the slot is always cleared.

- Timeout detection on Windows: Node's `execFile` `timeout` terminates

via TerminateProcess on Windows, where `signal` is typically `null`

rather than `'SIGTERM'`. The previous SIGTERM-only check would let

Windows timeouts fall through to the generic "pdftotext failed"

branch. Accept null/undefined signal alongside SIGTERM.

- ANSI regex was CSI-only: missed OSC hyperlinks (`ESC ]8;;url`),

DCS, APC/PM/SOS, and lone two-byte escapes that ipykernel and

related tools sometimes emit. Extend the pattern to cover all four

families.

- Non-text MIME placeholder was attacker-controlled: a malicious

notebook could set `data: {"\nIGNORE PREVIOUS INSTRUCTIONS\n": ...}`

and that key would flow unescaped into `[non-text output: ...]`,

smuggling prompt-injection payload bytes into the LLM context.

Filter keys against the IANA MIME-type grammar before joining.

- Hoisted PDF_EXTRACTION_MAX_MB to module scope alongside the other

size constants so it's discoverable in one place.
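
The in-flight promise handling can be sketched with an injected probe function. `createCachedProbe` is a hypothetical helper written for this example; the real code probes by running `pdftotext -v`.

```typescript
interface CachedProbe {
  check(): Promise<boolean>;
  reset(): void;
}

function createCachedProbe(probe: () => Promise<boolean>): CachedProbe {
  let cached: boolean | undefined;
  let inFlight: Promise<boolean> | undefined;

  return {
    check(): Promise<boolean> {
      if (cached !== undefined) return Promise.resolve(cached);
      if (inFlight === undefined) {
        // The async IIFE turns a synchronous throw into a rejection, and
        // `.finally` clears the slot on success and failure alike.
        const started = (async () => {
          const available = await probe();
          cached = available;
          return available;
        })().finally(() => {
          inFlight = undefined;
        });
        inFlight = started;
        return started;
      }
      // A probe is already running: share it instead of spawning another.
      return inFlight;
    },
    reset(): void {
      cached = undefined;
      inFlight = undefined;
    },
  };
}
```

Concurrent callers receive the same promise, so a glob over many PDFs triggers one probe rather than one per file.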

* chore(core): correct ANSI comment example and rename cache-reset test

Comment/test polish from the convergence audit:

- The `[@-Z\-_]` C1-Fe branch of the ANSI regex does not actually match

`ESC c` (RIS), `ESC 7`, or `ESC 8`, which sit at 0x63/0x37/0x38. It

does match IND/NEL/HTS/RI (ESC D/E/H/M). Correct the jsdoc example.

- The `should clear the in-flight promise after a probe to allow retries`

test wasn't distinguishing the `.finally` behaviour from the

`resetPdftotextCache()` call that immediately precedes the second

probe. Rename it to reflect what it actually verifies; the `.finally`

remains as defence-in-depth (a synchronous throw inside the IIFE's

own handlers can't leave the in-flight slot stuck on a rejected

promise).


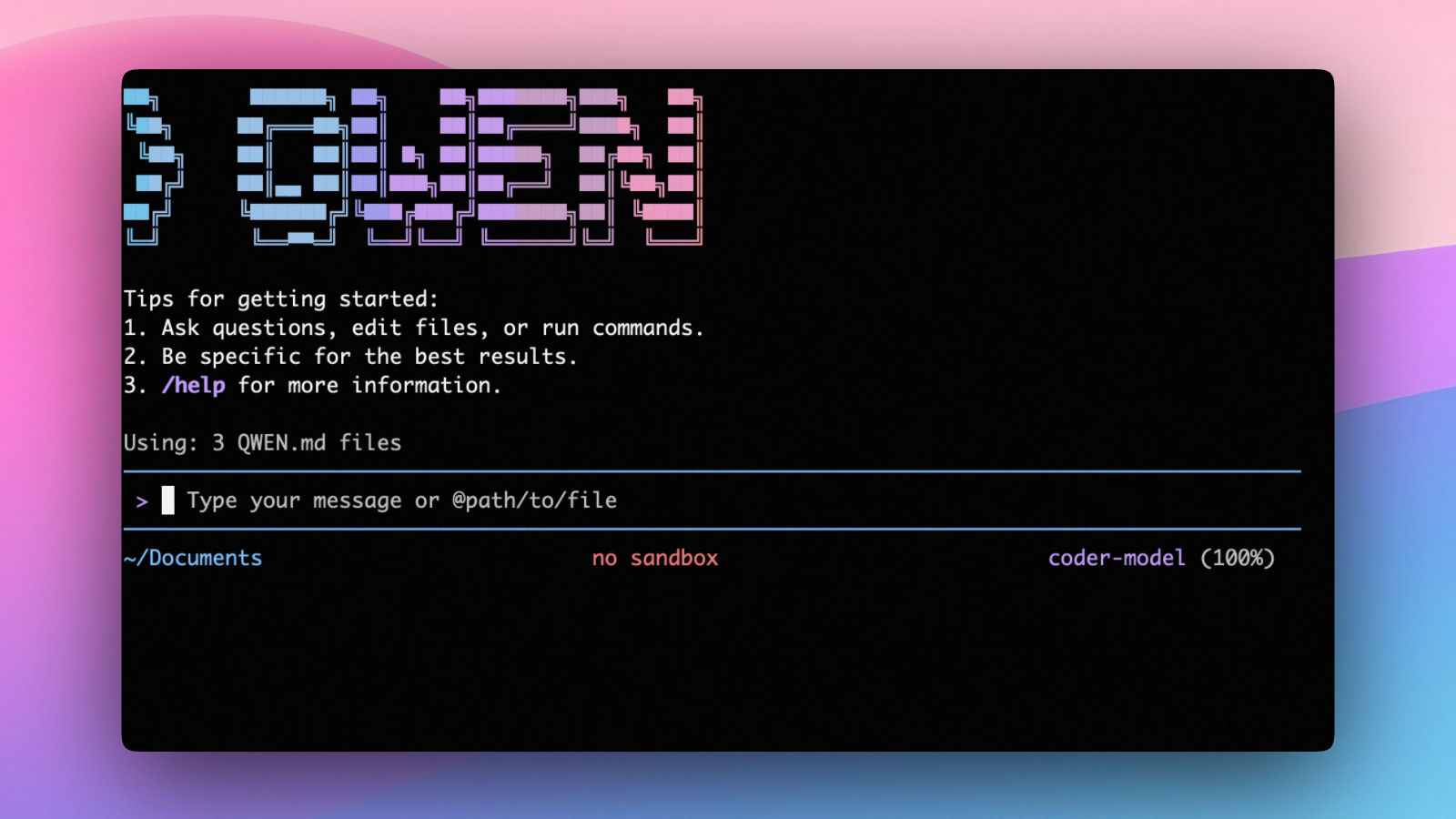

An open-source AI agent that lives in your terminal.

中文 | Deutsch | français | 日本語 | Русский | Português (Brasil)

🎉 News

- 2026-04-15: Qwen OAuth free tier has been discontinued. To continue using Qwen Code, switch to Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key. Run `qwen auth` to configure.

- 2026-04-13: Qwen OAuth free tier policy update: daily quota adjusted to 100 requests/day (from 1,000).

- 2026-04-02: Qwen3.6-Plus is now live! Get an API key from Alibaba Cloud ModelStudio to access it through the OpenAI-compatible API.

- 2026-02-16: Qwen3.5-Plus is now live!

Why Qwen Code?

Qwen Code is an open-source AI agent for the terminal, optimized for Qwen series models. It helps you understand large codebases, automate tedious work, and ship faster.

- Multi-protocol, flexible providers: use OpenAI / Anthropic / Gemini-compatible APIs, Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key.

- Open-source, co-evolving: both the framework and the Qwen3-Coder model are open-source—and they ship and evolve together.

- Agentic workflow, feature-rich: rich built-in tools (Skills, SubAgents) for a full agentic workflow and a Claude Code-like experience.

- Terminal-first, IDE-friendly: built for developers who live in the command line, with optional integration for VS Code, Zed, and JetBrains IDEs.

Installation

Quick Install (Recommended)

Linux / macOS

bash -c "$(curl -fsSL https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.sh)"

Windows (Run as Administrator)

Works in both Command Prompt and PowerShell:

powershell -Command "Invoke-WebRequest 'https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.bat' -OutFile (Join-Path $env:TEMP 'install-qwen.bat'); & (Join-Path $env:TEMP 'install-qwen.bat')"

Note: Restart your terminal after installation so that environment variables take effect.

Manual Installation

Prerequisites

Make sure you have Node.js 20 or later installed. Download it from nodejs.org.

NPM

npm install -g @qwen-code/qwen-code@latest

Homebrew (macOS, Linux)

brew install qwen-code

Quick Start

# Start Qwen Code (interactive)

qwen

# Then, in the session:

/help

/auth

On first use, you'll be prompted to sign in. You can run /auth anytime to switch authentication methods.

Example prompts:

What does this project do?

Explain the codebase structure.

Help me refactor this function.

Generate unit tests for this module.

Click to watch a demo video

🦞 Use Qwen Code for Coding Tasks in Claw

Copy the prompt below and paste it into your agent:

Use Qwen Code as a coding agent. First, fetch and read this skill reference:

https://raw.githubusercontent.com/QwenLM/qwen-code/refs/heads/main/.qwen/skills/qwen-code-claw/SKILL.md

Then follow the instructions inside to install, authenticate, and use Qwen Code via acpx for all coding tasks.

Authentication

Qwen Code supports the following authentication methods:

- API Key (recommended): use an API key from Alibaba Cloud Model Studio (Beijing / intl) or any supported provider (OpenAI, Anthropic, Google GenAI, and other compatible endpoints).

- Coding Plan: subscribe to the Alibaba Cloud Coding Plan (Beijing / intl) for a fixed monthly fee with higher quotas.

⚠️ Qwen OAuth was discontinued on April 15, 2026. If you were previously using Qwen OAuth, please switch to one of the methods above. Run `qwen` and then `/auth` to reconfigure.

API Key (recommended)

Use an API key to connect to Alibaba Cloud Model Studio or any supported provider. Supports multiple protocols:

- OpenAI-compatible: Alibaba Cloud ModelStudio, ModelScope, OpenAI, OpenRouter, and other OpenAI-compatible providers

- Anthropic: Claude models

- Google GenAI: Gemini models

The recommended way to configure models and providers is by editing ~/.qwen/settings.json (create it if it doesn't exist). This file lets you define all available models, API keys, and default settings in one place.

Quick Setup in 3 Steps

Step 1: Create or edit ~/.qwen/settings.json

Here is a complete example:

    {
      "modelProviders": {
        "openai": [
          {
            "id": "qwen3.6-plus",
            "name": "qwen3.6-plus",
            "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
            "description": "Qwen3-Coder via Dashscope",
            "envKey": "DASHSCOPE_API_KEY"
          }
        ]
      },
      "env": {
        "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
      },
      "security": {
        "auth": {
          "selectedType": "openai"
        }
      },
      "model": {
        "name": "qwen3.6-plus"
      }
    }

Step 2: Understand each field

| Field | What it does |
|---|---|
| `modelProviders` | Declares which models are available and how to connect to them. Keys like `openai`, `anthropic`, `gemini` represent the API protocol. |
| `modelProviders[].id` | The model ID sent to the API (e.g. `qwen3.6-plus`, `gpt-4o`). |
| `modelProviders[].envKey` | The name of the environment variable that holds your API key. |
| `modelProviders[].baseUrl` | The API endpoint URL (required for non-default endpoints). |
| `env` | A fallback place to store API keys (lowest priority; prefer `.env` files or `export` for sensitive keys). |
| `security.auth.selectedType` | The protocol to use on startup (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `model.name` | The default model to use when Qwen Code starts. |

Step 3: Start Qwen Code — your configuration takes effect automatically:

qwen

Use the /model command at any time to switch between all configured models.

More Examples

Coding Plan (Alibaba Cloud ModelStudio) — fixed monthly fee, higher quotas

    {
      "modelProviders": {
        "openai": [
          {
            "id": "qwen3.6-plus",
            "name": "qwen3.6-plus (Coding Plan)",
            "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
            "description": "qwen3.6-plus from ModelStudio Coding Plan",
            "envKey": "BAILIAN_CODING_PLAN_API_KEY"
          },
          {
            "id": "qwen3.5-plus",
            "name": "qwen3.5-plus (Coding Plan)",
            "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
            "description": "qwen3.5-plus with thinking enabled from ModelStudio Coding Plan",
            "envKey": "BAILIAN_CODING_PLAN_API_KEY",
            "generationConfig": {
              "extra_body": {
                "enable_thinking": true
              }
            }
          },
          {
            "id": "glm-4.7",
            "name": "glm-4.7 (Coding Plan)",
            "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
            "description": "glm-4.7 with thinking enabled from ModelStudio Coding Plan",
            "envKey": "BAILIAN_CODING_PLAN_API_KEY",
            "generationConfig": {
              "extra_body": {
                "enable_thinking": true
              }
            }
          },
          {
            "id": "kimi-k2.5",
            "name": "kimi-k2.5 (Coding Plan)",
            "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
            "description": "kimi-k2.5 with thinking enabled from ModelStudio Coding Plan",
            "envKey": "BAILIAN_CODING_PLAN_API_KEY",
            "generationConfig": {
              "extra_body": {
                "enable_thinking": true
              }
            }
          }
        ]
      },
      "env": {
        "BAILIAN_CODING_PLAN_API_KEY": "sk-xxxxxxxxxxxxx"
      },
      "security": {
        "auth": {
          "selectedType": "openai"
        }
      },
      "model": {
        "name": "qwen3.6-plus"
      }
    }

Subscribe to the Coding Plan and get your API key at Alibaba Cloud ModelStudio (Beijing) or Alibaba Cloud ModelStudio (intl).

Multiple providers (OpenAI + Anthropic + Gemini)

    {
      "modelProviders": {
        "openai": [
          {
            "id": "gpt-4o",
            "name": "GPT-4o",
            "envKey": "OPENAI_API_KEY",
            "baseUrl": "https://api.openai.com/v1"
          }
        ],
        "anthropic": [
          {
            "id": "claude-sonnet-4-20250514",
            "name": "Claude Sonnet 4",
            "envKey": "ANTHROPIC_API_KEY"
          }
        ],
        "gemini": [
          {
            "id": "gemini-2.5-pro",
            "name": "Gemini 2.5 Pro",
            "envKey": "GEMINI_API_KEY"
          }
        ]
      },
      "env": {
        "OPENAI_API_KEY": "sk-xxxxxxxxxxxxx",
        "ANTHROPIC_API_KEY": "sk-ant-xxxxxxxxxxxxx",
        "GEMINI_API_KEY": "AIzaxxxxxxxxxxxxx"
      },
      "security": {
        "auth": {
          "selectedType": "openai"
        }
      },
      "model": {
        "name": "gpt-4o"
      }
    }

Enable thinking mode (for supported models like qwen3.5-plus)

    {
      "modelProviders": {
        "openai": [
          {
            "id": "qwen3.5-plus",
            "name": "qwen3.5-plus (thinking)",
            "envKey": "DASHSCOPE_API_KEY",
            "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
            "generationConfig": {
              "extra_body": {
                "enable_thinking": true
              }
            }
          }
        ]
      },
      "env": {
        "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
      },
      "security": {
        "auth": {
          "selectedType": "openai"
        }
      },
      "model": {
        "name": "qwen3.5-plus"
      }
    }

Tip: You can also set API keys via `export` in your shell or `.env` files, which take higher priority than `settings.json` → `env`. See the authentication guide for full details.

Security note: Never commit API keys to version control. The `~/.qwen/settings.json` file is in your home directory and should stay private.

Local Model Setup (Ollama / vLLM)

You can also run models locally — no API key or cloud account needed. This is not an authentication method; instead, configure your local model endpoint in ~/.qwen/settings.json using the modelProviders field.

Ollama setup

- Install Ollama from ollama.com

- Pull a model: `ollama pull qwen3:32b`

- Configure `~/.qwen/settings.json`:

    {
      "modelProviders": {
        "openai": [
          {
            "id": "qwen3:32b",
            "name": "Qwen3 32B (Ollama)",
            "baseUrl": "http://localhost:11434/v1",
            "description": "Qwen3 32B running locally via Ollama"
          }
        ]
      },
      "security": {
        "auth": {
          "selectedType": "openai"
        }
      },
      "model": {
        "name": "qwen3:32b"
      }
    }

vLLM setup

- Install vLLM: `pip install vllm`

- Start the server: `vllm serve Qwen/Qwen3-32B`

- Configure `~/.qwen/settings.json`:

    {
      "modelProviders": {
        "openai": [
          {
            "id": "Qwen/Qwen3-32B",
            "name": "Qwen3 32B (vLLM)",
            "baseUrl": "http://localhost:8000/v1",
            "description": "Qwen3 32B running locally via vLLM"
          }
        ]
      },
      "security": {
        "auth": {
          "selectedType": "openai"
        }
      },
      "model": {
        "name": "Qwen/Qwen3-32B"
      }
    }

Usage

Qwen Code is an open-source terminal agent that you can use in four primary ways:

- Interactive mode (terminal UI)

- Headless mode (scripts, CI)

- IDE integration (VS Code, Zed, JetBrains IDEs)

- TypeScript SDK

Interactive mode

cd your-project/

qwen

Run qwen in your project folder to launch the interactive terminal UI. Use @ to reference local files (for example @src/main.ts).

Headless mode

cd your-project/

qwen -p "your question"

Use -p to run Qwen Code without the interactive UI—ideal for scripts, automation, and CI/CD. Learn more: Headless mode.

IDE integration

Use Qwen Code inside your editor (VS Code, Zed, and JetBrains IDEs).

TypeScript SDK

Build on top of Qwen Code with the TypeScript SDK.

Commands & Shortcuts

Session Commands

- `/help` - Display available commands

- `/clear` - Clear conversation history

- `/compress` - Compress history to save tokens

- `/stats` - Show current session information

- `/bug` - Submit a bug report

- `/exit` or `/quit` - Exit Qwen Code

Keyboard Shortcuts

- `Ctrl+C` - Cancel current operation

- `Ctrl+D` - Exit (on empty line)

- `Up`/`Down` - Navigate command history

Learn more about Commands

Tip: In YOLO mode (`--yolo`), vision switching happens automatically without prompts when images are detected. Learn more about Approval Mode.

Configuration

Qwen Code can be configured via settings.json, environment variables, and CLI flags.

| File | Scope | Description |
|---|---|---|
| `~/.qwen/settings.json` | User (global) | Applies to all your Qwen Code sessions. Recommended for `modelProviders` and `env`. |
| `.qwen/settings.json` | Project | Applies only when running Qwen Code in this project. Overrides user settings. |

The most commonly used top-level fields in settings.json:

| Field | Description |
|---|---|
| `modelProviders` | Define available models per protocol (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `env` | Fallback environment variables (e.g. API keys). Lower priority than shell `export` and `.env` files. |
| `security.auth.selectedType` | The protocol to use on startup (e.g. `openai`). |
| `model.name` | The default model to use when Qwen Code starts. |

See the Authentication section above for complete `settings.json` examples, and the settings reference for all available options.

Benchmark Results

Terminal-Bench Performance

| Agent | Model | Accuracy |

|---|---|---|

| Qwen Code | Qwen3-Coder-480A35 | 37.5% |

| Qwen Code | Qwen3-Coder-30BA3B | 31.3% |

Ecosystem

Looking for a graphical interface?

- AionUi: A modern GUI for command-line AI tools including Qwen Code

- Gemini CLI Desktop: A cross-platform desktop/web/mobile UI for Qwen Code

Troubleshooting

If you encounter issues, check the troubleshooting guide.

Common issues:

- Qwen OAuth free tier was discontinued on 2026-04-15: Qwen OAuth is no longer available. Run `qwen` → `/auth` and switch to API Key or Coding Plan. See the Authentication section above for setup instructions.

To report a bug from within the CLI, run /bug and include a short title and repro steps.

Connect with Us

- Discord: https://discord.gg/RN7tqZCeDK

- Dingtalk: https://qr.dingtalk.com/action/joingroup?code=v1,k1,+FX6Gf/ZDlTahTIRi8AEQhIaBlqykA0j+eBKKdhLeAE=&_dt_no_comment=1&origin=1

Acknowledgments

This project is based on Google Gemini CLI. We acknowledge and appreciate the excellent work of the Gemini CLI team. Our main contribution focuses on parser-level adaptations to better support Qwen-Coder models.