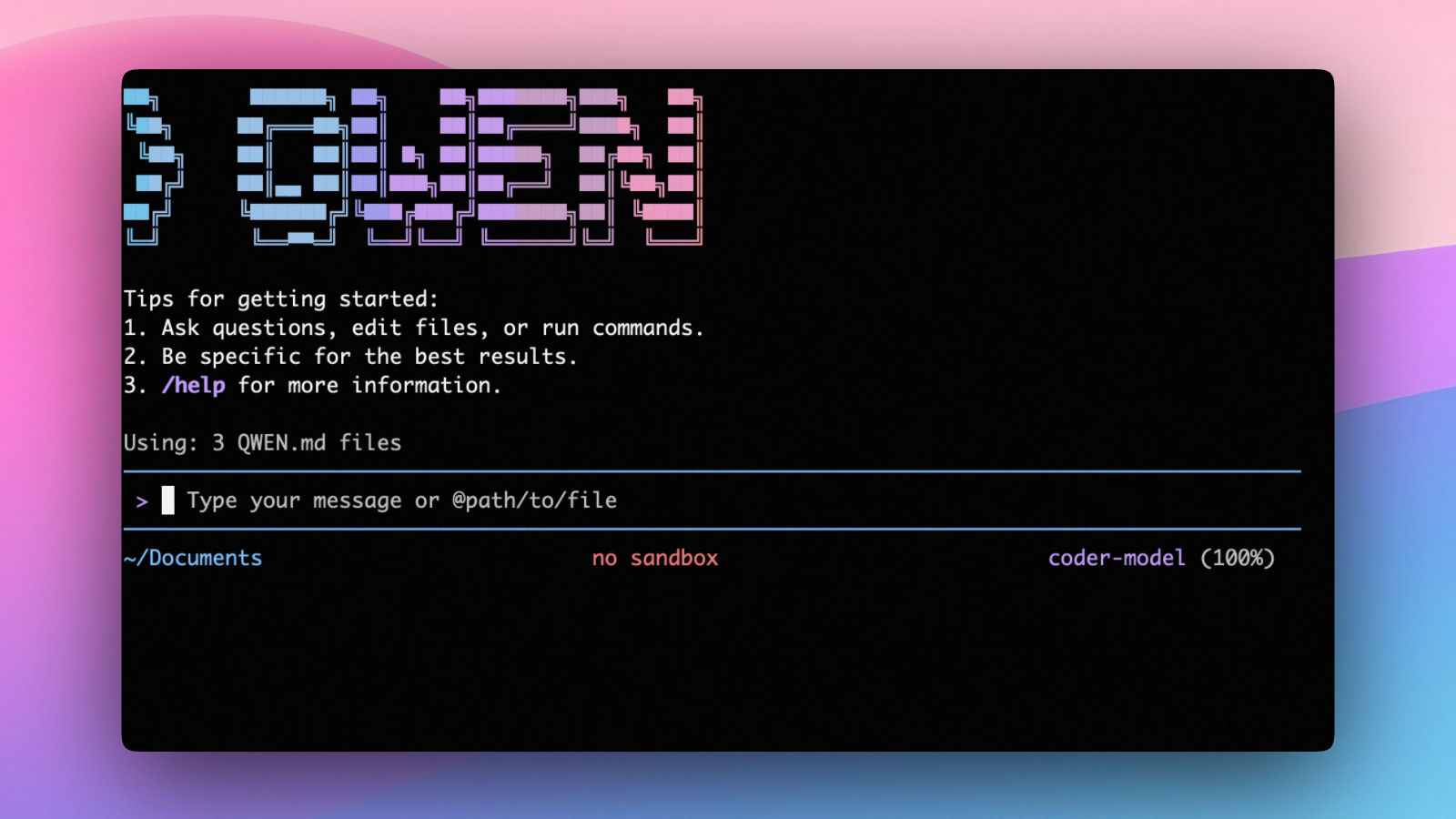

An open-source AI agent that lives in your terminal.

中文 | Deutsch | français | 日本語 | Русский | Português (Brasil)

🎉 News

- 2026-04-15: Qwen OAuth free tier has been discontinued. To continue using Qwen Code, switch to Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key. Run `qwen auth` to configure.

- 2026-04-13: Qwen OAuth free tier policy update: daily quota adjusted to 100 requests/day (from 1,000).

- 2026-04-02: Qwen3.6-Plus is now live! Get an API key from Alibaba Cloud ModelStudio to access it through the OpenAI-compatible API.

- 2026-02-16: Qwen3.5-Plus is now live!

Why Qwen Code?

Qwen Code is an open-source AI agent for the terminal, optimized for Qwen series models. It helps you understand large codebases, automate tedious work, and ship faster.

- Multi-protocol, flexible providers: use OpenAI / Anthropic / Gemini-compatible APIs, Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key.

- Open-source, co-evolving: both the framework and the Qwen3-Coder model are open-source—and they ship and evolve together.

- Agentic workflow, feature-rich: rich built-in tools (Skills, SubAgents) for a full agentic workflow and a Claude Code-like experience.

- Terminal-first, IDE-friendly: built for developers who live in the command line, with optional integration for VS Code, Zed, and JetBrains IDEs.

Installation

Quick Install (Recommended)

Linux / macOS

bash -c "$(curl -fsSL https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.sh)"

Windows (Run as Administrator)

Works in both Command Prompt and PowerShell:

powershell -Command "Invoke-WebRequest 'https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.bat' -OutFile (Join-Path $env:TEMP 'install-qwen.bat'); & (Join-Path $env:TEMP 'install-qwen.bat')"

Note: Restart your terminal after installation so that environment variables take effect.

Manual Installation

Prerequisites

Make sure you have Node.js 20 or later installed. Download it from nodejs.org.

NPM

npm install -g @qwen-code/qwen-code@latest

Homebrew (macOS, Linux)

brew install qwen-code

Quick Start

# Start Qwen Code (interactive)

qwen

# Then, in the session:

/help

/auth

On first use, you'll be prompted to sign in. You can run /auth anytime to switch authentication methods.

Example prompts:

What does this project do?

Explain the codebase structure.

Help me refactor this function.

Generate unit tests for this module.

Click to watch a demo video

🦞 Use Qwen Code for Coding Tasks in Claw

Copy the prompt below and paste it into your agent:

Use Qwen Code as a coding agent. First, fetch and read this skill reference:

https://raw.githubusercontent.com/QwenLM/qwen-code/refs/heads/main/.qwen/skills/qwen-code-claw/SKILL.md

Then follow the instructions inside to install, authenticate, and use Qwen Code via acpx for all coding tasks.

Authentication

Qwen Code supports the following authentication methods:

- API Key (recommended): use an API key from Alibaba Cloud Model Studio (Beijing / intl) or any supported provider (OpenAI, Anthropic, Google GenAI, and other compatible endpoints).

- Coding Plan: subscribe to the Alibaba Cloud Coding Plan (Beijing / intl) for a fixed monthly fee with higher quotas.

⚠️ Qwen OAuth was discontinued on April 15, 2026. If you were previously using Qwen OAuth, please switch to one of the methods above. Run `qwen` and then `/auth` to reconfigure.

API Key (recommended)

Use an API key to connect to Alibaba Cloud Model Studio or any supported provider. Supports multiple protocols:

- OpenAI-compatible: Alibaba Cloud ModelStudio, ModelScope, OpenAI, OpenRouter, and other OpenAI-compatible providers

- Anthropic: Claude models

- Google GenAI: Gemini models

The recommended way to configure models and providers is by editing ~/.qwen/settings.json (create it if it doesn't exist). This file lets you define all available models, API keys, and default settings in one place.

Quick Setup in 3 Steps

Step 1: Create or edit ~/.qwen/settings.json

Here is a complete example:

{

"modelProviders": {

"openai": [

{

"id": "qwen3.6-plus",

"name": "qwen3.6-plus",

"baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",

"description": "Qwen3-Coder via Dashscope",

"envKey": "DASHSCOPE_API_KEY"

}

]

},

"env": {

"DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "qwen3.6-plus"

}

}

Step 2: Understand each field

| Field | What it does |
|---|---|
| `modelProviders` | Declares which models are available and how to connect to them. Keys like `openai`, `anthropic`, `gemini` represent the API protocol. |
| `modelProviders[].id` | The model ID sent to the API (e.g. `qwen3.6-plus`, `gpt-4o`). |
| `modelProviders[].envKey` | The name of the environment variable that holds your API key. |
| `modelProviders[].baseUrl` | The API endpoint URL (required for non-default endpoints). |
| `env` | A fallback place to store API keys (lowest priority; prefer `.env` files or `export` for sensitive keys). |
| `security.auth.selectedType` | The protocol to use on startup (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `model.name` | The default model to use when Qwen Code starts. |

Step 3: Start Qwen Code — your configuration takes effect automatically:

qwen

Use the /model command at any time to switch between all configured models.
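As a rough illustration of how the fields above relate to each other, the following sketch checks a settings object for internal consistency. The `Settings` and `ModelEntry` types and the `checkSettings` helper are hypothetical and not part of Qwen Code. The sketch also assumes, based only on the examples in this README, that `model.name` refers to the `id` of an entry declared under the protocol named by `security.auth.selectedType`.

```typescript
// Hypothetical types covering only the fields shown in the example above.
interface ModelEntry {
  id: string;
  name: string;
  baseUrl?: string;
  description?: string;
  envKey?: string;
}

interface Settings {
  modelProviders: Record<string, ModelEntry[]>;
  env?: Record<string, string>;
  security: { auth: { selectedType: string } };
  model: { name: string };
}

// Returns a list of human-readable problems. An empty list means the object
// is consistent under the assumption that model.name matches a declared id.
function checkSettings(settings: Settings): string[] {
  const problems: string[] = [];
  const protocol = settings.security.auth.selectedType;
  const entries = settings.modelProviders[protocol] ?? [];
  if (entries.length === 0) {
    problems.push(`no models declared for protocol "${protocol}"`);
  }
  if (!entries.some((entry) => entry.id === settings.model.name)) {
    problems.push(
      `default model "${settings.model.name}" is not declared under "${protocol}"`,
    );
  }
  return problems;
}

const example: Settings = {
  modelProviders: {
    openai: [
      {
        id: "qwen3.6-plus",
        name: "qwen3.6-plus",
        baseUrl: "https://dashscope.aliyuncs.com/compatible-mode/v1",
        envKey: "DASHSCOPE_API_KEY",
      },
    ],
  },
  security: { auth: { selectedType: "openai" } },
  model: { name: "qwen3.6-plus" },
};

console.log(checkSettings(example)); // prints []
```

A check like this can catch a typo in `model.name` before starting a session, but Qwen Code's own validation may differ.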

More Examples

Coding Plan (Alibaba Cloud ModelStudio) — fixed monthly fee, higher quotas

{

"modelProviders": {

"openai": [

{

"id": "qwen3.6-plus",

"name": "qwen3.6-plus (Coding Plan)",

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

"description": "qwen3.6-plus from ModelStudio Coding Plan",

"envKey": "BAILIAN_CODING_PLAN_API_KEY"

},

{

"id": "qwen3.5-plus",

"name": "qwen3.5-plus (Coding Plan)",

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

"description": "qwen3.5-plus with thinking enabled from ModelStudio Coding Plan",

"envKey": "BAILIAN_CODING_PLAN_API_KEY",

"generationConfig": {

"extra_body": {

"enable_thinking": true

}

}

},

{

"id": "glm-4.7",

"name": "glm-4.7 (Coding Plan)",

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

"description": "glm-4.7 with thinking enabled from ModelStudio Coding Plan",

"envKey": "BAILIAN_CODING_PLAN_API_KEY",

"generationConfig": {

"extra_body": {

"enable_thinking": true

}

}

},

{

"id": "kimi-k2.5",

"name": "kimi-k2.5 (Coding Plan)",

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

"description": "kimi-k2.5 with thinking enabled from ModelStudio Coding Plan",

"envKey": "BAILIAN_CODING_PLAN_API_KEY",

"generationConfig": {

"extra_body": {

"enable_thinking": true

}

}

}

]

},

"env": {

"BAILIAN_CODING_PLAN_API_KEY": "sk-xxxxxxxxxxxxx"

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "qwen3.6-plus"

}

}

Subscribe to the Coding Plan and get your API key at Alibaba Cloud ModelStudio (Beijing) or Alibaba Cloud ModelStudio (intl).

Multiple providers (OpenAI + Anthropic + Gemini)

{

"modelProviders": {

"openai": [

{

"id": "gpt-4o",

"name": "GPT-4o",

"envKey": "OPENAI_API_KEY",

"baseUrl": "https://api.openai.com/v1"

}

],

"anthropic": [

{

"id": "claude-sonnet-4-20250514",

"name": "Claude Sonnet 4",

"envKey": "ANTHROPIC_API_KEY"

}

],

"gemini": [

{

"id": "gemini-2.5-pro",

"name": "Gemini 2.5 Pro",

"envKey": "GEMINI_API_KEY"

}

]

},

"env": {

"OPENAI_API_KEY": "sk-xxxxxxxxxxxxx",

"ANTHROPIC_API_KEY": "sk-ant-xxxxxxxxxxxxx",

"GEMINI_API_KEY": "AIzaxxxxxxxxxxxxx"

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "gpt-4o"

}

}

Enable thinking mode (for supported models like qwen3.5-plus)

{

"modelProviders": {

"openai": [

{

"id": "qwen3.5-plus",

"name": "qwen3.5-plus (thinking)",

"envKey": "DASHSCOPE_API_KEY",

"baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",

"generationConfig": {

"extra_body": {

"enable_thinking": true

}

}

}

]

},

"env": {

"DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "qwen3.5-plus"

}

}

Tip: You can also set API keys via `export` in your shell or `.env` files, which take higher priority than `settings.json` → `env`. See the authentication guide for full details.

Security note: Never commit API keys to version control. The `~/.qwen/settings.json` file is in your home directory and should stay private.
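The priority rule in the tip above can be pictured as a lookup across three sources. This is a minimal sketch, not Qwen Code's actual resolution logic: `resolveApiKey` and `KeySources` are hypothetical names, and the relative order of shell `export` versus `.env` is an assumption, since the text only says that both rank above `settings.json` → `env`.

```typescript
type EnvSource = Record<string, string | undefined>;

// Hypothetical container for the three places a key may come from.
interface KeySources {
  shell: EnvSource; // variables set with `export`
  dotenv: EnvSource; // variables loaded from a .env file
  settingsEnv: EnvSource; // the "env" block of settings.json
}

function resolveApiKey(envKey: string, sources: KeySources): string | undefined {
  // Assumed order: shell first, then .env, then settings.json → env (lowest).
  for (const source of [sources.shell, sources.dotenv, sources.settingsEnv]) {
    const value = source[envKey];
    if (value !== undefined && value !== "") {
      return value;
    }
  }
  return undefined;
}

const fromShell = resolveApiKey("DASHSCOPE_API_KEY", {
  shell: { DASHSCOPE_API_KEY: "sk-from-shell" },
  dotenv: {},
  settingsEnv: { DASHSCOPE_API_KEY: "sk-from-settings" },
});
console.log(fromShell); // prints sk-from-shell
```

The practical consequence is that a key left in `settings.json` only takes effect when nothing with the same name is exported in the shell or defined in a `.env` file.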

Local Model Setup (Ollama / vLLM)

You can also run models locally — no API key or cloud account needed. This is not an authentication method; instead, configure your local model endpoint in ~/.qwen/settings.json using the modelProviders field.

Ollama setup

- Install Ollama from ollama.com

- Pull a model: `ollama pull qwen3:32b`

- Configure `~/.qwen/settings.json`:

{

"modelProviders": {

"openai": [

{

"id": "qwen3:32b",

"name": "Qwen3 32B (Ollama)",

"baseUrl": "http://localhost:11434/v1",

"description": "Qwen3 32B running locally via Ollama"

}

]

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "qwen3:32b"

}

}

vLLM setup

- Install vLLM: `pip install vllm`

- Start the server: `vllm serve Qwen/Qwen3-32B`

- Configure `~/.qwen/settings.json`:

{

"modelProviders": {

"openai": [

{

"id": "Qwen/Qwen3-32B",

"name": "Qwen3 32B (vLLM)",

"baseUrl": "http://localhost:8000/v1",

"description": "Qwen3 32B running locally via vLLM"

}

]

},

"security": {

"auth": {

"selectedType": "openai"

}

},

"model": {

"name": "Qwen/Qwen3-32B"

}

}

Usage

Qwen Code is an open-source terminal agent that you can use in four primary ways:

- Interactive mode (terminal UI)

- Headless mode (scripts, CI)

- IDE integration (VS Code, Zed, JetBrains IDEs)

- TypeScript SDK

Interactive mode

cd your-project/

qwen

Run qwen in your project folder to launch the interactive terminal UI. Use @ to reference local files (for example @src/main.ts).

Headless mode

cd your-project/

qwen -p "your question"

Use -p to run Qwen Code without the interactive UI—ideal for scripts, automation, and CI/CD. Learn more: Headless mode.
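For scripting, the headless invocation above can be wrapped in a small Node.js helper. This is a sketch under assumptions: `qwen` is on the `PATH`, the answer is written to stdout, and a non-zero exit status signals failure. `buildHeadlessArgs` and `askQwen` are hypothetical names, and only the `-p` flag shown above is used.

```typescript
import { spawnSync } from "node:child_process";

// Builds the argument list for a headless run; only the documented -p flag is used.
function buildHeadlessArgs(prompt: string): string[] {
  return ["-p", prompt];
}

// Runs `qwen -p <prompt>` in the given project directory and returns its stdout.
// Assumes the answer goes to stdout and that a non-zero status means failure.
function askQwen(prompt: string, projectDir: string): string {
  const result = spawnSync("qwen", buildHeadlessArgs(prompt), {
    cwd: projectDir,
    encoding: "utf8",
  });
  if (result.error) {
    // For example, the qwen binary was not found on PATH.
    throw result.error;
  }
  if (result.status !== 0) {
    throw new Error(`qwen exited with status ${result.status}: ${result.stderr}`);
  }
  return result.stdout.trim();
}

// Example call (not executed here):
// const answer = askQwen("What does this project do?", "your-project/");
```

Passing the prompt as a separate argument rather than through a shell string avoids quoting problems when the prompt contains spaces or quotes.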

IDE integration

Use Qwen Code inside your editor (VS Code, Zed, and JetBrains IDEs):

TypeScript SDK

Build on top of Qwen Code with the TypeScript SDK:

Commands & Shortcuts

Session Commands

- `/help` - Display available commands

- `/clear` - Clear conversation history

- `/compress` - Compress history to save tokens

- `/stats` - Show current session information

- `/bug` - Submit a bug report

- `/exit` or `/quit` - Exit Qwen Code

Keyboard Shortcuts

- `Ctrl+C` - Cancel current operation

- `Ctrl+D` - Exit (on empty line)

- `Up`/`Down` - Navigate command history

Learn more about Commands

Tip: In YOLO mode (`--yolo`), vision switching happens automatically without prompts when images are detected. Learn more about Approval Mode

Configuration

Qwen Code can be configured via settings.json, environment variables, and CLI flags.

| File | Scope | Description |
|---|---|---|
| `~/.qwen/settings.json` | User (global) | Applies to all your Qwen Code sessions. Recommended for `modelProviders` and `env`. |
| `.qwen/settings.json` | Project | Applies only when running Qwen Code in this project. Overrides user settings. |

The most commonly used top-level fields in settings.json:

| Field | Description |
|---|---|
| `modelProviders` | Define available models per protocol (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `env` | Fallback environment variables (e.g. API keys). Lower priority than shell `export` and `.env` files. |
| `security.auth.selectedType` | The protocol to use on startup (e.g. `openai`). |
| `model.name` | The default model to use when Qwen Code starts. |

See the Authentication section above for complete `settings.json` examples, and the settings reference for all available options.
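To make "project settings override user settings" concrete, here is a minimal sketch of one possible merge. It assumes a recursive merge in which nested objects are combined key by key and any other project value, including arrays, replaces the user value. The README does not specify the real merge semantics, so treat `mergeSettings` as a hypothetical illustration rather than Qwen Code's behavior.

```typescript
type Json = string | number | boolean | null | Json[] | { [key: string]: Json };
type JsonObject = { [key: string]: Json };

function isObject(value: Json | undefined): value is JsonObject {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// Project values win. Nested objects are merged key by key (an assumption),
// while arrays and scalars from the project file replace the user value.
function mergeSettings(user: JsonObject, project: JsonObject): JsonObject {
  const merged: JsonObject = { ...user };
  for (const [key, projectValue] of Object.entries(project)) {
    const userValue = merged[key];
    merged[key] =
      isObject(userValue) && isObject(projectValue)
        ? mergeSettings(userValue, projectValue)
        : projectValue;
  }
  return merged;
}

// Stand-in for ~/.qwen/settings.json
const userSettings: JsonObject = {
  model: { name: "qwen3.6-plus" },
  security: { auth: { selectedType: "openai" } },
};

// Stand-in for .qwen/settings.json in the project
const projectSettings: JsonObject = {
  model: { name: "qwen3.5-plus" },
};

const effective = mergeSettings(userSettings, projectSettings);
console.log(JSON.stringify(effective));
// prints {"model":{"name":"qwen3.5-plus"},"security":{"auth":{"selectedType":"openai"}}}
```

Under this reading, a project file only needs to list the fields it changes, and everything else falls through from the user file.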

Benchmark Results

Terminal-Bench Performance

| Agent | Model | Accuracy |

|---|---|---|

| Qwen Code | Qwen3-Coder-480A35 | 37.5% |

| Qwen Code | Qwen3-Coder-30BA3B | 31.3% |

Ecosystem

Looking for a graphical interface?

- AionUi: A modern GUI for command-line AI tools including Qwen Code

- Gemini CLI Desktop: A cross-platform desktop/web/mobile UI for Qwen Code

Troubleshooting

If you encounter issues, check the troubleshooting guide.

Common issues:

Qwen OAuth free tier was discontinued on 2026-04-15: Qwen OAuth is no longer available. Run `qwen`, then `/auth`, and switch to API Key or Coding Plan. See the Authentication section above for setup instructions.

To report a bug from within the CLI, run /bug and include a short title and repro steps.

Connect with Us

- Discord: https://discord.gg/RN7tqZCeDK

- Dingtalk: https://qr.dingtalk.com/action/joingroup?code=v1,k1,+FX6Gf/ZDlTahTIRi8AEQhIaBlqykA0j+eBKKdhLeAE=&_dt_no_comment=1&origin=1

Acknowledgments

This project is based on Google Gemini CLI. We acknowledge and appreciate the excellent work of the Gemini CLI team. Our main contribution focuses on parser-level adaptations to better support Qwen-Coder models.