|

* feat(cli): add session recap with /recap and auto-show on return

Users often open an old session days later and need to scroll through

pages to remember where they left off. This change adds a short

"where did I leave off" recap — a 1-3 sentence summary generated by

the fast model — so they can resume without re-reading the history.

Two triggers:

- /recap: manual slash command.

- Auto: when the terminal has been blurred for 5+ minutes and gets

focused again (uses the existing DECSET 1004 focus protocol via

useFocus). Gated on streamingState === Idle so it never interrupts

an active turn. Only fires once per blur cycle.

The recap is rendered in dim color with a chevron prefix, visually

distinct from assistant replies. A new `general.showSessionRecap`

setting controls the auto-trigger (default on). /recap works

independent of the setting.

Implementation notes:

- generateSessionRecap uses fastModel (falls back to main model),

tools: [], maxOutputTokens: 300, and a tight system prompt. It

strips tool calls / responses from history before sending — tool

responses can hold 10K+ tokens of file content that drown the recap

in irrelevant detail. The 30-message window respects turn boundaries

(slice never starts on a dangling model/tool response).

- Output is wrapped in <recap>...</recap> tags; the extractor returns

empty (skips render) if the tag is missing, preventing model

reasoning from leaking into the UI.

- All failures are silent (return null) and logged via a scoped

debugLogger; recap is best-effort and must never break main flow.

- /recap refuses to run while a turn is pending.
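The windowing and tag-extraction notes above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the actual implementation: the `Message` shape and the names `recentWindow` and `extractRecap` are hypothetical, the real code operates on `@google/genai` history objects, and a single regex stands in for whatever extractor the code actually uses.

```typescript
// Hypothetical, simplified message shape for illustration only.
type Role = "user" | "model";

interface Message {
  role: Role;
  text: string;
}

// Window size taken from the commit message.
const RECAP_WINDOW = 30;

// Keep at most `size` trailing messages, then move the start forward to the
// next user message so the slice never opens on a dangling model response.
function recentWindow(history: Message[], size: number = RECAP_WINDOW): Message[] {
  const from = Math.max(0, history.length - size);
  const start = history.findIndex((m, i) => i >= from && m.role === "user");
  return start === -1 ? [] : history.slice(start);
}

// Return the trimmed text inside <recap>...</recap>, or "" when the tag is
// missing, so untagged model output (e.g. reasoning) is never rendered.
function extractRecap(raw: string): string {
  const match = /<recap>([\s\S]*?)<\/recap>/.exec(raw);
  return match?.[1]?.trim() ?? "";
}
```

Returning an empty string rather than the raw output is the conservative choice here: a missing recap is harmless, while leaked reasoning in the UI is not.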

* fix(cli): abort in-flight recap when showSessionRecap is disabled

If the user disables showSessionRecap while an auto-recap LLM call is

already in flight, the previous code returned early without aborting.

The pending .then would still pass its idle/abort guards and append the

recap, producing an unwanted message after the user has opted out.

Abort the controller and clear it eagerly so the resolved promise no

longer adds to history.
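The abort-and-clear pattern described here might look like the sketch below. It is only an illustration: `AutoRecap`, `Generate`, and `OnRecap` are hypothetical names, and a callback stands in for the promise-based LLM call so the control flow is easy to follow.

```typescript
// Callback invoked when the (hypothetical) recap generation finishes.
type OnRecap = (recap: string | null) => void;

// Hypothetical generator: starts an LLM call and reports the result later.
type Generate = (signal: AbortSignal, done: OnRecap) => void;

class AutoRecap {
  readonly history: string[] = [];
  private controller: AbortController | null = null;
  private readonly generate: Generate;

  constructor(generate: Generate) {
    this.generate = generate;
  }

  // Start a recap; the completion handler is bound to this call's controller.
  trigger(): void {
    this.controller?.abort(); // supersede any earlier in-flight call
    const controller = new AbortController();
    this.controller = controller;
    this.generate(controller.signal, (recap) => {
      // A late completion from an aborted call must not touch history.
      if (controller.signal.aborted || recap === null) return;
      this.history.push(recap);
      if (this.controller === controller) this.controller = null;
    });
  }

  // Called when showSessionRecap is turned off: abort and clear eagerly so a
  // pending completion can no longer append to history.
  disable(): void {
    this.controller?.abort();
    this.controller = null;
  }
}
```

The key point is that the completion handler checks the signal of the controller it was started with, so aborting in `disable()` is enough to neutralize a call that resolves afterwards.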

* fix(cli): gate /recap and auto-recap on streaming idle state

Two related issues from review:

1. /recap was only refusing when ui.pendingItem was set, but a normal

model reply runs with streamingState === Responding and a null

pendingItem. Invoking /recap mid-stream would generate a recap from

a partial conversation and insert it between the user prompt and

the assistant reply.

2. useAwaySummary cleared blurredAtRef before checking isIdle, so if

focus returned during a still-streaming turn (after a >5min blur)

the recap was permanently dropped — there was no later retry when

the turn became idle, because isIdle was not in the effect deps.

Fixes:

- Expose isIdleRef on CommandContext.ui (mirrors btwAbortControllerRef

pattern). Plumb it from AppContainer through useSlashCommandProcessor.

- recapCommand now refuses when isIdleRef.current is false OR

pendingItem is non-null.

- useAwaySummary preserves blurredAtRef on the !isIdle bail and adds

isIdle to the effect deps, so the trigger re-evaluates when the

current turn finishes.

- Brief blurs (< AWAY_THRESHOLD_MS) still reset blurredAtRef.

Also seeds isIdleRef in nonInteractiveUi and mockCommandContext so the

new field has a sensible default outside the interactive UI.
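The trigger logic after this fix can be modelled as a small pure function. The sketch below is an interpretation of the commit message only: `stepAwaySummary`, `AwayState`, and `AwayInput` are hypothetical names, and the real hook is a React effect that keeps the blur timestamp in a ref rather than returning new state.

```typescript
// Threshold from the commit message: 5 minutes of blur.
const AWAY_THRESHOLD_MS = 5 * 60 * 1000;

interface AwayState {
  blurredAt: number | null; // timestamp of the current blur, if any
}

interface AwayInput {
  isFocused: boolean;
  isIdle: boolean;
  now: number;
}

interface AwayStep {
  next: AwayState;
  fireRecap: boolean;
}

function stepAwaySummary(state: AwayState, input: AwayInput): AwayStep {
  if (!input.isFocused) {
    // Remember when the blur started; keep the earliest timestamp.
    return { next: { blurredAt: state.blurredAt ?? input.now }, fireRecap: false };
  }
  if (state.blurredAt === null) {
    // Focused with no recorded blur (first render, or after a reset).
    return { next: state, fireRecap: false };
  }
  if (input.now - state.blurredAt < AWAY_THRESHOLD_MS) {
    // Brief blur: reset without showing a recap.
    return { next: { blurredAt: null }, fireRecap: false };
  }
  if (!input.isIdle) {
    // Long blur but a turn is still streaming: keep blurredAt and wait.
    return { next: state, fireRecap: false };
  }
  // Long blur and idle: fire once and clear so it cannot fire again.
  return { next: { blurredAt: null }, fireRecap: true };
}
```

Re-running the function when `isIdle` changes corresponds to adding `isIdle` to the effect deps: the preserved `blurredAt` lets the recap fire as soon as the turn finishes.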

* docs: document /recap command, showSessionRecap setting, and design

- User docs: add /recap to the Session and Project Management table in

features/commands.md and a dedicated subsection covering manual use,

the auto-trigger, the dim-color rendering, and the fast-model tip.

- User docs: add general.showSessionRecap row to the configuration

settings reference.

- Design doc: docs/design/session-recap/session-recap-design.md covers

motivation, the two trigger paths, the per-file architecture, prompt

design with the <recap> tag and three-tier extractor, history

filtering rationale (functionResponse can be 10K+ tokens), the

useAwaySummary state machine, the isIdleRef gating for /recap, model

selection, observability, and out-of-scope items.

* fix(core): exclude thought parts from session recap context

filterToDialog kept any non-empty text part, but @google/genai's Part

type also marks model reasoning with part.thought / part.thoughtSignature.

That hidden chain-of-thought was being fed to the recap LLM and could

get summarized as if it were user-visible dialogue.

Drop parts where either flag is set. Update the design doc's

History filtering section to call this out alongside the existing

tool-call/response rationale.
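A filter along these lines could look like the sketch below. The `Part` and `Content` interfaces are trimmed-down local stand-ins for the `@google/genai` types, and the body of `filterToDialog` is an assumption about how such a filter might be written, not the project's code.

```typescript
// Local stand-ins for the relevant fields of @google/genai's Part / Content.
interface Part {
  text?: string;
  thought?: boolean;
  thoughtSignature?: string;
  functionCall?: unknown;
  functionResponse?: unknown;
}

interface Content {
  role: string;
  parts: Part[];
}

// A part counts as user-visible dialogue only if it is plain, non-empty text
// that is neither model reasoning nor tool traffic.
function isDialogPart(part: Part): boolean {
  if (part.thought || part.thoughtSignature) return false;
  if (part.functionCall !== undefined || part.functionResponse !== undefined) return false;
  return typeof part.text === "string" && part.text.trim().length > 0;
}

// Keep only dialogue parts and drop messages that end up empty.
function filterToDialog(history: Content[]): Content[] {
  return history
    .map((content) => ({ role: content.role, parts: content.parts.filter(isDialogPart) }))
    .filter((content) => content.parts.length > 0);
}
```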

* docs(session-recap): correct debug-logging guidance, fill in state machine, sharpen UX wording

Audit of the session recap docs against the implementation found three

issues worth fixing:

- Design doc claimed debug logs were enabled via a QWEN_CODE_DEBUG_LOGGING

env var. That var does not exist; debug logs are written to

~/.qwen/debug/<sessionId>.txt by default, gated by QWEN_DEBUG_LOG_FILE.

Replace with the accurate path + opt-out behavior, and tell the reader

to grep for the [SESSION_RECAP] tag.

- Design doc's useAwaySummary state machine table was missing the

isFocused && blurredAtRef === null path (taken on first render and

right after a brief-blur reset). Add the row.

- User doc's "Refuses to run ... failures are silent" line conflated the

inline-error refusal with silent generation failures, and "(when the

conversation is idle)" used internal jargon. Split the two cases and

spell out what "idle" means, including the wait-then-fire behavior

when focus returns mid-turn.

* docs(session-recap): correctly describe /recap vs auto-trigger failure modes

The previous wording said "Generation/network failures are silent — the

recap simply does not appear", but recapCommand returns a user-facing

info message ("Not enough conversation context for a recap yet.") in

exactly that path, and also returns inline messages for the

config-not-loaded and busy-turn guards.

Only the auto-trigger path is truly silent (it just skips addItem when

generateSessionRecap returns null). Split the two paths in the doc so

the manual command's "always responds with something" behavior is

distinguished from the auto-trigger's no-op-on-failure behavior.

* docs(session-recap): align prompt-rules section with the actual prompt

Two doc-vs-code mismatches in the design doc's "System Prompt" section,

caught with the same lens as yiliang114's failure-mode review:

- The bullet list claimed RECAP_SYSTEM_PROMPT forbids "speculating about

user intent" and "addressing the user as 'you'". Those rules existed in

an early draft but were dropped when the <recap> tag rules were added;

the current prompt has no such restrictions. Replace with the actual

rules and add a "one-to-one with RECAP_SYSTEM_PROMPT" marker so future

edits stay in sync.

- The doc said systemInstruction "overrides" the main agent prompt. True

for the agent prompt portion, but GeminiClient.generateContent

internally calls getCustomSystemPrompt which appends user memory

(QWEN.md / auto memory) as a suffix. Spell that out: the final

system prompt is recap prompt + user memory, which is actually

useful project context for the recap.

* docs(session-recap): translate design doc to English

The repo convention for docs/design is English (7 of 8 existing files;

auto-memory/memory-system.md is the only Chinese one). The first version

of this design doc followed the auto-memory example, which turned out

to be the wrong sample.

Translate to English while preserving the existing structure, the

state-machine table, the prompt-vs-doc 1:1 alignment, the

QWEN_DEBUG_LOG_FILE description, and the failure-mode notes added in

prior commits.

* fix(cli): drop empty info return from /recap interactive success path

The interactive success path inserts the away_recap history item

directly via ui.addItem and then returned `{type: 'message',

messageType: 'info', content: ''}`. The slash-command processor's

'message' case unconditionally calls addMessage, which adds another

HistoryItemInfo with empty text. The empty info renders as nothing

(StatusMessage early-returns null), but it still bloats the in-memory

history list and shows up in /export and saved sessions.

Return void on the interactive success path and on the abort path so

the processor's `if (result)` check skips the message-handler branch

entirely. Widen the action's return type to `void | SlashCommandActionReturn`

to match (same shape as btwCommand).

|


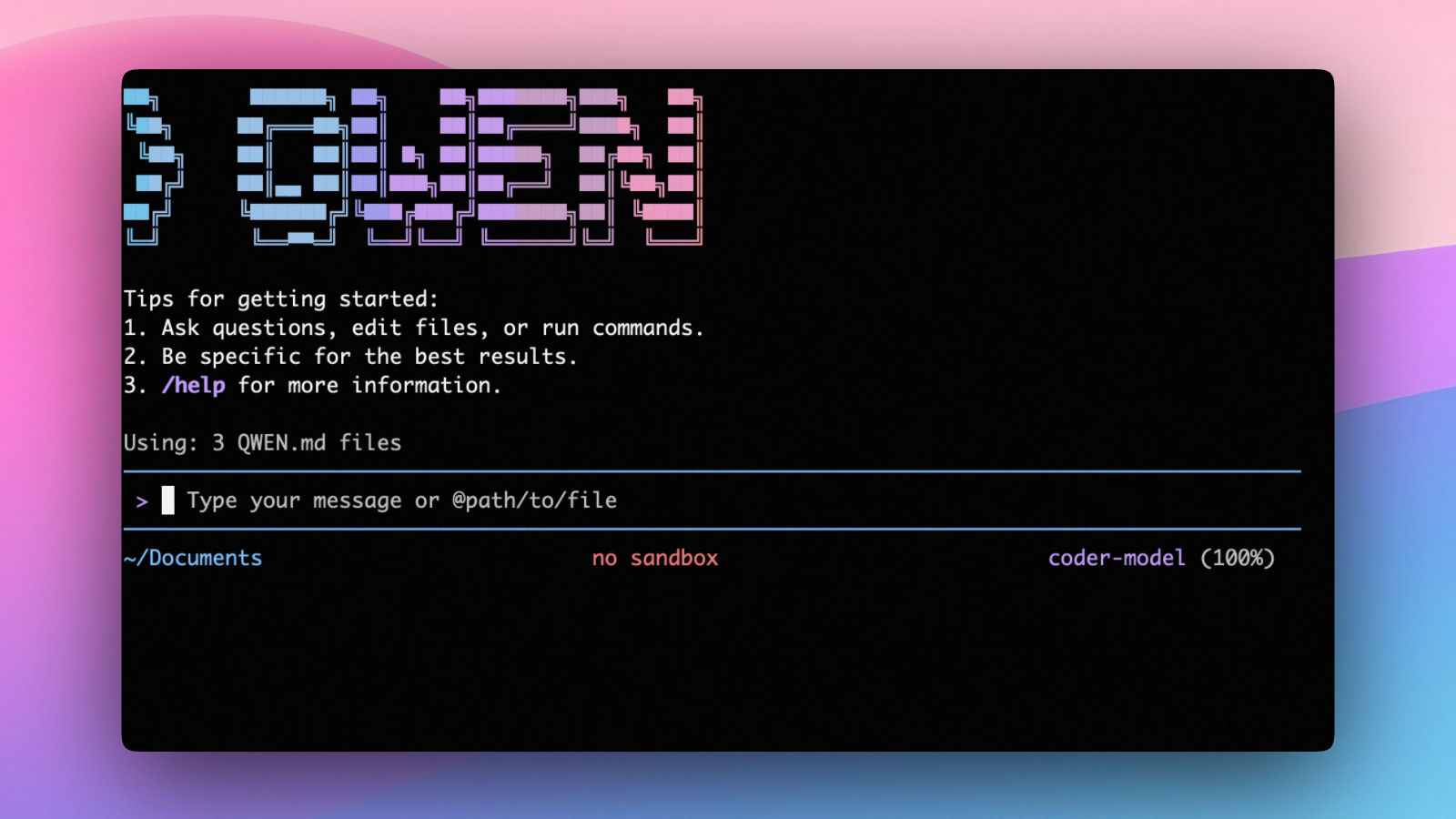

An open-source AI agent that lives in your terminal.

中文 | Deutsch | français | 日本語 | Русский | Português (Brasil)

🎉 News

- 2026-04-15: Qwen OAuth free tier has been discontinued. To continue using Qwen Code, switch to Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key. Run `qwen auth` to configure.

- 2026-04-13: Qwen OAuth free tier policy update: daily quota adjusted to 100 requests/day (from 1,000).

- 2026-04-02: Qwen3.6-Plus is now live! Get an API key from Alibaba Cloud ModelStudio to access it through the OpenAI-compatible API.

- 2026-02-16: Qwen3.5-Plus is now live!

Why Qwen Code?

Qwen Code is an open-source AI agent for the terminal, optimized for Qwen series models. It helps you understand large codebases, automate tedious work, and ship faster.

- Multi-protocol, flexible providers: use OpenAI / Anthropic / Gemini-compatible APIs, Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key.

- Open-source, co-evolving: both the framework and the Qwen3-Coder model are open-source—and they ship and evolve together.

- Agentic workflow, feature-rich: rich built-in tools (Skills, SubAgents) for a full agentic workflow and a Claude Code-like experience.

- Terminal-first, IDE-friendly: built for developers who live in the command line, with optional integration for VS Code, Zed, and JetBrains IDEs.

Installation

Quick Install (Recommended)

Linux / macOS

```bash
bash -c "$(curl -fsSL https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.sh)"
```

Windows (Run as Administrator)

Works in both Command Prompt and PowerShell:

```powershell
powershell -Command "Invoke-WebRequest 'https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.bat' -OutFile (Join-Path $env:TEMP 'install-qwen.bat'); & (Join-Path $env:TEMP 'install-qwen.bat')"
```

Note: It's recommended to restart your terminal after installation to ensure environment variables take effect.

Manual Installation

Prerequisites

Make sure you have Node.js 20 or later installed. Download it from nodejs.org.

NPM

```bash
npm install -g @qwen-code/qwen-code@latest
```

Homebrew (macOS, Linux)

```bash
brew install qwen-code
```

Quick Start

```bash
# Start Qwen Code (interactive)
qwen

# Then, in the session:
/help
/auth
```

On first use, you'll be prompted to sign in. You can run /auth anytime to switch authentication methods.

Example prompts:

What does this project do?

Explain the codebase structure.

Help me refactor this function.

Generate unit tests for this module.

Click to watch a demo video

🦞 Use Qwen Code for Coding Tasks in Claw

Copy the prompt below and paste it into your agent:

Use Qwen Code as a coding agent. First, fetch and read this skill reference:

https://raw.githubusercontent.com/QwenLM/qwen-code/refs/heads/main/.qwen/skills/qwen-code-claw/SKILL.md

Then follow the instructions inside to install, authenticate, and use Qwen Code via acpx for all coding tasks.

Authentication

Qwen Code supports the following authentication methods:

- API Key (recommended): use an API key from Alibaba Cloud Model Studio (Beijing / intl) or any supported provider (OpenAI, Anthropic, Google GenAI, and other compatible endpoints).

- Coding Plan: subscribe to the Alibaba Cloud Coding Plan (Beijing / intl) for a fixed monthly fee with higher quotas.

⚠️ Qwen OAuth was discontinued on April 15, 2026. If you were previously using Qwen OAuth, please switch to one of the methods above. Run `qwen` and then `/auth` to reconfigure.

API Key (recommended)

Use an API key to connect to Alibaba Cloud Model Studio or any supported provider. Supports multiple protocols:

- OpenAI-compatible: Alibaba Cloud ModelStudio, ModelScope, OpenAI, OpenRouter, and other OpenAI-compatible providers

- Anthropic: Claude models

- Google GenAI: Gemini models

The recommended way to configure models and providers is by editing ~/.qwen/settings.json (create it if it doesn't exist). This file lets you define all available models, API keys, and default settings in one place.

Quick Setup in 3 Steps

Step 1: Create or edit ~/.qwen/settings.json

Here is a complete example:

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "description": "Qwen3.6-Plus via DashScope",
        "envKey": "DASHSCOPE_API_KEY"
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}
```

Step 2: Understand each field

| Field | What it does |
|---|---|
| `modelProviders` | Declares which models are available and how to connect to them. Keys like `openai`, `anthropic`, `gemini` represent the API protocol. |
| `modelProviders[].id` | The model ID sent to the API (e.g. `qwen3.6-plus`, `gpt-4o`). |
| `modelProviders[].envKey` | The name of the environment variable that holds your API key. |
| `modelProviders[].baseUrl` | The API endpoint URL (required for non-default endpoints). |
| `env` | A fallback place to store API keys (lowest priority; prefer `.env` files or `export` for sensitive keys). |
| `security.auth.selectedType` | The protocol to use on startup (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `model.name` | The default model to use when Qwen Code starts. |

Step 3: Start Qwen Code — your configuration takes effect automatically:

qwen

Use the /model command at any time to switch between all configured models.
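For readers who generate or validate `settings.json` from scripts, the fields described above can be expressed as TypeScript types. This is a hedged sketch: the interfaces cover only the fields listed in this README, `findDefaultModel` is a hypothetical helper, and matching `model.name` against an entry's `id` is an assumption inferred from the examples rather than documented behavior.

```typescript
// Hypothetical types covering only the fields described in this README.
type Protocol = "openai" | "anthropic" | "gemini" | "vertex-ai";

interface ModelEntry {
  id: string;
  name?: string;
  baseUrl?: string;
  description?: string;
  envKey?: string;
}

interface Settings {
  modelProviders?: Partial<Record<Protocol, ModelEntry[]>>;
  env?: Record<string, string>;
  security?: { auth?: { selectedType?: Protocol } };
  model?: { name?: string };
}

// Find the entry for the default model under the selected protocol.
// Assumption: model.name is compared with the entry's id, as in the examples.
function findDefaultModel(settings: Settings): ModelEntry | undefined {
  const protocol = settings.security?.auth?.selectedType;
  const wanted = settings.model?.name;
  if (!protocol || !wanted) return undefined;
  return settings.modelProviders?.[protocol]?.find((entry) => entry.id === wanted);
}

const example: Settings = {
  modelProviders: {
    openai: [
      {
        id: "qwen3.6-plus",
        name: "qwen3.6-plus",
        baseUrl: "https://dashscope.aliyuncs.com/compatible-mode/v1",
        envKey: "DASHSCOPE_API_KEY",
      },
    ],
  },
  security: { auth: { selectedType: "openai" } },
  model: { name: "qwen3.6-plus" },
};

const defaultModel = findDefaultModel(example);
```

A check like this can catch a `model.name` that does not correspond to any configured entry before Qwen Code is started.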

More Examples

Coding Plan (Alibaba Cloud ModelStudio) — fixed monthly fee, higher quotas

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.6-plus from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY"
      },
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.5-plus with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "glm-4.7",
        "name": "glm-4.7 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "glm-4.7 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "kimi-k2.5",
        "name": "kimi-k2.5 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "kimi-k2.5 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "BAILIAN_CODING_PLAN_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}
```

Subscribe to the Coding Plan and get your API key at Alibaba Cloud ModelStudio (Beijing) or Alibaba Cloud ModelStudio (intl).

Multiple providers (OpenAI + Anthropic + Gemini)

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "gpt-4o",
        "name": "GPT-4o",
        "envKey": "OPENAI_API_KEY",
        "baseUrl": "https://api.openai.com/v1"
      }
    ],
    "anthropic": [
      {
        "id": "claude-sonnet-4-20250514",
        "name": "Claude Sonnet 4",
        "envKey": "ANTHROPIC_API_KEY"
      }
    ],
    "gemini": [
      {
        "id": "gemini-2.5-pro",
        "name": "Gemini 2.5 Pro",
        "envKey": "GEMINI_API_KEY"
      }
    ]
  },
  "env": {
    "OPENAI_API_KEY": "sk-xxxxxxxxxxxxx",
    "ANTHROPIC_API_KEY": "sk-ant-xxxxxxxxxxxxx",
    "GEMINI_API_KEY": "AIzaxxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "gpt-4o"
  }
}
```

Enable thinking mode (for supported models like qwen3.5-plus)

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (thinking)",
        "envKey": "DASHSCOPE_API_KEY",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.5-plus"
  }
}
```

Tip: You can also set API keys via `export` in your shell or `.env` files, which take higher priority than `settings.json` → `env`. See the authentication guide for full details.

Security note: Never commit API keys to version control. The `~/.qwen/settings.json` file is in your home directory and should stay private.
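The key priority mentioned in the tip above can be pictured with a small lookup function. This is an illustrative sketch only: `resolveApiKey` is a hypothetical helper, and it treats shell `export` and `.env` values as a single tier (whatever is already in the process environment), since their relative order is not stated here.

```typescript
type Env = Record<string, string | undefined>;

// Look up an API key by its envKey name. Values already present in the
// process environment (shell export or a loaded .env file) win over the
// `env` block of settings.json, which is only a fallback.
function resolveApiKey(envKey: string, processEnv: Env, settingsEnv: Env = {}): string | undefined {
  return processEnv[envKey] ?? settingsEnv[envKey];
}

// The exported value shadows the one stored in settings.json.
const fromShell = resolveApiKey(
  "DASHSCOPE_API_KEY",
  { DASHSCOPE_API_KEY: "sk-from-shell" },
  { DASHSCOPE_API_KEY: "sk-from-settings" },
);
```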

Local Model Setup (Ollama / vLLM)

You can also run models locally — no API key or cloud account needed. This is not an authentication method; instead, configure your local model endpoint in ~/.qwen/settings.json using the modelProviders field.

Ollama setup

- Install Ollama from ollama.com

- Pull a model: `ollama pull qwen3:32b`

- Configure `~/.qwen/settings.json`:

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3:32b",
        "name": "Qwen3 32B (Ollama)",
        "baseUrl": "http://localhost:11434/v1",
        "description": "Qwen3 32B running locally via Ollama"
      }
    ]
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3:32b"
  }
}
```

vLLM setup

- Install vLLM: `pip install vllm`

- Start the server: `vllm serve Qwen/Qwen3-32B`

- Configure `~/.qwen/settings.json`:

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "Qwen/Qwen3-32B",
        "name": "Qwen3 32B (vLLM)",
        "baseUrl": "http://localhost:8000/v1",
        "description": "Qwen3 32B running locally via vLLM"
      }
    ]
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "Qwen/Qwen3-32B"
  }
}
```

Usage

Qwen Code is an open-source terminal agent that you can use in four primary ways:

- Interactive mode (terminal UI)

- Headless mode (scripts, CI)

- IDE integration (VS Code, Zed, and JetBrains IDEs)

- TypeScript SDK

Interactive mode

```bash
cd your-project/
qwen
```

Run qwen in your project folder to launch the interactive terminal UI. Use @ to reference local files (for example @src/main.ts).

Headless mode

```bash
cd your-project/
qwen -p "your question"
```

Use -p to run Qwen Code without the interactive UI—ideal for scripts, automation, and CI/CD. Learn more: Headless mode.
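When calling headless mode from a Node.js script rather than a shell, a thin wrapper like the one below may be convenient. It is a sketch under assumptions: `headlessArgs` and `runHeadless` are hypothetical helpers, only the documented `-p` flag is used, and it assumes that `qwen` is on `PATH`, writes its answer to stdout, and exits with a non-zero status on failure.

```typescript
import { spawnSync } from "node:child_process";

// Build the argument list for a headless run using only the -p flag.
function headlessArgs(prompt: string): string[] {
  return ["-p", prompt];
}

// Run `qwen -p <prompt>` in the given project directory and return stdout.
// Assumes the `qwen` binary is on PATH; throws if it cannot be started or
// exits with a non-zero status.
function runHeadless(prompt: string, cwd: string): string {
  const result = spawnSync("qwen", headlessArgs(prompt), { cwd, encoding: "utf8" });
  if (result.error) throw result.error;
  if (result.status !== 0) {
    throw new Error(`qwen exited with status ${result.status}: ${result.stderr}`);
  }
  return result.stdout;
}
```

A CI step could then call `runHeadless("Summarize the changes in this branch", process.cwd())` and post the returned text wherever it is needed.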

IDE integration

Use Qwen Code inside your editor (VS Code, Zed, and JetBrains IDEs).

TypeScript SDK

Build on top of Qwen Code with the TypeScript SDK.

Commands & Shortcuts

Session Commands

- `/help` - Display available commands

- `/clear` - Clear conversation history

- `/compress` - Compress history to save tokens

- `/stats` - Show current session information

- `/bug` - Submit a bug report

- `/exit` or `/quit` - Exit Qwen Code

Keyboard Shortcuts

- `Ctrl+C` - Cancel current operation

- `Ctrl+D` - Exit (on empty line)

- `Up/Down` - Navigate command history

Learn more about Commands

Tip: In YOLO mode (`--yolo`), vision switching happens automatically without prompts when images are detected. Learn more about Approval Mode

Configuration

Qwen Code can be configured via settings.json, environment variables, and CLI flags.

| File | Scope | Description |
|---|---|---|
| `~/.qwen/settings.json` | User (global) | Applies to all your Qwen Code sessions. Recommended for `modelProviders` and `env`. |
| `.qwen/settings.json` | Project | Applies only when running Qwen Code in this project. Overrides user settings. |
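One way to picture "project overrides user" is a merge in which project values win. The sketch below is purely illustrative: `mergeSettings` is a hypothetical helper, and the merge strategy (nested objects merged key by key, arrays and scalars replaced wholesale) is an assumption, not a description of how Qwen Code actually combines the two files.

```typescript
type Json = string | number | boolean | null | Json[] | { [key: string]: Json };
type JsonObject = { [key: string]: Json };

function isObject(value: Json | undefined): value is JsonObject {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// Merge project settings over user settings. Assumption: nested objects are
// merged key by key, while arrays and scalars are replaced wholesale.
function mergeSettings(user: JsonObject, project: JsonObject): JsonObject {
  const merged: JsonObject = { ...user };
  for (const [key, value] of Object.entries(project)) {
    const base = merged[key];
    merged[key] = isObject(base) && isObject(value) ? mergeSettings(base, value) : value;
  }
  return merged;
}

// The project switches the default model but inherits the user's env block.
const effective = mergeSettings(
  { model: { name: "qwen3.6-plus" }, env: { DASHSCOPE_API_KEY: "sk-xxxxxxxxxxxxx" } },
  { model: { name: "gpt-4o" } },
);
```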

The most commonly used top-level fields in settings.json:

| Field | Description |
|---|---|
| `modelProviders` | Define available models per protocol (`openai`, `anthropic`, `gemini`, `vertex-ai`). |
| `env` | Fallback environment variables (e.g. API keys). Lower priority than shell `export` and `.env` files. |
| `security.auth.selectedType` | The protocol to use on startup (e.g. `openai`). |
| `model.name` | The default model to use when Qwen Code starts. |

See the Authentication section above for complete `settings.json` examples, and the settings reference for all available options.

Benchmark Results

Terminal-Bench Performance

| Agent | Model | Accuracy |

|---|---|---|

| Qwen Code | Qwen3-Coder-480B-A35B | 37.5% |
| Qwen Code | Qwen3-Coder-30B-A3B | 31.3% |

Ecosystem

Looking for a graphical interface?

- AionUi: A modern GUI for command-line AI tools including Qwen Code

- Gemini CLI Desktop: A cross-platform desktop/web/mobile UI for Qwen Code

Troubleshooting

If you encounter issues, check the troubleshooting guide.

Common issues:

- Qwen OAuth free tier was discontinued on 2026-04-15: Qwen OAuth is no longer available. Run `qwen` → `/auth` and switch to API Key or Coding Plan. See the Authentication section above for setup instructions.

To report a bug from within the CLI, run /bug and include a short title and repro steps.

Connect with Us

- Discord: https://discord.gg/RN7tqZCeDK

- Dingtalk: https://qr.dingtalk.com/action/joingroup?code=v1,k1,+FX6Gf/ZDlTahTIRi8AEQhIaBlqykA0j+eBKKdhLeAE=&_dt_no_comment=1&origin=1

Acknowledgments

This project is based on Google Gemini CLI. We acknowledge and appreciate the excellent work of the Gemini CLI team. Our main contribution focuses on parser-level adaptations to better support Qwen-Coder models.