

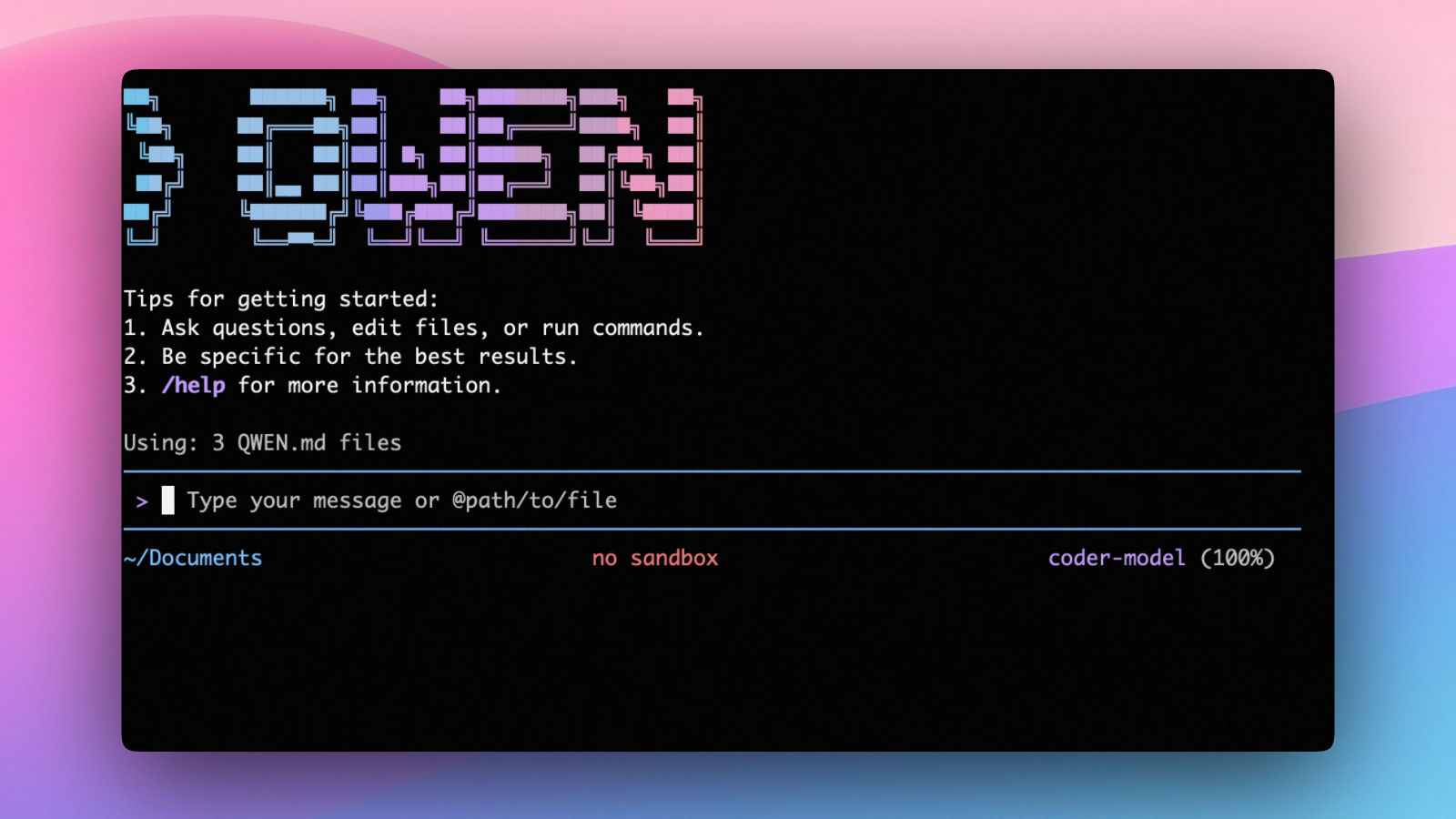

An open-source AI agent that lives in your terminal.

中文 | Deutsch | français | 日本語 | Русский | Português (Brasil)

🎉 News

- 2026-04-15: Qwen OAuth free tier has been discontinued. To continue using Qwen Code, switch to Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key. Run qwen auth to configure.

- 2026-04-13: Qwen OAuth free tier policy update: daily quota adjusted to 100 requests/day (from 1,000).

- 2026-04-02: Qwen3.6-Plus is now live! Get an API key from Alibaba Cloud ModelStudio to access it through the OpenAI-compatible API.

- 2026-02-16: Qwen3.5-Plus is now live!

Why Qwen Code?

Qwen Code is an open-source AI agent for the terminal, optimized for Qwen series models. It helps you understand large codebases, automate tedious work, and ship faster.

- Multi-protocol, flexible providers: use OpenAI / Anthropic / Gemini-compatible APIs, Alibaba Cloud Coding Plan, OpenRouter, Fireworks AI, or bring your own API key.

- Open-source, co-evolving: both the framework and the Qwen3-Coder model are open-source—and they ship and evolve together.

- Agentic workflow, feature-rich: rich built-in tools (Skills, SubAgents) for a full agentic workflow and a Claude Code-like experience.

- Terminal-first, IDE-friendly: built for developers who live in the command line, with optional integration for VS Code, Zed, and JetBrains IDEs.

Installation

Quick Install (Recommended)

Linux / macOS

bash -c "$(curl -fsSL https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.sh)"

Windows (Run as Administrator)

Works in both Command Prompt and PowerShell:

powershell -Command "Invoke-WebRequest 'https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.bat' -OutFile (Join-Path $env:TEMP 'install-qwen.bat'); & (Join-Path $env:TEMP 'install-qwen.bat')"

Note: It's recommended to restart your terminal after installation to ensure environment variables take effect.

Manual Installation

Prerequisites

Make sure you have Node.js 20 or later installed. Download it from nodejs.org.

NPM

npm install -g @qwen-code/qwen-code@latest

Homebrew (macOS, Linux)

brew install qwen-code

Quick Start

# Start Qwen Code (interactive)

qwen

# Then, in the session:

/help

/auth

On first use, you'll be prompted to sign in. You can run /auth anytime to switch authentication methods.

Example prompts:

What does this project do?

Explain the codebase structure.

Help me refactor this function.

Generate unit tests for this module.

Click to watch a demo video

🦞 Use Qwen Code for Coding Tasks in Claw

Copy the prompt below and paste it into your agent:

Use Qwen Code as a coding agent. First, fetch and read this skill reference:

https://raw.githubusercontent.com/QwenLM/qwen-code/refs/heads/main/.qwen/skills/qwen-code-claw/SKILL.md

Then follow the instructions inside to install, authenticate, and use Qwen Code via acpx for all coding tasks.

Authentication

Qwen Code supports the following authentication methods:

- API Key (recommended): use an API key from Alibaba Cloud Model Studio (Beijing / intl) or any supported provider (OpenAI, Anthropic, Google GenAI, and other compatible endpoints).

- Coding Plan: subscribe to the Alibaba Cloud Coding Plan (Beijing / intl) for a fixed monthly fee with higher quotas.

⚠️ Qwen OAuth was discontinued on April 15, 2026. If you were previously using Qwen OAuth, please switch to one of the methods above. Run qwen and then /auth to reconfigure.

API Key (recommended)

Use an API key to connect to Alibaba Cloud Model Studio or any supported provider. Supports multiple protocols:

- OpenAI-compatible: Alibaba Cloud ModelStudio, ModelScope, OpenAI, OpenRouter, and other OpenAI-compatible providers

- Anthropic: Claude models

- Google GenAI: Gemini models

The recommended way to configure models and providers is by editing ~/.qwen/settings.json (create it if it doesn't exist). This file lets you define all available models, API keys, and default settings in one place.

Quick Setup in 3 Steps

Step 1: Create or edit ~/.qwen/settings.json

Here is a complete example:

{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "description": "qwen3.6-plus via Dashscope",
        "envKey": "DASHSCOPE_API_KEY"
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}

Step 2: Understand each field

| Field | What it does |
|---|---|
| modelProviders | Declares which models are available and how to connect to them. Keys like openai, anthropic, gemini represent the API protocol. |
| modelProviders[].id | The model ID sent to the API (e.g. qwen3.6-plus, gpt-4o). |
| modelProviders[].envKey | The name of the environment variable that holds your API key. |
| modelProviders[].baseUrl | The API endpoint URL (required for non-default endpoints). |
| env | A fallback place to store API keys (lowest priority; prefer .env files or export for sensitive keys). |
| security.auth.selectedType | The protocol to use on startup (openai, anthropic, gemini, vertex-ai). |
| model.name | The default model to use when Qwen Code starts. |

Step 3: Start Qwen Code — your configuration takes effect automatically:

qwen

Use the /model command at any time to switch between all configured models.
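The fields described in Step 2 have to agree with each other, so a quick consistency check can catch typos before you start the CLI. The following is a minimal sketch, not part of Qwen Code: check_settings is a hypothetical helper, and it assumes that model.name must match an id listed under the protocol chosen in security.auth.selectedType, as it does in the example above. It only looks at the shell environment and the env block, so keys kept in .env files are reported as missing.

```python
import os


def check_settings(settings, environ=None):
    """Return a list of problems found; an empty list means the checks passed."""
    environ = os.environ if environ is None else environ
    problems = []
    providers = settings.get("modelProviders", {})
    protocol = settings.get("security", {}).get("auth", {}).get("selectedType")
    default_model = settings.get("model", {}).get("name")
    models = providers.get(protocol, [])

    if not models:
        problems.append(f"no models declared for protocol {protocol!r}")
    elif default_model not in [m.get("id") for m in models]:
        # Assumption: model.name refers to an id under the selected protocol.
        problems.append(f"model.name {default_model!r} matches no id under {protocol!r}")

    # An API key may live in the shell environment or in the settings "env" block.
    fallback_env = settings.get("env", {})
    for model in models:
        key = model.get("envKey")
        if key and key not in environ and key not in fallback_env:
            problems.append(f"{key} is not set for model {model.get('id')!r}")
    return problems


example = {
    "modelProviders": {"openai": [{"id": "qwen3.6-plus", "envKey": "DASHSCOPE_API_KEY"}]},
    "env": {"DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"},
    "security": {"auth": {"selectedType": "openai"}},
    "model": {"name": "qwen3.6-plus"},
}
for problem in check_settings(example, environ={}):
    print("warning:", problem)
```

To check a real file, load ~/.qwen/settings.json with json.load and pass the resulting dict to check_settings.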

More Examples

Coding Plan (Alibaba Cloud ModelStudio) — fixed monthly fee, higher quotas

{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.6-plus",
        "name": "qwen3.6-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.6-plus from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY"
      },
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3.5-plus with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "glm-4.7",
        "name": "glm-4.7 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "glm-4.7 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      },
      {
        "id": "kimi-k2.5",
        "name": "kimi-k2.5 (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "kimi-k2.5 with thinking enabled from ModelStudio Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "BAILIAN_CODING_PLAN_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.6-plus"
  }
}

Subscribe to the Coding Plan and get your API key at Alibaba Cloud ModelStudio (Beijing) or Alibaba Cloud ModelStudio (intl).

Multiple providers (OpenAI + Anthropic + Gemini)

{
  "modelProviders": {
    "openai": [
      {
        "id": "gpt-4o",
        "name": "GPT-4o",
        "envKey": "OPENAI_API_KEY",
        "baseUrl": "https://api.openai.com/v1"
      }
    ],
    "anthropic": [
      {
        "id": "claude-sonnet-4-20250514",
        "name": "Claude Sonnet 4",
        "envKey": "ANTHROPIC_API_KEY"
      }
    ],
    "gemini": [
      {
        "id": "gemini-2.5-pro",
        "name": "Gemini 2.5 Pro",
        "envKey": "GEMINI_API_KEY"
      }
    ]
  },
  "env": {
    "OPENAI_API_KEY": "sk-xxxxxxxxxxxxx",
    "ANTHROPIC_API_KEY": "sk-ant-xxxxxxxxxxxxx",
    "GEMINI_API_KEY": "AIzaxxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "gpt-4o"
  }
}
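When several protocols are configured, it can help to see every declared model in one flat list. The sketch below is illustrative only: list_models is a hypothetical helper that walks the modelProviders structure from the multi-provider example above, and it makes no claim about how the /model command builds its own list.

```python
def list_models(settings):
    """Flatten modelProviders into (protocol, id, display name) tuples."""
    rows = []
    for protocol, models in settings.get("modelProviders", {}).items():
        for model in models:
            # Fall back to the id when an entry has no display name.
            rows.append((protocol, model["id"], model.get("name", model["id"])))
    return rows


settings = {
    "modelProviders": {
        "openai": [{"id": "gpt-4o", "name": "GPT-4o"}],
        "anthropic": [{"id": "claude-sonnet-4-20250514", "name": "Claude Sonnet 4"}],
        "gemini": [{"id": "gemini-2.5-pro", "name": "Gemini 2.5 Pro"}],
    }
}
for protocol, model_id, name in list_models(settings):
    print(f"{protocol:10} {model_id:28} {name}")
```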

Enable thinking mode (for supported models like qwen3.5-plus)

{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3.5-plus",
        "name": "qwen3.5-plus (thinking)",
        "envKey": "DASHSCOPE_API_KEY",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "generationConfig": {
          "extra_body": {
            "enable_thinking": true
          }
        }
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3.5-plus"
  }
}

Tip: You can also set API keys via export in your shell or .env files, which take higher priority than settings.json → env. See the authentication guide for full details.

Security note: Never commit API keys to version control. The ~/.qwen/settings.json file is in your home directory and should stay private.
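The priority rule in the tip above can be pictured as a lookup through layers. This is a rough sketch under stated assumptions, not the actual resolution logic: resolve_api_key and parse_dotenv are hypothetical helpers, and the relative order of shell variables and .env files is assumed here (shell first), since the text only says that both outrank the env block in settings.json.

```python
import os


def parse_dotenv(text):
    """Parse simple KEY=VALUE lines; blank lines and # comments are skipped."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def resolve_api_key(env_key, settings, dotenv=None, environ=None):
    """Return (value, source) for env_key, or (None, None) if it is not set anywhere."""
    environ = os.environ if environ is None else environ
    layers = [
        ("shell", environ),                          # assumed to be checked first
        (".env", dotenv or {}),                      # assumed to come second
        ("settings.json", settings.get("env", {})),  # lowest priority, per the tip
    ]
    for source, values in layers:
        if values.get(env_key):
            return values[env_key], source
    return None, None


settings = {"env": {"DASHSCOPE_API_KEY": "sk-from-settings"}}
value, source = resolve_api_key("DASHSCOPE_API_KEY", settings, environ={})
print(source)
```

Returning the source alongside the value makes it easier to see which layer supplied a key when a request is rejected.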

Local Model Setup (Ollama / vLLM)

You can also run models locally — no API key or cloud account needed. This is not an authentication method; instead, configure your local model endpoint in ~/.qwen/settings.json using the modelProviders field.

Ollama setup

- Install Ollama from ollama.com

- Pull a model: ollama pull qwen3:32b

- Configure ~/.qwen/settings.json:

{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3:32b",
        "name": "Qwen3 32B (Ollama)",
        "baseUrl": "http://localhost:11434/v1",
        "description": "Qwen3 32B running locally via Ollama"
      }
    ]
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3:32b"
  }
}

vLLM setup

- Install vLLM: pip install vllm

- Start the server: vllm serve Qwen/Qwen3-32B

- Configure ~/.qwen/settings.json:

{
  "modelProviders": {
    "openai": [
      {
        "id": "Qwen/Qwen3-32B",
        "name": "Qwen3 32B (vLLM)",
        "baseUrl": "http://localhost:8000/v1",
        "description": "Qwen3 32B running locally via vLLM"
      }
    ]
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "Qwen/Qwen3-32B"
  }
}
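The Ollama and vLLM configurations differ only in the model id, the display name, and the base URL, so they can be generated from one template. The sketch below assumes exactly that shape: local_settings is a hypothetical helper, and it leaves out the description field, which the multi-provider example above also omits.

```python
import json


def local_settings(model_id, display_name, base_url):
    """Build a settings dict for one local OpenAI-compatible endpoint."""
    return {
        "modelProviders": {
            "openai": [
                {"id": model_id, "name": display_name, "baseUrl": base_url}
            ]
        },
        "security": {"auth": {"selectedType": "openai"}},
        "model": {"name": model_id},
    }


# Values taken from the Ollama example above.
ollama = local_settings("qwen3:32b", "Qwen3 32B (Ollama)", "http://localhost:11434/v1")
print(json.dumps(ollama, indent=2))
```

No envKey is included because, as noted above, a local endpoint needs no API key.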

Usage

Qwen Code is an open-source terminal agent that you can use in four primary ways:

- Interactive mode (terminal UI)

- Headless mode (scripts, CI)

- IDE integration (VS Code, Zed, JetBrains IDEs)

- TypeScript SDK

Interactive mode

cd your-project/

qwen

Run qwen in your project folder to launch the interactive terminal UI. Use @ to reference local files (for example @src/main.ts).

Headless mode

cd your-project/

qwen -p "your question"

Use -p to run Qwen Code without the interactive UI—ideal for scripts, automation, and CI/CD. Learn more: Headless mode.
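For automation, the headless invocation can be wrapped in a small script. This is a sketch under assumptions rather than a documented integration: it uses only the -p flag shown above, and it assumes the answer is written to stdout and that a failed run exits with a non-zero code. The helper names headless_command and ask are hypothetical.

```python
import subprocess


def headless_command(prompt):
    """Build the argument list for a single headless run."""
    return ["qwen", "-p", prompt]


def ask(prompt, cwd=".", timeout=300):
    """Run Qwen Code once in headless mode and return whatever it wrote to stdout."""
    result = subprocess.run(
        headless_command(prompt),
        cwd=cwd,              # run inside the project folder
        capture_output=True,  # collect stdout and stderr instead of printing them
        text=True,            # decode output as str
        timeout=timeout,      # raise TimeoutExpired if the run takes too long
        check=True,           # raise CalledProcessError on a non-zero exit code
    )
    return result.stdout
```

Passing the arguments as a list avoids shell quoting problems when the prompt contains quotes or spaces. A CI step could then call ask("Explain the codebase structure.", cwd="your-project/").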

IDE integration

Use Qwen Code inside your editor (VS Code, Zed, and JetBrains IDEs).

TypeScript SDK

Build on top of Qwen Code with the TypeScript SDK.

Commands & Shortcuts

Session Commands

- /help - Display available commands

- /clear - Clear conversation history

- /compress - Compress history to save tokens

- /stats - Show current session information

- /bug - Submit a bug report

- /exit or /quit - Exit Qwen Code

Keyboard Shortcuts

- Ctrl+C - Cancel current operation

- Ctrl+D - Exit (on empty line)

- Up/Down - Navigate command history

Learn more about Commands

Tip: In YOLO mode (--yolo), vision switching happens automatically without prompts when images are detected. Learn more about Approval Mode.

Configuration

Qwen Code can be configured via settings.json, environment variables, and CLI flags.

| File | Scope | Description |
|---|---|---|
| ~/.qwen/settings.json | User (global) | Applies to all your Qwen Code sessions. Recommended for modelProviders and env. |
| .qwen/settings.json | Project | Applies only when running Qwen Code in this project. Overrides user settings. |

The most commonly used top-level fields in settings.json:

| Field | Description |
|---|---|
| modelProviders | Define available models per protocol (openai, anthropic, gemini, vertex-ai). |
| env | Fallback environment variables (e.g. API keys). Lower priority than shell export and .env files. |
| security.auth.selectedType | The protocol to use on startup (e.g. openai). |
| model.name | The default model to use when Qwen Code starts. |

See the Authentication section above for complete settings.json examples, and the settings reference for all available options.
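The scope rule above (project settings override user settings) can be pictured as a layered merge. The sketch below is only an illustration: merge_settings is a hypothetical helper, and it assumes a recursive merge in which nested objects are combined key by key while scalars and lists are replaced. The text does not say whether the real merge is deep or shallow, so check the settings reference before relying on this behaviour.

```python
def merge_settings(user, project):
    """Return a new dict where project values override user values, key by key."""
    merged = dict(user)
    for key, value in project.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            # Assumption: nested objects are merged rather than replaced.
            merged[key] = merge_settings(merged[key], value)
        else:
            merged[key] = value  # scalars and lists are replaced wholesale
    return merged


user = {
    "model": {"name": "qwen3.6-plus"},
    "security": {"auth": {"selectedType": "openai"}},
}
project = {"model": {"name": "gpt-4o"}}
effective = merge_settings(user, project)
print(effective["model"]["name"])
```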

Benchmark Results

Terminal-Bench Performance

| Agent | Model | Accuracy |
|---|---|---|
| Qwen Code | Qwen3-Coder-480B-A35B | 37.5% |
| Qwen Code | Qwen3-Coder-30B-A3B | 31.3% |

Ecosystem

Looking for a graphical interface?

- AionUi: a modern GUI for command-line AI tools including Qwen Code

- Gemini CLI Desktop: a cross-platform desktop/web/mobile UI for Qwen Code

Troubleshooting

If you encounter issues, check the troubleshooting guide.

Common issues:

- Qwen OAuth free tier was discontinued on 2026-04-15: Qwen OAuth is no longer available. Run qwen → /auth and switch to API Key or Coding Plan. See the Authentication section above for setup instructions.

To report a bug from within the CLI, run /bug and include a short title and repro steps.

Connect with Us

- Discord: https://discord.gg/RN7tqZCeDK

- Dingtalk: https://qr.dingtalk.com/action/joingroup?code=v1,k1,+FX6Gf/ZDlTahTIRi8AEQhIaBlqykA0j+eBKKdhLeAE=&_dt_no_comment=1&origin=1

Acknowledgments

This project is based on Google Gemini CLI. We acknowledge and appreciate the excellent work of the Gemini CLI team. Our main contribution focuses on parser-level adaptations to better support Qwen-Coder models.