Mirror of https://github.com/QwenLM/qwen-code.git (synced 2026-04-28 11:41:04 +00:00)

Merge branch 'main' into feat/mcp-tui

Commit 1542a2bdc4

114 changed files with 6943 additions and 1324 deletions

27

.github/workflows/release-sdk.yml

vendored

@@ -348,15 +348,32 @@ jobs:

|

|||

CLI_SOURCE_DESC="CLI built from source (same branch/ref as SDK)"

|

||||

fi

|

||||

|

||||

# Create release notes with CLI version info

|

||||

NOTES="## Bundled CLI Version\n\nThis SDK release bundles CLI version: \`${CLI_VERSION}\`\n\nSource: ${CLI_SOURCE_DESC}\n\n---\n\n"

|

||||

# Create release notes file

|

||||

NOTES_FILE=$(mktemp)

|

||||

{

|

||||

echo "## Bundled CLI Version"

|

||||

echo ""

|

||||

echo "This SDK release bundles CLI version: ${CLI_VERSION}"

|

||||

echo ""

|

||||

echo "Source: ${CLI_SOURCE_DESC}"

|

||||

echo ""

|

||||

echo "---"

|

||||

echo ""

|

||||

} > "${NOTES_FILE}"

|

||||

|

||||

# Get previous release notes if available

|

||||

PREVIOUS_NOTES=$(gh release view "sdk-typescript-${PREVIOUS_RELEASE_TAG}" --json body -q '.body' 2>/dev/null || echo 'See commit history for changes.')

|

||||

printf '%s\n' "${PREVIOUS_NOTES}" >> "${NOTES_FILE}"

|

||||

|

||||

# Create GitHub release

|

||||

gh release create "sdk-typescript-${RELEASE_TAG}" \

|

||||

--target "${TARGET}" \

|

||||

--title "SDK TypeScript Release ${RELEASE_TAG}" \

|

||||

--notes-start-tag "sdk-typescript-${PREVIOUS_RELEASE_TAG}" \

|

||||

--notes "${NOTES}$(gh release view "sdk-typescript-${PREVIOUS_RELEASE_TAG}" --json body -q '.body' 2>/dev/null || echo 'See commit history for changes.')" \

|

||||

"${PRERELEASE_FLAG}"

|

||||

--notes-file "${NOTES_FILE}" \

|

||||

${PRERELEASE_FLAG}

|

||||

|

||||

# Cleanup

|

||||

rm -f "${NOTES_FILE}"

|

||||

|

||||

- name: 'Create PR to merge release branch into main'

|

||||

if: |-

|

||||

|

|

|

|||

11

.github/workflows/release.yml

vendored

@@ -206,13 +206,22 @@ jobs:

|

|||

RELEASE_BRANCH: '${{ steps.release_branch.outputs.BRANCH_NAME }}'

|

||||

RELEASE_TAG: '${{ steps.version.outputs.RELEASE_TAG }}'

|

||||

PREVIOUS_RELEASE_TAG: '${{ steps.version.outputs.PREVIOUS_RELEASE_TAG }}'

|

||||

IS_NIGHTLY: '${{ steps.vars.outputs.is_nightly }}'

|

||||

IS_PREVIEW: '${{ steps.vars.outputs.is_preview }}'

|

||||

run: |-

|

||||

# Set prerelease flag for nightly and preview releases

|

||||

PRERELEASE_FLAG=""

|

||||

if [[ "${IS_NIGHTLY}" == "true" || "${IS_PREVIEW}" == "true" ]]; then

|

||||

PRERELEASE_FLAG="--prerelease"

|

||||

fi

|

||||

|

||||

gh release create "${RELEASE_TAG}" \

|

||||

dist/cli.js \

|

||||

--target "$RELEASE_BRANCH" \

|

||||

--title "Release ${RELEASE_TAG}" \

|

||||

--notes-start-tag "$PREVIOUS_RELEASE_TAG" \

|

||||

--generate-notes

|

||||

--generate-notes \

|

||||

${PRERELEASE_FLAG}

|

||||

|

||||

- name: 'Create Issue on Failure'

|

||||

if: |-

|

||||

|

|

|

|||

5

.gitignore

vendored

@@ -51,7 +51,10 @@ packages/core/src/generated/

|

|||

packages/vscode-ide-companion/*.vsix

|

||||

|

||||

# Qwen Code Configs

|

||||

|

||||

.qwen/

|

||||

!.qwen/commands/

|

||||

!.qwen/skills/

|

||||

logs/

|

||||

# GHA credentials

|

||||

gha-creds-*.json

|

||||

|

|

@@ -70,6 +73,8 @@ __pycache__/

|

|||

integration-tests/concurrent-runner/output/

|

||||

integration-tests/concurrent-runner/task-*

|

||||

|

||||

integration-tests/terminal-capture/scenarios/screenshots/

|

||||

|

||||

# storybook

|

||||

*storybook.log

|

||||

storybook-static

|

||||

|

|

|

|||

104

.qwen/skills/pr-review/SKILL.md

Normal file

@@ -0,0 +1,104 @@

|

|||

---

|

||||

name: pr-review

|

||||

description: Reviews pull requests with code analysis and terminal smoke testing. Applies when examining code changes, running CLI tests, or when 'PR review', 'code review', 'terminal screenshot', 'visual test' is mentioned.

|

||||

---

|

||||

|

||||

# PR Review — Code Review + Terminal Smoke Testing

|

||||

|

||||

## Workflow

|

||||

|

||||

### 1. Fetch PR Information

|

||||

|

||||

```bash

|

||||

# List open PRs

|

||||

gh pr list

|

||||

|

||||

# View PR details

|

||||

gh pr view <number>

|

||||

|

||||

# Get diff

|

||||

gh pr diff <number>

|

||||

```

|

||||

|

||||

### 2. Code Review

|

||||

|

||||

Analyze changes across the following dimensions:

|

||||

|

||||

- **Correctness** — Is the logic correct? Are edge cases handled?

|

||||

- **Code Style** — Does it follow existing code style and conventions?

|

||||

- **Performance** — Are there any performance concerns?

|

||||

- **Test Coverage** — Are there corresponding tests for the changes?

|

||||

- **Security** — Does it introduce any security risks?

|

||||

|

||||

Output format:

|

||||

|

||||

- 🔴 **Critical** — Must fix

|

||||

- 🟡 **Suggestion** — Suggested improvement

|

||||

- 🟢 **Nice to have** — Optional optimization

|

||||

|

||||

### 3. Terminal Smoke Testing (Run for Every PR)

|

||||

|

||||

**Run terminal-capture for every PR review**, not just UI changes. Reasons:

|

||||

|

||||

- **Smoke Test** — Verify the CLI starts correctly and responds to user input, ensuring the PR didn't break anything

|

||||

- **Visual Verification** — If there are UI changes, screenshots provide the most intuitive review evidence

|

||||

- **Documentation** — Attach screenshots to the PR comments so reviewers can see the results without building locally

|

||||

|

||||

```bash

|

||||

# Checkout branch & build

|

||||

gh pr checkout <number>

|

||||

npm run build

|

||||

```

|

||||

|

||||

#### Scenario Selection Strategy

|

||||

|

||||

Choose appropriate scenarios based on the PR's scope of changes:

|

||||

|

||||

| PR Type | Recommended Scenarios | Description |

|

||||

| ------------------------------------- | ------------------------------------------------------------ | --------------------------------- |

|

||||

| **Any PR** (default) | smoke test: send `hi`, verify startup & response | Minimal-cost smoke validation |

|

||||

| Slash command changes | Corresponding command scenarios (`/about`, `/context`, etc.) | Verify command output correctness |

|

||||

| Ink component / layout changes | Multiple scenarios + full-flow long screenshot | Verify visual effects |

|

||||

| Large refactors / dependency upgrades | Run `scenarios/all.ts` fully | Full regression |

|

||||

|

||||

#### Running Screenshots

|

||||

|

||||

```bash

|

||||

# Write scenario config to integration-tests/terminal-capture/scenarios/

|

||||

# See terminal-capture skill for FlowStep API reference

|

||||

|

||||

# Single scenario

|

||||

npx tsx integration-tests/terminal-capture/run.ts integration-tests/terminal-capture/scenarios/<scenario>.ts

|

||||

|

||||

|

||||

# Check output in screenshots/ directory

|

||||

```

|

||||

|

||||

#### Minimal Smoke Test Example

|

||||

|

||||

There is no need to write a new scenario file; the existing `about.ts` is enough. It sends "hi" and then runs `/about`, covering startup, input handling, and command response:

|

||||

|

||||

```bash

|

||||

npx tsx integration-tests/terminal-capture/run.ts integration-tests/terminal-capture/scenarios/about.ts

|

||||

```

|

||||

|

||||

### 4. Upload Screenshots to PR

|

||||

|

||||

Use the Playwright MCP browser to upload screenshots to the PR comments. The images are hosted at `github.com/user-attachments/assets/`, so the upload has no side effects on the repository:

|

||||

|

||||

1. Open the PR page with Playwright: `https://github.com/<repo>/pull/<number>`

|

||||

2. Click the comment text box and enter a comment title (e.g., `## 📷 Terminal Smoke Test Screenshots`)

|

||||

3. Click the "Paste, drop, or click to add files" button to trigger the file picker

|

||||

4. Upload screenshot PNG files via `browser_file_upload` (multiple files can be uploaded one at a time)

|

||||

5. Wait for GitHub to process (about 2-3 seconds) — image links auto-insert into the comment box

|

||||

6. Click the "Comment" button to submit

|

||||

|

||||

> **Prerequisite**: Playwright MCP must be configured with `--user-data-dir` so that the GitHub login session persists. On first use, log in to GitHub manually in the Playwright browser.

|

||||

|

||||

### 5. Submit Review

|

||||

|

||||

Submit code review comments via `gh pr review`:

|

||||

|

||||

```bash

|

||||

gh pr review <number> --comment --body "review content"

|

||||

```

|

||||

197

.qwen/skills/terminal-capture/SKILL.md

Normal file

@@ -0,0 +1,197 @@

|

|||

---

|

||||

name: terminal-capture

|

||||

description: Automates terminal UI screenshot testing for CLI commands. Applies when reviewing PRs that affect CLI output, testing slash commands (/about, /context, /auth, /export), generating visual documentation, or when 'terminal screenshot', 'CLI test', 'visual test', or 'terminal-capture' is mentioned.

|

||||

---

|

||||

|

||||

# Terminal Capture — CLI Terminal Screenshot Automation

|

||||

|

||||

Drives terminal interactions and captures screenshots from a TypeScript configuration file. It is used for visual verification during PR reviews.

|

||||

|

||||

## Prerequisites

|

||||

|

||||

Ensure the following dependencies are installed before running:

|

||||

|

||||

```bash

|

||||

npm install # Install project dependencies (including node-pty, xterm, playwright, etc.)

|

||||

npx playwright install chromium # Install Playwright browser

|

||||

```

|

||||

|

||||

## Architecture

|

||||

|

||||

```

|

||||

node-pty (pseudo-terminal) → ANSI byte stream → xterm.js (Playwright headless) → Screenshot

|

||||

```

|

||||

|

||||

Core files:

|

||||

|

||||

| File | Purpose |

|

||||

| -------------------------------------------------------- | ------------------------------------------------------------------------ |

|

||||

| `integration-tests/terminal-capture/terminal-capture.ts` | Low-level engine (PTY + xterm.js + Playwright) |

|

||||

| `integration-tests/terminal-capture/scenario-runner.ts` | Scenario executor (parses config, drives interactions, auto-screenshots) |

|

||||

| `integration-tests/terminal-capture/run.ts` | CLI entry point (batch run scenarios) |

|

||||

| `integration-tests/terminal-capture/scenarios/*.ts` | Scenario configuration files |

|

||||

|

||||

## Quick Start

|

||||

|

||||

### 1. Write Scenario Configuration

|

||||

|

||||

Create a `.ts` file under `integration-tests/terminal-capture/scenarios/`:

|

||||

|

||||

```typescript

|

||||

import type { ScenarioConfig } from '../scenario-runner.js';

|

||||

|

||||

export default {

|

||||

name: '/about',

|

||||

spawn: ['node', 'dist/cli.js', '--yolo'],

|

||||

terminal: { title: 'qwen-code', cwd: '../../..' }, // Relative to this config file's location

|

||||

flow: [

|

||||

{ type: 'Hi, can you help me understand this codebase?' },

|

||||

{ type: '/about' },

|

||||

],

|

||||

} satisfies ScenarioConfig;

|

||||

```

|

||||

|

||||

### 2. Run

|

||||

|

||||

```bash

|

||||

# Single scenario

|

||||

npx tsx integration-tests/terminal-capture/run.ts integration-tests/terminal-capture/scenarios/about.ts

|

||||

|

||||

# Batch (entire directory)

|

||||

npx tsx integration-tests/terminal-capture/run.ts integration-tests/terminal-capture/scenarios/

|

||||

```

|

||||

|

||||

### 3. Output

|

||||

|

||||

Screenshots are saved to `integration-tests/terminal-capture/scenarios/screenshots/{name}/`:

|

||||

|

||||

| File | Description |

|

||||

| --------------- | ---------------------------------- |

|

||||

| `01-01.png` | Step 1 input state |

|

||||

| `01-02.png` | Step 1 execution result |

|

||||

| `02-01.png` | Step 2 input state |

|

||||

| `02-02.png` | Step 2 execution result |

|

||||

| `full-flow.png` | Final state full-length screenshot |
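The naming scheme in the table can be sketched as a small helper. This is only an illustration: `screenshotName` is a hypothetical function that is not taken from the terminal-capture source, and the two-digit zero padding is an assumption read off the file names above.

```typescript
// Hypothetical helper (not part of terminal-capture): builds names such as
// `01-02.png` from 1-based step and shot indices, assuming two-digit padding.
function screenshotName(step: number, shot: number): string {
  const pad = (n: number): string => String(n).padStart(2, '0');
  return `${pad(step)}-${pad(shot)}.png`;
}

console.log(screenshotName(1, 1)); // step 1, input state
console.log(screenshotName(2, 2)); // step 2, execution result
```

Under this assumption, shot `01` is the input state and shot `02` is the execution result of a step.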

|

||||

|

||||

## FlowStep API

|

||||

|

||||

Each flow step can contain the following fields:

|

||||

|

||||

### `type: string` — Input Text

|

||||

|

||||

Automatic behavior: Input text → Screenshot (01) → Press Enter → Wait for output to stabilize → Screenshot (02).

|

||||

|

||||

```typescript

|

||||

{ type: 'Hello' } // Plain text

{ type: '/about' } // Slash command (auto-completion handled automatically)

|

||||

```

|

||||

|

||||

**Special rule**: If the next step is `key`, do not auto-press Enter (hand over control to the key sequence).

|

||||

|

||||

### `key: string | string[]` — Send Key Press

|

||||

|

||||

Used for menu selection, Tab completion, and other interactions. Does not auto-press Enter or auto-screenshot.

|

||||

|

||||

Supported key names: `ArrowUp`, `ArrowDown`, `ArrowLeft`, `ArrowRight`, `Enter`, `Tab`, `Escape`, `Backspace`, `Space`, `Home`, `End`, `PageUp`, `PageDown`, `Delete`

|

||||

|

||||

```typescript

|

||||

{ key: 'ArrowDown' } // Single key

{ key: ['ArrowDown', 'ArrowDown', 'Enter'] } // Multiple keys

|

||||

```

|

||||

|

||||

Auto-screenshot is triggered after the key sequence ends (when the next step is not a `key`).
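The interplay between `type` and `key` steps may be easier to follow as code. The sketch below expands a flow into an ordered list of actions according to the rules described so far. `Step` and `planActions` are hypothetical names, the action strings are invented for illustration, and the behaviour of a `type` step that is followed by a `key` step (input screenshot kept, Enter and result screenshot skipped) is an assumption rather than a statement about the real scenario runner.

```typescript
// Simplified step shape for this sketch; the real FlowStep type may differ.
type Step =
  | { type: string }
  | { key: string | string[] }
  | { capture: string }
  | { captureFull: string };

// Expands a flow into the ordered actions implied by the rules above.
function planActions(flow: Step[]): string[] {
  const actions: string[] = [];
  flow.forEach((step, i) => {
    const next = flow[i + 1];
    const nextIsKey = next !== undefined && 'key' in next;
    if ('type' in step) {
      actions.push(`input:${step.type}`, 'screenshot');
      // Assumption: when a key step follows, Enter and the result
      // screenshot are skipped and the key sequence takes over.
      if (!nextIsKey) actions.push('key:Enter', 'wait', 'screenshot');
    } else if ('key' in step) {
      const keys = Array.isArray(step.key) ? step.key : [step.key];
      keys.forEach((k) => actions.push(`key:${k}`));
      // The automatic screenshot fires only once the key sequence has ended.
      if (!nextIsKey) actions.push('wait', 'screenshot');
    } else if ('capture' in step) {
      actions.push(`capture:${step.capture}`);
    } else {
      actions.push(`captureFull:${step.captureFull}`);
    }
  });
  return actions;
}

console.log(planActions([{ type: '/export' }, { key: 'Tab' }, { key: 'Enter' }]));
```

In this sketch a run of consecutive `key` steps behaves like a single sequence, so only the last one triggers the screenshot.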

|

||||

|

||||

### `capture` / `captureFull` — Explicit Screenshot

|

||||

|

||||

Use as a standalone step, or override automatic naming:

|

||||

|

||||

```typescript

|

||||

{ capture: 'initial.png' } // Screenshot current viewport only

{ captureFull: 'all-output.png' } // Screenshot full scrollback buffer

|

||||

```

|

||||

|

||||

## Scenario Examples

|

||||

|

||||

### Basic: Input + Command

|

||||

|

||||

```typescript

|

||||

flow: [{ type: 'explain this project' }, { type: '/about' }];

|

||||

```

|

||||

|

||||

### Secondary Menu Selection (/auth)

|

||||

|

||||

```typescript

|

||||

flow: [

|

||||

{ type: '/auth' },

|

||||

{ key: 'ArrowDown' }, // Select API Key option

|

||||

{ key: 'Enter' }, // Confirm

|

||||

{ type: 'sk-xxx' }, // Input API key

|

||||

];

|

||||

```

|

||||

|

||||

### Tab Completion Selection (/export)

|

||||

|

||||

```typescript

|

||||

flow: [

|

||||

{ type: 'Tell me about yourself' },

|

||||

{ type: '/export' }, // No auto-Enter (next step is key)

|

||||

{ key: 'Tab' }, // Open the format selection menu

|

||||

{ key: 'ArrowDown' }, // Select format

|

||||

{ key: 'Enter' }, // Confirm → auto-screenshot

|

||||

];

|

||||

```

|

||||

|

||||

### Array Batch (Multiple Scenarios in One File)

|

||||

|

||||

```typescript

|

||||

export default [

|

||||

{ name: '/about', spawn: [...], flow: [...] },

|

||||

{ name: '/context', spawn: [...], flow: [...] },

|

||||

] satisfies ScenarioConfig[];

|

||||

```

|

||||

|

||||

## Integration with PR Review

|

||||

|

||||

This tool is commonly used for visual verification during PR reviews. For the complete code review + screenshot workflow, see the [pr-review](../pr-review/SKILL.md) skill.

|

||||

|

||||

## Troubleshooting

|

||||

|

||||

| Issue | Cause | Solution |

|

||||

| ------------------------------------ | ------------------------------------- | ---------------------------------------------------- |

|

||||

| Playwright error `browser not found` | Browser not installed | `npx playwright install chromium` |

|

||||

| Blank screenshot | Process starts slowly or build failed | Ensure `npm run build` succeeds, check spawn command |

|

||||

| PTY-related errors | node-pty native module not compiled | `npm rebuild node-pty` |

|

||||

| Unstable screenshot output | Terminal output not fully rendered | Check if the scenario needs additional wait time |

|

||||

|

||||

## Full ScenarioConfig Type

|

||||

|

||||

```typescript

|

||||

interface ScenarioConfig {

|

||||

name: string; // Scenario name (also used as screenshot subdirectory name)

|

||||

spawn: string[]; // Launch command ["node", "dist/cli.js", "--yolo"]

|

||||

flow: FlowStep[]; // Interaction steps

|

||||

terminal?: {

|

||||

// Terminal configuration (all optional)

|

||||

cols?: number; // Number of columns, default 100

|

||||

rows?: number; // Number of rows, default 28

|

||||

theme?: string; // Theme: dracula|one-dark|github-dark|monokai|night-owl

|

||||

chrome?: boolean; // macOS window decorations, default true

|

||||

title?: string; // Window title, default "Terminal"

|

||||

fontSize?: number; // Font size

|

||||

cwd?: string; // Working directory (relative to config file)

|

||||

};

|

||||

outputDir?: string; // Screenshot output directory (relative to config file)

|

||||

}

|

||||

```
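As a rough illustration of the defaults documented in the comments above, the following sketch fills in missing terminal options. `TerminalOptions` and `withTerminalDefaults` are hypothetical names introduced here, and only the fields with a stated default (`cols`, `rows`, `chrome`, `title`) are covered; how the real runner applies its defaults is not shown in this document.

```typescript
// Subset of the terminal options listed above (only those with a stated default).
interface TerminalOptions {
  cols?: number;
  rows?: number;
  chrome?: boolean;
  title?: string;
}

// Hypothetical helper: applies the documented defaults to any missing field.
function withTerminalDefaults(t: TerminalOptions = {}): Required<TerminalOptions> {
  return {
    cols: t.cols ?? 100,
    rows: t.rows ?? 28,
    chrome: t.chrome ?? true,
    title: t.title ?? 'Terminal',
  };
}

console.log(withTerminalDefaults({ title: 'qwen-code' }));
```

Using `??` rather than `||` keeps an explicit `chrome: false` intact instead of replacing it with the default.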

|

||||

13

.vscode/launch.json

vendored

@@ -127,6 +127,19 @@

|

|||

"cwd": "${workspaceFolder}",

|

||||

"console": "integratedTerminal",

|

||||

"env": {}

|

||||

},

|

||||

{

|

||||

"type": "node",

|

||||

"request": "launch",

|

||||

"name": "Dev Launch CLI",

|

||||

"runtimeExecutable": "npm",

|

||||

"runtimeArgs": ["run", "dev"],

|

||||

"skipFiles": ["<node_internals>/**"],

|

||||

"cwd": "${workspaceFolder}",

|

||||

"console": "integratedTerminal",

|

||||

"env": {

|

||||

"GEMINI_SANDBOX": "false"

|

||||

}

|

||||

}

|

||||

],

|

||||

"inputs": [

|

||||

|

|

|

|||

245

README.md

@@ -18,6 +18,8 @@

|

|||

|

||||

</div>

|

||||

|

||||

> 🎉 **News (2026-02-16)**: Qwen3.5-Plus is now live! Sign in via Qwen OAuth to use it directly, or get an API key from [Alibaba Cloud ModelStudio](https://modelstudio.console.alibabacloud.com?tab=doc#/doc/?type=model&url=2840914_2&modelId=group-qwen3.5-plus) to access it through the OpenAI-compatible API.

|

||||

|

||||

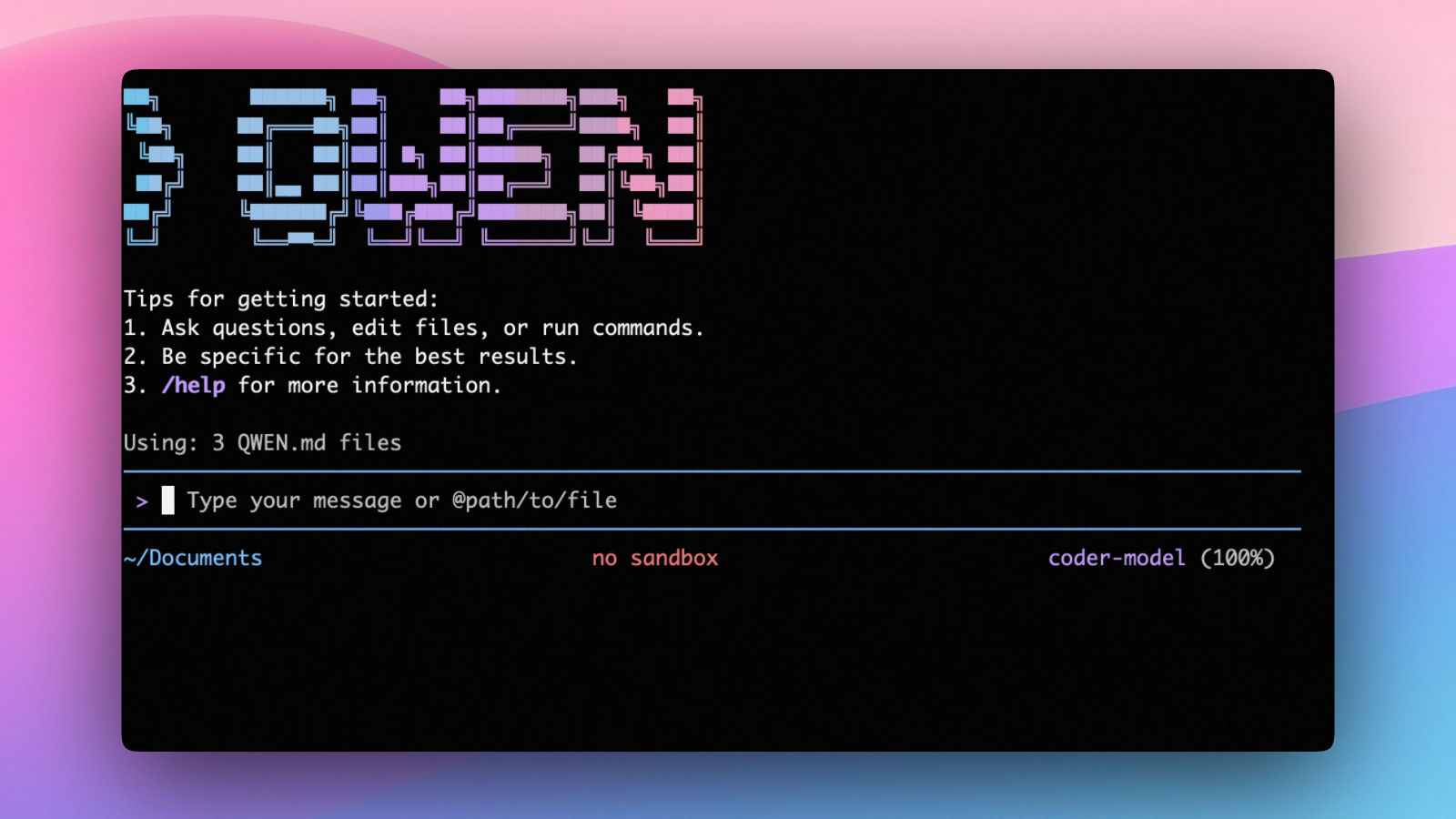

Qwen Code is an open-source AI agent for the terminal, optimized for [Qwen3-Coder](https://github.com/QwenLM/Qwen3-Coder). It helps you understand large codebases, automate tedious work, and ship faster.

|

||||

|

||||

|

||||

|

|

@@ -123,7 +125,231 @@ Use this if you want more flexibility over which provider and model to use. Supp

|

|||

- **Anthropic**: Claude models

|

||||

- **Google GenAI**: Gemini models

|

||||

|

||||

For full details (including `modelProviders` configuration, `.env` file loading, environment variable priorities, and security notes), see the [authentication guide](https://qwenlm.github.io/qwen-code-docs/en/users/configuration/auth/).

|

||||

The **recommended** way to configure models and providers is by editing `~/.qwen/settings.json` (create it if it doesn't exist). This file lets you define all available models, API keys, and default settings in one place.

|

||||

|

||||

##### Quick Setup in 3 Steps

|

||||

|

||||

**Step 1:** Create or edit `~/.qwen/settings.json`

|

||||

|

||||

Here is a complete example:

|

||||

|

||||

```json

|

||||

{

|

||||

"modelProviders": {

|

||||

"openai": [

|

||||

{

|

||||

"id": "qwen3-coder-plus",

|

||||

"name": "qwen3-coder-plus",

|

||||

"baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",

|

||||

"description": "Qwen3-Coder via Dashscope",

|

||||

"envKey": "DASHSCOPE_API_KEY"

|

||||

}

|

||||

]

|

||||

},

|

||||

"env": {

|

||||

"DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"

|

||||

},

|

||||

"security": {

|

||||

"auth": {

|

||||

"selectedType": "openai"

|

||||

}

|

||||

},

|

||||

"model": {

|

||||

"name": "qwen3-coder-plus"

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

**Step 2:** Understand each field

|

||||

|

||||

| Field | What it does |

|

||||

| ---------------------------- | ------------------------------------------------------------------------------------------------------------------------------------- |

|

||||

| `modelProviders` | Declares which models are available and how to connect to them. Keys like `openai`, `anthropic`, `gemini` represent the API protocol. |

|

||||

| `modelProviders[].id` | The model ID sent to the API (e.g. `qwen3-coder-plus`, `gpt-4o`). |

|

||||

| `modelProviders[].envKey` | The name of the environment variable that holds your API key. |

|

||||

| `modelProviders[].baseUrl` | The API endpoint URL (required for non-default endpoints). |

|

||||

| `env` | A fallback place to store API keys (lowest priority; prefer `.env` files or `export` for sensitive keys). |

|

||||

| `security.auth.selectedType` | The protocol to use on startup (`openai`, `anthropic`, `gemini`, `vertex-ai`). |

|

||||

| `model.name` | The default model to use when Qwen Code starts. |

|

||||

|

||||

**Step 3:** Start Qwen Code — your configuration takes effect automatically:

|

||||

|

||||

```bash

|

||||

qwen

|

||||

```

|

||||

|

||||

Use the `/model` command at any time to switch between all configured models.

|

||||

|

||||

##### More Examples

|

||||

|

||||

<details>

|

||||

<summary>Coding Plan (Alibaba Cloud Bailian) — fixed monthly fee, higher quotas</summary>

|

||||

|

||||

```json

|

||||

{

|

||||

"modelProviders": {

|

||||

"openai": [

|

||||

{

|

||||

"id": "qwen3.5-plus",

|

||||

"name": "qwen3.5-plus (Coding Plan)",

|

||||

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

|

||||

"description": "qwen3.5-plus with thinking enabled from Bailian Coding Plan",

|

||||

"envKey": "BAILIAN_CODING_PLAN_API_KEY",

|

||||

"generationConfig": {

|

||||

"extra_body": {

|

||||

"enable_thinking": true

|

||||

}

|

||||

}

|

||||

},

|

||||

{

|

||||

"id": "qwen3-coder-plus",

|

||||

"name": "qwen3-coder-plus (Coding Plan)",

|

||||

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

|

||||

"description": "qwen3-coder-plus from Bailian Coding Plan",

|

||||

"envKey": "BAILIAN_CODING_PLAN_API_KEY"

|

||||

},

|

||||

{

|

||||

"id": "qwen3-coder-next",

|

||||

"name": "qwen3-coder-next (Coding Plan)",

|

||||

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

|

||||

"description": "qwen3-coder-next with thinking enabled from Bailian Coding Plan",

|

||||

"envKey": "BAILIAN_CODING_PLAN_API_KEY",

|

||||

"generationConfig": {

|

||||

"extra_body": {

|

||||

"enable_thinking": true

|

||||

}

|

||||

}

|

||||

},

|

||||

{

|

||||

"id": "glm-4.7",

|

||||

"name": "glm-4.7 (Coding Plan)",

|

||||

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

|

||||

"description": "glm-4.7 with thinking enabled from Bailian Coding Plan",

|

||||

"envKey": "BAILIAN_CODING_PLAN_API_KEY",

|

||||

"generationConfig": {

|

||||

"extra_body": {

|

||||

"enable_thinking": true

|

||||

}

|

||||

}

|

||||

},

|

||||

{

|

||||

"id": "kimi-k2.5",

|

||||

"name": "kimi-k2.5 (Coding Plan)",

|

||||

"baseUrl": "https://coding.dashscope.aliyuncs.com/v1",

|

||||

"description": "kimi-k2.5 with thinking enabled from Bailian Coding Plan",

|

||||

"envKey": "BAILIAN_CODING_PLAN_API_KEY",

|

||||

"generationConfig": {

|

||||

"extra_body": {

|

||||

"enable_thinking": true

|

||||

}

|

||||

}

|

||||

}

|

||||

]

|

||||

},

|

||||

"env": {

|

||||

"BAILIAN_CODING_PLAN_API_KEY": "sk-xxxxxxxxxxxxx"

|

||||

},

|

||||

"security": {

|

||||

"auth": {

|

||||

"selectedType": "openai"

|

||||

}

|

||||

},

|

||||

"model": {

|

||||

"name": "qwen3-coder-plus"

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

> Subscribe to the Coding Plan and get your API key at [Alibaba Cloud Bailian](https://modelstudio.console.aliyun.com/?tab=dashboard#/efm/coding_plan).

|

||||

|

||||

</details>

|

||||

|

||||

<details>

|

||||

<summary>Multiple providers (OpenAI + Anthropic + Gemini)</summary>

|

||||

|

||||

```json

|

||||

{

|

||||

"modelProviders": {

|

||||

"openai": [

|

||||

{

|

||||

"id": "gpt-4o",

|

||||

"name": "GPT-4o",

|

||||

"envKey": "OPENAI_API_KEY",

|

||||

"baseUrl": "https://api.openai.com/v1"

|

||||

}

|

||||

],

|

||||

"anthropic": [

|

||||

{

|

||||

"id": "claude-sonnet-4-20250514",

|

||||

"name": "Claude Sonnet 4",

|

||||

"envKey": "ANTHROPIC_API_KEY"

|

||||

}

|

||||

],

|

||||

"gemini": [

|

||||

{

|

||||

"id": "gemini-2.5-pro",

|

||||

"name": "Gemini 2.5 Pro",

|

||||

"envKey": "GEMINI_API_KEY"

|

||||

}

|

||||

]

|

||||

},

|

||||

"env": {

|

||||

"OPENAI_API_KEY": "sk-xxxxxxxxxxxxx",

|

||||

"ANTHROPIC_API_KEY": "sk-ant-xxxxxxxxxxxxx",

|

||||

"GEMINI_API_KEY": "AIzaxxxxxxxxxxxxx"

|

||||

},

|

||||

"security": {

|

||||

"auth": {

|

||||

"selectedType": "openai"

|

||||

}

|

||||

},

|

||||

"model": {

|

||||

"name": "gpt-4o"

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

</details>

|

||||

|

||||

<details>

|

||||

<summary>Enable thinking mode (for supported models like qwen3.5-plus)</summary>

|

||||

|

||||

```json

|

||||

{

|

||||

"modelProviders": {

|

||||

"openai": [

|

||||

{

|

||||

"id": "qwen3.5-plus",

|

||||

"name": "qwen3.5-plus (thinking)",

|

||||

"envKey": "DASHSCOPE_API_KEY",

|

||||

"baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",

|

||||

"generationConfig": {

|

||||

"extra_body": {

|

||||

"enable_thinking": true

|

||||

}

|

||||

}

|

||||

}

|

||||

]

|

||||

},

|

||||

"env": {

|

||||

"DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"

|

||||

},

|

||||

"security": {

|

||||

"auth": {

|

||||

"selectedType": "openai"

|

||||

}

|

||||

},

|

||||

"model": {

|

||||

"name": "qwen3.5-plus"

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

</details>

|

||||

|

||||

> **Tip:** You can also set API keys via `export` in your shell or `.env` files, which take higher priority than `settings.json` → `env`. See the [authentication guide](https://qwenlm.github.io/qwen-code-docs/en/users/configuration/auth/) for full details.

|

||||

|

||||

> **Security note:** Never commit API keys to version control. The `~/.qwen/settings.json` file is in your home directory and should stay private.
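The priority order mentioned in the tip can be pictured as a simple lookup chain. The sketch below is hypothetical: `resolveApiKey` and `EnvMap` are not names from Qwen Code, and the relative order of shell variables and `.env` files is an assumption. The text above only states that both take priority over the `env` block in `settings.json`.

```typescript
type EnvMap = Record<string, string | undefined>;

// Hypothetical resolver: returns the first defined value, checking the shell
// environment, then .env values, then the `env` block from settings.json.
// Assumption: shell variables win over .env files.
function resolveApiKey(
  envKey: string,
  shellEnv: EnvMap,
  dotEnv: EnvMap,
  settingsEnv: EnvMap,
): string | undefined {
  return shellEnv[envKey] ?? dotEnv[envKey] ?? settingsEnv[envKey];
}

const key = resolveApiKey(
  'DASHSCOPE_API_KEY',
  {},
  { DASHSCOPE_API_KEY: 'sk-from-dotenv' },
  { DASHSCOPE_API_KEY: 'sk-from-settings' },
);
console.log(key);
```

Here the `.env` value is chosen because the shell does not define the variable; the `settings.json` value is only a fallback.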

|

||||

|

||||

## Usage

|

||||

|

||||

|

|

@@ -191,10 +417,21 @@ Build on top of Qwen Code with the TypeScript SDK:

|

|||

|

||||

Qwen Code can be configured via `settings.json`, environment variables, and CLI flags.

|

||||

|

||||

- **User settings**: `~/.qwen/settings.json`

|

||||

- **Project settings**: `.qwen/settings.json`

|

||||

| File | Scope | Description |

|

||||

| ----------------------- | ------------- | --------------------------------------------------------------------------------------- |

|

||||

| `~/.qwen/settings.json` | User (global) | Applies to all your Qwen Code sessions. **Recommended for `modelProviders` and `env`.** |

|

||||

| `.qwen/settings.json` | Project | Applies only when running Qwen Code in this project. Overrides user settings. |

|

||||

|

||||

See [settings](https://qwenlm.github.io/qwen-code-docs/en/users/configuration/settings/) for available options and precedence.

|

||||

The most commonly used top-level fields in `settings.json`:

|

||||

|

||||

| Field | Description |

|

||||

| ---------------------------- | ---------------------------------------------------------------------------------------------------- |

|

||||

| `modelProviders` | Define available models per protocol (`openai`, `anthropic`, `gemini`, `vertex-ai`). |

|

||||

| `env` | Fallback environment variables (e.g. API keys). Lower priority than shell `export` and `.env` files. |

|

||||

| `security.auth.selectedType` | The protocol to use on startup (e.g. `openai`). |

|

||||

| `model.name` | The default model to use when Qwen Code starts. |

|

||||

|

||||

> See the [Authentication](#api-key-flexible) section above for complete `settings.json` examples, and the [settings reference](https://qwenlm.github.io/qwen-code-docs/en/users/configuration/settings/) for all available options.

|

||||

|

||||

## Benchmark Results

|

||||

|

||||

|

|

|

|||

10

SECURITY.md

@@ -1,5 +1,9 @@

|

|||

# Reporting Security Issues

|

||||

# Security Policy

|

||||

|

||||

Please report any security issue or Higress crash report to [ASRC](https://security.alibaba.com/) (Alibaba Security Response Center) where the issue will be triaged appropriately.

|

||||

## Reporting a Vulnerability

|

||||

|

||||

Thank you for helping keep our project secure.

|

||||

If you believe you have discovered a security vulnerability, please report it to us through the following portal: [Report Security Issue](https://yundun.console.aliyun.com/?p=xznew#/taskmanagement/tasks/detail/151)

|

||||

|

||||

> **Note:** This channel is strictly for reporting security-related issues. Non-security vulnerabilities or general bug reports will not be addressed here.

|

||||

|

||||

We sincerely appreciate your responsible disclosure and your contribution to helping us keep our project secure.

|

||||

|

|

|

|||

|

|

@@ -2,13 +2,13 @@

|

|||

|

||||

> **Objective**: Catch up with Claude Code's product functionality, continuously refine details, and enhance user experience.

|

||||

|

||||

| Category | Phase 1 | Phase 2 |

|

||||

| ------------------------------- | ---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- | ------------------------------------------------------------------------------------------------- |

|

||||

| User Experience | ✅ Terminal UI<br>✅ Support OpenAI Protocol<br>✅ Settings<br>✅ OAuth<br>✅ Cache Control<br>✅ Memory<br>✅ Compress<br>✅ Theme | Better UI<br>OnBoarding<br>LogView<br>✅ Session<br>Permission<br>🔄 Cross-platform Compatibility |

|

||||

| Coding Workflow | ✅ Slash Commands<br>✅ MCP<br>✅ PlanMode<br>✅ TodoWrite<br>✅ SubAgent<br>✅ Multi Model<br>✅ Chat Management<br>✅ Tools (WebFetch, Bash, TextSearch, FileReadFile, EditFile) | 🔄 Hooks<br>SubAgent (enhanced)<br>✅ Skill<br>✅ Headless Mode<br>✅ Tools (WebSearch) |

|

||||

| Building Open Capabilities | ✅ Custom Commands | ✅ QwenCode SDK<br> Extension |

|

||||

| Integrating Community Ecosystem | | ✅ VSCode Plugin<br>🔄 ACP/Zed<br>✅ GHA |

|

||||

| Administrative Capabilities | ✅ Stats<br>✅ Feedback | Costs<br>Dashboard |

|

||||

| Category | Phase 1 | Phase 2 |
| ------------------------------- | ------- | ------- |
| User Experience | ✅ Terminal UI<br>✅ Support OpenAI Protocol<br>✅ Settings<br>✅ OAuth<br>✅ Cache Control<br>✅ Memory<br>✅ Compress<br>✅ Theme | Better UI<br>OnBoarding<br>LogView<br>✅ Session<br>Permission<br>🔄 Cross-platform Compatibility<br>✅ Coding Plan<br>✅ Anthropic Provider<br>✅ Multimodal Input<br>✅ Unified WebUI |
| Coding Workflow | ✅ Slash Commands<br>✅ MCP<br>✅ PlanMode<br>✅ TodoWrite<br>✅ SubAgent<br>✅ Multi Model<br>✅ Chat Management<br>✅ Tools (WebFetch, Bash, TextSearch, FileReadFile, EditFile) | 🔄 Hooks<br>✅ Skill<br>✅ Headless Mode<br>✅ Tools (WebSearch)<br>✅ LSP Support<br>✅ Concurrent Runner |
| Building Open Capabilities | ✅ Custom Commands | ✅ QwenCode SDK<br>✅ Extension System |
| Integrating Community Ecosystem | | ✅ VSCode Plugin<br>✅ ACP/Zed<br>✅ GHA |
| Administrative Capabilities | ✅ Stats<br>✅ Feedback | Costs<br>Dashboard<br>✅ User Feedback Dialog |

|

||||

|

||||

> For more details, please see the list below.

|

||||

|

||||

|

|

@ -16,39 +16,48 @@

|

|||

|

||||

#### Completed Features

|

||||

|

||||

| Feature | Version | Description | Category |

|

||||

| ----------------------- | --------- | ------------------------------------------------------- | ------------------------------- |

|

||||

| Skill | `V0.6.0` | Extensible custom AI skills | Coding Workflow |

|

||||

| GitHub Actions | `V0.5.0` | qwen-code-action and automation | Integrating Community Ecosystem |

|

||||

| VSCode Plugin | `V0.5.0` | VSCode extension plugin | Integrating Community Ecosystem |

|

||||

| QwenCode SDK | `V0.4.0` | Open SDK for third-party integration | Building Open Capabilities |

|

||||

| Session | `V0.4.0` | Enhanced session management | User Experience |

|

||||

| i18n | `V0.3.0` | Internationalization and multilingual support | User Experience |

|

||||

| Headless Mode | `V0.3.0` | Headless mode (non-interactive) | Coding Workflow |

|

||||

| ACP/Zed | `V0.2.0` | ACP and Zed editor integration | Integrating Community Ecosystem |

|

||||

| Terminal UI | `V0.1.0+` | Interactive terminal user interface | User Experience |

|

||||

| Settings | `V0.1.0+` | Configuration management system | User Experience |

|

||||

| Theme | `V0.1.0+` | Multi-theme support | User Experience |

|

||||

| Support OpenAI Protocol | `V0.1.0+` | Support for OpenAI API protocol | User Experience |

|

||||

| Chat Management | `V0.1.0+` | Session management (save, restore, browse) | Coding Workflow |

|

||||

| MCP | `V0.1.0+` | Model Context Protocol integration | Coding Workflow |

|

||||

| Multi Model | `V0.1.0+` | Multi-model support and switching | Coding Workflow |

|

||||

| Slash Commands | `V0.1.0+` | Slash command system | Coding Workflow |

|

||||

| Tool: Bash | `V0.1.0+` | Shell command execution tool (with is_background param) | Coding Workflow |

|

||||

| Tool: FileRead/EditFile | `V0.1.0+` | File read/write and edit tools | Coding Workflow |

|

||||

| Custom Commands | `V0.1.0+` | Custom command loading | Building Open Capabilities |

|

||||

| Feedback | `V0.1.0+` | Feedback mechanism (/bug command) | Administrative Capabilities |

|

||||

| Stats | `V0.1.0+` | Usage statistics and quota display | Administrative Capabilities |

|

||||

| Memory | `V0.0.9+` | Project-level and global memory management | User Experience |

|

||||

| Cache Control | `V0.0.9+` | Prompt caching control (Anthropic, DashScope) | User Experience |

|

||||

| PlanMode | `V0.0.14` | Task planning mode | Coding Workflow |

|

||||

| Compress | `V0.0.11` | Chat compression mechanism | User Experience |

|

||||

| SubAgent | `V0.0.11` | Dedicated sub-agent system | Coding Workflow |

|

||||

| TodoWrite | `V0.0.10` | Task management and progress tracking | Coding Workflow |

|

||||

| Tool: TextSearch | `V0.0.8+` | Text search tool (grep, supports .qwenignore) | Coding Workflow |

|

||||

| Tool: WebFetch | `V0.0.7+` | Web content fetching tool | Coding Workflow |

|

||||

| Tool: WebSearch | `V0.0.7+` | Web search tool (using Tavily API) | Coding Workflow |

|

||||

| OAuth | `V0.0.5+` | OAuth login authentication (Qwen OAuth) | User Experience |

|

||||

| Feature | Version | Description | Category | Phase |
| ----------------------- | --------- | ------------------------------------------------------- | ------------------------------- | ----- |
| **Coding Plan** | `V0.10.0` | Bailian Coding Plan authentication & models | User Experience | 2 |
| Unified WebUI | `V0.9.0` | Shared WebUI component library for VSCode/CLI | User Experience | 2 |
| Export Chat | `V0.8.0` | Export sessions to Markdown/HTML/JSON/JSONL | User Experience | 2 |
| Extension System | `V0.8.0` | Full extension management with slash commands | Building Open Capabilities | 2 |
| LSP Support | `V0.7.0` | Experimental LSP service (`--experimental-lsp`) | Coding Workflow | 2 |
| Anthropic Provider | `V0.7.0` | Anthropic API provider support | User Experience | 2 |
| User Feedback Dialog | `V0.7.0` | In-app feedback collection with fatigue mechanism | Administrative Capabilities | 2 |
| Concurrent Runner | `V0.6.0` | Batch CLI execution with Git integration | Coding Workflow | 2 |
| Multimodal Input | `V0.6.0` | Image, PDF, audio, video input support | User Experience | 2 |
| Skill | `V0.6.0` | Extensible custom AI skills (experimental) | Coding Workflow | 2 |
| GitHub Actions | `V0.5.0` | qwen-code-action and automation | Integrating Community Ecosystem | 1 |
| VSCode Plugin | `V0.5.0` | VSCode extension plugin | Integrating Community Ecosystem | 1 |
| QwenCode SDK | `V0.4.0` | Open SDK for third-party integration | Building Open Capabilities | 1 |
| Session | `V0.4.0` | Enhanced session management | User Experience | 1 |
| i18n | `V0.3.0` | Internationalization and multilingual support | User Experience | 1 |
| Headless Mode | `V0.3.0` | Headless mode (non-interactive) | Coding Workflow | 1 |
| ACP/Zed | `V0.2.0` | ACP and Zed editor integration | Integrating Community Ecosystem | 1 |
| Terminal UI | `V0.1.0+` | Interactive terminal user interface | User Experience | 1 |
| Settings | `V0.1.0+` | Configuration management system | User Experience | 1 |
| Theme | `V0.1.0+` | Multi-theme support | User Experience | 1 |
| Support OpenAI Protocol | `V0.1.0+` | Support for OpenAI API protocol | User Experience | 1 |
| Chat Management | `V0.1.0+` | Session management (save, restore, browse) | Coding Workflow | 1 |
| MCP | `V0.1.0+` | Model Context Protocol integration | Coding Workflow | 1 |
| Multi Model | `V0.1.0+` | Multi-model support and switching | Coding Workflow | 1 |
| Slash Commands | `V0.1.0+` | Slash command system | Coding Workflow | 1 |
| Tool: Bash | `V0.1.0+` | Shell command execution tool (with is_background param) | Coding Workflow | 1 |
| Tool: FileRead/EditFile | `V0.1.0+` | File read/write and edit tools | Coding Workflow | 1 |
| Custom Commands | `V0.1.0+` | Custom command loading | Building Open Capabilities | 1 |
| Feedback | `V0.1.0+` | Feedback mechanism (/bug command) | Administrative Capabilities | 1 |
| Stats | `V0.1.0+` | Usage statistics and quota display | Administrative Capabilities | 1 |
| Memory | `V0.0.9+` | Project-level and global memory management | User Experience | 1 |
| Cache Control | `V0.0.9+` | Prompt caching control (Anthropic, DashScope) | User Experience | 1 |
| PlanMode | `V0.0.14` | Task planning mode | Coding Workflow | 1 |
| Compress | `V0.0.11` | Chat compression mechanism | User Experience | 1 |
| SubAgent | `V0.0.11` | Dedicated sub-agent system | Coding Workflow | 1 |
| TodoWrite | `V0.0.10` | Task management and progress tracking | Coding Workflow | 1 |
| Tool: TextSearch | `V0.0.8+` | Text search tool (grep, supports .qwenignore) | Coding Workflow | 1 |
| Tool: WebFetch | `V0.0.7+` | Web content fetching tool | Coding Workflow | 1 |
| Tool: WebSearch | `V0.0.7+` | Web search tool (using Tavily API) | Coding Workflow | 1 |
| OAuth | `V0.0.5+` | OAuth login authentication (Qwen OAuth) | User Experience | 1 |

|

||||

|

||||

#### Features to Develop

|

||||

|

||||

|

|

@ -60,7 +69,6 @@

|

|||

| Cross-platform Compatibility | P1 | In Progress | Windows/Linux/macOS compatibility | User Experience |

|

||||

| LogView | P2 | Planned | Log viewing and debugging feature | User Experience |

|

||||

| Hooks | P2 | In Progress | Extension hooks system | Coding Workflow |

|

||||

| Extension | P2 | Planned | Extension system | Building Open Capabilities |

|

||||

| Costs | P2 | Planned | Cost tracking and analysis | Administrative Capabilities |

|

||||

| Dashboard | P2 | Planned | Management dashboard | Administrative Capabilities |

|

||||

|

||||

|

|

|

|||

|

|

@ -31,6 +31,52 @@ qwen

|

|||

|

||||

Use this if you want more flexibility over which provider and model to use. Supports multiple protocols and providers, including OpenAI, Anthropic, Google GenAI, Alibaba Cloud Bailian, Azure OpenAI, OpenRouter, ModelScope, or a self-hosted compatible endpoint.

|

||||

|

||||

### Recommended: One-file setup via `settings.json`

|

||||

|

||||

The simplest way to get started with API-KEY authentication is to put everything in a single `~/.qwen/settings.json` file. Here's a complete, ready-to-use example:

|

||||

|

||||

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3-coder-plus",
        "name": "qwen3-coder-plus",
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "description": "Qwen3-Coder via Dashscope",
        "envKey": "DASHSCOPE_API_KEY"
      }
    ]
  },
  "env": {
    "DASHSCOPE_API_KEY": "sk-xxxxxxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3-coder-plus"
  }
}
```

|

||||

|

||||

What each field does:

|

||||

|

||||

| Field | Description |
| ---------------------------- | ----------- |
| `modelProviders` | Declares which models are available and how to connect to them. Keys (`openai`, `anthropic`, `gemini`, `vertex-ai`) represent the API protocol. |
| `env` | Stores API keys directly in `settings.json` as a fallback (lowest priority; shell `export` and `.env` files take precedence). |
| `security.auth.selectedType` | Tells Qwen Code which protocol to use on startup (e.g. `openai`, `anthropic`, `gemini`). Without this, you'd need to run `/auth` interactively. |
| `model.name` | The default model to activate when Qwen Code starts. Must match one of the `id` values in your `modelProviders`. |

|

||||

|

||||

After saving the file, just run `qwen` — no interactive `/auth` setup needed.
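The relationships between these fields can be checked before starting Qwen Code. The following is a minimal sketch, not Qwen Code's own validation logic: the `OneFileSettings` type and the `checkSettings` function are hypothetical names introduced here, and the rule that `selectedType` should appear as a key of `modelProviders` is an assumption drawn from the example above.

```typescript
// Hypothetical shapes covering only the fields used in the example above.
interface SettingsModelEntry {
  id: string;
  envKey: string;
  name?: string;
  baseUrl?: string;
  description?: string;
}

interface OneFileSettings {
  modelProviders?: Record<string, SettingsModelEntry[]>;
  env?: Record<string, string>;
  security?: { auth?: { selectedType?: string } };
  model?: { name?: string };
}

// Returns human-readable problems; an empty list means the file is
// internally consistent according to the rules described in the table.
function checkSettings(settings: OneFileSettings): string[] {
  const problems: string[] = [];
  const providers = settings.modelProviders ?? {};
  const selectedType = settings.security?.auth?.selectedType;
  const modelName = settings.model?.name;

  // Assumption: the selected protocol should have a model list of its own.
  if (selectedType === undefined) {
    problems.push("security.auth.selectedType is not set");
  } else if (!(selectedType in providers)) {
    problems.push(`modelProviders has no "${selectedType}" key`);
  }

  // model.name must match one of the id values in modelProviders.
  const ids: string[] = [];
  for (const key of Object.keys(providers)) {
    for (const entry of providers[key]) {
      ids.push(entry.id);
    }
  }
  if (modelName === undefined || ids.indexOf(modelName) === -1) {
    problems.push("model.name does not match any id in modelProviders");
  }
  return problems;
}
```

Passing the parsed contents of `~/.qwen/settings.json` to `checkSettings` and printing the result is enough to catch a mistyped model name before the first run.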

|

||||

|

> [!tip]
>
> The sections below explain each part in more detail. If the quick example above works for you, feel free to skip ahead to [Security notes](#security-notes).

|

||||

|

||||

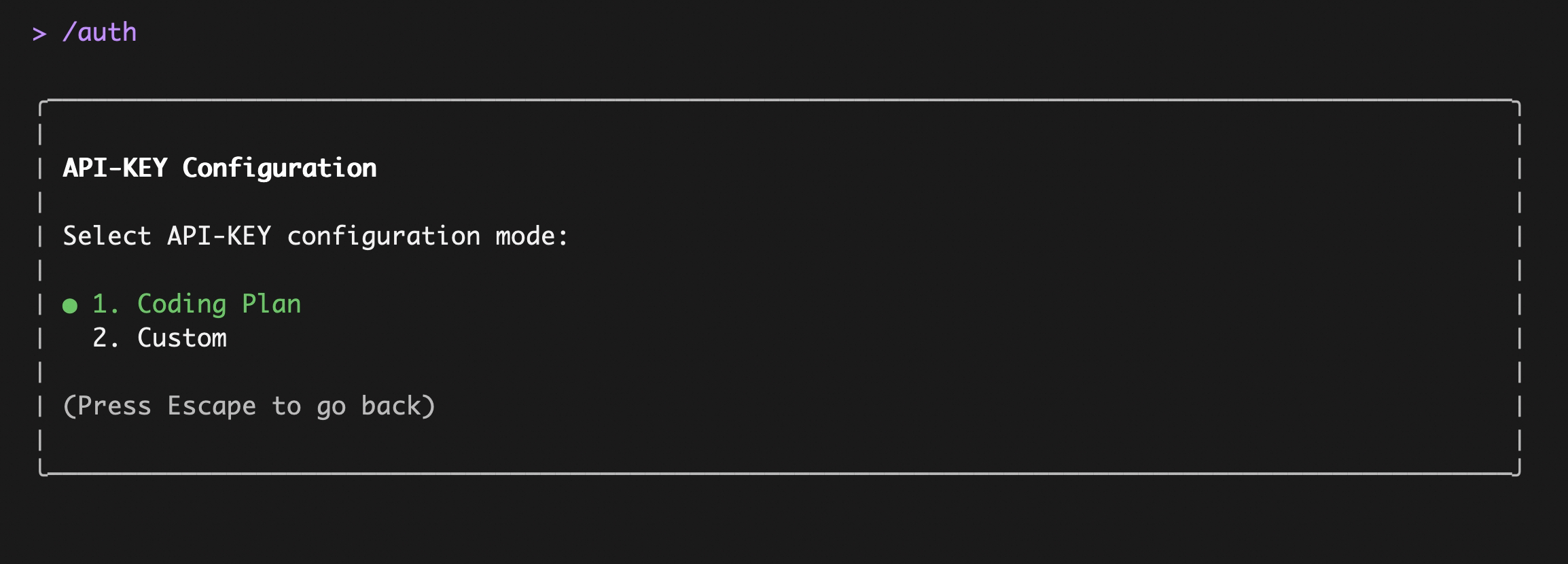

### Option 1: Coding Plan (Aliyun Bailian)

|

||||

|

||||

Use this if you want predictable costs with higher usage quotas for the qwen3-coder-plus model.

|

||||

|

|

@ -48,10 +94,45 @@ After entering, select `Coding Plan`:

|

|||

|

||||

|

||||

|

||||

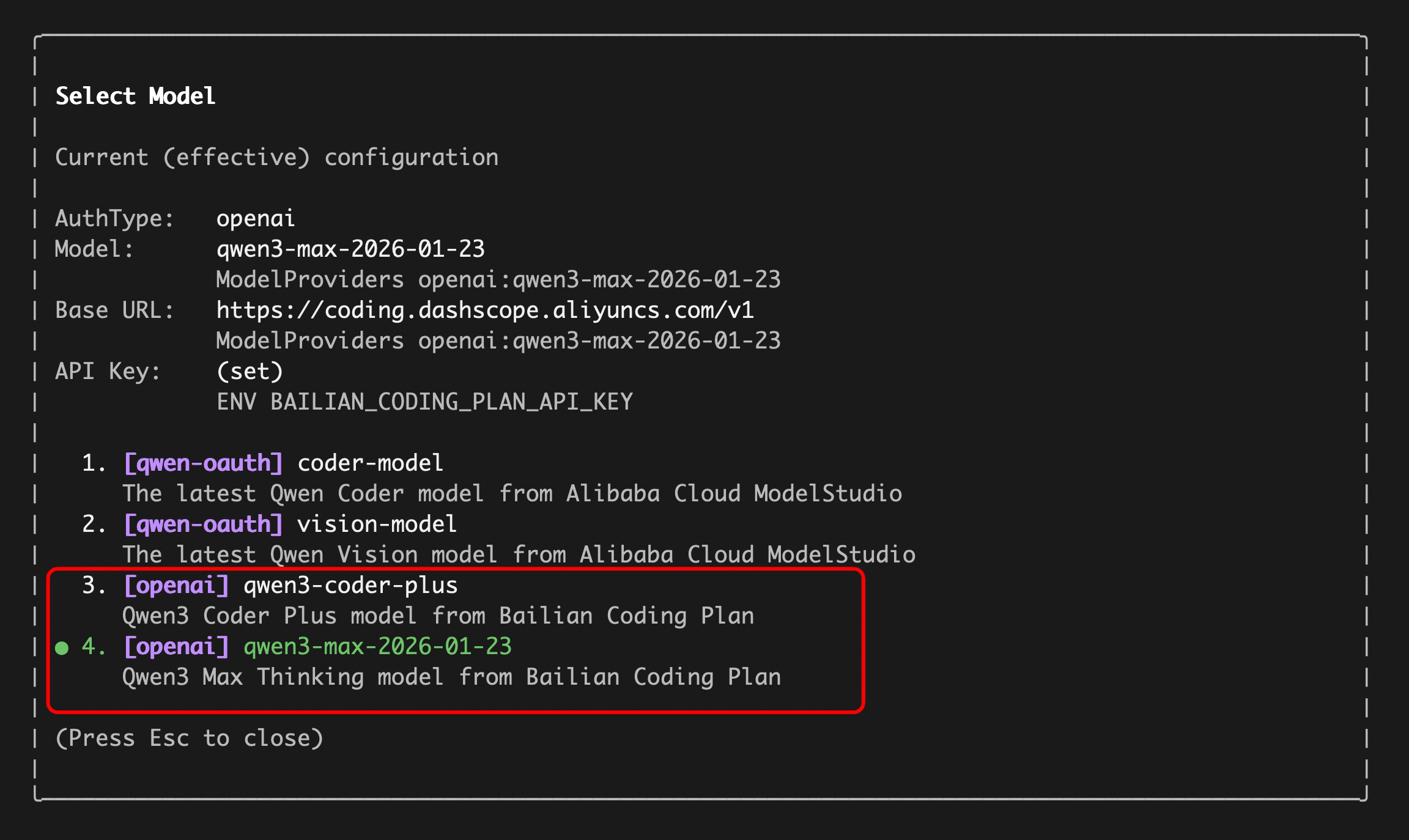

Enter your `sk-sp-xxxxxxxxx` key, then use the `/model` command to switch between all Bailian `Coding Plan` supported models:

|

||||

Enter your `sk-sp-xxxxxxxxx` key, then use the `/model` command to switch between all Bailian `Coding Plan` supported models (including qwen3.5-plus, qwen3-coder-plus, qwen3-coder-next, qwen3-max, glm-4.7, and kimi-k2.5):

|

||||

|

||||

|

||||

|

||||

**Alternative: configure Coding Plan via `settings.json`**

|

||||

|

||||

If you prefer to skip the interactive `/auth` flow, add the following to `~/.qwen/settings.json`:

|

||||

|

||||

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "qwen3-coder-plus",
        "name": "qwen3-coder-plus (Coding Plan)",
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "description": "qwen3-coder-plus from Bailian Coding Plan",
        "envKey": "BAILIAN_CODING_PLAN_API_KEY"
      }
    ]
  },
  "env": {
    "BAILIAN_CODING_PLAN_API_KEY": "sk-sp-xxxxxxxxx"
  },
  "security": {
    "auth": {
      "selectedType": "openai"
    }
  },
  "model": {
    "name": "qwen3-coder-plus"
  }
}
```

|

||||

|

> [!note]
>
> The Coding Plan uses a dedicated endpoint (`https://coding.dashscope.aliyuncs.com/v1`) that is different from the standard DashScope endpoint. Make sure to use the correct `baseUrl`.

|

||||

|

||||

### Option 2: Third-party API-KEY

|

||||

|

||||

Use this if you want to connect to third-party providers such as OpenAI, Anthropic, Google, Azure OpenAI, OpenRouter, ModelScope, or a self-hosted endpoint.

|

||||

|

|

@ -67,7 +148,7 @@ The key concept is **Model Providers** (`modelProviders`): Qwen Code supports mu

|

|||

| Google GenAI | `gemini` | `GEMINI_API_KEY`, `GEMINI_MODEL` | Google Gemini |

|

||||

| Google Vertex AI | `vertex-ai` | `GOOGLE_API_KEY`, `GOOGLE_MODEL` | Google Vertex AI |

|

||||

|

||||

#### Step 1: Configure `modelProviders` in `~/.qwen/settings.json`

|

||||

#### Step 1: Configure models and providers in `~/.qwen/settings.json`

|

||||

|

||||

Define which models are available for each protocol. Each model entry requires at minimum an `id` and an `envKey` (the environment variable name that holds your API key).

|

||||

|

||||

|

|

@ -75,7 +156,7 @@ Define which models are available for each protocol. Each model entry requires a

|

|||

>

|

||||

> It is recommended to define `modelProviders` in the user-scope `~/.qwen/settings.json` to avoid merge conflicts between project and user settings.

|

||||

|

||||

Edit `~/.qwen/settings.json` (create it if it doesn't exist):

|

||||

Edit `~/.qwen/settings.json` (create it if it doesn't exist). You can mix multiple protocols in a single file — here is a multi-provider example showing just the `modelProviders` section:

|

||||

|

||||

```json

|

||||

{

|

||||

|

|

@ -106,7 +187,11 @@ Edit `~/.qwen/settings.json` (create it if it doesn't exist):

|

|||

}

|

||||

```

|

||||

|

||||

You can mix multiple protocols and models in a single configuration. The `ModelConfig` fields are:

|

> [!tip]
>
> Don't forget to also set `env`, `security.auth.selectedType`, and `model.name` alongside `modelProviders`; see the [complete example above](#recommended-one-file-setup-via-settingsjson) for reference.

|

||||

|

||||

**`ModelConfig` fields (each entry inside `modelProviders`):**

|

||||

|

||||

| Field | Required | Description |

|

||||

| ------------------ | -------- | -------------------------------------------------------------------- |

|

||||

|

|

@ -118,9 +203,9 @@ You can mix multiple protocols and models in a single configuration. The `ModelC

|

|||

|

||||

> [!note]

|

||||

>

|

||||

> Credentials are **never** stored in `settings.json`. The runtime reads them from the environment variable specified in `envKey`.

|

||||

> When using the `env` field in `settings.json`, credentials are stored in plain text. For better security, prefer `.env` files or shell `export` — see [Step 2](#step-2-set-environment-variables).

|

||||

|

||||

For the full `modelProviders` schema and advanced options like `generationConfig`, `customHeaders`, and `extra_body`, see [Settings Reference → modelProviders](settings.md#modelproviders).

|

||||

For the full `modelProviders` schema and advanced options like `generationConfig`, `customHeaders`, and `extra_body`, see [Model Providers Reference](model-providers.md).

|

||||

|

||||

#### Step 2: Set environment variables

|

||||

|

||||

|

|

@ -165,25 +250,19 @@ If nothing is found, it falls back to your **home directory**:

|

|||

|

||||

**3. `settings.json` → `env` field (lowest priority)**

|

||||

|

||||

You can also define environment variables directly in `~/.qwen/settings.json` under the `env` key. These are loaded as the **lowest-priority fallback** — only applied when a variable is not already set by the system environment or `.env` files.

|

||||

You can also define API keys directly in `~/.qwen/settings.json` under the `env` key. These are loaded as the **lowest-priority fallback** — only applied when a variable is not already set by the system environment or `.env` files.

|

||||

|

||||

```json

|

||||

{

|

||||

"env": {

|

||||

"DASHSCOPE_API_KEY":"sk-...",

|

||||

"DASHSCOPE_API_KEY": "sk-...",

|

||||

"OPENAI_API_KEY": "sk-...",

|

||||

"ANTHROPIC_API_KEY": "sk-ant-...",

|

||||

"GEMINI_API_KEY": "AIza..."

|

||||

},

|

||||

"modelProviders": {

|

||||

...

|

||||

"ANTHROPIC_API_KEY": "sk-ant-..."

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

> [!note]

|

||||

>

|

||||

> This is useful when you want to keep all configuration (providers + credentials) in a single file. However, be mindful that `settings.json` may be shared or synced — prefer `.env` files for sensitive secrets.

|

||||

This is the approach used in the [one-file setup example](#recommended-one-file-setup-via-settingsjson) above. It's convenient for keeping everything in one place, but be mindful that `settings.json` may be shared or synced — prefer `.env` files for sensitive secrets.
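The fallback behaviour can be pictured as a layered merge. The sketch below is illustrative only and is not taken from the Qwen Code source: `resolveEnv` and `EnvSource` are hypothetical names, and the ordering between the shell environment and `.env` files (shell first) is an assumption. The text above only guarantees that the `env` field in `settings.json` comes last.

```typescript
// A map of variable names to values, as found in any one source.
type EnvSource = Record<string, string | undefined>;

// Merge three sources so that higher-priority sources win.
// Assumed order: shell environment > .env files > settings.json "env".
function resolveEnv(
  shellEnv: EnvSource,
  dotEnv: EnvSource,
  settingsEnv: EnvSource,
): Record<string, string> {
  const resolved: Record<string, string> = {};
  // Apply the lowest priority first so later sources overwrite it.
  for (const source of [settingsEnv, dotEnv, shellEnv]) {
    for (const key of Object.keys(source)) {
      const value = source[key];
      if (value !== undefined) {
        resolved[key] = value;
      }
    }
  }
  return resolved;
}
```

Under this model a key placed in `settings.json` is used only when neither the shell nor a `.env` file defines the same variable.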

|

||||

|

||||

**Priority summary:**

|

||||

|

||||

|

|

|

|||

521

docs/users/configuration/model-providers.md

Normal file

|

|

@ -0,0 +1,521 @@

|

|||

# Model Providers

|

||||

|

||||

Qwen Code allows you to configure multiple model providers through the `modelProviders` setting in your `settings.json`. This enables you to switch between different AI models and providers using the `/model` command.

|

||||

|

||||

## Overview

|

||||

|

||||

Use `modelProviders` to declare curated model lists per auth type that the `/model` picker can switch between. Keys must be valid auth types (`openai`, `anthropic`, `gemini`, `vertex-ai`, etc.). Each entry requires an `id` and **must include `envKey`**, with optional `name`, `description`, `baseUrl`, and `generationConfig`. Credentials are never persisted in settings; the runtime reads them from `process.env[envKey]`. Qwen OAuth models remain hard-coded and cannot be overridden.
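The `envKey` indirection can be sketched as follows. This is a hedged illustration rather than the actual runtime code: `resolveApiKey` and `KeyedModel` are hypothetical names, and the environment is passed in as a plain object so that the snippet does not depend on Node.js typings.

```typescript
// Only the two fields this sketch needs from a modelProviders entry.
interface KeyedModel {
  id: string;
  envKey: string;
}

// Look up the credential named by envKey; the settings entry itself
// never contains the secret, only the variable name.
function resolveApiKey(
  model: KeyedModel,
  env: Record<string, string | undefined>,
): string {
  const apiKey = env[model.envKey];
  if (apiKey === undefined || apiKey === "") {
    throw new Error(
      `Environment variable ${model.envKey} is not set for model ${model.id}`,
    );
  }
  return apiKey;
}
```

In a Node.js program the second argument would typically be `process.env`.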

|

||||

|

> [!note]
> Only the `/model` command exposes non-default auth types. Anthropic, Gemini, Vertex AI, etc., must be defined via `modelProviders`. The `/auth` command intentionally lists only the built-in Qwen OAuth and OpenAI flows.

|

||||

|

> [!warning]
> **Duplicate model IDs within the same authType:** Defining multiple models with the same `id` under a single `authType` (e.g., two entries with `"id": "gpt-4o"` in `openai`) is currently not supported. If duplicates exist, **the first occurrence wins** and subsequent duplicates are skipped with a warning. Note that the `id` field is used both as the configuration identifier and as the actual model name sent to the API, so using unique IDs (e.g., `gpt-4o-creative`, `gpt-4o-balanced`) is not a viable workaround. This is a known limitation that we plan to address in a future release.

|
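The "first occurrence wins" rule for duplicate IDs can be sketched like this. It is an illustration of the described behaviour under stated assumptions, not the project's implementation; `dedupeById` and `ProviderModel` are hypothetical names and the warning text is invented for the example.

```typescript
// Minimal view of one modelProviders entry.
interface ProviderModel {
  id: string;
  envKey: string;
  name?: string;
}

// Keep the first entry for each id and collect a warning for every
// later entry that reuses an id already seen in the same auth type.
function dedupeById(models: ProviderModel[]): {
  kept: ProviderModel[];
  warnings: string[];
} {
  const seen = new Set<string>();
  const kept: ProviderModel[] = [];
  const warnings: string[] = [];
  for (const model of models) {
    if (seen.has(model.id)) {
      warnings.push(`Duplicate model id "${model.id}" skipped`);
      continue;
    }
    seen.add(model.id);
    kept.push(model);
  }
  return { kept, warnings };
}
```

Running a helper like this over each list in `modelProviders` shows which entries would be dropped.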

||||

|

||||

## Configuration Examples by Auth Type

|

||||

|

||||

Below are comprehensive configuration examples for different authentication types, showing the available parameters and their combinations.

|

||||

|

||||

### Supported Auth Types

|

||||

|

||||

The `modelProviders` object keys must be valid `authType` values. Currently supported auth types are:

|

||||

|

||||

| Auth Type | Description |
| ------------ | --------------------------------------------------------------------------------------- |
| `openai` | OpenAI-compatible APIs (OpenAI, Azure OpenAI, local inference servers like vLLM/Ollama) |
| `anthropic` | Anthropic Claude API |
| `gemini` | Google Gemini API |
| `vertex-ai` | Google Vertex AI |
| `qwen-oauth` | Qwen OAuth (hard-coded, cannot be overridden in `modelProviders`) |

|

||||

|

> [!warning]
> If an invalid auth type key is used (e.g., a typo like `"openai-custom"`), the configuration will be **silently skipped** and the models will not appear in the `/model` picker. Always use one of the supported auth type values listed above.

|
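Because unknown keys are skipped without any message, a small pre-flight check can help. The sketch below is a hypothetical helper, not part of Qwen Code: `unknownAuthTypes` is an invented name, and the list of supported values is copied from the table above (including `qwen-oauth`, which is listed there but cannot be overridden).

```typescript
// Auth type values taken from the table of supported auth types.
const SUPPORTED_AUTH_TYPES: string[] = [
  "openai",
  "anthropic",
  "gemini",
  "vertex-ai",
  "qwen-oauth",
];

// Return every key of modelProviders that is not a supported auth type,
// so that typos such as "openai-custom" are reported instead of ignored.
function unknownAuthTypes(modelProviders: Record<string, unknown>): string[] {
  return Object.keys(modelProviders).filter(
    (key) => SUPPORTED_AUTH_TYPES.indexOf(key) === -1,
  );
}
```

An empty result means every key in `modelProviders` will be recognised.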

||||

|

||||

### SDKs Used for API Requests

|

||||

|

||||

Qwen Code uses the following official SDKs to send requests to each provider:

|

||||

|

||||

| Auth Type | SDK Package |
| ---------------------- | ----------------------------------------------------------------------------------------------- |
| `openai` | [`openai`](https://www.npmjs.com/package/openai) - Official OpenAI Node.js SDK |
| `anthropic` | [`@anthropic-ai/sdk`](https://www.npmjs.com/package/@anthropic-ai/sdk) - Official Anthropic SDK |
| `gemini` / `vertex-ai` | [`@google/genai`](https://www.npmjs.com/package/@google/genai) - Official Google GenAI SDK |
| `qwen-oauth` | [`openai`](https://www.npmjs.com/package/openai) with custom provider (DashScope-compatible) |

|

||||

|

||||

This means the `baseUrl` you configure should be compatible with the corresponding SDK's expected API format. For example, when using `openai` auth type, the endpoint must accept OpenAI API format requests.

|

||||

|

||||

### OpenAI-compatible providers (`openai`)

|

||||

|

||||

This auth type supports not only OpenAI's official API but also any OpenAI-compatible endpoint, including aggregated model providers like OpenRouter.

|

||||

|

||||

```json
{
  "modelProviders": {
    "openai": [
      {
        "id": "gpt-4o",
        "name": "GPT-4o",
        "envKey": "OPENAI_API_KEY",
        "baseUrl": "https://api.openai.com/v1",
        "generationConfig": {
          "timeout": 60000,
          "maxRetries": 3,
          "enableCacheControl": true,
          "contextWindowSize": 128000,
          "customHeaders": {
            "X-Client-Request-ID": "req-123"
          },
          "extra_body": {
            "enable_thinking": true,
            "service_tier": "priority"
          },
          "samplingParams": {
            "temperature": 0.2,
            "top_p": 0.8,
            "max_tokens": 4096,
            "presence_penalty": 0.1,
            "frequency_penalty": 0.1
          }
        }
      },
      {
        "id": "gpt-4o-mini",
        "name": "GPT-4o Mini",
        "envKey": "OPENAI_API_KEY",
        "baseUrl": "https://api.openai.com/v1",
        "generationConfig": {
          "timeout": 30000,
          "samplingParams": {
            "temperature": 0.5,
            "max_tokens": 2048
          }
        }
      },
      {
        "id": "openai/gpt-4o",
        "name": "GPT-4o (via OpenRouter)",
        "envKey": "OPENROUTER_API_KEY",
        "baseUrl": "https://openrouter.ai/api/v1",
        "generationConfig": {
          "timeout": 120000,
          "maxRetries": 3,
          "samplingParams": {
            "temperature": 0.7
          }
        }
      }
    ]
  }
}
```

|

||||

|

||||

### Anthropic (`anthropic`)

|

||||

|

||||

```json
{
  "modelProviders": {
    "anthropic": [
      {
        "id": "claude-3-5-sonnet",
        "name": "Claude 3.5 Sonnet",
        "envKey": "ANTHROPIC_API_KEY",
        "baseUrl": "https://api.anthropic.com/v1",
        "generationConfig": {
          "timeout": 120000,
          "maxRetries": 3,
          "contextWindowSize": 200000,
          "samplingParams": {
            "temperature": 0.7,
            "max_tokens": 8192,
            "top_p": 0.9
          }
        }
      },
      {
        "id": "claude-3-opus",
        "name": "Claude 3 Opus",
        "envKey": "ANTHROPIC_API_KEY",
        "baseUrl": "https://api.anthropic.com/v1",
        "generationConfig": {
          "timeout": 180000,
          "samplingParams": {
            "temperature": 0.3,
            "max_tokens": 4096
          }
        }
      }
    ]
  }
}
```

|

||||

|

||||

### Google Gemini (`gemini`)

|

||||

|

||||

```json
{
  "modelProviders": {
    "gemini": [
      {
        "id": "gemini-2.0-flash",
        "name": "Gemini 2.0 Flash",
        "envKey": "GEMINI_API_KEY",
        "baseUrl": "https://generativelanguage.googleapis.com",
        "capabilities": {
          "vision": true
        },
        "generationConfig": {
          "timeout": 60000,
          "maxRetries": 2,
          "contextWindowSize": 1000000,
          "schemaCompliance": "auto",
          "samplingParams": {
            "temperature": 0.4,
            "top_p": 0.95,
            "max_tokens": 8192,
            "top_k": 40
          }
        }
      }
    ]
  }
}
```

|

||||

|

||||

### Google Vertex AI (`vertex-ai`)

|

||||

|

||||

```json
{
  "modelProviders": {
    "vertex-ai": [
      {
        "id": "gemini-1.5-pro-vertex",
        "name": "Gemini 1.5 Pro (Vertex AI)",
        "envKey": "GOOGLE_API_KEY",
        "baseUrl": "https://generativelanguage.googleapis.com",
        "generationConfig": {
          "timeout": 90000,
          "contextWindowSize": 2000000,
          "samplingParams": {
            "temperature": 0.2,
            "max_tokens": 8192
          }
        }
      }
    ]
  }
}
```

|

||||

|

||||

### Local Self-Hosted Models (via OpenAI-compatible API)

|

||||

|

||||

Most local inference servers (vLLM, Ollama, LM Studio, etc.) provide an OpenAI-compatible API endpoint. Configure them using the `openai` auth type with a local `baseUrl`:

|

||||

|

||||

```json

|

||||

{

|

||||

"modelProviders": {

|

||||

"openai": [

|

||||

{

|

||||

"id": "qwen2.5-7b",

|

||||

"name": "Qwen2.5 7B (Ollama)",

|

||||

"envKey": "OLLAMA_API_KEY",

|

||||

"baseUrl": "http://localhost:11434/v1",

|

||||

"generationConfig": {

|

||||

"timeout": 300000,

|

||||

"maxRetries": 1,

|

||||

"contextWindowSize": 32768,

|

||||

"samplingParams": {

|

||||

"temperature": 0.7,

|

||||

"top_p": 0.9,

|

||||

"max_tokens": 4096

|

||||

}

|

||||

}

|

||||

},

|

||||

{

|

||||

"id": "llama-3.1-8b",

|

||||

"name": "Llama 3.1 8B (vLLM)",

|

||||

"envKey": "VLLM_API_KEY",

|

||||

"baseUrl": "http://localhost:8000/v1",

|

||||

"generationConfig": {

|

||||

"timeout": 120000,

|

||||

"maxRetries": 2,

|

||||

"contextWindowSize": 128000,

|

||||

"samplingParams": {

|

||||

"temperature": 0.6,

|

||||

"max_tokens": 8192

|

||||

}

|

||||

}

|

||||

},

|

||||

{

|

||||

"id": "local-model",

|

||||

"name": "Local Model (LM Studio)",

|

||||

"envKey": "LMSTUDIO_API_KEY",

|

||||

"baseUrl": "http://localhost:1234/v1",

|

||||

"generationConfig": {

|

||||

"timeout": 60000,

|

||||

"samplingParams": {

|

||||

"temperature": 0.5

|

||||

}

|

||||

}

|

||||

}

|

||||

]

|

||||