* Five correctness fixes from multi-agent bug hunt

A multi-agent audit of the codeburn correctness surface found five

real bugs each producing visibly wrong numbers or risking data loss.

All five fixes were validated by parallel review agents and exercised

end-to-end against real session data on this machine.

- src/cli.ts: --refresh <seconds> was using bare parseInt as the

commander callback. Commander invokes the callback as

parseInt(value, previous), so previous becomes the radix:

--refresh 30 was being parsed as parseInt('30', 30) = 90, and

--refresh 60 became NaN. Replaced with parseInteger (already

defined at line 48 with radix locked to 10) at all three sites.

- src/providers/cursor.ts: parseAgentKv was timestamping every

agentKv call as new Date().toISOString() because the Cursor

SQLite schema has no per-message timestamp. Result: every

Cursor agent call regardless of when it happened landed in

today's date bucket. Now uses statSync(dbPath).mtimeMs as a

bounded ceiling so calls land at the actual last-write time of

the Cursor database, not today. Verified locally: a 1904-call

Cursor history with a March 22 mtime now correctly buckets into

all-time only and shows 0 calls for today/week/30days.

- src/providers/codex.ts: prev token counters were only updated

inside the cumulative-fallback branch, so a session emitting N

events with last_token_usage followed by one cumulative-only

event computed the next delta against prev=0 and double-counted

the entire cumulative window. Cost could be inflated 10-100x

for any mixed-format Codex session. Now prev advances to the

current cumulative state regardless of which branch ran.

- src/providers/gemini.ts: totalOutput accumulated output+thoughts

while totalThoughts was tracked separately. The result was

outputTokens = output+thoughts AND reasoningTokens = thoughts;

any consumer summing the two double-counted thoughts. Now

totalOutput holds just output, reasoningTokens holds thoughts,

and the cost calc folds thoughts into the output count to keep

pricing correct (Google bills thoughts at the output rate;

calculateCost has no reasoning parameter).

- src/export.ts: exportJson had no safety check before writeFile,

so codeburn export -f json -o ~/important.json would silently

clobber the user's file. CSV path had a marker-file guard; JSON

did not. Now refuses to overwrite a file unless its first 4KB

contain the codeburn schema marker. Uses a streaming partial

read so a large existing file does not OOM Node's ~512MB

string limit. Refuses directories outright.
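The radix pitfall described in the src/cli.ts item can be reproduced in isolation. The sketch below is illustrative only: this `parseInteger` is a stand-in written for the example, not the helper from the repository, and the second argument simulates the `previous` value that Commander passes to an option parser.

```typescript
// Commander calls an option parser as fn(value, previous).
// With bare parseInt, `previous` is interpreted as the radix.

// Stand-in for the repo's parseInteger (assumption): radix locked to 10,
// the previous value is accepted but ignored.
function parseInteger(value: string, _previous?: number): number {
  return Number.parseInt(value, 10);
}

// '30' read in base 30 is 3 * 30 + 0.
const buggy = parseInt('30', 30);

// Same call shape, but the radix is fixed.
const fixed = parseInteger('30', 30);

// Any radix outside 2..36 (other than 0) makes parseInt return NaN.
const outOfRange = parseInt('60', 60);

console.log(buggy, fixed, outOfRange);
```

Wrapping the parser rather than passing `parseInt` directly keeps the call site safe whatever the option's default value is.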

Skipped intentionally: cursor-auto/copilot-auto/cline-auto/

qwen-auto are aliased to claude-sonnet-4-5. The audit flagged

this as wrong pricing for non-Anthropic auto-routed turns, but

Cursor's "auto" mode does not expose the actual model and any

alternative estimate is equally arbitrary. README already

documents this as a Sonnet-based estimate.

vitest run: 38 files, 529 tests pass.
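The Codex double-count in the src/providers/codex.ts item is easiest to see with a toy event stream. This is a minimal sketch under stated assumptions: the `TokenEvent` shape and its `last` / `cumulative` fields are simplified stand-ins for the real event format, and every event is assumed to carry a cumulative total.

```typescript
// Simplified stand-in for a Codex token event (assumed shape).
interface TokenEvent {
  last?: number;       // per-call usage, when the event reports it
  cumulative: number;  // running total for the session
}

function sumTokens(events: TokenEvent[], advanceAlways: boolean): number {
  let prev = 0;
  let total = 0;
  for (const event of events) {
    if (event.last !== undefined) {
      total += event.last;
      // The fix: keep prev in step even when the per-call branch ran.
      if (advanceAlways) prev = event.cumulative;
    } else {
      // Cumulative-only fallback: delta against the last known total.
      total += event.cumulative - prev;
      prev = event.cumulative;
    }
  }
  return total;
}

const events: TokenEvent[] = [
  { last: 100, cumulative: 100 },
  { last: 50, cumulative: 150 },
  { cumulative: 180 }, // cumulative-only event
];

const before = sumTokens(events, false); // delta computed against prev = 0
const after = sumTokens(events, true);   // delta computed against prev = 150

console.log(before, after);
```

With the old behavior the final event contributes its whole cumulative window (180) on top of the 150 already counted; with `prev` always advanced it contributes only the 30 new tokens.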

* Five more correctness fixes from the bug-hunt round

This commit closes out the remaining critical-tier findings from the

multi-agent audit, with one item documented as a known limitation.

- src/providers/cursor.ts: bubble dedup key included mutable

inputTokens/outputTokens. Cursor mutates token counts on the row in

place when streaming completes, so re-parsing the same DB produced

a fresh dedup key per bubble and silently double-counted. Switched

to the SQLite row key (`bubbleId:<unique>`) which is stable per

bubble. Adjusted BubbleRow type and BUBBLE_QUERY_BASE to expose

`key as bubble_key`.

- src/providers/pi.ts: usage fields were destructured non-optionally,

but real Pi/OMP session files sometimes omit individual fields.

`calculateCost(model, undefined, ...)` returned NaN, and that NaN

propagated into every aggregate cost total. Coerce each field to

0 with `?? 0`.

- src/models.ts: getShortModelName and the getModelCosts startsWith

fallback both walked the dictionary in insertion order. A model id

like `gpt-5-mini` could resolve to the entry for `gpt-5` (matched

by startsWith first) and silently get GPT-5's display name and

pricing tier. Iterate longest keys first so more-specific prefixes

win. Tightened the cost fallback's match condition from

`startsWith(key) || startsWith(key + '-')` to require either an

exact match or a `key + '-'` continuation, removing accidental

matches like `gpt-50` against `gpt-5`.

- src/models.ts: calculateCost returned 0 silently for any model

missing from the pricing snapshot. New Anthropic / OpenAI models

shipped between snapshot refreshes look free until the user

notices. Now warns once per unknown model name per process to

stderr. Skips the warning for the `<synthetic>` placeholder so

the noise floor stays low.

- src/yield.ts: revert detection was broken on the canonical case.

Two problems: (1) `subject.toLowerCase().includes('revert')`

matched any commit whose subject mentioned the word ("Add revert

button" was misclassified). (2) The window logic only counted

reverts within the original session's 1-hour boundary, but real

`git revert` commits land in later sessions, so original sessions

always looked productive. Now: getRevertedShas runs once with

`--grep=^This reverts commit` and parses bodies to build a Set of

SHAs that were the target of a revert anywhere in history.

CommitInfo.wasReverted is set when this commit's SHA appears in

that set. categorizeSession then flags a session as reverted when

its in-main commits were later reverted, regardless of when the

revert itself happened.

- src/providers/droid.ts: SKIPPED with comment. Droid records token

usage only at session level. The current behavior splits evenly

across emitted assistant calls and prices all of them at

settings.model (the latest model). For sessions where the user

switched models mid-stream, costs are approximate. Added an

inline comment documenting this; a real fix requires per-message

model data that isn't in the Droid JSONL schema.
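The longest-key-first lookup from the src/models.ts item can be sketched as follows. The key list and the function name `resolveModelKey` are hypothetical and exist only for this example; the match condition mirrors the tightened rule described above (exact match, or the key followed by `-`).

```typescript
// Hypothetical pricing keys; the real table is much larger.
const KNOWN_MODELS = ['gpt-5', 'gpt-5-mini'];

// Sort once so more-specific (longer) keys are tried first.
const KEYS_LONGEST_FIRST = [...KNOWN_MODELS].sort((a, b) => b.length - a.length);

function resolveModelKey(modelId: string): string | undefined {
  return KEYS_LONGEST_FIRST.find(
    (key) => modelId === key || modelId.startsWith(key + '-'),
  );
}

console.log(resolveModelKey('gpt-5-mini'));            // matches the longer key
console.log(resolveModelKey('gpt-5-mini-2025-08-07')); // dated variant of the longer key
console.log(resolveModelKey('gpt-5-2025-08-07'));      // falls through to the shorter key
console.log(resolveModelKey('gpt-50'));                // no accidental prefix match
```

Requiring the `-` separator is what keeps `gpt-50` from resolving to `gpt-5`; the sort is what keeps `gpt-5-mini` from doing so.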

Verified end-to-end on this machine:

- vitest run: 38 files, 529 tests pass

- `codeburn report --format json` produces valid JSON

- `codeburn yield -p week` runs without crashing, finds 0 reverts

in the user's recent git history (plausible — fix changed the

detection from "subject contains revert" to "this commit's SHA

appears in a later 'This reverts commit ...' body")

- Stderr now warns for unknown model ids: `openai/gpt-5.3`,

`qwen3.6:35b-a3b-bf16`, `big-pickle`. These previously priced

silently at $0.
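The body-based revert detection from the src/yield.ts item can be illustrated without invoking git. The sketch below assumes the log bodies have already been collected into one string; `extractRevertedShas` is a hypothetical name, not the repository's `getRevertedShas`, and the sample SHA is made up.

```typescript
// Collect the SHAs named in "This reverts commit <sha>" lines.
function extractRevertedShas(logBodies: string): Set<string> {
  const reverted = new Set<string>();
  const pattern = /^This reverts commit ([0-9a-f]{7,40})/gm;
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(logBodies)) !== null) {
    reverted.add(match[1]);
  }
  return reverted;
}

// Two commits: a real revert, and a subject that merely mentions the word.
const sampleLog = [
  'Revert "Add parser cache"',
  '',
  'This reverts commit 1a2b3c4d5e6f7a8b9c0d1e2f3a4b5c6d7e8f9a0b.',
  '',
  'Add revert button',
].join('\n');

const revertedShas = extractRevertedShas(sampleLog);
console.log([...revertedShas]);
```

Because the match is anchored on the line git itself writes into the revert body, a subject such as "Add revert button" contributes nothing to the set.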

* Four high-severity fixes from the bug-hunt round

- src/currency.ts: getExchangeRate wrapped fetchRate and cacheRate in

one try/catch. If fetchRate succeeded but cacheRate threw (disk

full, ENOSPC, no permissions on the cache dir), the catch block

swallowed the error and returned 1. Every cost rendered after that

point became USD-equivalent silently. Now the fetch and the cache

write live in separate paths: a successful fetch returns the rate

even if the persist fails, and the cache write is fire-and-forget

with its error dropped, so transient disk problems do not corrupt the

user's currency display.

- src/cursor-cache.ts: writeFile was non-atomic. Two concurrent

codeburn invocations writing to cursor-results.json could

interleave bytes mid-write, leaving a truncated file that

failed to parse on the next read and forced a full SQLite re-scan every

run. Switched to the temp-file + rename pattern with a randomized

temp name so each writer gets its own staging file and the rename

is atomic on POSIX. Crash mid-write also leaves only a leftover

temp file, which gets unlinked in the catch path; the destination

is never half-written.

- mac/.../CodeBurnApp.swift refresh loop on sleep: the loop's

Task.sleep keeps a wakeup pending across system sleep, so on wake

the natural tick fires the same instant the wake observers do.

Combined with didWakeNotification, screensDidWakeNotification, and

the launchd com.codeburn.refresh distributed notification, that

produced 2-3 concurrent CLI spawns within ms of every wake. Now:

willSleepNotification cancels the loop task; didWakeNotification

restarts it. The loop also reads lastRefreshTime and skips its

natural tick if a wake/manual/distributed-notification refresh ran

within the last 5 seconds, coalescing the two sources of refresh

into one CLI spawn per wake event.

- mac/.../CodeBurnApp.swift observeStore: the read closure had an

implicit strong self capture (it accessed store.* without a

capture annotation), pinning self for the lifetime of any

unfired observation. Added [weak self] and a guard to make the

capture explicit. withObservationTracking is one-shot per call,

so there is at most one active subscription at a time; the

earlier audit's claim of an unbounded leak overstated the issue,

but tightening the capture pattern is still cleaner.
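The temp-file + rename pattern from the src/cursor-cache.ts item looks roughly like this. It is a sketch, not the repository's code: `writeFileAtomic` is a hypothetical helper, and it uses the synchronous fs functions for brevity where the real module may well be asynchronous.

```typescript
import { randomBytes } from 'node:crypto';
import { mkdtempSync, readFileSync, renameSync, unlinkSync, writeFileSync } from 'node:fs';
import { tmpdir } from 'node:os';
import { join } from 'node:path';

// Hypothetical helper: stage into a uniquely named temp file, then rename.
function writeFileAtomic(destPath: string, contents: string): void {
  // Random suffix so concurrent writers never share a staging file.
  const tempPath = `${destPath}.${randomBytes(6).toString('hex')}.tmp`;
  try {
    writeFileSync(tempPath, contents);
    // rename replaces the destination in one step on POSIX filesystems.
    renameSync(tempPath, destPath);
  } catch (err) {
    try {
      unlinkSync(tempPath); // best-effort cleanup of the staging file
    } catch {
      // the temp file may never have been created
    }
    throw err;
  }
}

// Demo in a throwaway directory.
const demoDir = mkdtempSync(join(tmpdir(), 'atomic-demo-'));
const demoDest = join(demoDir, 'cursor-results.json');
writeFileAtomic(demoDest, JSON.stringify({ ok: true }));
const demoRead = readFileSync(demoDest, 'utf8');
console.log(demoRead);
```

The temp file lives next to the destination on purpose: a rename is only atomic when both paths are on the same filesystem.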

Verified:

- vitest run: 38 files, 529 tests pass

- swift build -c release --arch arm64 --arch x86_64: clean, no

diagnostics, no MainActor warnings

- mac/Scripts/package-app.sh dev produces a valid universal bundle

- Menubar launches and runs without crash

* Eleven medium-severity fixes from the bug-hunt round

- src/format.ts formatTokens: guard against Infinity, NaN, and

negative input. Previously a corrupt aggregate could leak into

the UI as the literal strings "NaN" or "Infinity". Negatives now

render as "0" rather than "-500" with no scaling.

- src/cli-date.ts parseDateRangeFlags: the missing-from default

was new Date(0), which opened a 55-year scan from 1970 epoch

whenever the user passed only --to. Default now anchors at 6

months back from now, matching the dashboard's all-time period.

Test updated to assert the new bounded window.

- src/cli-date.ts toPeriod: previously fell back silently to "week"

for any unknown input, so a typo like `-p mounth` produced a

quiet 7-day report while the user thought they were viewing the

month. Now exits with a clear stderr error and exit code 1.

Test updated to assert the loud-failure behavior.

- src/optimize.ts urgencyScore: rebalanced weights so a high-impact

finding with zero observed tokens cannot outrank a medium-impact

finding with millions of tokens. Old 0.7/0.3 split made high+0

(0.70) beat medium+1B (0.65). New 0.5/0.5 split makes medium+1B

(0.75) beat high+0 (0.50). Token normalization lifted to 5M so

the ramp covers a realistic spend range.

- src/models.ts calculateCost: clamp negative or non-finite token

inputs to 0 before pricing. A corrupt JSONL emitting a negative

count would otherwise produce a negative cost that silently

subtracted from real spend in aggregates.

- src/currency.ts convertCost: stop rounding during aggregation.

For zero-fraction currencies (JPY, KRW, CLP) this clamped every

per-session cost to a whole unit before sum, so a project of

1000 sessions averaging ¥0.4 each aggregated to ¥0 instead of

¥400. formatCost still rounds at the display boundary.

- src/config.ts saveConfig: the temp file path was a fixed

`${configPath}.tmp` suffix. Two simultaneous saveConfig calls

(overlapping menubar and CLI runs) raced on the same staging

file and could leave one writer reading partial bytes from the

other. Randomized the temp suffix per call.

- src/providers/antigravity.ts flushCache: the early return on

`!cacheDirty` short-circuited eviction when liveCascadeIds was

supplied but no cascade had been added or updated this run. As

a result, deleted .pb files persisted in the cache forever once

the user stopped writing to it. Eviction now runs whenever

liveCascadeIds is provided, marks the cache dirty if anything

was removed, and only then short-circuits if there is nothing

to write.

- src/daily-cache.ts addNewDays: cap retention at 2 years. The

days array previously merged forever, growing the cache file by

hundreds of bytes per day until JSON parse on every CLI

invocation became measurable. The 6-month UI period plus the

365-day BACKFILL_DAYS bootstrap both fit comfortably inside the

cap, with headroom for a future longer window.

- src/dashboard.tsx useInput: period number keys (1-5) and arrow

keys triggered a reload while the compare view was mounted. The

parent's data state changed underneath the user with no visual

affordance back to the dashboard. Now those keys are gated on

view !== 'compare', and `b` / Esc inside compare returns to the

dashboard.

- mac/.../HeatmapSection.swift formatters: prettyDate,

buildTrendBars, computeTrendStats, computeForecast, and computeAllStats

each allocated a fresh DateFormatter (and Calendar) on every

call. SwiftUI re-evaluates these views many times per second

during hover scrubbing on the trend chart, so the allocations

were a measurable hot spot. Lifted the yyyy-MM-dd / "EEE MMM d"

/ "MMM d" formatters and the gregorian Calendar to fileprivate

cached singletons.
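The urgencyScore rebalance in the src/optimize.ts item can be checked with a few lines of arithmetic. This sketch makes assumptions: the impact weights (high = 1.0, medium = 0.5) are inferred from the figures quoted above rather than read from the source, and the function signature is invented for the example.

```typescript
type Impact = 'high' | 'medium';

// Inferred from the quoted scores (assumption, not taken from the source).
const IMPACT_WEIGHT: Record<Impact, number> = { high: 1.0, medium: 0.5 };

// Token ramp saturates at 5M observed tokens, per the item above.
const TOKEN_CAP = 5e6;

function urgencyScore(
  impact: Impact,
  tokens: number,
  impactShare: number,
  tokenShare: number,
): number {
  const tokenScore = Math.min(Math.max(tokens, 0) / TOKEN_CAP, 1);
  return impactShare * IMPACT_WEIGHT[impact] + tokenShare * tokenScore;
}

// Old 0.7 / 0.3 split: high impact with zero tokens wins.
const oldHigh = urgencyScore('high', 0, 0.7, 0.3);
const oldMedium = urgencyScore('medium', 1e9, 0.7, 0.3);

// New 0.5 / 0.5 split: heavy observed waste wins.
const newHigh = urgencyScore('high', 0, 0.5, 0.5);
const newMedium = urgencyScore('medium', 1e9, 0.5, 0.5);

console.log({ oldHigh, oldMedium, newHigh, newMedium });
```

Under these assumed weights the four values come out at roughly 0.70, 0.65, 0.50, and 0.75, matching the numbers in the item.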

Two findings from the same bucket were not addressed here:

- UpdateChecker SHA-256 / codesign verification is already

performed by src/menubar-installer.ts (verifyChecksum at line

85). The Swift side just kicks off `codeburn menubar --force`

which runs that path. The audit's claim of missing verification

was a misread.

- NSDistributedNotificationCenter sender validation: the

`com.codeburn.refresh` listener accepts from any sender, but

forceRefresh has a 5-second rate-limit gate so the abuse

ceiling is one CLI spawn per 5 seconds. Mitigations (Mach IPC,

per-launch shared secret) are disproportionate to the impact.

vitest run: 38 files, 529 tests pass.

swift build -c release: clean, no warnings.
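The zero-fraction rounding problem from the src/currency.ts convertCost item is plain arithmetic and can be reproduced directly. The sketch uses the figures quoted in that item (1000 sessions at ¥0.4 each); the variable names are illustrative.

```typescript
// 1000 sessions, each costing 0.4 yen after conversion.
const perSessionYen: number[] = Array.from({ length: 1000 }, () => 0.4);

// Old behavior: round every session to a whole yen, then sum.
const roundedPerSession = perSessionYen.reduce(
  (sum, cost) => sum + Math.round(cost),
  0,
);

// New behavior: sum at full precision, round once at the display boundary.
const roundedAtDisplay = Math.round(
  perSessionYen.reduce((sum, cost) => sum + cost, 0),
);

console.log(roundedPerSession, roundedAtDisplay);
```

Rounding early discards every sub-unit amount individually, so the error grows with the number of sessions instead of staying below one currency unit.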

* Validator hardenings on the bug-hunt batch

Hoist the per-call sort in getModelCosts and getShortModelName to module

scope so model lookups on the hot path stop reallocating sorted key arrays.

Sanitize the unknown-model stderr warning by stripping C0/C1 controls

and capping length, so a hostile or corrupt JSONL cannot inject terminal

escape sequences via the model field.

Skip the daily-cache prune when newestDate fails to parse. The previous

code produced a NaN cutoff and silently dropped every cached day on the

next merge.

Adds tests locking down the stable resolution of common model names

(gpt-5-mini vs gpt-5, claude-haiku-4-5 vs claude-3-5-haiku, etc.) and

the prune NaN guard.
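Stripping control characters before echoing an untrusted model name might look like the sketch below. The function name and the 80-character cap are assumptions made for the example; the commit only states that C0/C1 controls are stripped and the length is capped.

```typescript
// Remove C0 (U+0000..U+001F), DEL (U+007F), and C1 (U+0080..U+009F)
// controls, then cap the length. The cap value is an assumption.
function sanitizeModelName(raw: string, maxLength = 80): string {
  return raw.replace(/[\u0000-\u001f\u007f-\u009f]/g, '').slice(0, maxLength);
}

// ESC (U+001B) starts an ANSI escape sequence; without it the rest is inert text.
const hostile = 'gpt\u001b[31m-x';
const cleaned = sanitizeModelName(hostile);

console.log(JSON.stringify(cleaned));
```

Only the control byte is removed; the printable remainder of the sequence stays visible, which also makes the tampering easy to spot in the warning.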


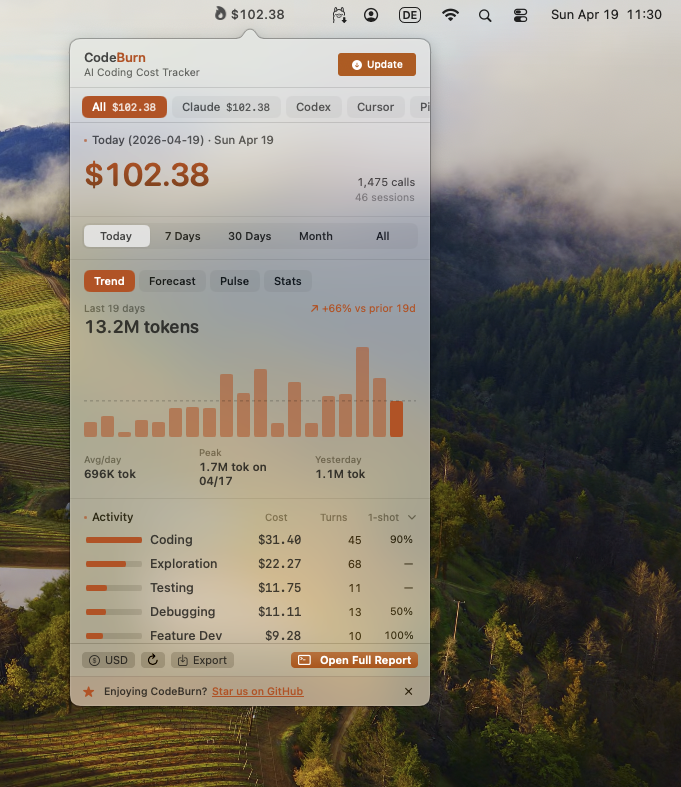

See where your AI coding tokens go.

CodeBurn tracks token usage, cost, and performance across 18 AI coding tools. It breaks down spending by task type, model, tool, project, and provider so you can see exactly where your budget goes.

Everything runs locally. No wrapper, no proxy, no API keys. CodeBurn reads session data directly from disk and prices every call using LiteLLM.

Screenshots: Dashboard, Menu Bar, Optimize, Compare.

Requirements

- Node.js 20+

- At least one supported AI coding tool with session data on disk

- For Cursor and OpenCode support, better-sqlite3 is installed automatically as an optional dependency

Install

npm install -g codeburn

Or with Homebrew:

brew tap getagentseal/codeburn

brew install codeburn

Or run directly without installing:

npx codeburn

bunx codeburn

dx codeburn

Usage

codeburn # interactive dashboard (default: 7 days)

codeburn today # today's usage

codeburn month # this month's usage

codeburn report -p 30days # rolling 30-day window

codeburn report -p all # every recorded session

codeburn report --from 2026-04-01 --to 2026-04-10 # exact date range

codeburn report --format json # full dashboard data as JSON

codeburn report --refresh 60 # auto-refresh every 60s (default: 30s)

codeburn status # compact one-liner (today + month)

codeburn status --format json

codeburn export # CSV with today, 7 days, 30 days

codeburn export -f json # JSON export

codeburn optimize # find waste, get copy-paste fixes

codeburn optimize -p week # scope the scan to last 7 days

codeburn compare # side-by-side model comparison

codeburn yield # track productive vs reverted/abandoned spend

codeburn yield -p 30days # yield analysis for last 30 days

Arrow keys switch between Today, 7 Days, 30 Days, Month, and 6 Months (use --from / --to for an exact historical window). Press q to quit, 1 2 3 4 5 as shortcuts, c to open model comparison, o to open optimize. The dashboard auto-refreshes every 30 seconds by default (--refresh 0 to disable). It also shows average cost per session and the five most expensive sessions across all projects.

Supported Providers

| Provider | Data Location | Supported |
|---|---|---|
| Claude Code | ~/.claude/projects/ | Yes |
| Claude Desktop | ~/Library/Application Support/Claude/local-agent-mode-sessions/ | Yes |
| Codex (OpenAI) | ~/.codex/sessions/ | Yes |
| Cursor | ~/Library/Application Support/Cursor/User/globalStorage/state.vscdb | Yes |
| cursor-agent | ~/.cursor/projects/ | Yes |
| Gemini CLI | ~/.gemini/tmp/<project>/chats/ | Yes |
| GitHub Copilot | ~/.copilot/session-state/ + VS Code workspaceStorage/ | Yes |
| Kiro | ~/Library/Application Support/Kiro/User/globalStorage/kiro.kiroagent/ | Yes |
| OpenCode | ~/.local/share/opencode/ (SQLite) | Yes |
| OpenClaw | ~/.openclaw/agents/ (+ legacy .clawdbot, .moltbot, .moldbot) | Yes |
| Pi | ~/.pi/agent/sessions/ | Yes |
| OMP (Oh My Pi) | ~/.omp/agent/sessions/ | Yes |
| Droid | ~/.factory/projects/ | Yes |
| Roo Code | VS Code globalStorage/rooveterinaryinc.roo-cline/tasks/ | Yes |
| KiloCode | VS Code globalStorage/kilocode.kilo-code/tasks/ | Yes |
| Qwen | ~/.qwen/projects/<project>/chats/ | Yes |
| Goose | ~/.local/share/goose/sessions/sessions.db (SQLite) | Yes |
| Antigravity | ~/.gemini/antigravity/conversations/ | Yes |

Paths shown are for macOS. Linux and Windows equivalents are detected automatically. If a path has changed or is wrong, please open an issue.

CodeBurn auto-detects which AI coding tools you use. If multiple providers have session data on disk, press p in the dashboard to toggle between them.

The --provider flag filters any command to a single provider: codeburn report --provider claude, codeburn today --provider codex, codeburn export --provider cursor. Works on all commands: report, today, month, status, export, optimize, compare, yield.

Provider Notes

Cursor reads token usage from its local SQLite database. Since Cursor's "Auto" mode hides the actual model used, costs are estimated using Sonnet pricing (labeled "Auto (Sonnet est.)" in the dashboard). The Cursor view shows a Languages panel instead of Core Tools/Shell/MCP panels, since Cursor does not log individual tool calls. First run on a large Cursor database may take up to a minute; results are cached and subsequent runs are instant.

Gemini CLI stores sessions as single JSON files. Each session embeds real token counts (input, output, cached, thoughts) per message, so no estimation is needed. Gemini reports input tokens inclusive of cached; CodeBurn subtracts cached from input before pricing to avoid double charging.
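The cached-token subtraction for Gemini can be sketched as below. The `GeminiTokens` shape and the `splitInput` name are hypothetical, chosen for this example; only the rule itself (input is reported inclusive of cached, so cached is subtracted before pricing) comes from the note above.

```typescript
// Hypothetical per-message token record.
interface GeminiTokens {
  input: number;  // reported inclusive of cached tokens
  cached: number;
}

// Split reported input into the part billed at the fresh-input rate
// and the part billed at the cached rate.
function splitInput(tokens: GeminiTokens): { freshInput: number; cachedInput: number } {
  return {
    // Clamp at 0 in case a corrupt record reports cached > input.
    freshInput: Math.max(tokens.input - tokens.cached, 0),
    cachedInput: tokens.cached,
  };
}

const split = splitInput({ input: 1000, cached: 400 });
console.log(split);
```

Pricing the full 1000 tokens as input and the 400 again as cached would charge those 400 tokens twice.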

Kiro stores conversations as .chat JSON files. Token counts are estimated from content length. The underlying model is not exposed, so sessions are labeled kiro-auto and costed at Sonnet rates.

GitHub Copilot reads from both ~/.copilot/session-state/ (legacy CLI) and VS Code's workspaceStorage/*/GitHub.copilot-chat/transcripts/. The VS Code format has no explicit token counts; tokens are estimated from content length and the model is inferred from tool call ID prefixes.

OpenClaw reads JSONL agent logs from ~/.openclaw/agents/ and also checks legacy paths (.clawdbot, .moltbot, .moldbot).

Roo Code and KiloCode are Cline-family VS Code extensions. CodeBurn reads ui_messages.json from each task directory and extracts token usage from api_req_started entries.

Adding a new provider is a single file. See src/providers/codex.ts for an example.

Features

Cost Tracking

Prices every API call using input, output, cache read, cache write, and web search token counts. Fast mode multiplier for Claude. Pricing fetched from LiteLLM and cached locally for 24 hours. Hardcoded fallbacks for all Claude and GPT models to prevent mispricing.

Task Categories

13 categories classified from tool usage patterns and user message keywords. No LLM calls, fully deterministic.

| Category | What triggers it |

|---|---|

| Coding | Edit, Write tools |

| Debugging | Error/fix keywords + tool usage |

| Feature Dev | "add", "create", "implement" keywords |

| Refactoring | "refactor", "rename", "simplify" |

| Testing | pytest, vitest, jest in Bash |

| Exploration | Read, Grep, WebSearch without edits |

| Planning | EnterPlanMode, TaskCreate tools |

| Delegation | Agent tool spawns |

| Git Ops | git push/commit/merge in Bash |

| Build/Deploy | npm build, docker, pm2 |

| Brainstorming | "brainstorm", "what if", "design" |

| Conversation | No tools, pure text exchange |

| General | Skill tool, uncategorized |

Breakdowns

Daily cost chart, per-project, per-model (Opus, Sonnet, Haiku, GPT-5, GPT-4o, Gemini, Kiro, and more), per-activity with one-shot rate, core tools, shell commands, and MCP servers.

One-Shot Rate

For categories that involve code edits, CodeBurn detects edit/test/fix retry cycles (Edit, Bash, Edit patterns). The one-shot column shows the percentage of edit turns that succeeded without retries. Coding at 90% means the AI got it right first try 9 out of 10 times.
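As a rough illustration of the percentage, the calculation can be written as follows. The `EditTurn` shape is an assumption for this sketch; how CodeBurn actually detects retries is not shown here.

```typescript
// Hypothetical summary of one edit turn.
interface EditTurn {
  retries: number; // number of edit/test/fix retry cycles observed
}

// Percentage of edit turns that needed no retries.
function oneShotRate(turns: EditTurn[]): number {
  if (turns.length === 0) return 0;
  const oneShot = turns.filter((turn) => turn.retries === 0).length;
  return (oneShot * 100) / turns.length;
}

// Ten edit turns, one of which needed two retries.
const turns: EditTurn[] = Array.from({ length: 10 }, (_, i) => ({
  retries: i === 3 ? 2 : 0,
}));

const rate = oneShotRate(turns);
console.log(`${rate}%`);
```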

Pricing

Fetched from LiteLLM model prices (auto-cached 24 hours at ~/.cache/codeburn/). Handles input, output, cache write, cache read, and web search costs. Fast mode multiplier for Claude. Hardcoded fallbacks for all Claude and GPT-5 models to prevent fuzzy matching mispricing.

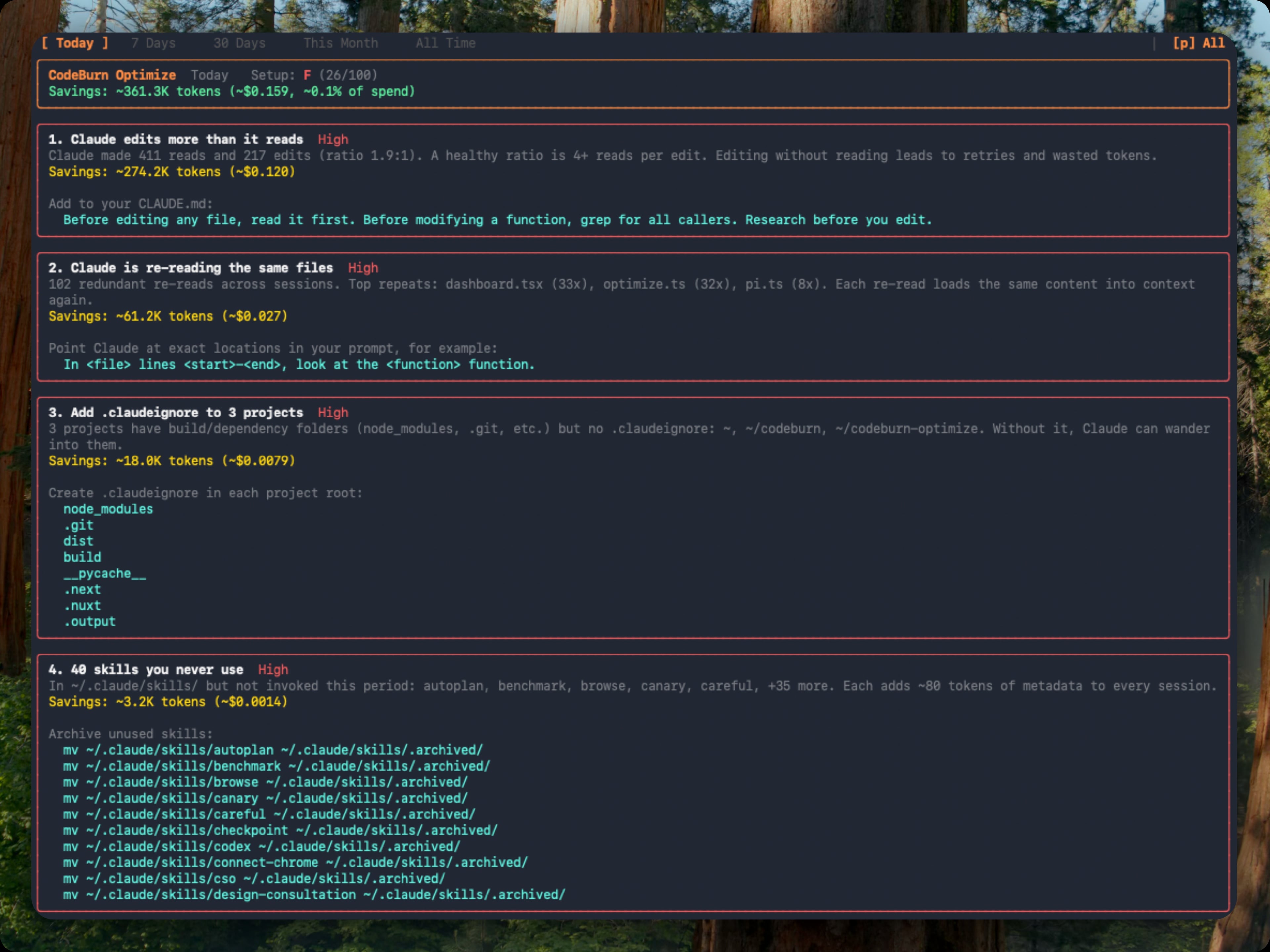

Optimize

codeburn optimize # scan the last 30 days

codeburn optimize -p today # today only

codeburn optimize -p week # last 7 days

codeburn optimize --provider claude # restrict to one provider

Scans your sessions and your ~/.claude/ setup for waste patterns:

- Files Claude re-reads across sessions (same content, same context, over and over)

- Low Read:Edit ratio (editing without reading leads to retries and wasted tokens)

- Wasted bash output (uncapped BASH_MAX_OUTPUT_LENGTH, trailing noise)

- Unused MCP servers still paying their tool-schema overhead every session

- Ghost agents, skills, and slash commands defined in ~/.claude/ but never invoked

- Bloated CLAUDE.md files (with @-import expansion counted)

- Cache creation overhead and junk directory reads

- Context-heavy sessions where effective input/cache tokens swamp output

- Possibly low-worth expensive sessions with no edit turns or repeated retries when no git/gh delivery command is observed

Each finding shows the estimated token and dollar savings plus a ready-to-paste fix: a CLAUDE.md line, an environment variable, or a mv command to archive unused items. Findings are ranked by urgency (impact weighted against observed waste) and rolled up into an A to F setup health grade. Repeat runs classify each finding as new, improving, or resolved against a 48-hour recent window.

You can also open it inline from the dashboard: press o when a finding count appears in the status bar, b to return.

Compare

codeburn compare # interactive model picker (default: last 6 months)

codeburn compare -p week # last 7 days

codeburn compare -p today # today only

codeburn compare --provider claude # Claude Code sessions only

Or press c in the dashboard to enter compare mode. Arrow keys switch periods, b to return.

| Section | Metric | What it measures |

|---|---|---|

| Performance | One-shot rate | Edits that succeed without retries |

| Performance | Retry rate | Average retries per edit turn |

| Performance | Self-correction | Turns where the model corrected its own mistake |

| Efficiency | Cost per call | Average cost per API call |

| Efficiency | Cost per edit | Average cost per edit turn |

| Efficiency | Output tokens per call | Average output tokens per call |

| Efficiency | Cache hit rate | Proportion of input from cache |

Also compares per-category one-shot rates, delegation rate, planning rate, average tools per turn, and fast mode usage.

Yield

codeburn yield # last 7 days (default)

codeburn yield -p today # today only

codeburn yield -p 30days # last 30 days

codeburn yield -p month # this calendar month

Correlates AI sessions with git commits by timestamp:

| Category | Meaning |

|---|---|

| Productive | Commits from this session landed in main |

| Reverted | Commits were later reverted |

| Abandoned | No commits near session, or commits never merged |

Requires a git repository. Run from your project directory.

Plans

codeburn plan set claude-max # $200/month

codeburn plan set claude-pro # $20/month

codeburn plan set cursor-pro # $20/month

codeburn plan set custom --monthly-usd 150 --provider claude # custom

codeburn plan set none # disable plan view

codeburn plan # show current

codeburn plan reset # remove plan config

Subscription tracking for Claude Pro, Claude Max, and Cursor Pro. The dashboard shows a progress bar of API-equivalent cost against your plan price. Supports custom plans. Presets use publicly stated plan prices (as of April 2026); they do not model exact token allowances, because vendors do not publish precise consumer-plan limits.

Currency

codeburn currency GBP # set to British Pounds

codeburn currency AUD # set to Australian Dollars

codeburn currency JPY # set to Japanese Yen

codeburn currency # show current setting

codeburn currency --reset # back to USD

Any ISO 4217 currency code is supported (162 currencies). Exchange rates fetched from Frankfurter (European Central Bank data, free, no API key) and cached for 24 hours. Config stored at ~/.config/codeburn/config.json. The currency setting applies everywhere: dashboard, status bar, menu bar, CSV/JSON exports, and JSON API output.

Model Aliases

If you see $0.00 for some models, the model name reported by your provider does not match any entry in the LiteLLM pricing data. This commonly happens when using a proxy that rewrites model names.

codeburn model-alias "my-proxy-model" "claude-opus-4-6" # add alias

codeburn model-alias --list # show configured aliases

codeburn model-alias --remove "my-proxy-model" # remove alias

Aliases are stored in ~/.config/codeburn/config.json and applied at runtime before pricing lookup. The target name can be anything in the LiteLLM model list or a canonical name from the fallback table (e.g. claude-sonnet-4-6, claude-opus-4-5, gpt-4o). Built-in aliases ship for known proxy model name variants. User-configured aliases take precedence over built-ins.

Filtering

codeburn report --project myapp # show only projects matching "myapp"

codeburn report --exclude myapp # show everything except "myapp"

codeburn report --exclude myapp --exclude tests # exclude multiple projects

codeburn month --project api --project web # include multiple projects

codeburn export --project inventory # export only "inventory" project data

Filter by provider, project name (case-insensitive substring), or exact date range. The --project and --exclude flags work on all commands and can be combined with --provider.

codeburn report --from 2026-04-01 --to 2026-04-10 # explicit window

codeburn report --from 2026-04-01 # this date through today

codeburn report --to 2026-04-10 # earliest data through this date

Either flag alone is valid. Inverted or malformed dates exit with a clear error. In the TUI, the custom range sets the initial load only; pressing 1 through 5 switches back to predefined periods.

JSON Output

report, today, and month support --format json to output the full dashboard data as structured JSON to stdout:

codeburn report --format json # 7-day JSON report

codeburn today --format json # today's data as JSON

codeburn month --format json # this month as JSON

codeburn report -p 30days --format json # 30-day window

The JSON includes all dashboard panels: overview (cost, calls, sessions, cache hit %), daily breakdown, projects (with avgCostPerSession), models with token counts, activities with one-shot rates, core tools, MCP servers, and shell commands. Pipe to jq for filtering:

codeburn report --format json | jq '.projects'

codeburn today --format json | jq '.overview.cost'

For lighter output, use status --format json (today and month totals only) or file exports (export -f json).

Menu Bar

codeburn menubar

One command: downloads the latest .app, installs into ~/Applications, and launches it. Re-run with --force to reinstall. Native Swift and SwiftUI app lives in mac/ (see mac/README.md for build details).

The menubar icon always shows today's spend (so $0 is normal if you have not used AI tools today). Click to open a popover with agent tabs, period switcher (Today, 7 Days, 30 Days, Month, All), Trend, Forecast, Pulse, Stats, and Plan insights, activity and model breakdowns, optimize findings, and CSV/JSON export. Refreshes every 30 seconds.

Compact mode shrinks the menubar item to fit the text, dropping decimals (e.g. $110 instead of $110.20):

defaults write org.agentseal.codeburn-menubar CodeBurnMenubarCompact -bool true

Relaunch the app to apply. To revert: defaults delete org.agentseal.codeburn-menubar CodeBurnMenubarCompact.

Reading the Dashboard

CodeBurn surfaces the data; you read the story. A few patterns worth knowing:

| Signal you see | What it might mean |
|---|---|
| Cache hit < 80% | System prompt or context is not stable, or caching not enabled |
| Lots of Read calls per session | Agent re-reading same files, missing context |
| Low 1-shot rate (Coding 30%) | Agent struggling with edits, retry loops |
| Opus 4.6 dominating cost on small turns | Overpowered model for simple tasks |
| dispatch_agent / task heavy | Sub-agent fan-out, expected or excessive |
| No MCP usage shown | Either you don't use MCP servers, or your config is broken |
| Bash dominated by git status, ls | Agent exploring instead of executing |
| Conversation category dominant | Agent talking instead of doing |

These are starting points, not verdicts. A 60% cache hit on a single experimental session is fine. A persistent 60% cache hit across weeks of work is a config issue.

How It Reads Data

Claude Code stores session transcripts as JSONL at ~/.claude/projects/<sanitized-path>/<session-id>.jsonl. Each assistant entry contains model name, token usage (input, output, cache read, cache write), tool_use blocks, and timestamps.

Codex stores sessions at ~/.codex/sessions/YYYY/MM/DD/rollout-*.jsonl with token_count events containing per-call and cumulative token usage, and function_call entries for tool tracking.

Cursor stores session data in a SQLite database at ~/Library/Application Support/Cursor/User/globalStorage/state.vscdb (macOS), ~/.config/Cursor/User/globalStorage/state.vscdb (Linux), or %APPDATA%/Cursor/User/globalStorage/state.vscdb (Windows). Token counts are in cursorDiskKV table entries with bubbleId: key prefix. Requires better-sqlite3 (installed as optional dependency). Parsed results are cached at ~/.cache/codeburn/cursor-results.json and auto-invalidate when the database changes.

OpenCode stores sessions in SQLite databases at ~/.local/share/opencode/opencode*.db. CodeBurn queries the session, message, and part tables read-only, extracts token counts and tool usage, and recalculates cost using the LiteLLM pricing engine. Falls back to OpenCode's own cost field for models not in our pricing data. Subtask sessions (parent_id IS NOT NULL) are excluded to avoid double counting. Supports multiple channel databases and respects XDG_DATA_HOME.

Pi / OMP stores sessions as JSONL at ~/.pi/agent/sessions/<sanitized-cwd>/*.jsonl (Pi) and ~/.omp/agent/sessions/<sanitized-cwd>/*.jsonl (OMP). Each assistant message carries token usage (input, output, cacheRead, cacheWrite) plus inline toolCall content blocks. CodeBurn extracts token counts, normalizes tool names to the standard set (bash to Bash, dispatch_agent to Agent), and pulls bash commands from toolCall.arguments.command for the shell breakdown.

Gemini CLI stores sessions as single JSON files at ~/.gemini/tmp/<project>/chats/session-*.json. Each session embeds real token counts (input, output, cached, thoughts) per message. Gemini reports input tokens inclusive of cached; CodeBurn subtracts cached from input before pricing to avoid double charging.

OpenClaw stores agent sessions as JSONL at ~/.openclaw/agents/*.jsonl. Also checks legacy paths .clawdbot, .moltbot, .moldbot. Token usage comes from assistant message usage blocks; model from modelId or message.model fields.

Roo Code / KiloCode are Cline-family VS Code extensions. CodeBurn reads ui_messages.json from each task directory in VS Code's globalStorage, filtering type: "say" entries with say: "api_req_started" to extract token counts.

CodeBurn deduplicates messages (by API message ID for Claude, by cumulative token cross-check for Codex, by conversation/timestamp for Cursor, by session ID for Gemini, by session+message ID for OpenCode, by responseId for Pi/OMP), filters by date range per entry, and classifies each turn.
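Whatever key a provider uses, the deduplication step itself reduces to keeping the first record seen for each key. A minimal sketch, assuming a hypothetical `ParsedCall` shape with a precomputed `dedupKey`:

```typescript
// Hypothetical parsed record; dedupKey stands in for whichever
// provider-specific identifier is used (message ID, responseId, ...).
interface ParsedCall {
  dedupKey: string;
  costUsd: number;
}

// Keep the first occurrence of each key, preserving input order.
function dedupeCalls(calls: ParsedCall[]): ParsedCall[] {
  const seen = new Set<string>();
  const unique: ParsedCall[] = [];
  for (const call of calls) {
    if (seen.has(call.dedupKey)) continue;
    seen.add(call.dedupKey);
    unique.push(call);
  }
  return unique;
}

const parsed: ParsedCall[] = [
  { dedupKey: 'msg-1', costUsd: 0.02 },
  { dedupKey: 'msg-2', costUsd: 0.05 },
  { dedupKey: 'msg-1', costUsd: 0.02 }, // same message seen in a second file
];

const uniqueCalls = dedupeCalls(parsed);
console.log(uniqueCalls.length);
```

The key has to be stable across re-parses; a key built from fields that change after the fact (such as token counts updated in place) would let the same call through twice.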

Environment Variables

| Variable | Description |
|---|---|
| CLAUDE_CONFIG_DIR | Override Claude Code data directory (default: ~/.claude) |
| CODEX_HOME | Override Codex data directory (default: ~/.codex) |
| FACTORY_DIR | Override Droid data directory (default: ~/.factory) |
| QWEN_DATA_DIR | Override Qwen data directory (default: ~/.qwen/projects) |

Project Structure

src/

cli.ts Commander.js entry point

dashboard.tsx Ink TUI (React for terminals)

parser.ts JSONL reader, dedup, date filter, provider orchestration

models.ts LiteLLM pricing, cost calculation

classifier.ts 13-category task classifier

compare-stats.ts Model comparison engine

daily-cache.ts Persistent daily cache with migration

day-aggregator.ts Daily aggregation from session data

types.ts Type definitions

format.ts Text rendering (status bar)

menubar-json.ts Payload builder for the macOS menubar app

export.ts CSV/JSON multi-period export

config.ts Config file management (~/.config/codeburn/)

currency.ts Currency conversion, exchange rates

sqlite.ts SQLite adapter (lazy-loads better-sqlite3)

optimize.ts Waste pattern detection engine

providers/

types.ts Provider interface definitions

index.ts Provider registry

claude.ts Claude Code session discovery

codex.ts Codex session discovery and JSONL parsing

copilot.ts GitHub Copilot session parsing

cursor.ts Cursor SQLite parsing, language extraction

cursor-agent.ts cursor-agent CLI session parsing

droid.ts Droid session discovery

gemini.ts Gemini CLI session JSON parsing

kilo-code.ts KiloCode VS Code extension parsing

kiro.ts Kiro .chat JSON session parsing

openclaw.ts OpenClaw agent JSONL parsing

opencode.ts OpenCode SQLite session parsing

pi.ts Pi/OMP agent JSONL session parsing

qwen.ts Qwen CLI JSONL session parsing

roo-code.ts Roo Code VS Code extension parsing

goose.ts Goose SQLite session parsing

antigravity.ts Antigravity conversation parsing


License

MIT

Credits

Inspired by ccusage and CodexBar. Pricing data from LiteLLM. Exchange rates from Frankfurter.

Built by AgentSeal.