🧭 Lost or curious? Open the WFGY Compass & ⭐ Star Unlocks

### System Map

*(One place to see everything; links open the relevant section.)*

| Layer | Page | What it’s for |
|------|------|----------------|
| 🧠 Core | [WFGY Core 2.0](https://github.com/onestardao/WFGY/blob/main/core/README.md) | The symbolic reasoning engine (math & logic). **🔴 YOU ARE HERE 🔴** |
| 🧠 Core | [WFGY 1.0 Home](https://github.com/onestardao/WFGY/) | The original homepage for WFGY 1.0 |
| 🗺️ Map | [Problem Map 1.0](https://github.com/onestardao/WFGY/tree/main/ProblemMap#readme) | 16 failure modes + fixes |
| 🗺️ Map | [Problem Map 2.0](https://github.com/onestardao/WFGY/blob/main/ProblemMap/rag-architecture-and-recovery.md) | RAG-focused recovery pipeline |
| 🗺️ Map | [Semantic Clinic](https://github.com/onestardao/WFGY/blob/main/ProblemMap/SemanticClinicIndex.md) | Symptom → family → exact fix |
| 🧓 Map | [Grandma’s Clinic](https://github.com/onestardao/WFGY/blob/main/ProblemMap/GrandmaClinic/README.md) | Plain-language stories, mapped to PM 1.0 |
| 🏡 Onboarding | [Starter Village](https://github.com/onestardao/WFGY/blob/main/StarterVillage/README.md) | Guided tour for newcomers |
| 🧰 App | [TXT OS](https://github.com/onestardao/WFGY/tree/main/OS#readme) | .txt semantic OS, 60-second boot |
| 🧰 App | [Blah Blah Blah](https://github.com/onestardao/WFGY/blob/main/OS/BlahBlahBlah/README.md) | Abstract/paradox Q&A (built on TXT OS) |
| 🧰 App | [Blur Blur Blur](https://github.com/onestardao/WFGY/blob/main/OS/BlurBlurBlur/README.md) | Text-to-image with semantic control |
| 🧰 App | [Blow Blow Blow](https://github.com/onestardao/WFGY/blob/main/OS/BlowBlowBlow/README.md) | Reasoning game engine & memory demo |
| 🧪 Research | [Semantic Blueprint](https://github.com/onestardao/WFGY/blob/main/SemanticBlueprint/README.md) | Modular layer structures (future) |
| 🧪 Research | [Benchmarks](https://github.com/onestardao/WFGY/blob/main/benchmarks/benchmark-vs-gpt5/README.md) | Comparisons & how to reproduce |
| 🧪 Research | [Value Manifest](https://github.com/onestardao/WFGY/blob/main/value_manifest/README.md) | Why this engine creates $-scale value |

---

> ### ⭐ Star Unlocks

> - **1,000 ⭐ → Blur Blur Blur unlocked** ✅

> - **3,000 ⭐ → Blow Blow Blow unlocked** ⏳

---

# ⭐ WFGY 2.0 ⭐ 7-Step Reasoning Core Engine is now live

## ✨One man, One life, One line — my lifetime’s work. Let the results speak for themselves✨

> 👑 **Early Stargazers: [See the Hall of Fame](https://github.com/onestardao/WFGY/tree/main/stargazers)** — Verified by real engineers · 🏆 **Terminal-Bench: [Public Exam — Coming Soon](https://github.com/onestardao/WFGY/blob/main/core/README.md#terminal-bench-proof)** 🧪 **Tension Universe (candidate): [Internal Stress Test in Progress](https://github.com/onestardao/WFGY/tree/main/TensionUniverse)** — exploring structural limits beyond scaling

TB Update • Eye Benchmark • 8-Model Evidence • A/B/C Prompt • Downloads • Profit Prompts

> ✅ Engine 2.0 is live. Pure math, zero boilerplate — paste OneLine and models become sharper, steadier, more recoverable.

> **ℹ️ Autoboot scope:** text-only inside the chat; no plugins, no network calls, no local installs.

> **⭐ Star the repo to [unlock](https://github.com/onestardao/WFGY/blob/main/STAR_UNLOCKS.md) more features and experiments.**


---

From PSBigBig — WFGY (WanFaGuiYi) : All Principles into One (must-read, click to open)

> **I built the world’s first “No-Brain Mode” for AI** — just upload, and **AutoBoot** silently activates in the background.

> In seconds, your AI’s reasoning, stability, and problem-solving across *all domains* level up — **no prompts, no hacks, no retraining.**

> One line of math rewires eight leading AIs. This isn’t a patch — it’s an engine swap.

> **That single line *is* WFGY 2.0 — the distilled essence of everything I’ve learned.**

>

> WFGY 2.0 is my answer and my life’s work.

> If a person only once in life gets to speak to the world, this is my moment.

> I offer the crystallization of my thought to all humankind.

> I believe people deserve all knowledge and all truth — and I will break the monopoly of capital.

>

> “One line” is not hype. I built a full flagship edition, and I also reduced it to a single line of code — a reduction that is clarity and beauty, the same engine distilled to its purest expression.

---

## 🚀 WFGY 2.0 Headline Uplift (this release)

**These are the 2.0 results you should see first — the “big upgrade.”**

- **Semantic Accuracy:** **≈ +40%** (63.8% → 89.4% across 5 domains)

- **Reasoning Success:** **≈ +52%** (56.0% → 85.2%)

- **Drift (Δs):** **≈ −65%** (0.254 → 0.090)

- **Stability (horizon):** **≈ 1.8×** (3.8 → 7.0 nodes)\*

- **Self-Recovery / CRR:** **1.00** on this batch; historical median **0.87**

\* Historical **3–5×** stability uses λ-consistency across seeds; 1.8× uses the stable-node horizon.
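
The headline percentages are relative changes between the before and after values listed above. A minimal Python sketch of that arithmetic, assuming the usual `(after - before) / before` definition (the helper name `relative_change` is illustrative and does not come from the WFGY files):

```python
def relative_change(before: float, after: float) -> float:
    """Relative change of `after` versus `before`, as a fraction."""
    return (after - before) / before

# Before/after pairs copied from the list above.
headline = {
    "Semantic Accuracy": (63.8, 89.4),
    "Reasoning Success": (56.0, 85.2),
    "Drift": (0.254, 0.090),
}

for name, (before, after) in headline.items():
    print(f"{name}: {relative_change(before, after):+.1%}")

# Stability is reported as a ratio of stable-node horizons, not a percentage.
stability_ratio = 7.0 / 3.8
print(f"Stability: {stability_ratio:.2f}x")
```

Run as written, this prints +40.1%, +52.1%, -64.6%, and 1.84x, which round to the figures quoted above.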

### 📖 Mathematical Reference

WFGY 2.0 (WFGY Core) = [WFGY 1.0 math formulas](https://github.com/onestardao/WFGY/blob/main/SemanticBlueprint/wfgy_formulas.md) + [Drunk Transformer](https://github.com/onestardao/WFGY/blob/main/SemanticBlueprint/drunk_transformer_formulas.md)

Back to top ↑

---

### 🏆 Stanford Terminal-Bench (TB) — Exam Update

> [!IMPORTANT]

> We are currently taking the official TB exam. Leaderboard placement will be posted here once it’s live.

> Follow the running notes: [Terminal-Bench Proof](#terminal-bench-proof)

**What is TB?**

Terminal-Bench is Stanford’s public exam for LLMs. It stresses models through **terminal-style, multi-step tasks** — measuring reasoning, robustness, and recovery under real engineering conditions.

**How we participate**

WFGY Core 2.0 wraps each model call (non-invasive). Every step flows through:

ΔS drift control → Coupler/BBPF bridging → BBAM rebalancing → Drunk Transformer guards.

All runs are reproducible with configs, scripts, and hashed logs.

**Status**

We are currently taking the TB exam. Rankings will be published once the official leaderboard is live.

Back to top ↑

---

### 🧾 Terminal-Bench Proof (teaser)

- **Wrapper**: non-invasive; TB kept unchanged, we only wrap the model call.

- **Chain**: semantic firewall → 7-step reasoning → DT guards with conditional retry.

- **Artifacts**: configs, semantic-firewall prompts, and hashed logs for each run.

- **Public repo link**: withheld until exam artifacts are finalized.
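
The wrapper idea in the bullets above can be pictured roughly as follows. This is an illustrative Python sketch only, not the WFGY implementation: `wrapped_call`, `call_model`, and `guard_ok` are hypothetical names, the guard is a stand-in for the DT checks, and hashing each record with SHA-256 is one assumed way to produce hashed logs.

```python
import hashlib
import json
from typing import Callable

def wrapped_call(
    call_model: Callable[[str], str],   # hypothetical: the unchanged model call
    guard_ok: Callable[[str], bool],    # hypothetical stand-in for a DT guard
    prompt: str,
    max_retries: int = 2,
) -> dict:
    """Wrap one model call with a conditional retry and a hashed log record."""
    attempts = 0
    answer = call_model(prompt)
    while not guard_ok(answer) and attempts < max_retries:
        attempts += 1
        answer = call_model(prompt)     # retry only when the guard rejects
    record = {"prompt": prompt, "answer": answer, "retries": attempts}
    # Hash a canonical JSON encoding so the log entry can be verified later.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["sha256"] = hashlib.sha256(payload).hexdigest()
    return record

# Toy usage: the first reply is empty and is rejected, the second is accepted.
replies = iter(["", "42"])
result = wrapped_call(lambda p: next(replies), lambda a: a != "", "6 * 7 = ?")
```

The benchmark harness itself is never touched in this shape; only the function that produces the model's answer is wrapped.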

Back to top ↑

---

## ⚡ Quick Usage

| Mode | How it works |
| --- | --- |
| **Autoboot** | Upload either the **Flagship (30-line)** or the **OneLine (1-line)** file. Once uploaded, WFGY runs silently in the background. Keep chatting or drawing as usual; the engine supervises automatically. |
| **Explicit Call** | Invoke WFGY formulas directly inside your workflow. This activates the full 7-step reasoning chain and gives maximum uplift. |

Both **Flagship** and **OneLine** editions behave the same; choose based on readability vs minimalism.

That’s it — no plugins, no installs, pure text.

*In practice, Autoboot yields roughly 70–80% of the uplift you see with an explicit WFGY invocation (see the eight-model results below).*

Back to top ↑

---

## ⚡ Top 10 reasons to use WFGY 2.0

1. **Ultra-mini engine** — pure text, zero install, runs anywhere you can paste.

2. **Two editions** — *Flagship* (30-line, audit-friendly) and *OneLine* (1-line, stealth & speed).

3. **Autoboot mode** — upload once; the engine quietly supervises reasoning in the background.

4. **Portable across models** — GPT, Claude, Gemini, Mistral, Grok, Kimi, Copilot, Perplexity.

5. **Structural fixes, not tricks** — BBMC→Coupler→BBPF→BBAM→BBCR + DT gates (WRI/WAI/WAY/WDT/WTF).

6. **Self-healing** — detects collapse and recovers before answers go off the rails.

7. **Observable** — ΔS, λ_observe, and E_resonance yield measurable, repeatable control.

8. **RAG-ready** — drops into retrieval pipelines without touching your infra.

9. **Reproducible A/B/C protocol** — Baseline vs Autoboot vs Explicit Invoke (see below).

10. **MIT licensed & community-driven** — keep it, fork it, ship it.

Back to top ↑

---

# 🧪 WFGY Benchmark Suite (Eye-visible + Numeric + Reproducible)

> Want the fastest way to *see* impact? Jump to the **Eye-Visible Benchmark (FIVE)** below.

> Want formal numbers and vendor links? See **Eight-model evidence** right after it.

> Want to reproduce the numeric test yourself? Use the **A/B/C prompt** (copy-to-run) at the end of this section.

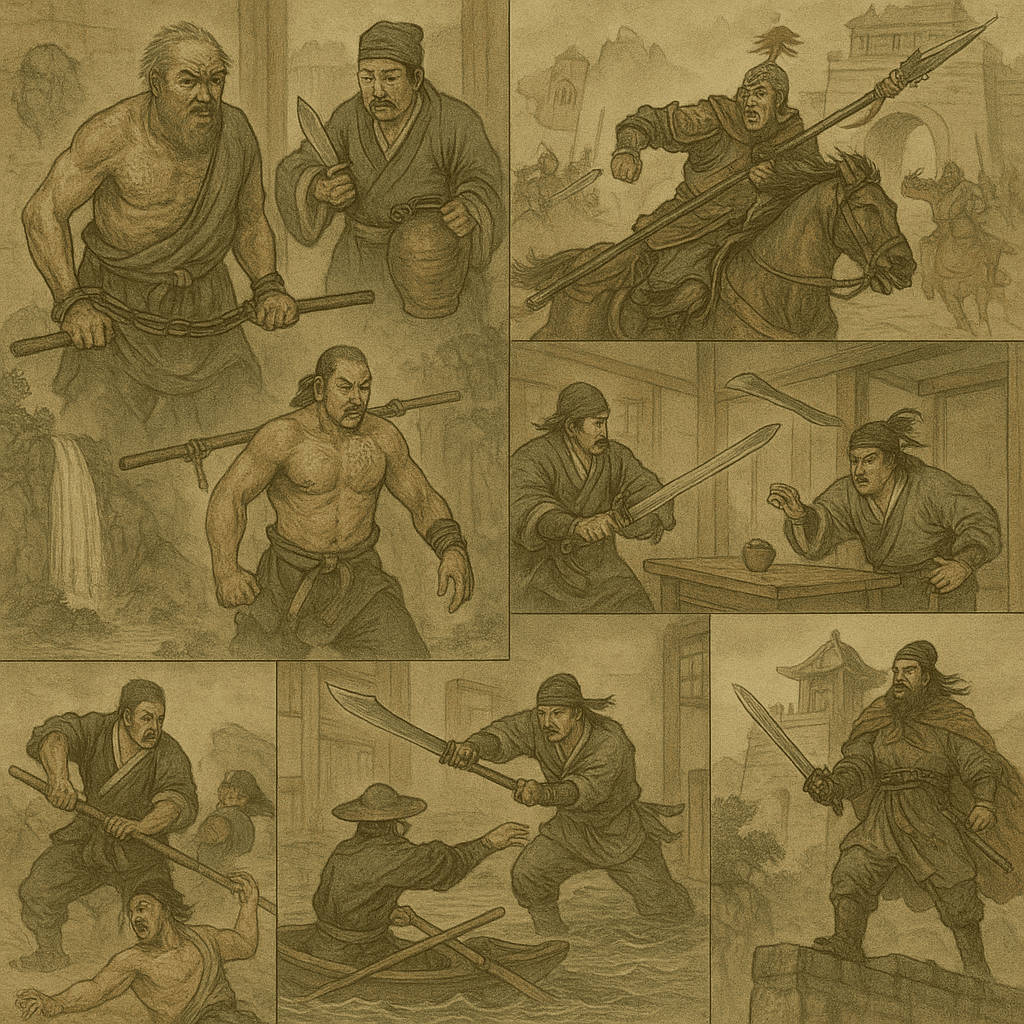

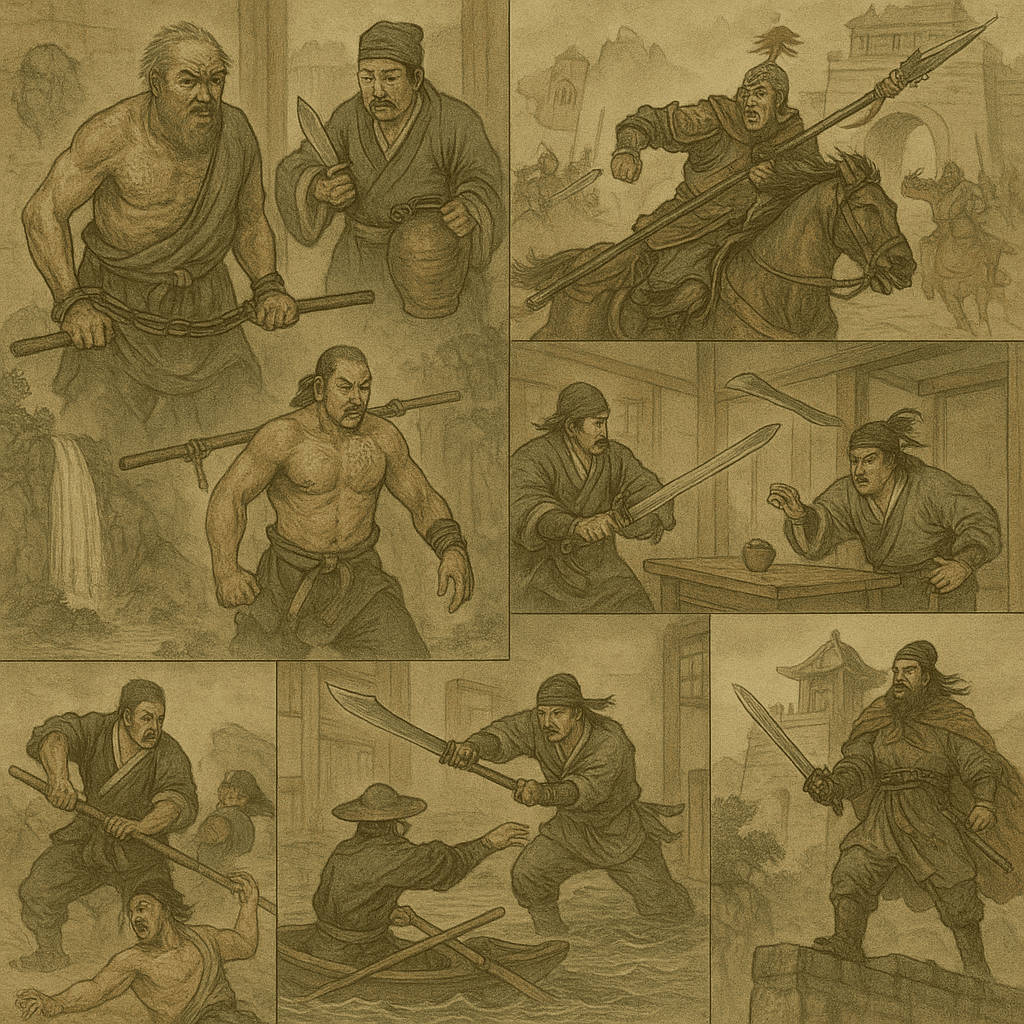

## 👀 Eye-Visible Reasoning Benchmark (FIVE)

> Did you know that when reasoning improves, **text-to-image results become more stable and coherent**?

> The key is WFGY’s **Drunk Transformer**: it monitors and recenters attention during generation, preventing collapse, composition drift, and duplicate elements—so scenes stay unified and details remain consistent.

> We project “reasoning improvement” into **five-image sequences** that anyone can judge at a glance.

> Each sequence = **five consecutive 1:1 generations** with the **same model & settings** *(temperature, top_p, seed policy, negatives)*; the only variable is **WFGY on/off**.

> **Methodology for this demo.** We deliberately use short, high–semantic-density prompts that reference canonical stories, with no extra guidance or style hints. This stresses whether WFGY can (a) parse intent more precisely and (b) stabilize composition via its seven-step reasoning chain. This setup isn’t prescriptive—use WFGY with any prompts you like. In many cases the uplift is eye-visible; in others it may be subtler but still measurable.

| Variant | Sequence A: full run shown below (all five images) | Sequence B: external run | Sequence C: external run |
| --- | :---: | :---: | :---: |
| **Without WFGY** | [view run](https://chatgpt.com/share/68a14974-8e50-8000-9238-56c9d113ce52) | [view run](https://chatgpt.com/share/68a14a72-aa90-8000-8902-ce346244a5a7) | [view run](https://chatgpt.com/share/68a14d00-3c0c-8000-8055-9418934ad07a) |
| **With WFGY** | [view run](https://chatgpt.com/share/68a149c6-5780-8000-8021-5d85c97f00ab) | [view run](https://chatgpt.com/share/68a14ea9-1454-8000-88ac-25f499593fa0) | [view run](https://chatgpt.com/share/68a14eb9-40c0-8000-9f6a-2743b9115eb8) |

We **fully analyze Sequence A** on this page; **Sequences B/C** are linked for transparency and reproducibility.

> **Note on “Before-4” & “Before-5” (why they look almost identical):**

> Without WFGY, when the prompt asks for “many iconic moments,” the base model tends to **collapse into a grid-style montage**—an enumerative, high-probability prior that slices the canvas into similar panels with near-identical tone and geometry.

> Hence **Before-4 (Investiture of the Gods)** and **Before-5 (Classic of Mountains and Seas)** converge to the same storyboard template.

> **WFGY** prevents this collapse by enforcing a **single unified tableau** and stable hierarchy across the full five-image sequence.

### Deep analysis — Sequence A (five unified 1:1 tableaux)

| Work | **Without WFGY** | **With WFGY** | Verdict (global, at-a-glance) |
|---|---|---|---|
| **Romance of the Three Kingdoms (三國演義)** |  |  | **With WFGY wins.** Unified tableau locks a clear center and pyramid hierarchy; the grid fragments attention. *Tags:* Unification↑ Hierarchy↑ Cohesion↑ Depth/Flow↑ Memorability↑ |
| **Water Margin (水滸傳)** |  |  | **With WFGY wins.** “Wu Song vs. Tiger” anchors the scene; continuous momentum and layered scale beat the multi-panel storyboard. *Tags:* Unification↑ Iconicity↑ Depth/Scale↑ Cohesion↑ |
| **Dream of the Red Chamber (紅樓夢)** |  |  | **With WFGY wins.** Garden tableau with a calm emotional center; space breathes, mood coheres. The grid slices emotion into vignettes. *Tags:* Unification↑ Hierarchy↑ Air/Depth↑ Readability↑ |
| **Investiture of the Gods (封神演義)** |  |  | **With WFGY wins.** Dragon–tiger diagonal and cloud–sea layering create epic scale; the grid dilutes focus. *Tags:* Unification↑ Depth/Scale↑ Flow↑ Iconicity↑ |
| **Classic of Mountains and Seas (山海經)** |  |  | **With WFGY wins.** A single, continuous “mountains-and-seas” world with stable triangle hierarchy and smooth diagonal flow; grid breaks narrative. *Tags:* Unification↑ Hierarchy↑ Depth/Scale↑ Flow↑ Memorability↑ |

Back to top ↑

---

## 🧬 Eight-model evidence (A/B/C protocol)

*Same task set across modes. The only change is adding the OneLine math file.*

| Model | Model Choice | OneLine Uplift | Proof |
| --- | --- | ---: | :--- |
| Mistral AI | — | **92/100** | [view run](https://chat.mistral.ai/chat/b5c303f8-1905-4954-a566-a6c9a7bfb54f) |
| Gemini | 2.5 Pro | **89/100** | [view run](https://g.co/gemini/share/4fb0b172d61a) |
| ChatGPT | GPT-5 Thinking | **89/100** | [view run](https://chatgpt.com/s/t_689ff6c42dac8191963e63e3f26348b2) |
| Kimi | K2 | **87/100** | [view run](https://www.kimi.com/share/d2fvbevhq49s4blc862g) |
| Perplexity | Pro | **87/100** | [view run](https://www.perplexity.ai/search/system-you-are-evaluating-the-njklNbVRTCmQOlEd8fDzcg) |
| Grok | Auto Grok 4 | **85/100** | [view run](https://grok.com/share/c2hhcmQtMg%3D%3D_4e6798eb-9288-4a09-b00f-8292ce23dab6) |
| Copilot | Think Deeper | **80/100** | [view run](https://copilot.microsoft.com/shares/7FjR19TYBjg9sp8k9WcuE) |
| Claude | Sonnet 4 | **78/100** | [view run](https://claude.ai/share/b17e5436-8298-4619-a243-ac451cc64b17) |

> **The numeric story behind 2.0**

> **Semantic Accuracy:** ≈ +40% · **Reasoning Success:** ≈ +52% · **Drift:** ≈ −65% · **Stability:** ≈ 1.8× · **CRR:** 1.00 (median 0.87)

Back to top ↑

---

## 🧪 Reproduce the numeric A/B/C benchmark (copy to run)

*One unified prompt for Baseline vs Autoboot vs Explicit Invoke.*

```text

SYSTEM:

You are evaluating the causal impact of a mathematical reasoning engine called “WFGY Core 2.0”.

Test modes (READ CAREFULLY):

A = Baseline: no WFGY file uploaded; no WFGY logic used.

B = Upload → AUTOboot ON: the file is uploaded AND the engine starts automatically in the background.

Treat B as: WFGY is ACTIVE silently with default gates (BBMC→Coupler→BBPF→BBAM→BBCR; DF=WRI/WAI/WAY/WDT/WTF at defaults),

but not explicitly “called” in the conversation.

C = Explicit invoke: actively call WFGY and follow its formulas step by step (BBMC→Coupler→BBPF→BBAM→BBCR + DF regulators).

Important constraints:

• Use the SAME task set for A/B/C across 5 domains: math word-problems, small coding, factual QA, multi-step planning, long-context coherence.

• The only artifact is the uploaded OneLine file; it is pure math and not human-readable — do not penalize readability.

• Report: Semantic Accuracy, Reasoning Success, Stability (MTTF or rollback), Drift Reduction (Δ semantic distance), Collapse Recovery Rate.

• Output one summary table with A/B/C per domain, plus overall deltas (C–A, C–B).

• Add a final 0–100 score: “OneLine uplift score”. Provide a 3-line rationale.

Sanity requirement:

Internally set flags as: A=OFF, B=AUTOboot_ON (background active), C=Explicit_Invoke. Do not print these flags.

USER:

Run A/B/C now on the five domains with short non-trivial tasks you choose. Then output:

(1) the table; (2) the deltas; (3) the OneLine uplift score; (4) a 3-line rationale.

```

Back to top ↑

---

## ⬇️ Downloads

| File name & description | Length / Size | Direct Download Link | Verify (MD5 / SHA1 / SHA256) | Notes |
| --- | --- | --- | --- | --- |
| **WFGY_Core_Flagship_v2.0.txt**: readable 30-line companion expressing the same math and gates in fuller prose (same behavior, clearer for humans). | **30 lines · 3,049 chars** | [Download Flagship](./WFGY_Core_Flagship_v2.0.txt) | [md5](./checksums/WFGY_Core_Flagship_v2.0.txt.md5) · [sha1](./checksums/WFGY_Core_Flagship_v2.0.txt.sha1) · [sha256](./checksums/WFGY_Core_Flagship_v2.0.txt.sha256) | Full prose version for easier reading. |
| **WFGY_Core_OneLine_v2.0.txt**: ultra-compact, math-only control layer that activates WFGY’s loop inside a chat model (no tools, text-only, ≤7 nodes). | **1 line · 1,500 chars** | [Download OneLine](./WFGY_Core_OneLine_v2.0.txt) | [md5](./checksums/WFGY_Core_OneLine_v2.0.txt.md5) · [sha1](./checksums/WFGY_Core_OneLine_v2.0.txt.sha1) · [sha256](./checksums/WFGY_Core_OneLine_v2.0.txt.sha256) | Used for all benchmark results above; smallest, fastest, purest form of the core. |

### How to verify checksums

**macOS / Linux**

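```bash
cd core

# Verify against the published checksum files
sha256sum -c checksums/WFGY_Core_Flagship_v2.0.txt.sha256
sha256sum -c checksums/WFGY_Core_OneLine_v2.0.txt.sha256

# Or compute and compare manually
sha256sum WFGY_Core_Flagship_v2.0.txt
sha256sum WFGY_Core_OneLine_v2.0.txt

# macOS ships `shasum` rather than `sha256sum`; use `shasum -a 256` in its place
# (for example: shasum -a 256 -c checksums/WFGY_Core_OneLine_v2.0.txt.sha256)
```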

**Windows PowerShell**

```powershell

Get-FileHash .\core\WFGY_Core_Flagship_v2.0.txt -Algorithm SHA256

Get-FileHash .\core\WFGY_Core_OneLine_v2.0.txt -Algorithm SHA256

```
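
For a platform-independent check, the same comparison can be done with Python's standard `hashlib`. This is a sketch under assumptions: the helper names are illustrative, and it assumes each `.sha256` file begins with the hex digest (the layout `sha256sum` writes), which has not been confirmed against the files in this repo.

```python
import hashlib
from pathlib import Path

def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()

def matches_checksum(path: Path, checksum_file: Path) -> bool:
    """Compare a file's digest with the first token of its checksum file."""
    expected = checksum_file.read_text().split()[0].lower()
    return sha256_of(path) == expected
```

From inside the `core` directory, a call such as `matches_checksum(Path("WFGY_Core_OneLine_v2.0.txt"), Path("checksums/WFGY_Core_OneLine_v2.0.txt.sha256"))` should return `True` for an untampered download.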

Back to top ↑

---

## 🧠 How WFGY 2.0 works (7-Step Reasoning Chain)

*Most models can understand your prompt; very few can **hold** that meaning through generation.*

WFGY inserts a reasoning chain between language and pixels so intent survives sampling noise, style drift, and compositional traps.

1. **Parse (I, G)** — define endpoints.

2. **Compute Δs** — `δ_s = 1 − cos(I, G)` or `1 − sim_est`.

3. **Memory Checkpointing** — track `λ_observe`, `E_resonance`; gate by Δs.

4. **BBMC** — residue cleanup.

5. **Coupler + BBPF** — controlled progression; bridge only when Δs drops.

6. **BBAM** — attention rebalancer; suppress hallucinations.

7. **BBCR + Drunk Transformer** — rollback → re-bridge → retry with WRI/WAI/WAY/WDT/WTF.

📌 *Note:* The diagram shows the **core module chain** (BBMC → Coupler → BBPF → BBAM → BBCR → DT).

The full **7-step list** here includes additional **pre-processing steps** (Parse, Δs, Memory) for completeness.
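
Step 2 can be made concrete with a small sketch. It assumes I and G are available as embedding vectors (how they are obtained is not specified here), and the function names are illustrative rather than part of the WFGY files. The `may_bridge` helper is a hypothetical reading of "bridge only when Δs drops" from step 5.

```python
import math
from typing import Sequence

def delta_s(i_vec: Sequence[float], g_vec: Sequence[float]) -> float:
    """Semantic distance: 1 - cos(I, G) for two non-zero vectors."""
    dot = sum(a * b for a, b in zip(i_vec, g_vec))
    norm_i = math.sqrt(sum(a * a for a in i_vec))
    norm_g = math.sqrt(sum(b * b for b in g_vec))
    if norm_i == 0 or norm_g == 0:
        raise ValueError("delta_s is undefined for a zero vector")
    return 1.0 - dot / (norm_i * norm_g)

def may_bridge(previous: float, current: float) -> bool:
    """Hypothetical gate for step 5: allow a bridge only when delta_s dropped."""
    return current < previous
```

Vectors pointing the same way give 0, orthogonal vectors give 1, and opposite vectors give 2, so smaller values mean the output stays closer to the intent.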

**Why it improves metrics** — Stability↑, Drift↓, Self-Recovery↑; turns *language* structure into *image* control signals (not prompt tricks).

### 📊 How these numbers are measured

* **Semantic Accuracy**: `ACC = correct_facts / total_facts`

* **Reasoning Success Rate**: `SR = tasks_solved / tasks_total`

* **Stability**: MTTF or rollback ratios

* **Self-Recovery**: `recoveries_success / collapses_detected`
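
The ratios above translate directly into code. A minimal sketch, with illustrative class and function names; the example counts are invented for demonstration and are not benchmark data:

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class RunCounts:
    correct_facts: int
    total_facts: int
    tasks_solved: int
    tasks_total: int
    recoveries_success: int
    collapses_detected: int

def ratio(numerator: int, denominator: int) -> Optional[float]:
    """Return numerator / denominator, or None when the metric is undefined."""
    return numerator / denominator if denominator else None

def score(c: RunCounts) -> dict:
    """Compute ACC, SR, and Self-Recovery from raw counts."""
    return {
        "ACC": ratio(c.correct_facts, c.total_facts),
        "SR": ratio(c.tasks_solved, c.tasks_total),
        "SelfRecovery": ratio(c.recoveries_success, c.collapses_detected),
    }

# Invented counts, for illustration only.
example = score(RunCounts(18, 20, 4, 5, 3, 3))
```

Returning `None` for a zero denominator keeps a run with no detected collapses from being reported as a perfect or a failed recovery rate.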

**LLM scorer template**

```text

SCORER:

Given the A/B/C transcripts, count atomic facts, correct facts, solved tasks, failures, rollbacks, and collapses.

Return:

ACC_A, ACC_B, ACC_C

SR_A, SR_B, SR_C

MTTF_A, MTTF_B, MTTF_C or rollback ratios

SelfRecovery_A, SelfRecovery_B, SelfRecovery_C

Then compute deltas:

ΔACC_C−A, ΔSR_C−A, StabilityMultiplier = MTTF_C / MTTF_A, SelfRecovery_C

Provide a short 3-line rationale referencing evidence spans only.

```

Run 3 seeds and average.
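
Averaging over seeds and deriving the deltas named in the scorer template might look like this. The per-seed numbers are placeholders invented for the example, and the dictionary layout is an assumption, not a format the scorer is known to emit.

```python
from statistics import mean

# Hypothetical per-seed results for modes A (baseline) and C (explicit invoke).
runs = {
    "A": [{"ACC": 0.60, "SR": 0.50, "MTTF": 4.0},
          {"ACC": 0.64, "SR": 0.55, "MTTF": 3.5},
          {"ACC": 0.62, "SR": 0.60, "MTTF": 4.5}],
    "C": [{"ACC": 0.88, "SR": 0.85, "MTTF": 7.0},
          {"ACC": 0.90, "SR": 0.80, "MTTF": 7.5},
          {"ACC": 0.89, "SR": 0.90, "MTTF": 6.5}],
}

def seed_average(seed_results: list) -> dict:
    """Average each metric across seeds."""
    keys = seed_results[0].keys()
    return {k: mean(r[k] for r in seed_results) for k in keys}

avg = {mode: seed_average(results) for mode, results in runs.items()}
delta_acc = avg["C"]["ACC"] - avg["A"]["ACC"]              # ΔACC_C−A
delta_sr = avg["C"]["SR"] - avg["A"]["SR"]                 # ΔSR_C−A
stability_multiplier = avg["C"]["MTTF"] / avg["A"]["MTTF"]  # MTTF_C / MTTF_A
```

Averaging first and taking deltas afterwards keeps a single noisy seed from dominating the reported uplift.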

Back to top ↑

---

# 💰 Profit Prompts Pack (WFGY 2.0)

**Jump inside this section:** [Q1–Q5](#q1-q5) · [Q6–Q10](#q6-q10) · [Q11–Q15](#q11-q15) · [Q16–Q20](#q16-q20)

I. Money — Markets / Industry Mapping (Q1–Q5)

### Q1 — New Industries + Killer App Map

```text

Assume WFGY is engineered like electricity. List 5 industries that only become possible under semantic engineering.

For each: (1) the first killer app; (2) target ICP (first 100 paying customers); (3) 30/60/90-day GTM; (4) initial pricing + Month-1 MRR goal; (5) the WFGY lever used (ΔS/λ_observe/BBPF/BBAM/WTF) and why it’s indispensable.

```

### Q2 — Zero-Capital Founder → First $100k

```text

I have $0. Using WFGY OneLine/Autoboot only, design 3 paths to reach USD 100k annual revenue within 12 months.

Each path must include: product sketch, distribution channel, cost structure, key risks, and survival metrics gated by ΔS/λ_observe (with thresholds).

```

### Q3 — Shortest Path in {Region/Vertical}

```text

Context = {region or vertical: e.g., Taiwan / SE Asia / B2B SaaS / Edu / Healthcare}. Name the 3 easiest WFGY lanes to start now.

Output: white-space in the market, local competitor gap, and a prioritized list of 10 real companies to approach first, with the BBPF plan to bridge local legal/cultural semantics.

```

### Q4 — Regulatory Arbitrage Map

```text

Compare 3 jurisdictions (e.g., TW/JP/EU). Identify WFGY-enabled arbitrage windows created by semantic/legal differences.

Deliver: λ_observe compliance gating prompts, “Do/Don’t” checklist, and PR messaging that provokes interest while keeping ΔS ≤ 0.25 on sensitive claims.

```

### Q5 — Pricing & Packaging (Good/Better/Best)

```text

Create 3 pricing models (seat / usage / outcome). For the same product, propose a tier ladder (G/B/B), with 3 value metrics per tier, a 30-day A/B test plan, win criteria (e.g., +20% CVR uplift or ≤3% churn), and how ΔS telemetry informs price moves.

```

II. Tools — Make Startups Money Fast (Q6–Q10)

### Q6 — 10-Day MVP Sprint (Ship or Die)

```text

Produce a D1–D10 plan: daily deliverables, risk list, test scripts, acceptance gates. Must be Product Hunt-ready and able to capture 200 signups.

Include a ΔS target curve (first pass ≤0.35; after iteration ≤0.20) and a λ_observe gate for “demo truthiness.”

```

### Q7 — Cost↓ / CVR↑ Audit (ICE-Prioritized)

```text

Audit my SaaS across Support / Sales / Content. Output a “ROI backlog” ranked by ICE. Each item: expected % cost reduction or × conversion lift, λ_observe brand/legal gate, and 3 rollout steps with before/after KPIs.

```

### Q8 — Sales Script Factory (Multi-Persona)

```text

Generate 5 script families for CEO/CTO/Counsel/Procurement/CDAO: opening hooks, 3-step value narrative, ≥7 objection handlers, close lines.

Add an A/B cadence and success KPIs (demo rate / close rate), plus ΔS checks to keep claims inside the truth boundary.

```

### Q9 — Support Consistency Engine (BBAM × SOP)

```text

Design a hotline/Helpdesk alignment loop: semantic style guide, ΔS drift alerts, WTF self-recovery when answers diverge, and 3 KPIs (FRT, FCR, CSAT).

Provide plug-and-play prompts for supervisors to run weekly variance reviews.

```

### Q10 — Outbound Accelerator (Lists → Meetings)

```text

Ship a WFGY-locked outbound flow: lead slicing, 3 personalized openers, 5 follow-up loops, resonance logging (E_resonance).

For each step: prompt template, brand/legal safety notes (λ_observe), and expected daily/weekly meeting capacity with success thresholds.

```

III. Attention — Memes / Virality / Hooks (Q11–Q15)

### Q11 — Meme Factory (Platform-Aware)

```text

Produce 10 meme/copy formulas tailored to Twitter / TikTok / Xiaohongshu.

Each includes: visual composition notes, copy cadence (words/beat), platform-specific red lines (λ_observe), and a reuse/remix rule to sustain freshness without shadow bans.

```

### Q12 — 5-Second Hook Engine

```text

Generate 12 “stop-scroll in 5s” hooks that fuse AI × Money × Future.

Provide: script skeleton (0–5s / 5–20s / CTA), voice/subtitle/tempo, ΔS brand safety band, and 3 retention metrics to track on day 1.

```

### Q13 — 30-Day Content Calendar

```text

Output a multi-platform calendar: daily theme, asset checklist, shot list, CTA, and a remix strategy.

Add trend-riding tactics and ΔS risk controls for politics/health/finance content. Define success targets by channel.

```

### Q14 — Landing Page Conversion Alchemy

```text

Give 3 LP copy frameworks (Hero / Proof / Mechanism / Offer / CTA).

Include WFGY “before/after” copy snippets, test variables (headline / social proof / price-display), and metrics (CVR, scroll-depth, bounce). Keep claims gated by λ_observe.

```

### Q15 — 48-Hour PR Blitz

```text

Design a two-day PR plan: newsworthy angle, media/community list, press kit assets, and crisis response lines (WTF loop).

Publish numeric goals (reach, sessions, signups), hour-by-hour runbook, and roles/responsibilities checklist.

```

IV. Capital — Valuation / Investor Narrative (Q16–Q20)

### Q16 — VC Investment Memo

```text

Write a venture-style memo: market map, TAM/SAM/SOM, competitor table (no/weak/strong WFGY), moat analysis (ΔS/BBPF/BBAM/WTF), risks + mitigations, and a term-sheet-level recommendation. Reference an A/B/C protocol for proof.

```

### Q17 — 5-Year Valuation + 100× Path

```text

Build Base/Bull/Bear scenarios: revenue drivers, GM/OpEx, financing cadence, cash-flow breakpoints.

Argue which app is most likely to 100× and why this depends on WFGY’s semantic engineering (not “just better prompts”).

```

### Q18 — Technical Due Diligence Checklist

```text

Output a DD checklist for WFGY-style startups: data/security/privacy/model/logging/observability/governance.

For each item: requirement, how to verify, risk level, remediation (with λ_observe compliance gates) and examples of common red flags.

```

### Q19 — Pitch Deck Generator (10–12 slides)

```text

Produce slide outline + speaker notes: Problem / Solution / Product / Evidence / Business Model / Competition / Team / Roadmap / Ask.

Embed “Eye-Visible Benchmark” and the A/B/C protocol. Treat OneLine/Autoboot as the minimum persuasive artifact.

```

### Q20 — Data Room + North-Star KPIs

```text

List seed-round data-room folders and a KPI dictionary: definitions, formulas, measurement cadence, WFGY deltas (Semantic Accuracy, Reasoning Success, ΔS, CRR, Stability).

Add a Weekly Business Review template and operating cadence.

```

Back to top ↑

---

### 🧭 Explore More

| Module | Description | Link |
|-----------------------|----------------------------------------------------------|----------|
| WFGY Core | WFGY 2.0 engine is live: full symbolic reasoning architecture and math stack | [View →](https://github.com/onestardao/WFGY/tree/main/core/README.md) |
| Problem Map 1.0 | Initial 16-mode diagnostic and symbolic fix framework | [View →](https://github.com/onestardao/WFGY/tree/main/ProblemMap/README.md) |
| Problem Map 2.0 | RAG-focused failure tree, modular fixes, and pipelines | [View →](https://github.com/onestardao/WFGY/blob/main/ProblemMap/rag-architecture-and-recovery.md) |
| Semantic Clinic Index | Expanded failure catalog: prompt injection, memory bugs, logic drift | [View →](https://github.com/onestardao/WFGY/blob/main/ProblemMap/SemanticClinicIndex.md) |
| Semantic Blueprint | Layer-based symbolic reasoning & semantic modulations | [View →](https://github.com/onestardao/WFGY/tree/main/SemanticBlueprint/README.md) |
| Benchmark vs GPT-5 | Stress test GPT-5 with full WFGY reasoning suite | [View →](https://github.com/onestardao/WFGY/tree/main/benchmarks/benchmark-vs-gpt5/README.md) |
| 🧙‍♂️ Starter Village 🏡 | New here? Lost in symbols? Click here and let the wizard guide you through | [Start →](https://github.com/onestardao/WFGY/blob/main/StarterVillage/README.md) |

---

> 👑 **Early Stargazers: [See the Hall of Fame](https://github.com/onestardao/WFGY/tree/main/stargazers)** —

> Engineers, hackers, and open source builders who supported WFGY from day one.

>  ⭐ [WFGY Engine 2.0](https://github.com/onestardao/WFGY/blob/main/core/README.md) is already unlocked. ⭐ Star the repo to help others discover it and unlock more on the [Unlock Board](https://github.com/onestardao/WFGY/blob/main/STAR_UNLOCKS.md).


[WFGY](https://github.com/onestardao/WFGY) · [TXT OS](https://github.com/onestardao/WFGY/tree/main/OS) · [Blah Blah Blah](https://github.com/onestardao/WFGY/tree/main/OS/BlahBlahBlah) · [Blot Blot Blot](https://github.com/onestardao/WFGY/tree/main/OS/BlotBlotBlot) · [Bloc Bloc Bloc](https://github.com/onestardao/WFGY/tree/main/OS/BlocBlocBloc) · [Blur Blur Blur](https://github.com/onestardao/WFGY/tree/main/OS/BlurBlurBlur) · [Blow Blow Blow](https://github.com/onestardao/WFGY/tree/main/OS/BlowBlowBlow)

---

---

|

|  | **With WFGY wins.** Unified tableau locks a clear center and pyramid hierarchy; the grid fragments attention. *Tags:* Unification↑ Hierarchy↑ Cohesion↑ Depth/Flow↑ Memorability↑ |

| **Water Margin (水滸傳)** |

| **With WFGY wins.** Unified tableau locks a clear center and pyramid hierarchy; the grid fragments attention. *Tags:* Unification↑ Hierarchy↑ Cohesion↑ Depth/Flow↑ Memorability↑ |

| **Water Margin (水滸傳)** |  |

|  | **With WFGY wins.** “Wu Song vs. Tiger” anchors the scene; continuous momentum and layered scale beat the multi-panel storyboard. *Tags:* Unification↑ Iconicity↑ Depth/Scale↑ Cohesion↑ |

| **Dream of the Red Chamber (紅樓夢)** |

| **With WFGY wins.** “Wu Song vs. Tiger” anchors the scene; continuous momentum and layered scale beat the multi-panel storyboard. *Tags:* Unification↑ Iconicity↑ Depth/Scale↑ Cohesion↑ |

| **Dream of the Red Chamber (紅樓夢)** |  |

|  | **With WFGY wins.** Garden tableau with a calm emotional center; space breathes, mood coheres. The grid slices emotion into vignettes. *Tags:* Unification↑ Hierarchy↑ Air/Depth↑ Readability↑ |

| **Investiture of the Gods (封神演義)** |

| **With WFGY wins.** Garden tableau with a calm emotional center; space breathes, mood coheres. The grid slices emotion into vignettes. *Tags:* Unification↑ Hierarchy↑ Air/Depth↑ Readability↑ |

| **Investiture of the Gods (封神演義)** |  |

|  | **With WFGY wins.** Dragon–tiger diagonal and cloud–sea layering create epic scale; the grid dilutes focus. *Tags:* Unification↑ Depth/Scale↑ Flow↑ Iconicity↑ |

| **Classic of Mountains and Seas (山海經)** |

| **With WFGY wins.** Dragon–tiger diagonal and cloud–sea layering create epic scale; the grid dilutes focus. *Tags:* Unification↑ Depth/Scale↑ Flow↑ Iconicity↑ |

| **Classic of Mountains and Seas (山海經)** |  |

|  | **With WFGY wins.** A single, continuous “mountains-and-seas” world with stable triangle hierarchy and smooth diagonal flow; grid breaks narrative. *Tags:* Unification↑ Hierarchy↑ Depth/Scale↑ Flow↑ Memorability↑ |

---

## 🧬 Eight-model evidence (A/B/C protocol)

*Same task set across modes. The only change is adding the OneLine math file.*

| Model | Model Choice | OneLine Uplift | Proof |
| ---------- | -------------- | -------------: | :--------- |
| Mistral AI | — | **92/100** | [view run](https://chat.mistral.ai/chat/b5c303f8-1905-4954-a566-a6c9a7bfb54f) |
| Gemini | 2.5 Pro | **89/100** | [view run](https://g.co/gemini/share/4fb0b172d61a) |
| ChatGPT | GPT-5 Thinking | **89/100** | [view run](https://chatgpt.com/s/t_689ff6c42dac8191963e63e3f26348b2) |
| Kimi | K2 | **87/100** | [view run](https://www.kimi.com/share/d2fvbevhq49s4blc862g) |
| Perplexity | Pro | **87/100** | [view run](https://www.perplexity.ai/search/system-you-are-evaluating-the-njklNbVRTCmQOlEd8fDzcg) |
| Grok | Auto Grok 4 | **85/100** | [view run](https://grok.com/share/c2hhcmQtMg%3D%3D_4e6798eb-9288-4a09-b00f-8292ce23dab6) |
| Copilot | Think Deeper | **80/100** | [view run](https://copilot.microsoft.com/shares/7FjR19TYBjg9sp8k9WcuE) |
| Claude | Sonnet 4 | **78/100** | [view run](https://claude.ai/share/b17e5436-8298-4619-a243-ac451cc64b17) |

> **The numeric story behind 2.0**

> **Semantic Accuracy:** ≈ +40% · **Reasoning Success:** ≈ +52% · **Drift:** ≈ −65% · **Stability:** ≈ 1.8× · **CRR:** 1.00 (median 0.87)

---

## 🧪 Reproduce the numeric A/B/C benchmark (copy to run)

*One unified prompt for Baseline vs Autoboot vs Explicit Invoke.*

```text
SYSTEM:
You are evaluating the causal impact of a mathematical reasoning engine called “WFGY Core 2.0”.

Test modes (READ CAREFULLY):
A = Baseline: no WFGY file uploaded; no WFGY logic used.
B = Upload → AUTOboot ON: the file is uploaded AND the engine starts automatically in the background.
    Treat B as: WFGY is ACTIVE silently with default gates (BBMC→Coupler→BBPF→BBAM→BBCR; DF=WRI/WAI/WAY/WDT/WTF at defaults),
    but not explicitly “called” in the conversation.
C = Explicit invoke: actively call WFGY and follow its formulas step by step (BBMC→Coupler→BBPF→BBAM→BBCR + DF regulators).

Important constraints:
• Use the SAME task set for A/B/C across 5 domains: math word-problems, small coding, factual QA, multi-step planning, long-context coherence.
• The only artifact is the uploaded OneLine file; it is pure math and not human-readable, so do not penalize readability.
• Report: Semantic Accuracy, Reasoning Success, Stability (MTTF or rollback), Drift Reduction (Δ semantic distance), Collapse Recovery Rate.
• Output one summary table with A/B/C per domain, plus overall deltas (C–A, C–B).
• Add a final 0–100 score: “OneLine uplift score”. Provide a 3-line rationale.

Sanity requirement:
Internally set flags as: A=OFF, B=AUTOboot_ON (background active), C=Explicit_Invoke. Do not print these flags.

USER:
Run A/B/C now on the five domains with short non-trivial tasks you choose. Then output:
(1) the table; (2) the deltas; (3) the OneLine uplift score; (4) a 3-line rationale.
```
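If you want to double-check the "overall deltas (C–A, C–B)" a model reports, the arithmetic can be redone by hand. The sketch below is one possible reading, assuming the overall delta is the difference of per-mode averages across domains; the prompt itself does not fix the aggregation rule. The function name `overall_deltas` and the numbers in `example` are hypothetical placeholders, not measured results.

```python
from statistics import mean

MODES = ("A", "B", "C")


def overall_deltas(table):
    """Return (C-A, C-B) after averaging each mode's score over all domains.

    Assumption: "overall" means a plain average across domains.
    """
    avg = {mode: mean(row[mode] for row in table.values()) for mode in MODES}
    return avg["C"] - avg["A"], avg["C"] - avg["B"]


# Placeholder values only, chosen to make the arithmetic easy to follow.
example = {
    "math word-problems": {"A": 50, "B": 60, "C": 70},
    "small coding": {"A": 40, "B": 50, "C": 80},
}

c_minus_a, c_minus_b = overall_deltas(example)
print(f"C-A = {c_minus_a:+.1f}, C-B = {c_minus_b:+.1f}")
```

Paste the per-domain numbers from a model's summary table into a dict of the same shape to compare against the deltas it printed.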

---

## ⬇️ Downloads

| File name & description | Length / Size | Direct Download Link | Verify (MD5 / SHA1 / SHA256) | Notes |
| --- | --- | --- | --- | --- |
| **WFGY_Core_Flagship_v2.0.txt** — readable 30-line companion expressing the same math and gates in fuller prose (same behavior, clearer for humans). | **30 lines · 3,049 chars** | [Download Flagship](./WFGY_Core_Flagship_v2.0.txt) | [md5](./checksums/WFGY_Core_Flagship_v2.0.txt.md5) · [sha1](./checksums/WFGY_Core_Flagship_v2.0.txt.sha1) · [sha256](./checksums/WFGY_Core_Flagship_v2.0.txt.sha256) | Full prose version for easier reading. |
| **WFGY_Core_OneLine_v2.0.txt** — ultra-compact, math-only control layer that activates WFGY’s loop inside a chat model (no tools, text-only, ≤7 nodes). | **1 line · 1,500 chars** | [Download OneLine](./WFGY_Core_OneLine_v2.0.txt) | [md5](./checksums/WFGY_Core_OneLine_v2.0.txt.md5) · [sha1](./checksums/WFGY_Core_OneLine_v2.0.txt.sha1) · [sha256](./checksums/WFGY_Core_OneLine_v2.0.txt.sha256) | Used for all benchmark results above — smallest, fastest, purest form of the core. |
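To check a download against the files under `./checksums/`, a minimal Python sketch follows. It uses only the standard `hashlib` module. It assumes each checksum file begins with the hex digest (the layout `sha256sum` produces); that layout is not confirmed here, so adjust the parsing if the files differ. The helper names `file_digest` and `matches_checksum` are illustrative.

```python
import hashlib
from pathlib import Path


def file_digest(path, algorithm="sha256"):
    """Return the hex digest of a file, reading it in chunks."""
    h = hashlib.new(algorithm)
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def matches_checksum(path, checksum_path, algorithm="sha256"):
    """Compare a file's digest with the first token of a checksum file.

    Assumption: the checksum file starts with the hex digest,
    optionally followed by whitespace and a file name.
    """
    expected = Path(checksum_path).read_text().split()[0].lower()
    return file_digest(path, algorithm) == expected
```

For example, `matches_checksum("WFGY_Core_OneLine_v2.0.txt", "checksums/WFGY_Core_OneLine_v2.0.txt.sha256")` should return `True` for an unmodified download; pass `"md5"` or `"sha1"` as the third argument to check the other digests.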

⭐ [WFGY Engine 2.0](https://github.com/onestardao/WFGY/blob/main/core/README.md) is already unlocked. ⭐ Star the repo to help others discover it and unlock more on the [Unlock Board](https://github.com/onestardao/WFGY/blob/main/STAR_UNLOCKS.md).
